Generative AI Apps With Amazon Bedrock: Getting Started for Go Developers

An introductory guide to using the AWS Go SDK and Amazon Bedrock Foundation Models (FMs) for tasks such as content generation, building chat applications, handling streaming data, and more.

- Amazon Bedrock Go APIs and how to use them for tasks such as content generation

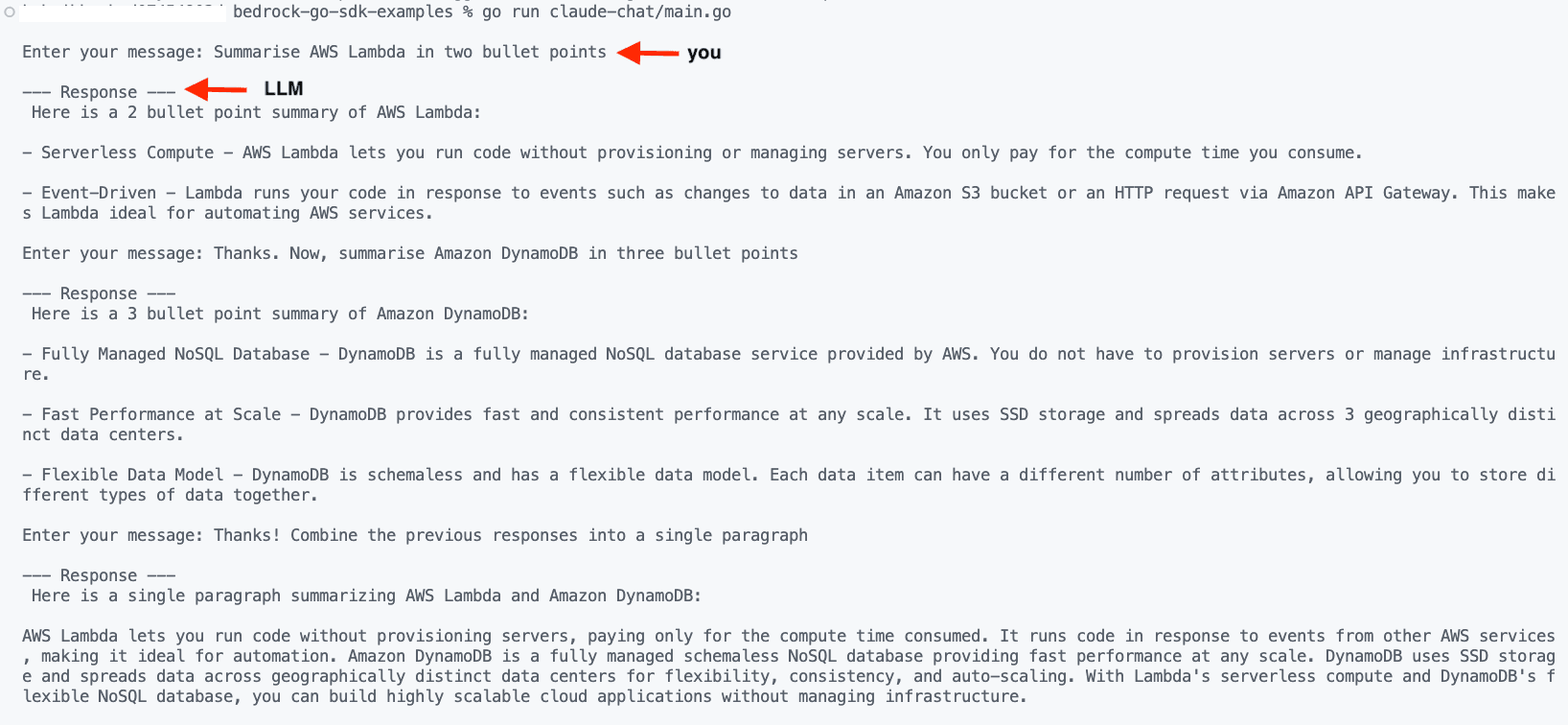

- How to build a simple chat application and handle streaming output from Amazon Bedrock Foundation Models

- Code walkthrough of the examples

The code examples are available in this GitHub repository.

- Grant programmatic access using an IAM user/role.

- Grant the below permission(s) to the IAM identity you are using:

When you create an aws.Config instance using config.LoadDefaultConfig, the AWS Go SDK uses its default credential chain to find AWS credentials. You can read up on the details here, but in my case, I already have a credentials file in <USER_HOME>/.aws which is detected and picked up by the SDK.

The SDK provides two clients for Amazon Bedrock:

- The first one, bedrock.Client, can be used for control plane-like operations such as getting information about base foundation models or custom models, creating a fine-tuning job to customize a base model, etc.

- The bedrockruntime.Client in the bedrockruntime package is used to run inference on the Foundation models (this is the interesting part!).

Note that you can also filter by provider, modality (input/output), and so on by specifying it in ListFoundationModelsInput.

The request payload is JSON formatted, and its details are well documented here - Inference parameters for foundation models.

The call also takes a ModelId, which you can get from the list of Base model IDs. The JSON response is then converted to a Response struct.

Since it's a simple implementation, the state is maintained in memory.
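To make the request and response handling more concrete, here is a minimal sketch using only the standard library. It assumes the Anthropic Claude v2 text-completion format (a prompt wrapped in Human/Assistant markers, and a completion field in the response). The struct fields, the buildPayload and parseResponse helpers, and the 2048 token limit are illustrative assumptions, not the code from the repository, and the actual InvokeModel call is left out.

```go
package main

import (
	"encoding/json"
	"fmt"
	"log"
)

// Request mirrors a subset of the Claude v2 text-completion payload.
// The Go field names are illustrative; the JSON keys follow the
// documented Anthropic Claude inference parameters.
type Request struct {
	Prompt            string   `json:"prompt"`
	MaxTokensToSample int      `json:"max_tokens_to_sample"`
	Temperature       float64  `json:"temperature,omitempty"`
	StopSequences     []string `json:"stop_sequences,omitempty"`
}

// Response holds the only part of the model output used in this sketch.
type Response struct {
	Completion string `json:"completion"`
}

// buildPayload (hypothetical helper) wraps the user message in the
// Human/Assistant prompt format and serializes the request to JSON.
func buildPayload(msg string) ([]byte, error) {
	return json.Marshal(Request{
		Prompt:            fmt.Sprintf("\n\nHuman: %s\n\nAssistant:", msg),
		MaxTokensToSample: 2048, // assumed value for illustration
	})
}

// parseResponse (hypothetical helper) converts the raw JSON body
// into a Response struct.
func parseResponse(body []byte) (Response, error) {
	var resp Response
	err := json.Unmarshal(body, &resp)
	return resp, err
}

func main() {
	payload, err := buildPayload("Hello, how are you?")
	if err != nil {
		log.Fatal(err)
	}
	fmt.Println(string(payload))

	// Stand-in for the body that an InvokeModel call would return.
	sample := []byte(`{"completion":" I am doing well.","stop_reason":"stop_sequence"}`)
	resp, err := parseResponse(sample)
	if err != nil {
		log.Fatal(err)
	}
	fmt.Println(resp.Completion)
}
```

In a real program, the bytes from buildPayload would go into the request body alongside the ModelId, and the response body would be passed to parseResponse.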

You should see the output being written to the console as the parts are being generated by Amazon Bedrock.

The streaming example uses the InvokeModelWithResponseStream API, which returns a bedrockruntime.InvokeModelWithResponseStreamOutput instance.

This differs from the InvokeModel API. Since the InvokeModelWithResponseStreamOutput instance does not have the complete response (yet), we cannot (or should not) simply return it to the caller. Instead, we opt to process this output bit by bit with the processStreamingOutput function.

The handler passed to it is of type StreamingOutputHandler func(ctx context.Context, part []byte) error, which is a custom type I defined to provide a way for the calling application to specify how to handle the output chunks - in this case, we simply print to the console (standard out).

Here is what the processStreamingOutput function does (some parts of the code omitted for brevity). InvokeModelWithResponseStreamOutput provides us access to a channel of events (of type types.ResponseStream) which contains the event payload. This is nothing but a JSON formatted string with the partially generated response by the LLM; we convert it into a Response struct.

We then invoke the handler function (it prints the partial response to the console) and make sure we keep building the complete response as well by adding the partial bits. The complete response is finally returned from the function.

Here too, we use the InvokeModelWithResponseStream API and handle the responses as in the previous example.
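The chunk-by-chunk handling described above can be sketched without the SDK. The StreamingOutputHandler type is taken from the text; everything else is an assumption for illustration: processChunks is a simplified stand-in for processStreamingOutput, a plain channel of []byte replaces the SDK event stream, and simulateStream and printPart are hypothetical helpers.

```go
package main

import (
	"context"
	"encoding/json"
	"fmt"
	"log"
)

// StreamingOutputHandler is the callback type described in the text.
type StreamingOutputHandler func(ctx context.Context, part []byte) error

// Response holds the partial completion carried by each chunk.
type Response struct {
	Completion string `json:"completion"`
}

// processChunks is a simplified stand-in for processStreamingOutput.
// It reads JSON chunks from a channel (assumed here in place of the
// SDK event stream), passes each partial completion to the handler,
// and accumulates the complete response.
func processChunks(ctx context.Context, chunks <-chan []byte, handler StreamingOutputHandler) (Response, error) {
	var combined string
	for chunk := range chunks {
		var part Response
		if err := json.Unmarshal(chunk, &part); err != nil {
			return Response{}, err
		}
		if err := handler(ctx, []byte(part.Completion)); err != nil {
			return Response{}, err
		}
		combined += part.Completion
	}
	return Response{Completion: combined}, nil
}

// simulateStream (hypothetical) returns a closed, pre-filled channel
// of JSON chunks, one per part.
func simulateStream(parts ...string) <-chan []byte {
	ch := make(chan []byte, len(parts))
	for _, p := range parts {
		b, _ := json.Marshal(Response{Completion: p})
		ch <- b
	}
	close(ch)
	return ch
}

// printPart writes each partial response to standard out.
func printPart(ctx context.Context, part []byte) error {
	fmt.Print(string(part))
	return nil
}

func main() {
	stream := simulateStream("Hello", ", ", "world")
	resp, err := processChunks(context.Background(), stream, printPart)
	if err != nil {
		log.Fatal(err)
	}
	fmt.Printf("\nfull response: %q\n", resp.Completion)
}
```

Keeping the handler as a function type means the caller decides what to do with each chunk (print it, forward it over a connection, and so on) while the processing loop stays the same.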

So far we used the Anthropic Claude v2 model. You can also try the Cohere model example for text generation. To run: go run cohere-text-generation/main.go

You should see an output JPG file generated.
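The output file comes from a base64-encoded image in the model response. Below is a minimal sketch of that decoding step. It assumes a response shape with an artifacts list whose entries carry a base64 field, as used by Stability AI models on Bedrock. The decodeImage helper and the sample body are hypothetical, and the sample payload is plain text rather than a real image.

```go
package main

import (
	"encoding/base64"
	"encoding/json"
	"fmt"
	"log"
	"os"
	"time"
)

// Response models the part of the image model output used here:
// a list of artifacts, each carrying a base64-encoded image.
// This shape is an assumption based on the Stability AI format.
type Response struct {
	Artifacts []struct {
		Base64 string `json:"base64"`
	} `json:"artifacts"`
}

// decodeImage (hypothetical helper) extracts the first artifact
// from the JSON body and base64-decodes it into raw bytes.
func decodeImage(body []byte) ([]byte, error) {
	var resp Response
	if err := json.Unmarshal(body, &resp); err != nil {
		return nil, err
	}
	if len(resp.Artifacts) == 0 {
		return nil, fmt.Errorf("response contains no artifacts")
	}
	return base64.StdEncoding.DecodeString(resp.Artifacts[0].Base64)
}

func main() {
	// Stand-in body; "aGVsbG8=" is base64 for "hello", not a real image.
	body := []byte(`{"artifacts":[{"base64":"aGVsbG8="}]}`)

	img, err := decodeImage(body)
	if err != nil {
		log.Fatal(err)
	}

	// File name follows the output-<timestamp>.jpg format.
	name := fmt.Sprintf("output-%d.jpg", time.Now().Unix())
	if err := os.WriteFile(name, img, 0o644); err != nil {
		log.Fatal(err)
	}
	fmt.Println("wrote", name)
}
```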

The InvokeModel call result is converted to a Response struct, which is further deconstructed to extract the base64 image (encoded as []byte). This is decoded using encoding/base64, and the final []byte is written to an output file (format output-<timestamp>.jpg).

Notice the model parameters (CfgScale, Seed and Steps); their values depend on your use case. For instance, CfgScale determines how closely the final image portrays the prompt: use a lower number to increase randomness in the generation. Refer to the Amazon Bedrock Inference Parameters documentation for details.

The text embeddings example uses the Titan Embeddings G1 - Text model. It supports text retrieval, semantic similarity, and clustering. The maximum input text is 8K tokens and the maximum output vector length is 1536.

The output is a slice of float64s 🤷🏽.

Any opinions in this post are those of the individual author and may not reflect the opinions of AWS.