How to Build and Deploy an API-Driven Streamlit Python Microservice on AWS

In this blog post, I explain the solution design and the steps required to implement a MACH-style (Microservices, API-first, Cloud-native, Headless) architecture using AWS CodePipeline and other AWS services.

Published Dec 18, 2023

I wanted a feature that did not exist in the trading platform I'm using, so I built it as an API-based web app microservice. To achieve this, I first read through the platform's API documentation to gather the necessary parameters.

I then started building the application by creating a CLI version in Python. To ensure that each API parameter worked, I tested each one separately in a demo environment, and then tested the entire application in a pre-production environment.

Recently, I came across several AI workshops that used the Streamlit framework to build plotting applications with little effort. Given how easy Streamlit is to use, I decided to build the web version of the trading app with Streamlit as a microservice.

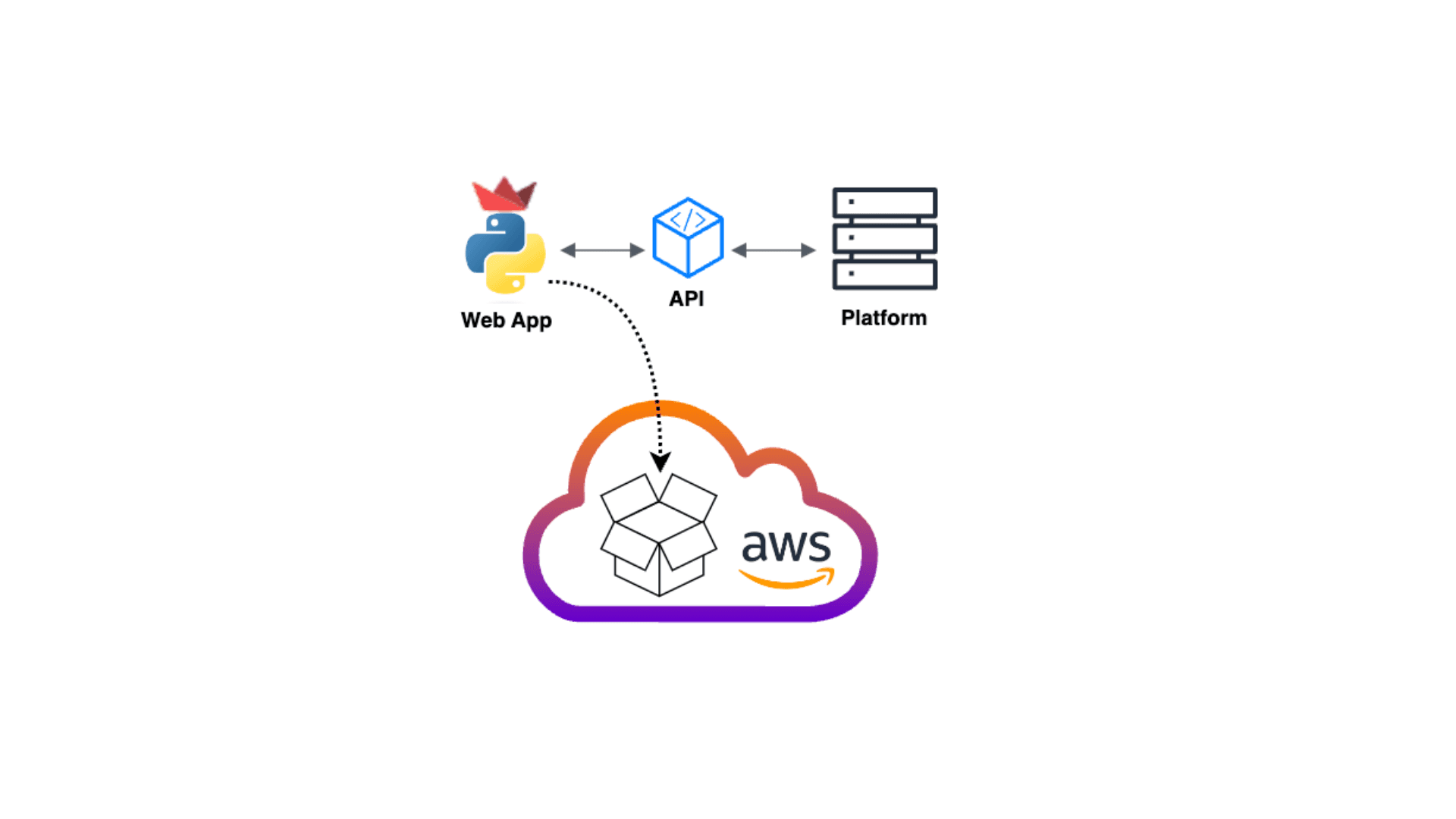

In this scenario, the frontend (the web app) and the backend (the trading platform) communicate through the API.
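To make this frontend/backend split concrete, here is a minimal sketch of how the web app might call the trading platform's REST API using only the Python standard library. The base URL, the `orders`/`positions` paths, and bearer-token authentication are hypothetical placeholders; use whatever your platform's API documentation specifies.

```python
import json
import urllib.request
from typing import Optional

# Hypothetical base URL: replace it with the endpoint from your platform's API docs.
API_BASE_URL = "https://api.example-broker.com/v1"


def build_request(path: str, token: str, payload: Optional[dict] = None) -> urllib.request.Request:
    """Build (without sending) an authenticated JSON request to the backend API."""
    body = json.dumps(payload).encode("utf-8") if payload is not None else None
    return urllib.request.Request(
        url=f"{API_BASE_URL}/{path.lstrip('/')}",
        data=body,
        headers={
            "Authorization": f"Bearer {token}",  # assumed auth scheme
            "Content-Type": "application/json",
            "Accept": "application/json",
        },
        # Requests that carry a body are sent as POST, the rest as GET.
        method="POST" if body is not None else "GET",
    )


def send(request: urllib.request.Request, timeout: float = 10.0) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Keeping request construction separate from sending makes it easy to check each API parameter on its own before anything reaches the platform.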

Streamlit is an open-source app framework written in Python. Like Flask, it lets you build web applications in Python, but it is designed for creating simple, data-driven apps quickly without writing frontend code. While it is commonly used for Machine Learning and Data Science projects, it works well with popular Python libraries and can render Markdown and HTML, making it an excellent choice for building simple applications.

As a beginner to Streamlit, I used GenAI to convert my Python code to Streamlit code. It didn't take long for me to create a functional web app. I then tested and refactored the app until I reached the final version.
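A conversion like this is easier when the core logic stays in plain functions that both the original CLI and the Streamlit UI call. The sketch below illustrates that split with hypothetical order fields (`symbol`, `quantity`, `side`); it is not the actual trading code from this project.

```python
import argparse
from typing import List, Optional

VALID_SIDES = ("buy", "sell")


def build_order(symbol: str, quantity: int, side: str) -> dict:
    """Core logic shared by the CLI and the web UI (hypothetical order fields)."""
    if quantity <= 0:
        raise ValueError("quantity must be positive")
    if side not in VALID_SIDES:
        raise ValueError(f"side must be one of {VALID_SIDES}")
    return {"symbol": symbol.strip().upper(), "quantity": quantity, "side": side}


def run_cli(argv: Optional[List[str]] = None) -> dict:
    """CLI entry point: parameters come from command-line flags."""
    parser = argparse.ArgumentParser(description="Preview an order")
    parser.add_argument("--symbol", required=True)
    parser.add_argument("--quantity", type=int, default=1)
    parser.add_argument("--side", choices=VALID_SIDES, default="buy")
    args = parser.parse_args(argv)
    return build_order(args.symbol, args.quantity, args.side)


def run_streamlit() -> None:
    """Streamlit entry point: the same parameters come from widgets instead of flags."""
    import streamlit as st  # imported lazily so the CLI does not need Streamlit installed

    st.title("Order preview")
    symbol = st.text_input("Symbol", value="AAPL")
    quantity = st.number_input("Quantity", min_value=1, value=1, step=1)
    side = st.selectbox("Side", VALID_SIDES)
    if st.button("Preview"):
        st.json(build_order(symbol, int(quantity), side))
```

To serve the UI, a hypothetical `app.py` that imports `run_streamlit` from this module and calls it can be started with `streamlit run app.py`, while the CLI keeps working without Streamlit installed.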

During the preparation phase of building the web app microservice, I first created an ECR repository named 'webapp-base-image' to store the container base image, then pushed the Docker base image (python:3.10-slim-buster) to it. Next, I created another ECR repository to store the web app container image.

After that, I created a GitHub repository and pushed the source code and artifacts to it. Then, I created the Dockerfile required to build the web app container. Finally, to build the CI/CD pipeline using CodePipeline, I created 'buildspec.yml' for CodeBuild and 'appspec.yml' for CodeDeploy.

It's important to note that the usage and content of these files will vary depending on the chosen compute service.

The following compute options are available on AWS for deploying the container; each requires a different procedure and minor configuration changes:

- AWS App Runner (the fastest option, but limits infrastructure control)

- Fargate (Serverless container service)

- EC2 instance (Compute service with more control, but requires OS/Apps maintenance)

After the source code, artifacts, and configuration files were added to the web app repo, the container image was ready for deployment. I deployed the container on a free-tier EC2 instance as explained in the next phase.

To build and deploy the web app, I first created the necessary resources: an EC2 instance as the host; an Application Load Balancer (ALB) to offload TLS termination from the EC2 instance and provide load balancing for future scaling; a CodeBuild build project to build and package the container image; a CodeDeploy application to deploy the container on the EC2 instance; and finally a CodePipeline pipeline to run the CI/CD workflow.

Afterward, I created the remaining resources that are needed to access the Web App via the Internet, including the CloudFront distribution and a Route 53 hosted zone record.

In an upcoming blog post, I will explain how to optimize this solution to improve scalability and make it more cost-effective.

Next are the steps required to implement this solution, covering how to build and deploy the web app container.

First, we will create an ECR repository to store the base image, pull the image from Docker Hub, and then push it to the ECR repository.

After that, we will create another ECR repository to store the Web App container image.

- Run the following AWS CLI command to create the base-image ECR repository:

```
aws ecr create-repository --repository-name webapp-base-image --image-tag-mutability MUTABLE
```

- Set the environment variables on your machine:

```
export AWS_ACCOUNT_ID=YourAWS-ID
export AWS_DEFAULT_REGION=AWS-Region
export ECR_PYTHON_URL="$AWS_ACCOUNT_ID.dkr.ecr.$AWS_DEFAULT_REGION.amazonaws.com/webapp-base-image"
```

- Log in to ECR:

```
aws ecr get-login-password --region $AWS_DEFAULT_REGION | docker login --username AWS --password-stdin "$AWS_ACCOUNT_ID.dkr.ecr.$AWS_DEFAULT_REGION.amazonaws.com"
```

- Pull the image from Docker Hub:

```
docker pull python:3.10-slim-buster
```

- Tag the image:

```
docker tag python:3.10-slim-buster $ECR_PYTHON_URL:3.10-slim-buster
```

- Push the image to the ECR repository:

```
docker push $ECR_PYTHON_URL:3.10-slim-buster
```

- Run the following AWS CLI command to create the web app ECR repository:

```
aws ecr create-repository --repository-name webapp --image-tag-mutability MUTABLE
```

We will need to create a new GitHub repository for the web app. Once done, clone the repository to your local machine and move the source code and artifacts to the repository's root directory.

Next, create the Python requirements.txt file, which lists streamlit and the other packages and libraries your web app requires.

```
touch requirements.txt
echo 'streamlit' > requirements.txt
```

Then create a Dockerfile that builds the web app image on top of the base image stored in ECR.

Then create a 'scripts' directory within the repository's root directory to hold the deployment scripts, such as 'start_app.sh' and 'stop_app.sh'.

Create the buildspec.yml file in the GitHub repository's root directory. CodeBuild will use this configuration file to build the container image and then push it to the ECR repository.

Create the appspec.yml file in the GitHub repository's root directory. CodeDeploy will use this configuration file to deploy the container on the EC2 instance.
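The appspec.yml can point a `ValidateService` lifecycle hook at a script that fails the deployment if the app never becomes healthy. A hypothetical `scripts/validate_service.py` is sketched below. It assumes the container publishes Streamlit's default port 8501 on the host and probes Streamlit's built-in `/_stcore/health` endpoint (available in recent Streamlit releases); adjust both if your setup differs.

```python
#!/usr/bin/env python3
"""Hypothetical scripts/validate_service.py for the appspec.yml ValidateService hook."""
import http.client
import time
import urllib.request

# Assumptions: the container publishes Streamlit's default port 8501 on the host,
# and the app is probed through Streamlit's built-in health endpoint.
HEALTH_URL = "http://localhost:8501/_stcore/health"


def is_healthy(url: str = HEALTH_URL, timeout: float = 2.0) -> bool:
    """Return True if the endpoint answers with HTTP 200."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status == 200
    except (OSError, http.client.HTTPException):
        # URLError, HTTPError (4xx/5xx), refused connections and timeouts all land here.
        return False


def wait_until_healthy(url: str = HEALTH_URL, attempts: int = 30, delay: float = 2.0) -> bool:
    """Poll the endpoint, giving the container time to start (about 60 s by default)."""
    for attempt in range(attempts):
        if is_healthy(url):
            return True
        if attempt < attempts - 1:
            time.sleep(delay)
    return False


def main() -> int:
    """Exit code for CodeDeploy: 0 marks ValidateService as succeeded, 1 as failed."""
    return 0 if wait_until_healthy() else 1
```

CodeDeploy treats a non-zero exit code from a hook script as a failed lifecycle event, so the executable hook file can end with `raise SystemExit(main())` and be listed under `ValidateService` in appspec.yml.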

Before creating the required resources, you will have to create IAM roles for the EC2 instance and CodeDeploy.

It is recommended to customize each policy and create an inline policy that restricts access to the required resources, but for simplicity, we will use AWS managed policies.

EC2 instance role: this role has two policies that allow the EC2 instance to access S3 and ECR ("AmazonEC2ContainerRegistryPowerUser", "AmazonS3ReadOnlyAccess").

CodeDeploy role: this role has two policies that allow CodeDeploy to access EC2, ELB, Auto Scaling, and ECR ("AmazonEC2ContainerRegistryPowerUser", "AWSCodeDeployRole").
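If you prefer to create these roles with the AWS CLI instead of the console, the sketch below prints each role's trust policy together with the commands that attach the managed policies named above. The role names (`WebApp-EC2-Role`, `WebApp-CodeDeploy-Role`) and trust-file names are hypothetical placeholders.

```python
import json
from typing import Dict, List

# AWS managed policies from the text, keyed by the service principal that assumes each role.
MANAGED_POLICIES: Dict[str, List[str]] = {
    "ec2.amazonaws.com": [
        "arn:aws:iam::aws:policy/AmazonEC2ContainerRegistryPowerUser",
        "arn:aws:iam::aws:policy/AmazonS3ReadOnlyAccess",
    ],
    "codedeploy.amazonaws.com": [
        "arn:aws:iam::aws:policy/AmazonEC2ContainerRegistryPowerUser",
        "arn:aws:iam::aws:policy/service-role/AWSCodeDeployRole",
    ],
}


def trust_policy(service_principal: str) -> Dict:
    """Trust policy that lets the given AWS service assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": service_principal},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def role_commands(role_name: str, service_principal: str) -> List[str]:
    """AWS CLI commands that create the role and attach its managed policies."""
    commands = [
        f"aws iam create-role --role-name {role_name} "
        f"--assume-role-policy-document file://{role_name}-trust.json"
    ]
    commands += [
        f"aws iam attach-role-policy --role-name {role_name} --policy-arn {arn}"
        for arn in MANAGED_POLICIES[service_principal]
    ]
    if service_principal == "ec2.amazonaws.com":
        # EC2 picks up a role through an instance profile (the console creates one for you).
        commands += [
            f"aws iam create-instance-profile --instance-profile-name {role_name}",
            f"aws iam add-role-to-instance-profile --instance-profile-name {role_name} "
            f"--role-name {role_name}",
        ]
    return commands


if __name__ == "__main__":
    for name, principal in (("WebApp-EC2-Role", "ec2.amazonaws.com"),
                            ("WebApp-CodeDeploy-Role", "codedeploy.amazonaws.com")):
        # Save this JSON as <role name>-trust.json before running the commands.
        print(json.dumps(trust_policy(principal), indent=2))
        print("\n".join(role_commands(name, principal)))
```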

Create an EC2 instance and attach a user-data file that installs the CodeDeploy agent and Docker.
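As a sketch of what such a user-data file might contain, assuming Amazon Linux 2023 (the AMI suggested in the steps below), the helper renders a bash script that installs Docker and the CodeDeploy agent. The agent installer is downloaded from a Region-specific S3 bucket, which is why the Region is a parameter; the default Region in the usage block is a placeholder.

```python
def codedeploy_installer_url(region: str) -> str:
    """URL of the CodeDeploy agent installer, published in a per-Region S3 bucket."""
    return f"https://aws-codedeploy-{region}.s3.{region}.amazonaws.com/latest/install"


def render_user_data(region: str) -> str:
    """Render a bash user-data script for Amazon Linux 2023 (assumed AMI)."""
    lines = [
        "#!/bin/bash",
        "set -euxo pipefail",
        # Docker runs the web app container; ruby and wget are needed by the agent installer.
        "dnf install -y docker ruby wget",
        "systemctl enable --now docker",
        "usermod -aG docker ec2-user",
        "cd /home/ec2-user",
        f"wget {codedeploy_installer_url(region)}",
        "chmod +x ./install",
        "./install auto",
        "systemctl enable --now codedeploy-agent",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    import sys

    # Usage: python render_user_data.py eu-west-1 > user-data.sh (us-east-1 is a placeholder default)
    print(render_user_data(sys.argv[1] if len(sys.argv) > 1 else "us-east-1"), end="")
```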

- Go to the AWS EC2 console

- Choose Launch Instance.

- Enter the instance Name

- Application and OS Images: Choose AMI "eg: Amazon Linux 2023 AMI"

- Choose Instance type "eg: t2.micro"

- Choose Key pair (login)

- Network settings: Enter the following details

- VPC: "eg: default VPC"

- Subnet: "eg: SubnetPub1"

- Auto-assign public IP: Enable

- Security Group: Select Create security group

- Security group name: "eg: WebApp-SG"

- Skip Inbound rules for now

- Configure storage: add storage volume

- Advanced details:

- IAM instance profile: Choose the IAM role created in the previous step

- User data: upload the user-data file

- Choose Launch instance.

Before creating the Load Balancer, create a Security Group with an Inbound Rule (Type: HTTPS; Source: Custom 0.0.0.0/0) and note the security group ID.

Configure a target group, then register the EC2 instance as a target:

- Go to the AWS EC2 console

- Choose Target Groups from the navigation pane

- Choose Create target group

- Basic configuration:

- Target type: Select Instance

- Target group name: Enter a name "WebApp-TG"

- Select Protocol & Port

- Select IP address type: IPv4

- Select VPC: "eg: Default VPC"

- Health checks:

- Select Health check protocol: HTTP

- Select Health check path: enter the health check route, or keep the default "/" "eg: /HealthCheck"

- Choose Next to register a target

- Register targets:

- Select the instance created before, and the port, and then choose Include as pending below

- Choose Create target group.
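For readers who script this step with boto3, the console choices above map onto the `create_target_group` and `register_targets` calls of the `elbv2` client. The builders below only assemble keyword arguments (no AWS calls), so they run without credentials. Port 8501 (Streamlit's default) and the health check path `/_stcore/health` (Streamlit's built-in health endpoint) are assumptions; substitute your own port and route.

```python
from typing import Any, Dict

APP_PORT = 8501  # assumed: Streamlit's default port, published by the container on the host
HEALTH_PATH = "/_stcore/health"  # Streamlit's built-in health endpoint


def target_group_params(vpc_id: str, name: str = "WebApp-TG") -> Dict[str, Any]:
    """Keyword arguments for boto3 elbv2 `create_target_group`, mirroring the console steps."""
    return {
        "Name": name,
        "TargetType": "instance",
        "Protocol": "HTTP",  # assumed: the ALB terminates TLS and forwards plain HTTP
        "Port": APP_PORT,
        "IpAddressType": "ipv4",
        "VpcId": vpc_id,
        "HealthCheckProtocol": "HTTP",
        "HealthCheckPath": HEALTH_PATH,
        "Matcher": {"HttpCode": "200"},
    }


def register_targets_params(target_group_arn: str, instance_id: str) -> Dict[str, Any]:
    """Keyword arguments for boto3 elbv2 `register_targets`."""
    return {
        "TargetGroupArn": target_group_arn,
        "Targets": [{"Id": instance_id, "Port": APP_PORT}],
    }
```

Usage: `tg = boto3.client("elbv2").create_target_group(**target_group_params(vpc_id))["TargetGroups"][0]`, then pass `tg["TargetGroupArn"]` and your instance ID to `register_targets_params`.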

Once the target group is ready, set up an application load balancer.

- Go to the AWS EC2 console

- Choose Load Balancers from the navigation pane

- Choose Create Load Balancer.

- Under Application Load Balancer, choose Create

- Basic configuration:

- Load balancer name: Enter a name for your load balancer "eg: WebApp-ALB"

- Scheme: choose Internet-facing

- IP address type: IPv4

- Network mapping

- VPC: select the VPC "eg: Default VPC"

- Mappings: Choose the subnets

- Security groups: Select the security group created earlier

- Listeners and routing:

- Protocol: HTTPS

- Port: 443

- Default action: Select the target group created above

- Secure listener settings:

- Default SSL/TLS server certificate: select the certificate source "eg: From ACM", then select your certificate

- Choose Create Load Balancer
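The same load balancer settings can be expressed as keyword arguments for boto3's `elbv2` `create_load_balancer` and `create_listener` calls. This is a sketch that only builds the arguments; the names, subnet IDs, and ARNs you pass in are placeholders.

```python
from typing import Any, Dict, List


def load_balancer_params(name: str, subnet_ids: List[str], security_group_id: str) -> Dict[str, Any]:
    """Keyword arguments for boto3 elbv2 `create_load_balancer`."""
    # An ALB needs subnets in at least two Availability Zones; this only checks the count.
    if len(subnet_ids) < 2:
        raise ValueError("an ALB requires at least two subnets in different Availability Zones")
    return {
        "Name": name,
        "Type": "application",
        "Scheme": "internet-facing",
        "IpAddressType": "ipv4",
        "Subnets": subnet_ids,
        "SecurityGroups": [security_group_id],
    }


def https_listener_params(load_balancer_arn: str, certificate_arn: str, target_group_arn: str) -> Dict[str, Any]:
    """Keyword arguments for boto3 elbv2 `create_listener`: HTTPS on 443, forwarding to the target group."""
    return {
        "LoadBalancerArn": load_balancer_arn,
        "Protocol": "HTTPS",
        "Port": 443,
        "Certificates": [{"CertificateArn": certificate_arn}],
        "DefaultActions": [{"Type": "forward", "TargetGroupArn": target_group_arn}],
    }
```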

Now that the ALB is ready, update the EC2 instance's security group to allow inbound connections from the ALB on the web app port.

- Go to the AWS EC2 console

- Choose Security Groups from the navigation pane

- Choose the security group created for the EC2 instance

- Under Inbound rules Choose Edit inbound rules

- Choose Add rule 1

- Type: Custom TCP

- Port: Enter the web app port

- Source: Custom - select the ALB security group

- Choose Add rule 2

- Type: ssh

- Source: select My IP

- Choose Save rules
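Both security groups can also be described in the `IpPermissions` format that boto3's EC2 `authorize_security_group_ingress` call expects. In this sketch the app port 8501 (Streamlit's default) is an assumption, and the group IDs and IP address are placeholders.

```python
import ipaddress
from typing import Any, Dict, List

APP_PORT = 8501  # assumed web app port (Streamlit's default)


def alb_ingress() -> List[Dict[str, Any]]:
    """ALB security group: HTTPS (443) from anywhere."""
    return [{
        "IpProtocol": "tcp",
        "FromPort": 443,
        "ToPort": 443,
        "IpRanges": [{"CidrIp": "0.0.0.0/0", "Description": "HTTPS from the Internet"}],
    }]


def instance_ingress(alb_sg_id: str, my_ip: str, app_port: int = APP_PORT) -> List[Dict[str, Any]]:
    """EC2 security group: app port only from the ALB's group, SSH only from one IPv4 address."""
    ipaddress.IPv4Address(my_ip)  # raises ValueError if my_ip is not a valid IPv4 address
    return [
        {
            "IpProtocol": "tcp",
            "FromPort": app_port,
            "ToPort": app_port,
            # Referencing the ALB's security group (not a CIDR) admits only traffic from the ALB.
            "UserIdGroupPairs": [{"GroupId": alb_sg_id, "Description": "Web app traffic from the ALB"}],
        },
        {
            "IpProtocol": "tcp",
            "FromPort": 22,
            "ToPort": 22,
            "IpRanges": [{"CidrIp": f"{my_ip}/32", "Description": "SSH from my IP"}],
        },
    ]
```

Usage: `boto3.client("ec2").authorize_security_group_ingress(GroupId=instance_sg_id, IpPermissions=instance_ingress(alb_sg_id, my_ip))`.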

The build project will build the container image and then push it to the ECR repository. Follow the steps below to create the build project.

- Go to the AWS CodeBuild console

- Choose Create build project

- Project configuration:

- Project name: Enter the project name

- Source:

- Source provider: Select GitHub

- Follow the instructions to connect (or reconnect) to GitHub and authorize access for AWS CodeBuild

- Repository: Select Public repo, or repo in your GitHub account

- GitHub repository: Enter the repo link "eg: https://github.com/YourGitHubUser/RepoName.git"

- Source version: Enter the branch name

- Webhook (optional): select Rebuild every time a code change is pushed to this repository

- Environment:

- Environment image: Managed image

- Operating system: Amazon Linux

- Runtime(s): Standard

- Image: 5.0 or latest

- Privileged: enable this flag, which is required to build Docker images

- Service role: select New service role

- Role name: Enter a service role name

- Buildspec:

- Build specifications: select Use a buildspec file

- By default, CodeBuild looks for a file named buildspec.yml in the source code root directory

- Artifacts: select No artifacts for now; this will be updated when you set up CodePipeline

- Choose Create build project

Next, attach the ECR policy to the build project's service role:

- Go to the AWS IAM console

- Choose Roles from the navigation pane

- Search & select the service role name you entered while setting up the build project

- Choose Add permissions: select attach policy

- Search & select policy: AmazonEC2ContainerRegistryPowerUser

- Choose Add permission
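The console settings for the build project map onto the parameters of boto3's CodeBuild `create_project` call. The builder below only assembles those keyword arguments, so it runs without AWS credentials. The image name, compute type, and the `AWS_ACCOUNT_ID`/`IMAGE_REPO_NAME` environment variables are assumptions; the variables matter only if your buildspec.yml reads them.

```python
from typing import Any, Dict, List


def codebuild_project_params(
    name: str,
    github_url: str,
    service_role_arn: str,
    env_vars: Dict[str, str],
) -> Dict[str, Any]:
    """Keyword arguments for boto3 codebuild `create_project`, mirroring the console steps."""
    environment_variables: List[Dict[str, str]] = [
        {"name": key, "value": value, "type": "PLAINTEXT"} for key, value in sorted(env_vars.items())
    ]
    return {
        "name": name,
        "source": {"type": "GITHUB", "location": github_url},  # reads buildspec.yml at the repo root
        "artifacts": {"type": "NO_ARTIFACTS"},  # CodePipeline handles artifacts later
        "environment": {
            "type": "LINUX_CONTAINER",
            # Assumed image name; check the console's list of managed images for the current one.
            "image": "aws/codebuild/amazonlinux2-x86_64-standard:5.0",
            "computeType": "BUILD_GENERAL1_SMALL",
            # Privileged mode lets the build container run the Docker daemon to build images.
            "privilegedMode": True,
            "environmentVariables": environment_variables,
        },
        "serviceRole": service_role_arn,
    }
```

This assumes GitHub access has already been authorized for CodeBuild, as in the console flow.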

In this demo, you will create a CodeDeploy application for an in-place deployment using the CodeDeploy console. For production, a Blue/Green deployment across multiple compute resources is recommended to keep the web app available during deployments.

- Go to the AWS CodeDeploy console.

- Expand Deploy, and then choose Getting started.

- Choose Create application.

- Application configuration:

- Application name: Enter an application name.

- Compute platform: EC2/On-premises.

- Choose Create application.

- Choose the application name.

- Choose Create deployment group under Deployment groups tab.

- Deployment group name: Enter a deployment group name.

- Service role: Select the service role created previously for CodeDeploy.

- Deployment type: In-place.

- Environment configuration:

- Select Amazon EC2 instances

- Enter the tag key used while creating the EC2 instance.

- Enter the tag value.

- Install AWS CodeDeploy Agent: Never (the user-data script already installs the agent)

- Deployment configuration: CodeDeployDefault.AllAtOnce

- Choose Create deployment group.
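Scripted with boto3, the CodeDeploy application and deployment group above correspond to `create_application` and `create_deployment_group`. The sketch only builds the keyword arguments; the tag key and value must match the tag on your EC2 instance (for example its Name tag), and traffic control is assumed to be off because the steps above do not enable load balancing in the deployment group.

```python
from typing import Any, Dict


def application_params(app_name: str) -> Dict[str, Any]:
    """Keyword arguments for boto3 codedeploy `create_application`."""
    # "Server" is the API value for the EC2/On-premises compute platform.
    return {"applicationName": app_name, "computePlatform": "Server"}


def deployment_group_params(
    app_name: str,
    group_name: str,
    service_role_arn: str,
    tag_key: str,
    tag_value: str,
) -> Dict[str, Any]:
    """Keyword arguments for boto3 codedeploy `create_deployment_group` (in-place, all at once)."""
    return {
        "applicationName": app_name,
        "deploymentGroupName": group_name,
        "serviceRoleArn": service_role_arn,
        "deploymentStyle": {
            "deploymentType": "IN_PLACE",
            "deploymentOption": "WITHOUT_TRAFFIC_CONTROL",  # assumed: no load balancer in the group
        },
        # Instances carrying this exact tag key and value receive the deployment.
        "ec2TagFilters": [{"Key": tag_key, "Value": tag_value, "Type": "KEY_AND_VALUE"}],
        "deploymentConfigName": "CodeDeployDefault.AllAtOnce",
    }
```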

Now that the GitHub repository, build project, and CodeDeploy application are ready, follow the steps below to configure the pipeline.

- Go to the AWS CodePipeline console.

- Choose Create pipeline.

- Pipeline settings:

- Pipeline name: Enter the pipeline name.

- Service role: Choose New service role or Existing service role if you have an existing role.

- Source:

- Source provider: GitHub (version 2)

- Connection: Choose an existing GitHub connection, or choose Connect to GitHub to create a new connection.

- Repository name: Enter the repository name "eg: YourGitHubUser/WebAppRepo"

- Branch name: Enter the branch name.

- Output artifact format: CodePipeline default.

- Build:

- Build provider: AWS CodeBuild.

- Region: Select your region.

- Project name: Choose the project name created earlier.

- Build type: Single build.

- Deploy:

- Deploy provider: AWS CodeDeploy

- Region: Enter your region.

- Application name: Choose the application you created earlier.

- Deployment group: Choose the deployment group you created earlier.

- Review the configuration then choose Create pipeline

The pipeline runs as soon as it is created. The Source stage gets the files from the GitHub repository, the Build stage builds the container image and pushes it to the ECR repository, and finally the Deploy stage deploys the container on the EC2 instance.

You may choose to use CloudFront to bring your web app closer to your users and, where content can be cached, reduce the load on the ALB and lower costs. Additionally, you can add another layer of security by configuring AWS WAF/Shield. However, using CloudFront is optional.

Follow the steps below to create a CloudFront distribution.

- Go to the AWS CloudFront console.

- Choose Create distribution

- Origin:

- Origin domain: Select the ALB name

- Protocol: HTTPS only

- HTTPS port: 443

- Default cache behavior:

- Viewer protocol policy: Redirect HTTP to HTTPS

- Cache key and origin requests: Cache policy and origin request policy

- Cache policy: CachingDisabled

- Origin request policy: AllViewerAndCloudFrontHeaders

- Settings:

- Custom SSL certificate: Select the ACM certificate (CloudFront only accepts ACM certificates issued in the us-east-1 Region)

- Choose Create distribution

After deploying the CloudFront distribution, the final step is to publish your web application using Route 53. If you already have a domain name, create a new A record as an alias that points to the CloudFront distribution (if you created one) or to the ALB. If you do not have a domain name, you can register one using Route 53 and then add the A record.

Alternatively, you can use the CloudFront URL or the ALB URL if you are looking for a temporary option.
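For scripted setups, the alias record maps onto the `ChangeBatch` argument of boto3's Route 53 `change_resource_record_sets`. In this sketch the record name and target DNS name are placeholders. For a CloudFront target the alias hosted zone ID is always `Z2FDTNDATAQYW2`; for an ALB target, use the `DNSName` and `CanonicalHostedZoneId` returned by `describe_load_balancers` instead.

```python
from typing import Any, Dict

# Fixed hosted zone ID that Route 53 uses for every CloudFront alias target.
CLOUDFRONT_HOSTED_ZONE_ID = "Z2FDTNDATAQYW2"


def alias_change_batch(record_name: str, target_dns_name: str, target_zone_id: str) -> Dict[str, Any]:
    """ChangeBatch for boto3 route53 `change_resource_record_sets`: an A alias record."""
    return {
        "Comment": f"Alias {record_name} to {target_dns_name}",
        "Changes": [
            {
                "Action": "UPSERT",  # creates the record, or updates it if it already exists
                "ResourceRecordSet": {
                    "Name": record_name,
                    "Type": "A",
                    "AliasTarget": {
                        "HostedZoneId": target_zone_id,
                        "DNSName": target_dns_name,
                        # Must be False for CloudFront targets; optional for an ALB.
                        "EvaluateTargetHealth": False,
                    },
                },
            }
        ],
    }
```

Usage: `boto3.client("route53").change_resource_record_sets(HostedZoneId=your_zone_id, ChangeBatch=alias_change_batch("app.example.com", distribution_domain, CLOUDFRONT_HOSTED_ZONE_ID))`.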

We have developed a MACH architecture for building and deploying a user-friendly Streamlit web application using AWS services. By using CI/CD with AWS CodePipeline, we can automatically build and deploy the web app as a container, which speeds up delivery, adds flexibility and agility, and helps ensure availability. The solution is adaptable and can be moved to other compute options, such as AWS App Runner or Fargate. It can also be optimized with Auto Scaling, which matches capacity, and therefore cost, to the traffic it receives.