SimplifAI - Simplifying MLOps

MLOps aims to make developing, deploying, monitoring, and iterating on ML models efficient. To expedite ML/AI development and deployment, we developed SimplifAI, an MLOps framework built on Amazon services.

Oshry Ben-Harush

Amazon Employee

Published Dec 18, 2023

MLOps (Machine Learning Operations) comprises a set of best practices for streamlining the end-to-end machine learning lifecycle, from development to production deployment. Machine learning teams can directly leverage Amazon SageMaker, a fully managed platform that automates MLOps by facilitating model building, training, deployment, monitoring, and iteration. However, many organizations either require a specific process built on internal tools that prevent them from using the streamlined SageMaker process directly, or lack an MLOps process that makes machine learning development and deployment frictionless.

To enable scalable MLOps in these organizations, internal stacks can be built that are fully integrated with existing internal tools and orchestrated using managed machine learning services such as Amazon SageMaker and AWS Step Functions. Such a customized framework gives teams the same rapid development and deployment capabilities that SageMaker affords while remaining compliant with internal development policies. Internal MLOps solutions of this kind empower machine learning teams to ship accurate, robust, and adaptable systems at pace.

In this post, we review some of the underlying principles of MLOps and how they are reflected in an internal MLOps framework we call SimplifAI.

The end-to-end machine learning pipeline consists of two core stages: model development and model deployment.

The model development stage involves collaboration among product management, business stakeholders, domain experts, data scientists, and data engineers to frame a business problem that can be solved using data and models. Key steps include:

- Business Problem Formulation: Identify a business problem or opportunity that can benefit from a data-driven solution. Gather business requirements and success criteria.

- Data Exploration: Conduct exploratory data analysis to assess data relevance, quality, and adequacy to solve the business problem. This involves statistical analysis, visualization, feature engineering, and conversations with domain experts.

- Data Wrangling: Once data is deemed sufficient, perform cleaning, imputation, canonicalization, and preprocessing to prepare the analytical base tables and features.

- Feature Engineering (optional): Derive informative attributes from raw data that can further improve model performance. Examples include aggregations, lag features, and transformations.

- Model Development: Use data science and machine learning to develop a performant yet interpretable model that meets the business metrics on the training and validation datasets. Iterate as needed.

- Model Evaluation: Rigorously evaluate the final model on a held-out test dataset to confirm that it generalizes.
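The Model Evaluation step only works if the test set stays held out as data is refreshed. A common way to guarantee that, shown here as a minimal sketch rather than anything SimplifAI prescribes, is to assign each record to a split by hashing a stable identifier. The function name `assign_split` and the default 80/10/10 proportions are illustrative assumptions.

```python
import hashlib


def assign_split(record_id: str, validation_pct: int = 10, test_pct: int = 10) -> str:
    """Deterministically assign a record to 'train', 'validation', or 'test'.

    Hashing a stable ID (instead of sampling at random) keeps every record in
    the same split across reruns and as new data is appended.
    """
    if validation_pct < 0 or test_pct < 0 or validation_pct + test_pct > 100:
        raise ValueError("percentages must be non-negative and sum to at most 100")
    # Map the ID to a stable bucket in [0, 100).
    digest = hashlib.sha256(record_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100
    if bucket < test_pct:
        return "test"
    if bucket < test_pct + validation_pct:
        return "validation"
    return "train"


if __name__ == "__main__":
    # Hypothetical record IDs; roughly 80/10/10 of them land in train/validation/test.
    splits = [assign_split(f"customer-{i}") for i in range(1_000)]
    for name in ("train", "validation", "test"):
        print(name, splits.count(name))
```

Because the assignment depends only on the ID, a record that was once in the test set can never leak into training on a later run.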

The model deployment stage focuses on building reliable, scalable, and automated infrastructure to serve ML models that drive impact. Steps include:

- Data Ingestion: Stream or batch new data from source systems into the deployment infrastructure.

- Feature Extraction (optional): Use productionized feature pipelines to transform raw data into model features.

- Model Training: Retrain the model on new data using predetermined hyperparameter values.

- Performance Testing: Evaluate the new model against business metrics on test data.

- Model Deployment: If the performance criteria are met, replace the existing model with the latest version.

- Monitoring: Continuously monitor data, features and model performance post deployment.

- Reporting: Provide visibility into model and data drift to trigger retraining.
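The Performance Testing and Model Deployment steps together amount to a promotion gate. A minimal sketch, assuming AUC and latency stand in for the business metrics; `EvaluationResult`, `should_promote`, and the threshold values are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class EvaluationResult:
    """Metrics for one model version, computed on the same held-out test data."""
    model_version: str
    auc: float
    latency_ms: float


def should_promote(candidate: EvaluationResult,
                   production: EvaluationResult,
                   min_auc: float = 0.75,
                   min_improvement: float = 0.0,
                   max_latency_ms: float = 100.0) -> bool:
    """Return True if the retrained candidate should replace the production model.

    The thresholds are hypothetical placeholders for real business criteria.
    """
    meets_floor = candidate.auc >= min_auc                               # absolute quality bar
    no_regression = candidate.auc >= production.auc + min_improvement    # at least as good as prod
    fast_enough = candidate.latency_ms <= max_latency_ms                 # serving constraint
    return meets_floor and no_regression and fast_enough


if __name__ == "__main__":
    production = EvaluationResult("v12", auc=0.81, latency_ms=40.0)
    candidate = EvaluationResult("v13", auc=0.83, latency_ms=45.0)
    print(should_promote(candidate, production))  # True
```

Comparing against both an absolute floor and the current production model guards against promoting a model that is "better" only because production has already degraded.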

Note: Some machine learning and statistical models support continuous, or online, learning. In this category of learning, the model keeps learning from new examples as they arrive, so no separate retraining process needs to be initiated.
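To make the online-learning case concrete, here is a minimal sketch of a linear regressor that updates its weights after every example with stochastic gradient descent, so no batch retraining job is involved. The class is hypothetical; the `partial_fit` method name borrows the convention scikit-learn uses for its incremental estimators.

```python
from __future__ import annotations


class OnlineLinearRegressor:
    """Linear model updated one example at a time with stochastic gradient descent."""

    def __init__(self, n_features: int, learning_rate: float = 0.01):
        self.weights = [0.0] * n_features
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict(self, x: list[float]) -> float:
        return sum(w * xi for w, xi in zip(self.weights, x)) + self.bias

    def partial_fit(self, x: list[float], y: float) -> None:
        """Take one gradient step on the loss 0.5 * (prediction - y) ** 2."""
        error = self.predict(x) - y
        for i, xi in enumerate(x):
            self.weights[i] -= self.learning_rate * error * xi
        self.bias -= self.learning_rate * error


if __name__ == "__main__":
    # Simulated stream drawn from y = 2x + 1; the model updates as each example arrives.
    model = OnlineLinearRegressor(n_features=1, learning_rate=0.05)
    for _ in range(200):
        for x in (0.0, 1.0, 2.0, 3.0):
            model.partial_fit([x], 2.0 * x + 1.0)
    print(round(model.predict([4.0]), 2))
```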

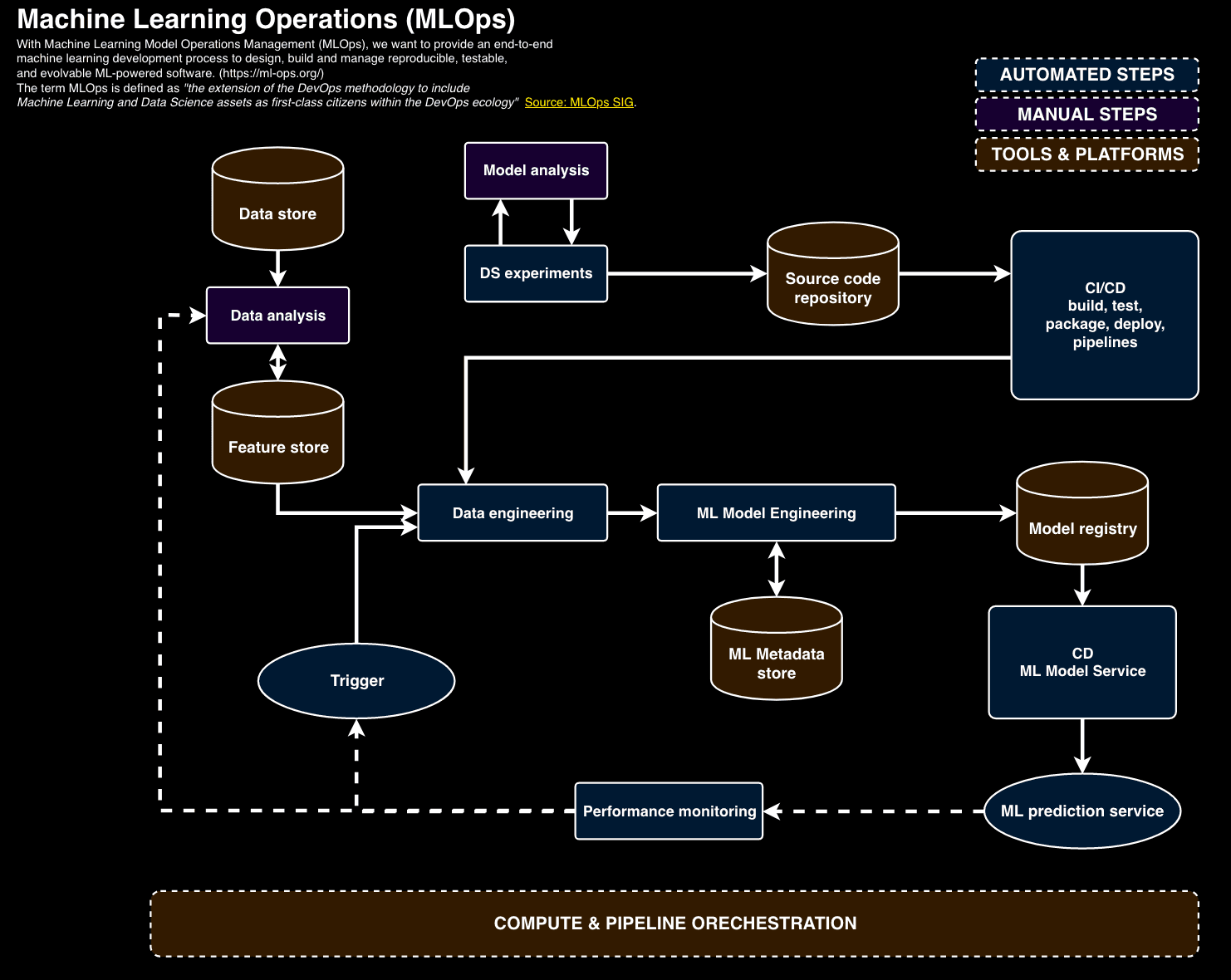

Automating machine learning workflows is crucial for successfully deploying models in production. An MLOps team aims to create a pipeline that trains and deploys ML models without any manual intervention. This end-to-end automation requires orchestrating several components:

First, the ML development environment supports experimentation and collaboration between data scientists, data engineers, and MLOps engineers. Version control, data sources, model registries, and metadata stores track iterative work during model creation. Standardized pipelines move models from this sandbox to production while preserving lineage.
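The lineage that version control and metadata stores preserve can be pictured as a record saved alongside each trained model. The sketch below is a hypothetical schema, not the SimplifAI or SageMaker metadata format; field names such as `code_commit` and `dataset_sha256` are assumptions.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass
class TrainingRunMetadata:
    """Hypothetical lineage record stored alongside each trained model."""
    model_name: str
    code_commit: str     # version-control revision of the training code
    dataset_uri: str     # where the training data snapshot lives
    dataset_sha256: str  # content hash, so silent data changes are detectable
    hyperparameters: dict = field(default_factory=dict)
    metrics: dict = field(default_factory=dict)

    def run_id(self) -> str:
        """Stable ID derived from the inputs that determine the model (not its metrics)."""
        inputs = {
            "model_name": self.model_name,
            "code_commit": self.code_commit,
            "dataset_sha256": self.dataset_sha256,
            "hyperparameters": self.hyperparameters,
        }
        canonical = json.dumps(inputs, sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]


def sha256_of_bytes(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


if __name__ == "__main__":
    data = b"id,label\n1,0\n2,1\n"
    meta = TrainingRunMetadata(
        model_name="lead-scoring",                    # hypothetical
        code_commit="abc1234",                        # hypothetical
        dataset_uri="s3://example-bucket/train.csv",  # hypothetical
        dataset_sha256=sha256_of_bytes(data),
        hyperparameters={"max_depth": 6, "eta": 0.1},
        metrics={"validation_auc": 0.82},
    )
    print(meta.run_id())
    print(json.dumps(asdict(meta), indent=2))
```

Deriving the ID from code, data, and hyperparameters means two runs with identical inputs share an ID, which makes non-reproducible results easy to spot.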

Second, testing validates each pipeline component. Data and features are monitored for drift over time. Models undergo bias testing and are checked for alignment with business objectives. Integration checks verify reproducibility and algorithmic correctness. Canary analysis compares a new version, exposed to a small share of traffic, against the current production version.
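Drift monitoring needs a concrete statistic. One widely used option, swapped in here for illustration since the post does not say which test SimplifAI uses, is the population stability index (PSI), computed below over equal-width bins of the reference sample (quantile bins are another common choice). A frequently cited rule of thumb treats PSI above about 0.25 as a significant shift, but that is a heuristic to tune per feature, not a standard.

```python
from __future__ import annotations

import math


def population_stability_index(expected: list[float],
                               actual: list[float],
                               n_bins: int = 10,
                               eps: float = 1e-6) -> float:
    """PSI between a reference sample (e.g. training data) and a recent sample.

    Bins are equal-width over the reference range; empty bins are floored at
    `eps` so the logarithm stays finite.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / n_bins or 1.0  # guard against a constant feature

    def bin_fractions(values: list[float]) -> list[float]:
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), n_bins - 1)] += 1  # clamp out-of-range values to edge bins
        return [max(c / len(values), eps) for c in counts]

    e, a = bin_fractions(expected), bin_fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    reference = [i / 100 for i in range(1_000)]  # values 0.00 .. 9.99
    shifted = [v + 3.0 for v in reference]
    print(population_stability_index(reference, reference))  # 0.0
    print(population_stability_index(reference, shifted) > 0.25)  # True
```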

Finally, monitoring maintains model and pipeline health. Alerts flag dependency changes, data schema deviations, and degradation in prediction quality. Computational performance and model staleness indicate where retraining or code updates are needed. This vigilance ensures that productionized models continue to meet business needs over their lifespans.
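Schema-deviation alerts reduce to comparing incoming records against an expected schema. A minimal sketch with a hypothetical schema (`EXPECTED_SCHEMA` and its columns are invented for illustration); in practice the returned list would feed whatever alerting channel the pipeline uses.

```python
from __future__ import annotations

from typing import Any

# Hypothetical expected schema for an inference payload: column -> Python type.
EXPECTED_SCHEMA = {
    "customer_id": str,
    "visits_30d": int,
    "avg_order_value": float,
}


def schema_violations(record: dict[str, Any], schema: dict[str, type]) -> list[str]:
    """Return human-readable schema deviations for one record (empty list if valid).

    None is accepted for any column, i.e. every column is treated as nullable.
    """
    problems = []
    for column, expected_type in schema.items():
        if column not in record:
            problems.append(f"missing column: {column}")
        elif record[column] is not None and not isinstance(record[column], expected_type):
            problems.append(
                f"type mismatch in {column}: expected {expected_type.__name__}, "
                f"got {type(record[column]).__name__}"
            )
    for column in sorted(record.keys() - schema.keys()):
        problems.append(f"unexpected column: {column}")
    return problems


if __name__ == "__main__":
    record = {"customer_id": "c-1", "visits_30d": "7", "channel": "web"}
    for problem in schema_violations(record, EXPECTED_SCHEMA):
        print(problem)  # in a real pipeline, a non-empty list would trigger an alert
```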

In summary, MLOps combines development environment standardization, pipeline testing, and production monitoring to fully automate ML/AI workflows. This end-to-end approach produces reliable, reproducible, and relevant ML applications.

SimplifAI is built for scientists, data engineers, and software developers who develop and deploy machine learning solutions. It packages an MLOps process, framework, infrastructure, and recommended practices into a set of core code libraries, templates, code examples, and tutorials (starter kits). It systematically addresses our builders' MLOps needs and provides a framework that reduces time, cost, and resource requirements while accelerating operations. SimplifAI has SageMaker at its core and uses other internal and Native AWS (NAWS) services as required to simplify ML deployments. By removing operational overhead for scientists and engineers, it speeds up the development and deployment of ML-based solutions.

The platform, pipelines, container images, packaging, and the machine learning and other algorithms required to deploy an ML application are all maintained in source control, built with automated build tools, deployed through Amazon Pipelines, and run on stable, scalable NAWS services. For example, when deploying a lead prediction engine, the team spent weeks settling on the right deployment strategy, tools, and mechanisms, and then invested considerable effort in establishing the necessary infrastructure and writing the required code. Using SimplifAI as a framework, the team could create the deployment in a matter of days.

SimplifAI provides an end-to-end machine learning operations (MLOps) framework, expandable architecture, and libraries to enable rapid development and deployment of ML solutions.

It defines a collaborative set of responsibilities during research, experimentation, and deployment, enabling flexibility, clarity, and agility:

Science Team Responsibilities:

- Demonstrating viable ML solutions

- Writing production-ready ML code

- Data ingestion for training and feature data

Engineering Team Responsibilities:

- Data ingestion pipelines

- CI/CD for training and inference

The SimplifAI framework follows established ML best practices:

- SageMaker for scalable training and deployment

- Future-ready architecture supports integrating emerging tools like feature stores and model registries

- Monitoring, alerting, and performance tracking

- Comprehensive metadata for reproducibility

These are packaged into a set of core libraries and templates that accelerate future ML deployments. Upcoming enhancements include:

- Automated data and concept drift detection

- Rollback capabilities

- Specialized components like feature stores and model registries

- Flexible routing logic
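Rollback is listed as an upcoming enhancement. One simple way to picture it is to keep an ordered history of deployed versions, so that reverting means re-pointing to the previous entry. The in-memory `ModelRegistry` below is a hypothetical illustration of that idea, not SimplifAI's design; in practice a managed option such as the SageMaker Model Registry could hold this state.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ModelRegistry:
    """Hypothetical in-memory stand-in for a model registry with rollback."""
    versions: dict[str, str] = field(default_factory=dict)  # version -> artifact URI
    history: list[str] = field(default_factory=list)        # deployed versions, newest last

    def register(self, version: str, artifact_uri: str) -> None:
        if version in self.versions:
            raise ValueError(f"version {version} is already registered")
        self.versions[version] = artifact_uri

    def deploy(self, version: str) -> None:
        if version not in self.versions:
            raise KeyError(f"unknown version: {version}")
        if self.live != version:  # redeploying the live version is a no-op
            self.history.append(version)

    @property
    def live(self) -> str | None:
        return self.history[-1] if self.history else None

    def rollback(self) -> str:
        """Revert to the previously deployed version and return its name."""
        if len(self.history) < 2:
            raise RuntimeError("no earlier deployment to roll back to")
        self.history.pop()
        return self.history[-1]


if __name__ == "__main__":
    registry = ModelRegistry()
    registry.register("v1", "s3://example-bucket/models/v1/model.tar.gz")  # hypothetical URIs
    registry.register("v2", "s3://example-bucket/models/v2/model.tar.gz")
    registry.deploy("v1")
    registry.deploy("v2")
    print(registry.rollback())  # v1
```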

The development stage focuses on demonstrating performant ML solutions and generating production-ready code:

- Exploration with scientists' tools of choice

- Production-ready code with SageMaker

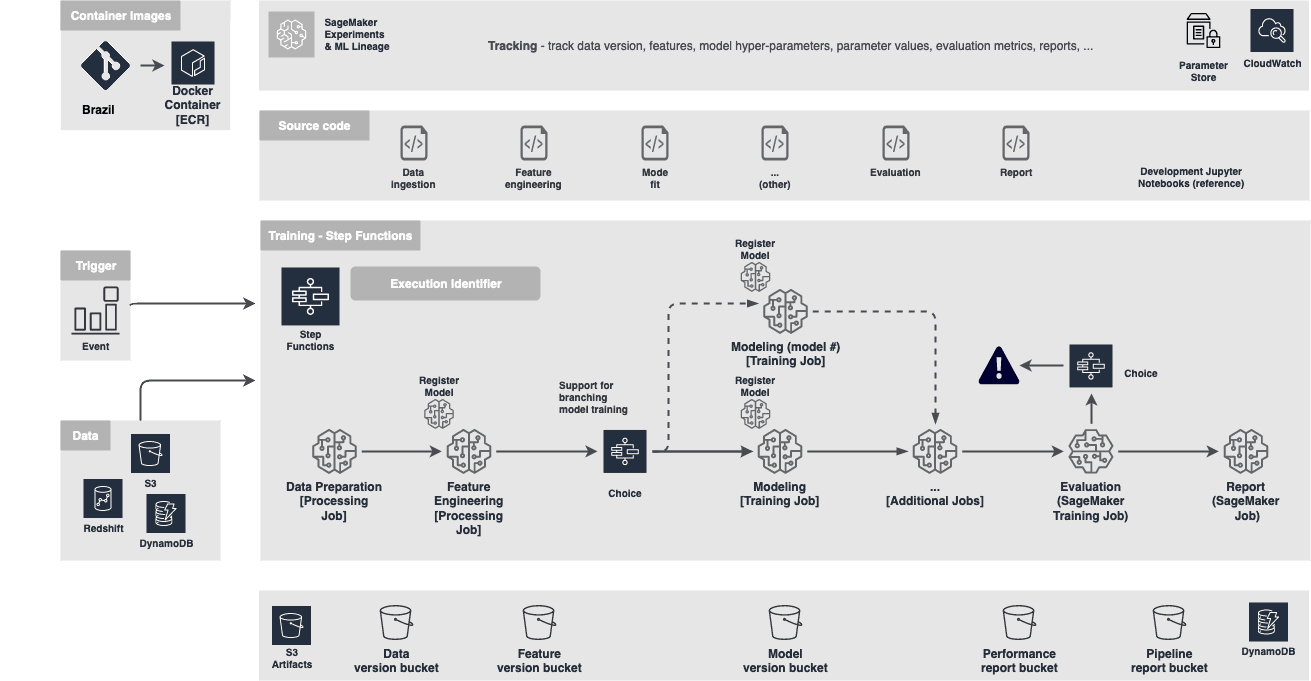
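Producing production-ready code with SageMaker usually means packaging training logic so that it runs unchanged on a laptop and inside a SageMaker training job. In script mode, the training container exposes the model output directory and each input channel through environment variables such as `SM_MODEL_DIR` and `SM_CHANNEL_<NAME>`. A minimal sketch, assuming a channel named `train` and CSV files with a `label` column; the mean-label "model" is a placeholder for real training code, and nothing here is SimplifAI's actual template.

```python
import argparse
import csv
import json
import os
from pathlib import Path


def parse_args(argv=None):
    parser = argparse.ArgumentParser()
    # SageMaker script mode passes these locations as environment variables;
    # the local fallbacks (hypothetical paths) let the same script run on a laptop.
    parser.add_argument("--model-dir", default=os.environ.get("SM_MODEL_DIR", "./model"))
    parser.add_argument("--train", default=os.environ.get("SM_CHANNEL_TRAIN", "./data/train"))
    # parse_known_args tolerates extra arguments, such as hyperparameters passed on the CLI.
    return parser.parse_known_args(argv)[0]


def train(train_dir: str, model_dir: str) -> dict:
    """Placeholder training step: 'learn' the mean of the label column and save it."""
    labels = []
    for path in sorted(Path(train_dir).glob("*.csv")):
        with open(path, newline="") as f:
            labels += [float(row["label"]) for row in csv.DictReader(f)]
    if not labels:
        raise ValueError(f"no labelled rows found in {train_dir}")
    model = {"mean_label": sum(labels) / len(labels), "n_examples": len(labels)}
    Path(model_dir).mkdir(parents=True, exist_ok=True)
    with open(Path(model_dir) / "model.json", "w") as f:
        json.dump(model, f)
    return model


def main(argv=None) -> None:
    args = parse_args(argv)
    if not Path(args.train).is_dir():
        print(f"training channel not found: {args.train}")
        return
    print(train(args.train, args.model_dir))


if __name__ == "__main__":
    main()
```

Keeping argument parsing separate from the training function lets the same `train` function be unit-tested locally and invoked as a training job's entry point.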

In production, training replicates the initial development workflow on an ongoing basis:

- SageMaker jobs orchestrated by Step Functions

- Monitoring model performance and stability

- Logical decisions and routing

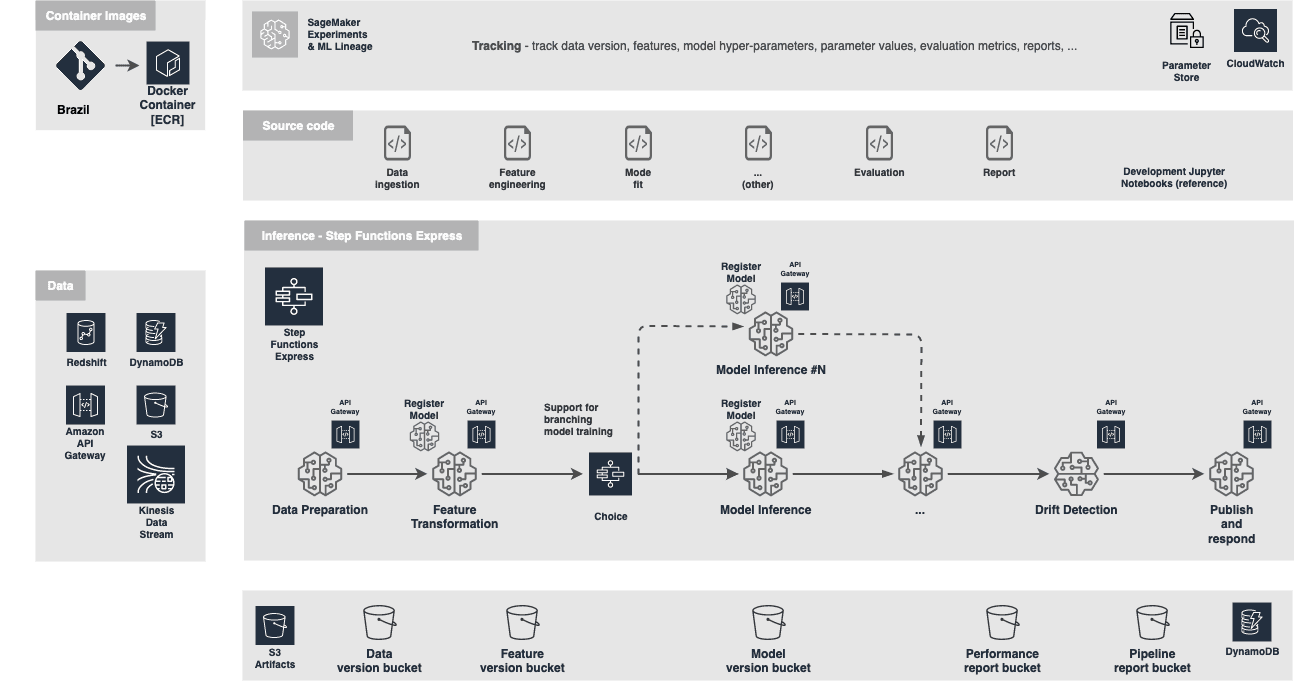
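The train, evaluate, and decide loop can be expressed as an Amazon States Language (ASL) definition for Step Functions. The sketch below builds one as a Python dictionary using the SageMaker `createTrainingJob.sync` and Lambda `invoke` service integrations. The state names, the execution-input keys (such as `training_job_name`), the `evaluate-model` Lambda, and its `{"auc": ...}` return shape are all hypothetical; the post does not show SimplifAI's actual state machines.

```python
import json

# Step Functions service-integration resources.
SAGEMAKER_TRAIN_SYNC = "arn:aws:states:::sagemaker:createTrainingJob.sync"
LAMBDA_INVOKE = "arn:aws:states:::lambda:invoke"


def build_training_workflow(min_auc: float,
                            evaluation_function: str = "evaluate-model") -> dict:
    """Return an ASL definition for train -> evaluate -> quality gate.

    `evaluation_function` is a hypothetical Lambda that scores the trained model
    on held-out data and returns {"auc": <float>}.
    """
    return {
        "Comment": "Retrain, evaluate, and gate a model (illustrative sketch)",
        "StartAt": "TrainModel",
        "States": {
            "TrainModel": {
                "Type": "Task",
                "Resource": SAGEMAKER_TRAIN_SYNC,  # .sync waits for the job to finish
                # Required CreateTrainingJob fields, read from hypothetical execution-input keys.
                "Parameters": {
                    "TrainingJobName.$": "$.training_job_name",
                    "AlgorithmSpecification.$": "$.algorithm_specification",
                    "RoleArn.$": "$.role_arn",
                    "InputDataConfig.$": "$.input_data_config",
                    "OutputDataConfig.$": "$.output_data_config",
                    "ResourceConfig.$": "$.resource_config",
                    "StoppingCondition.$": "$.stopping_condition",
                },
                "ResultPath": "$.training",  # keep the original input next to the job result
                "Next": "EvaluateModel",
            },
            "EvaluateModel": {
                "Type": "Task",
                "Resource": LAMBDA_INVOKE,
                "Parameters": {"FunctionName": evaluation_function, "Payload.$": "$"},
                "ResultSelector": {"auc.$": "$.Payload.auc"},
                "ResultPath": "$.evaluation",
                "Next": "CheckQuality",
            },
            "CheckQuality": {
                "Type": "Choice",
                "Choices": [{
                    "Variable": "$.evaluation.auc",
                    "NumericGreaterThanEquals": min_auc,
                    "Next": "ModelApproved",
                }],
                "Default": "ModelRejected",
            },
            "ModelApproved": {"Type": "Succeed"},
            "ModelRejected": {
                "Type": "Fail",
                "Error": "ModelQualityBelowThreshold",
                "Cause": "Evaluation AUC below the configured minimum",
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_training_workflow(min_auc=0.75), indent=2))
```

The printed JSON is what would be supplied as the state machine definition, for example through the Step Functions console or the `CreateStateMachine` API. In a fuller pipeline, the `ModelApproved` branch would continue into model registration or deployment rather than ending.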

Trained models are deployed for inference:

- SageMaker models and endpoints orchestrated by Step Functions

- Monitoring and drift detection

- Logical decision making

- Routing between different model flavors or versions
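SageMaker endpoints can route traffic between model versions natively by assigning weights to production variants. When routing has to happen in application code instead, for example to keep each customer pinned to one version during a comparison, a hash-based weighted choice is one simple approach. The sketch below illustrates that assumption; `choose_variant` and the variant names are hypothetical.

```python
from __future__ import annotations

import hashlib


def choose_variant(request_key: str, weights: dict[str, float]) -> str:
    """Pick a model variant for a request in proportion to the given weights.

    Hashing a stable key (e.g. a customer ID) pins each caller to the same
    variant, which keeps comparisons between model versions clean.
    """
    if any(w < 0 for w in weights.values()) or sum(weights.values()) <= 0:
        raise ValueError("weights must be non-negative with a positive sum")
    total = sum(weights.values())
    digest = hashlib.sha256(request_key.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a point in [0, total].
    point = int.from_bytes(digest[:8], "big") / 2**64 * total
    cumulative = 0.0
    eligible = [(v, w) for v, w in sorted(weights.items()) if w > 0]
    for variant, weight in eligible:
        cumulative += weight
        if point < cumulative:
            return variant
    return eligible[-1][0]  # floating-point rounding can leave point == total


if __name__ == "__main__":
    weights = {"model-v1": 0.9, "model-v2": 0.1}  # hypothetical 90/10 split
    counts = dict.fromkeys(weights, 0)
    for i in range(10_000):
        counts[choose_variant(f"customer-{i}", weights)] += 1
    print(counts)
```

Shifting traffic to a new version then becomes a matter of changing the weights, and setting a variant's weight to zero removes it from rotation.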

SimplifAI is an open framework aimed at accelerating the development and deployment of machine learning (ML) and artificial intelligence (AI) solutions. It provides opinionated guidelines and tooling to build robust MLOps pipelines while ensuring compliance with software development standards.

At its core, SimplifAI jumpstarts the process of taking models from research to production by providing well-architected blueprints for the ML lifecycle. This includes standardized workflows for data ingestion, feature engineering, model training, evaluation, and monitoring. Pre-built integrations with AWS services such as SageMaker, ECS, and CloudWatch facilitate rapid implementation without compromising reliability or scalability.

By leveraging SimplifAI, we have already operationalized multiple AI solutions on AWS significantly faster than we could by building custom pipelines. We have received strong positive feedback from early adopters indicating they were able to deploy high-quality models in weeks rather than months. The accelerated time-to-value, combined with enforced MLOps best practices, unlocks the potential of AI/ML while managing risk.

Any opinions in this post are those of the individual author and may not reflect the opinions of AWS.