Automated Assistants: Creating Seamless Workflow Integrations with Language Models

An Alfred workflow automates access to language models, enabling streamlined integration into writing workflows for augmented creativity and productivity.

Oshry Ben-Harush

Amazon Employee

Published Dec 27, 2023

Authors: Oshry Ben-Harush, Joe Standerfer

Language models have exploded in capability in recent years. They can now generate human-like text on demand on an endless array of topics. Because they are available as off-the-shelf products through APIs, anyone can use them to augment their writing. This helps level the playing field between average writers and excellent writers. With language models, we can get ideas and refine content more easily than ever before.

However, integrating language models into daily workflows remains clunky. Typically it requires manually copy-pasting content back and forth between apps. Alternatively, specialized software may be used, but these niche programs frequently lack support for desired functionalities. The friction introduced by these inelegant integrations hampers the ability to harness the power of language models to fluidly enhance writing.

Alfred is a Mac productivity app that allows automating workflows through customizable hotkeys and keywords. With some configuration, it can provide quick access to language models directly within our existing workflows.

In this post, I'll walk through creating a simple Alfred workflow that integrates the Amazon Bedrock API. Amazon Bedrock offers advanced models such as Claude, Llama, and Jurassic-2 through an easy-to-use API.

The workflow triggers Bedrock's text generation capabilities through a custom Alfred keyword. We can send question prompts directly to Bedrock, or select text in the application we are working in and ask Bedrock to augment it. Bedrock then returns the text generated by its underlying language model. This allows rapidly exploring ideas that we can then refine and incorporate as needed into longer-form writing.

By handling the busywork of calling APIs and rendering responses, Alfred streamlines bringing AI-powered language models directly into our daily writing. No more context switching between apps and windows. The creative flow can continue uninterrupted.

Though simple, workflows like this preview how AI could fundamentally change how we write. Language models reduce barriers that previously made excellent writing time-intensive and difficult. Powerful automation handled in the background by Alfred allows the full capabilities of large language models to be integrated directly into existing workflows.

The impacts on writing and content creation could be profound. High-quality, customized content generated with the ease of a conversational agent will soon be accessible to anyone. As these technologies continue to improve rapidly and streamlined access reduces friction, they may one day match human capabilities across all facets of communication.

As conversational AI continues to advance, these systems will be able to automatically retrieve the most relevant information needed, recommend optimal components for workflows, and even determine which tasks can be automated and subsequently build the automation workflows required.

While Alfred is featured here, it is by no means the only automation tool out there. Most operating systems come bundled with built-in automation utilities as well. For example, Windows has PowerShell and macOS offers AppleScript. There are also various third-party apps available across platforms.

The key is finding a tool that enables straightforward integration of language models into your daily tasks. Whether it is through textual interfaces, voice commands, or graphical workflow builders, the automation software should allow you to leverage AI to streamline repetitive jobs. This frees you up to focus more time on creative and meaningful work.

As AI capabilities continue advancing, the possibilities for merging them with personal automation tools will keep multiplying. Any program that facilitates this human-AI collaboration effectively stands to significantly augment how we work and live.

Here are some suggestions for alternative automation software to Alfred for Windows and Mac:

Windows:

- AutoHotkey - Open-source automation and scripting language for Windows

- AutoIt - Automation language for Windows to automate repetitive tasks

- PowerToys - Utilities from Microsoft that include a keyboard manager and other automation tools

Mac:

- Keyboard Maestro - Macro program for macOS to automate workflows and tasks

- Hammerspoon - Automation tool for macOS using the Lua scripting language

- BetterTouchTool - Highly customizable input manager and automation tool for macOS

- Automator - Apple's built-in automation tool in macOS for creating automated workflows

The main alternatives across both platforms tend to be scripting languages and tools such as AutoHotkey, AutoIt, Keyboard Maestro, and Hammerspoon, which allow binding actions and sequences to shortcuts as well as building more complex automation workflows. Windows and macOS also ship with built-in automation utilities from Microsoft and Apple.

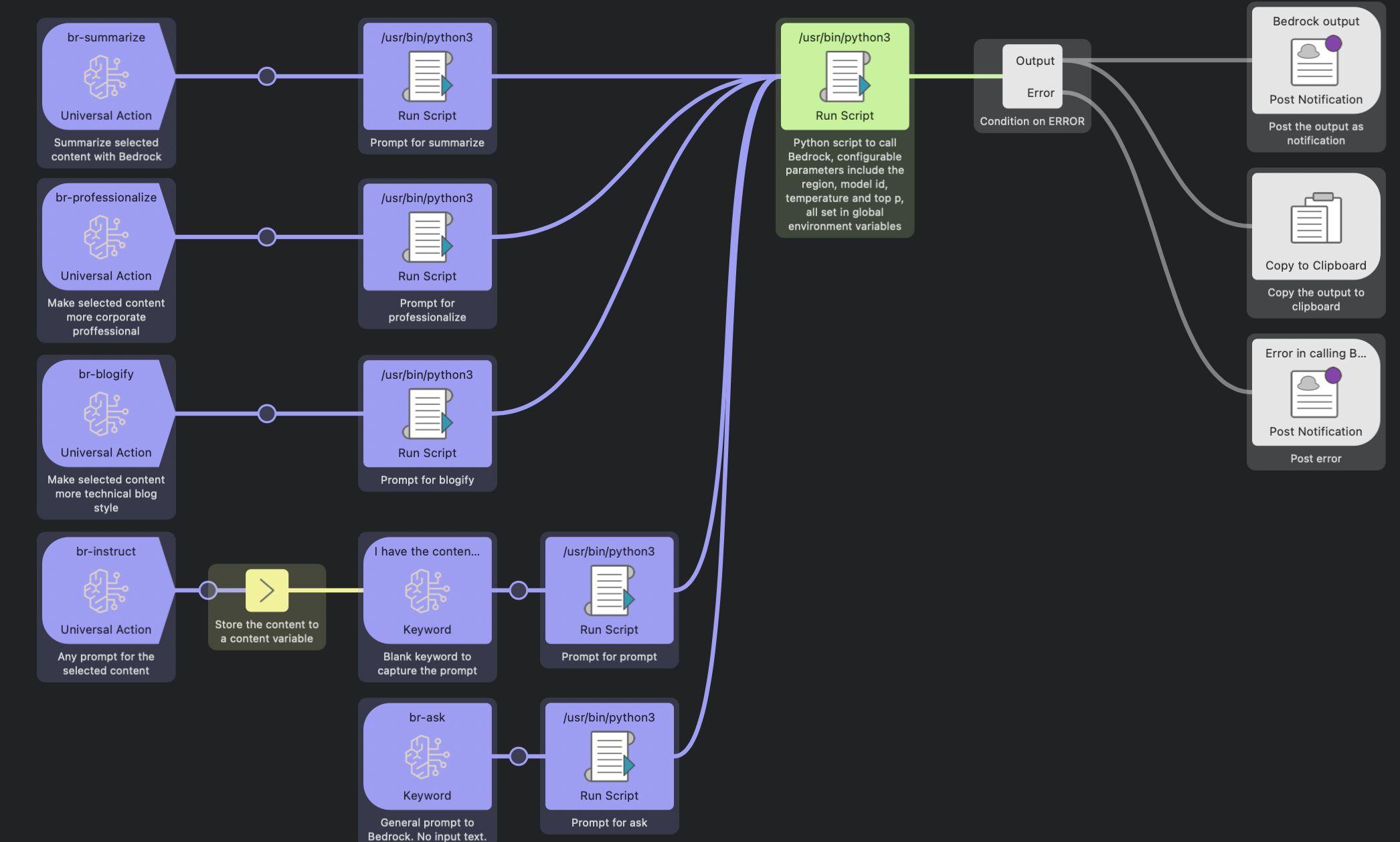

The main workflow comprises a set of universal actions, keywords, and Python scripts designed to accomplish the following:

- Generate summaries of selected text

- Revise the selected text to enhance professionalism

- Transform the writing style of the selected text to resemble a blog format

- Execute instructions provided by the user on the selected text

- Enable users to pose questions or issue directives to the Bedrock model

Each operation generates a prompt that is passed to the main Python script designed to interface with and invoke the Bedrock model. The script code:
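A minimal sketch of what such a script could look like is shown here. It is an illustration, not the authors' actual script. It assumes the `boto3` SDK with AWS credentials already configured, the `anthropic.claude-v2` model, and Claude's text-completions request format; the function names `build_request_body`, `invoke_bedrock`, and `main` are hypothetical.

```python
import json

# Assumed model ID; any Bedrock text model could be substituted,
# but the request and response formats differ between model providers.
MODEL_ID = "anthropic.claude-v2"


def build_request_body(prompt, max_tokens=500):
    """Wrap a prompt in the Claude text-completions request format."""
    return json.dumps({
        "prompt": f"\n\nHuman: {prompt}\n\nAssistant:",
        "max_tokens_to_sample": max_tokens,
        "temperature": 0.5,
    })


def invoke_bedrock(prompt, client=None):
    """Send the prompt to Bedrock and return the generated text."""
    if client is None:
        # Imported lazily so the helpers above can be used without boto3.
        import boto3
        client = boto3.client("bedrock-runtime")
    response = client.invoke_model(
        modelId=MODEL_ID,
        body=build_request_body(prompt),
        contentType="application/json",
        accept="application/json",
    )
    # The response body is a stream containing a JSON document.
    payload = json.loads(response["body"].read())
    return payload["completion"].strip()


def main(argv, client=None):
    """Entry point: the prompt is expected as the first argument."""
    return invoke_bedrock(argv[1], client=client)
```

In an Alfred Run Script object configured to pass its input as `argv`, the script would end with something like `print(main(sys.argv))` so that the response flows on to the next object in the workflow.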

The prompt is constructed for each of the individual actions, for example, the summarize action:
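As a rough sketch, the per-action prompts could be built from simple templates. The template wording, the action names, and the `build_prompt` helper below are assumptions made for illustration, not the prompts used in the original workflow.

```python
# Hypothetical prompt templates, one per workflow action.
PROMPT_TEMPLATES = {
    "summarize": "Summarize the following text in a few sentences:\n\n{text}",
    "professional": "Rewrite the following text so it reads more professionally:\n\n{text}",
    "blog": "Rewrite the following text in the style of a blog post:\n\n{text}",
    "instruct": "{instruction}\n\n{text}",
}


def build_prompt(action, text, instruction=""):
    """Fill the template for the given action with the selected text."""
    template = PROMPT_TEMPLATES[action]
    # Unused keyword arguments are ignored by str.format, so the same
    # call works for templates with and without an instruction slot.
    return template.format(text=text, instruction=instruction)


# Example: the prompt produced by the summarize action.
summary_prompt = build_prompt("summarize", "Alfred is a Mac productivity app.")
```

Keeping the templates in one dictionary means a new action only needs a new entry plus a matching Alfred universal action or keyword.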

The response is then posted as a notification and copied to the clipboard, where it can be used to replace or augment the original text.
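Alfred provides built-in Copy to Clipboard and Post Notification outputs for this step. For readers using a different tool, the same behavior could be approximated from a script on macOS with `pbcopy` and `osascript`. The `deliver` and `applescript_quote` helpers and the notification title below are hypothetical names chosen for this sketch.

```python
import subprocess


def applescript_quote(text):
    """Escape backslashes and double quotes for an AppleScript string literal."""
    return '"' + text.replace("\\", "\\\\").replace('"', '\\"') + '"'


def deliver(response, run=subprocess.run):
    """Copy the response to the clipboard and post a macOS notification."""
    # pbcopy reads the new clipboard contents from standard input.
    run(["pbcopy"], input=response.encode("utf-8"), check=True)
    # Collapse whitespace and truncate so the notification stays short.
    preview = " ".join(response.split())[:200]
    script = (
        f"display notification {applescript_quote(preview)} "
        'with title "Bedrock response"'
    )
    run(["osascript", "-e", script], check=True)
```

The `run` parameter defaults to `subprocess.run` and exists only so the function can be exercised without touching the real clipboard.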

This post introduced Alfred, a Mac productivity app, as a means to create custom workflows that integrate with language models through the Amazon Bedrock API. We walked through building a simple Alfred workflow with keywords that trigger Bedrock's text generation capabilities, allowing users to rapidly explore ideas by posing questions and getting AI-generated responses. This streamlines bringing powerful language models into daily writing workflows by handling the busywork of calling APIs and managing responses. While the post focused on Alfred, similar automation tools across platforms can achieve comparable integration. The key is finding tools that make it straightforward to merge language models with existing workflows and so maximize human-AI collaboration. A sample workflow was outlined to demonstrate invoking Bedrock for actions like summarizing text, revising phrasing, and transforming writing style.

[The summary and several other paragraphs in the text were made more professional through streamlined interaction with Amazon Bedrock and Anthropic Claude :-)]

Any opinions in this post are those of the individual author and may not reflect the opinions of AWS.