Automating Your Boring Work With S3 Batch Operations

During re:Invent 2023, AWS added the ability for developers to apply S3 Batch Operations to specific objects. Let's learn about the S3 Batch Operations feature.

Published Jan 29, 2024

Engineers who manage blob files in the S3 service on AWS often receive tickets that require large-scale administrative tasks on S3 buckets. Typical examples include copying or replicating files from one bucket to another, deleting files that match a certain filter, or renaming a category of files to add a prefix.

To avoid wasting time performing these large-scale operations manually, most engineers prefer to automate the process with shell scripts that use the AWS S3 API or SDKs. Although such scripts may seem to work, using them to execute your S3 operations presents other challenges, such as error handling, securing credentials, and the maintenance overhead of the scripts themselves.

Aside from the use of scripts, what other way can engineers seamlessly perform bulk operations on S3 buckets? The answer is AWS S3 Batch Operations!

Batch Operations is a feature of the S3 service that allows developers to design and execute large-scale operations on S3 buckets directly from the AWS console. The operations are executed as managed jobs, which gives the developer visibility into the state of each running job.

Following the releases from re:Invent 2023, developers can now use Batch Operations to execute tasks on objects matching a specific prefix, suffix, creation date, or storage class. Some of the operations you can perform through a batch operation include managing object tags, access control lists (ACLs), and Object Lock settings, or copying or replicating objects from a staging bucket to a production bucket. For more custom logic, such as compressing all objects within a bucket through a third-party service, Batch Operations can invoke a Lambda function within AWS.
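To make the filtering options above more concrete, here is a minimal sketch that builds the manifest-generator part of a `create_job` request for the boto3 `s3control` client. The bucket name, prefix, suffix, and date are placeholders, and the field names (`S3JobManifestGenerator`, `KeyNameConstraint`, `MatchAnyStorageClass`, and so on) are assumed from the S3 Control `CreateJob` API, so verify them against the current API reference before use.

```python
from datetime import datetime, timezone


def build_manifest_generator(source_bucket, prefixes=None, suffixes=None,
                             created_after=None, storage_classes=None):
    """Build a ManifestGenerator argument for s3control.create_job.

    Only the filters that are actually supplied end up in the request.
    """
    key_constraint = {}
    if prefixes:
        key_constraint["MatchAnyPrefix"] = list(prefixes)
    if suffixes:
        key_constraint["MatchAnySuffix"] = list(suffixes)

    object_filter = {}
    if key_constraint:
        object_filter["KeyNameConstraint"] = key_constraint
    if created_after:
        object_filter["CreatedAfter"] = created_after
    if storage_classes:
        object_filter["MatchAnyStorageClass"] = list(storage_classes)

    return {
        "S3JobManifestGenerator": {
            "SourceBucket": f"arn:aws:s3:::{source_bucket}",
            "EnableManifestOutput": False,  # do not save the generated manifest
            "Filter": object_filter,
        }
    }


# Placeholder values for illustration only.
generator = build_manifest_generator(
    "my-staging-bucket",
    prefixes=["reports/"],
    suffixes=[".csv"],
    created_after=datetime(2024, 1, 1, tzinfo=timezone.utc),
    storage_classes=["STANDARD"],
)
```

A dictionary like this would be passed as the `ManifestGenerator` argument in place of a `Manifest` that points at an inventory report.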

Read the "Batch-transcoding videos with S3 Batch Operations, AWS Lambda, and AWS Elemental MediaConvert" tutorial to learn how to use Lambda functions within a batch operation.
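For a sense of what such a Lambda function looks like, the sketch below handles a Batch Operations invocation and returns one result per task. It assumes invocation schema version 1.0, where each task carries a URL-encoded `s3Key` and an `s3BucketArn`. The `process_object` helper is hypothetical and stands in for your custom logic.

```python
from urllib.parse import unquote_plus


def process_object(bucket, key):
    """Hypothetical placeholder for the custom per-object logic."""
    return f"processed s3://{bucket}/{key}"


def lambda_handler(event, context):
    """Handle one S3 Batch Operations invocation (schema version 1.0 assumed)."""
    results = []
    for task in event["tasks"]:
        try:
            bucket = task["s3BucketArn"].split(":::")[-1]
            key = unquote_plus(task["s3Key"])  # keys arrive URL-encoded
            message = process_object(bucket, key)
            result_code = "Succeeded"
        except Exception as error:  # report the failure instead of crashing
            message = str(error)
            result_code = "PermanentFailure"
        results.append({
            "taskId": task["taskId"],
            "resultCode": result_code,
            "resultString": message,
        })

    return {
        "invocationSchemaVersion": event["invocationSchemaVersion"],
        "treatMissingKeysAs": "PermanentFailure",
        "invocationId": event["invocationId"],
        "results": results,
    }


# A made-up event for local experimentation.
sample_event = {
    "invocationSchemaVersion": "1.0",
    "invocationId": "example-invocation",
    "job": {"id": "example-job"},
    "tasks": [{
        "taskId": "task-1",
        "s3Key": "videos/my+clip.mp4",
        "s3VersionId": None,
        "s3BucketArn": "arn:aws:s3:::my-source-bucket",
    }],
}
response = lambda_handler(sample_event, None)
```

Returning `TemporaryFailure` instead of `PermanentFailure` would ask Batch Operations to retry the task, which may suit transient errors better.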

In this tutorial, you will learn how to use the Batch Operations feature of the S3 service by copying objects from one bucket to another.

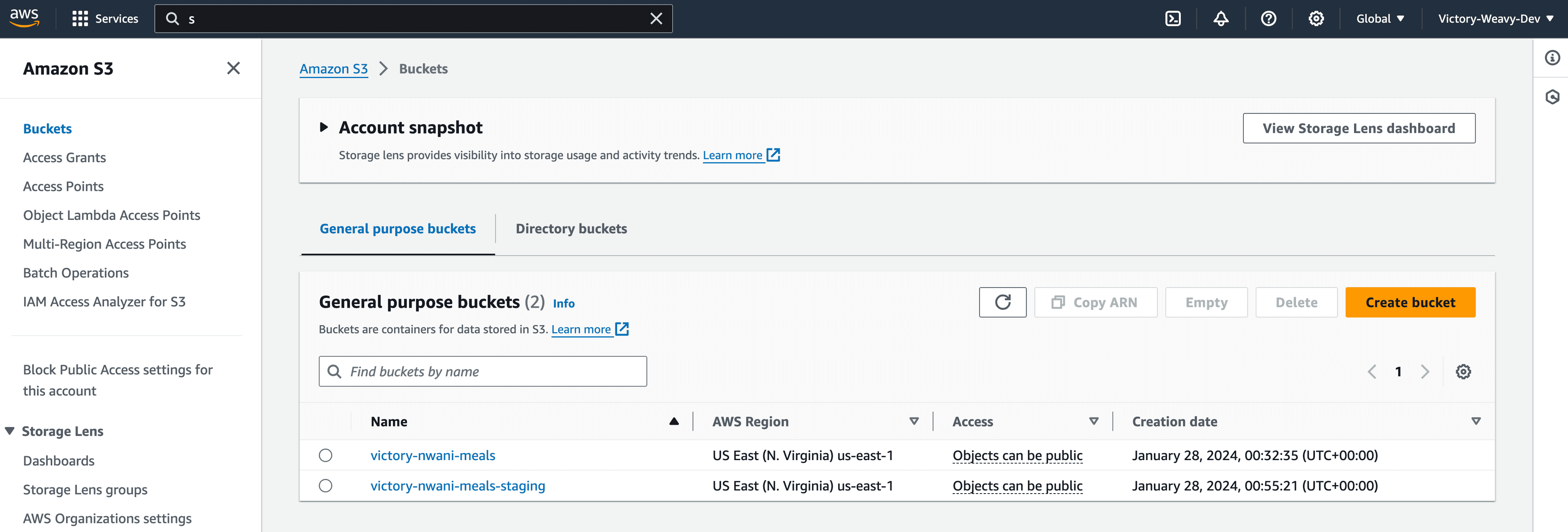

Before you proceed with the tutorial, please ensure that you have two S3 buckets within your AWS account as shown in the following image.

One bucket should have objects not present in the second bucket. The batch operation will copy the files from the first bucket to the second bucket.

The first step to using Batch Operations is to set up an inventory configuration on the source bucket, which generates reports containing the objects' metadata. AWS offers the option to create inventory configurations via the console, the CLI, or the REST API.

Launch your AWS console and navigate to the source bucket you want to use for this tutorial to begin the steps of creating an inventory configuration.

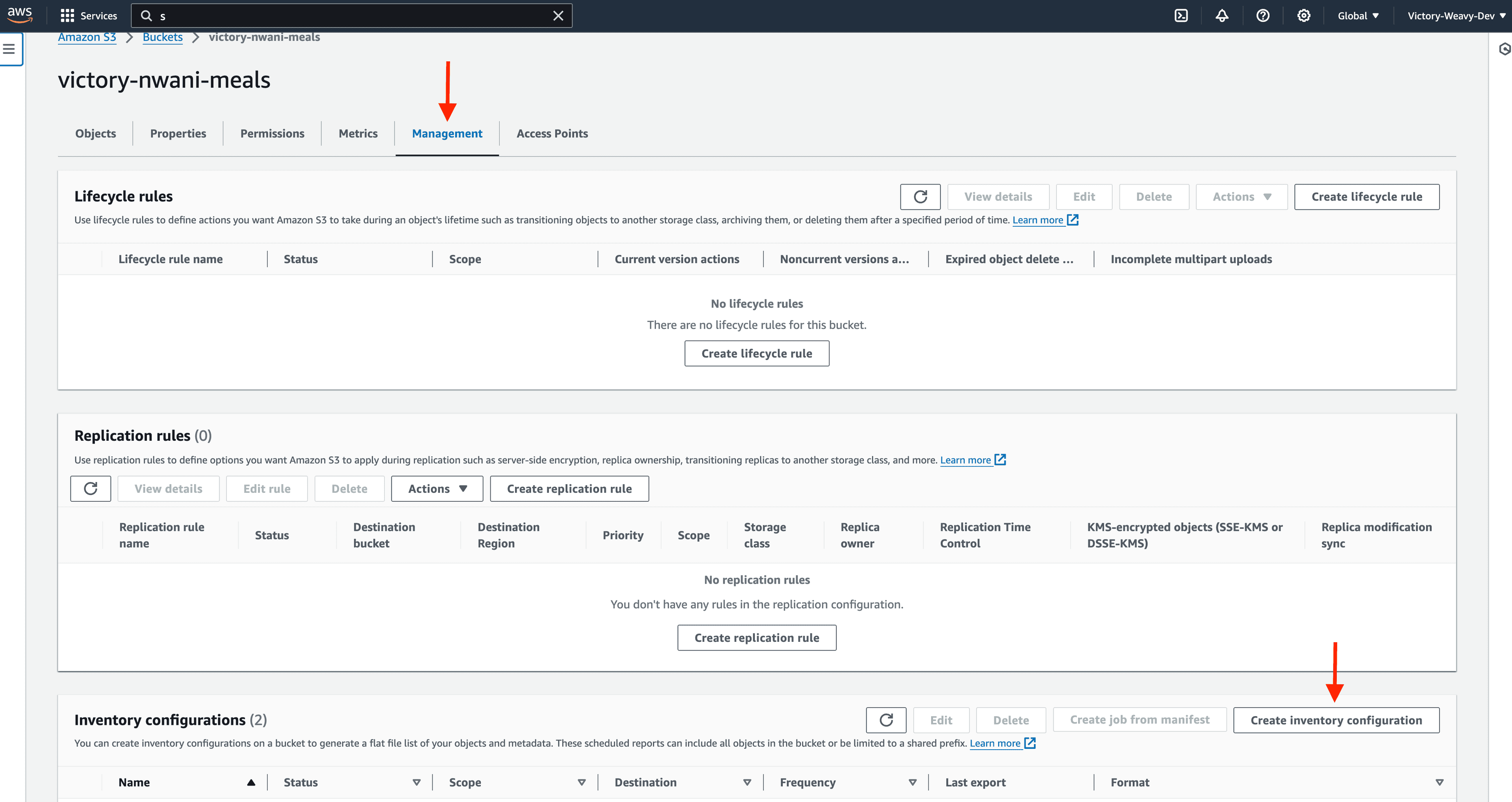

Click the Management tab, scroll down to the Inventory Configuration section, and click the Create Inventory Configuration button.

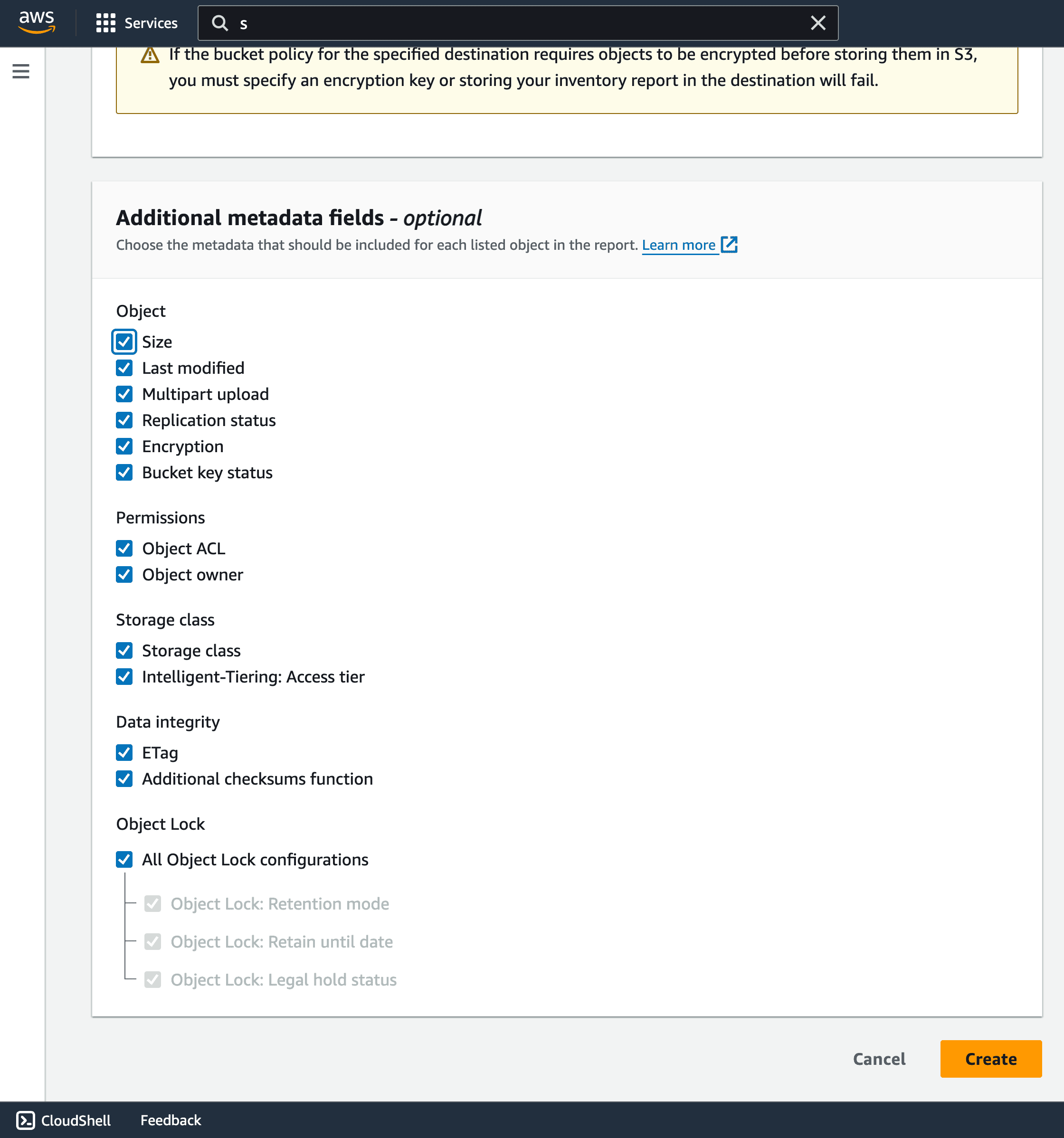

Specify your preferred value in the Inventory Configuration Name, select a destination bucket for the report details, and select all options within the additional metadata fields section to be included in the report.

NOTE: It can take up to 48 hours for the first inventory report to be exported. For this tutorial, the first export took 18 hours.
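If you prefer the SDK to the console, the same inventory configuration can be sketched with boto3's `put_bucket_inventory_configuration`. The bucket names, the `inventory` prefix, and the selected optional fields below are placeholders (the tutorial selects all metadata fields in the console). CSV is chosen because Batch Operations accepts CSV-formatted inventory reports as manifests.

```python
def build_inventory_configuration(config_id, destination_bucket, prefix="inventory"):
    """Build the InventoryConfiguration structure for a daily CSV report."""
    return {
        "Id": config_id,
        "IsEnabled": True,
        "IncludedObjectVersions": "Current",
        "Schedule": {"Frequency": "Daily"},
        # A small subset of the optional metadata fields, for illustration.
        "OptionalFields": ["Size", "LastModifiedDate", "StorageClass", "ETag"],
        "Destination": {
            "S3BucketDestination": {
                "Bucket": f"arn:aws:s3:::{destination_bucket}",
                "Format": "CSV",
                "Prefix": prefix,
            }
        },
    }


def create_inventory(source_bucket, config_id, destination_bucket):
    """Apply the configuration to the source bucket (needs AWS credentials)."""
    import boto3  # imported lazily so the builder above works without boto3

    s3 = boto3.client("s3")
    s3.put_bucket_inventory_configuration(
        Bucket=source_bucket,
        Id=config_id,
        InventoryConfiguration=build_inventory_configuration(
            config_id, destination_bucket
        ),
    )


# Placeholder names for illustration only.
config = build_inventory_configuration("daily-inventory", "my-report-bucket")
```

Unlike the console flow, the API call does not set up the destination bucket policy for you, so the destination bucket must already allow S3 to write the report.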

Because Batch Operations performs automated actions on behalf of the developer, it needs sufficient Identity and Access Management (IAM) permissions to access the S3 service. This section will guide you in creating an IAM policy and role for S3 Batch Operations.

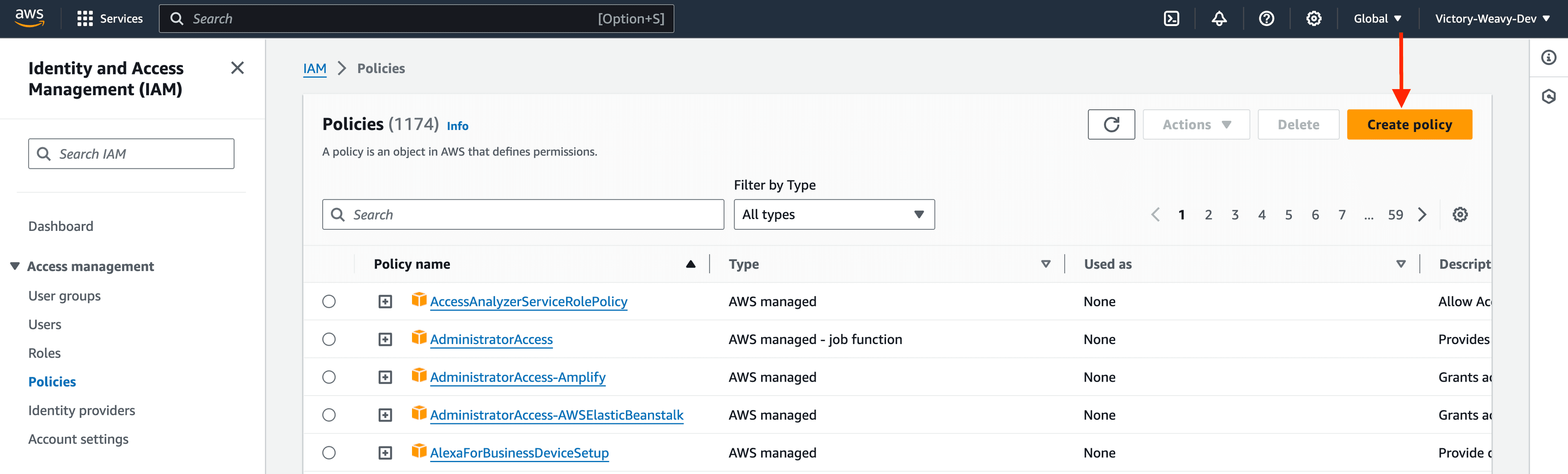

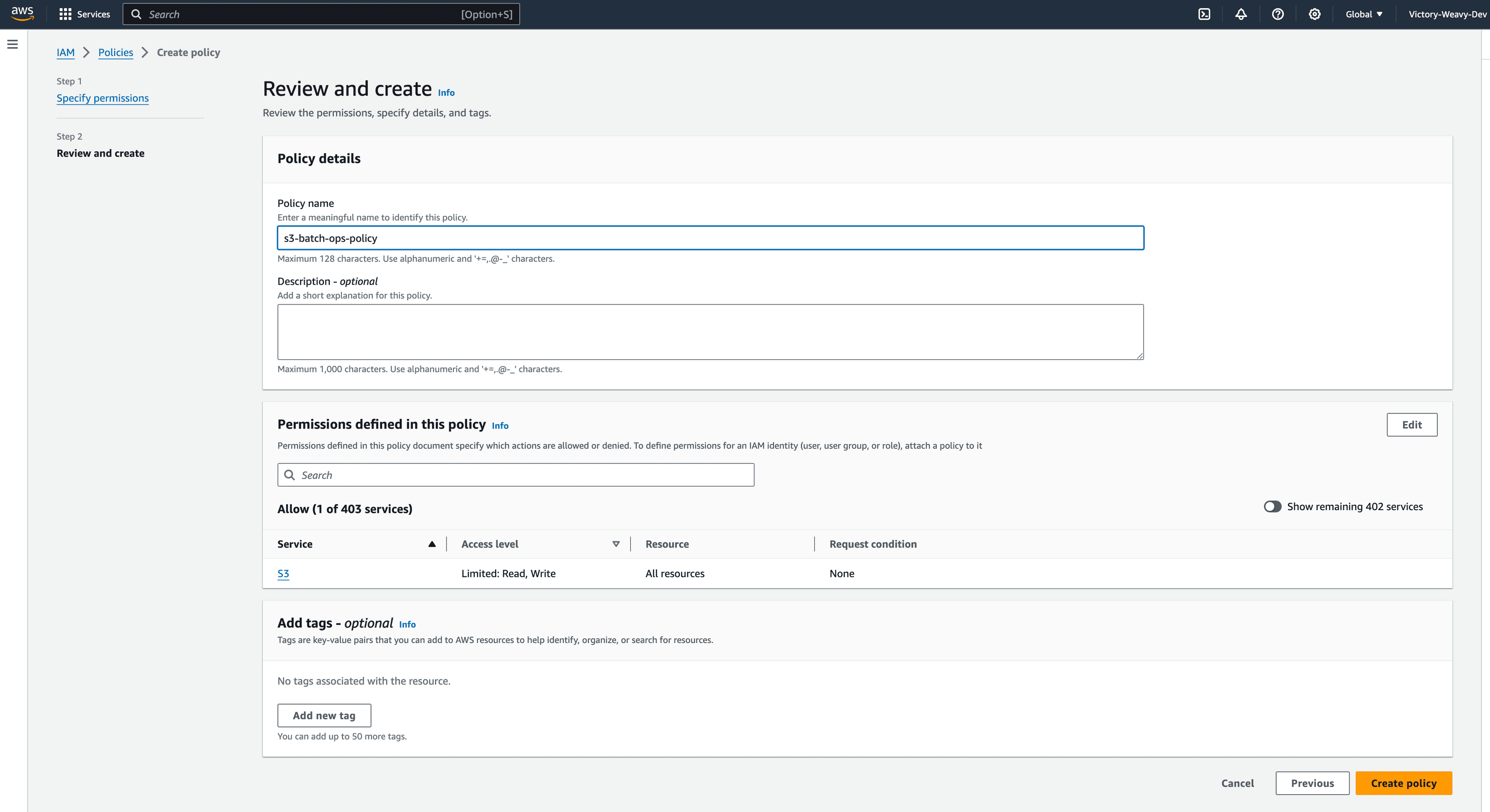

Navigate to the IAM > Policies page and click the Create Policy button to begin creating a policy.

Select S3 as the service for the policy and add the following permissions using the Visual Policy editor. Each of these permissions will permit READ and WRITE operations to objects within your bucket.

Optionally, you can restrict the policy to a specific bucket by specifying the bucket's ARN before creating the policy.

Click the Next button to specify your preferred name and description details for the policy.

Click the Create Policy button to finalize the entire process of creating the policy.
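As a point of comparison with the Visual Policy editor, the sketch below builds a policy document for a copy job in code. The exact permissions selected in this tutorial are not reproduced here, so the actions listed are an assumption about what a copy job typically needs: read access on the source, write access on the destination, read access to the manifest, and write access for the completion report. All bucket names are placeholders.

```python
import json


def build_copy_policy(source_bucket, destination_bucket,
                      manifest_bucket, report_bucket):
    """Build an IAM policy document scoped to the buckets of one copy job."""
    def arn(bucket, objects=False):
        return f"arn:aws:s3:::{bucket}" + ("/*" if objects else "")

    return {
        "Version": "2012-10-17",
        "Statement": [
            {   # read objects (and their ACLs and tags) from the source
                "Sid": "ReadSource",
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:GetObjectAcl",
                           "s3:GetObjectTagging", "s3:ListBucket"],
                "Resource": [arn(source_bucket), arn(source_bucket, True)],
            },
            {   # write the copies into the destination
                "Sid": "WriteDestination",
                "Effect": "Allow",
                "Action": ["s3:PutObject", "s3:PutObjectAcl",
                           "s3:PutObjectTagging"],
                "Resource": arn(destination_bucket, True),
            },
            {   # read the inventory manifest
                "Sid": "ReadManifest",
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:GetObjectVersion"],
                "Resource": arn(manifest_bucket, True),
            },
            {   # write the completion report
                "Sid": "WriteReport",
                "Effect": "Allow",
                "Action": ["s3:PutObject"],
                "Resource": arn(report_bucket, True),
            },
        ],
    }


# Placeholder bucket names for illustration only.
policy = build_copy_policy("my-source-bucket", "my-destination-bucket",
                           "my-report-bucket", "my-report-bucket")
policy_json = json.dumps(policy, indent=2)
```

The resulting JSON could be pasted into the JSON tab of the policy editor or passed as the `PolicyDocument` argument of boto3's `iam.create_policy`.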

The previous section guided you through the process of creating a reusable IAM policy for the S3 service. This section will further guide you through creating a role. You will attach the IAM policy from the previous section to the IAM Role.

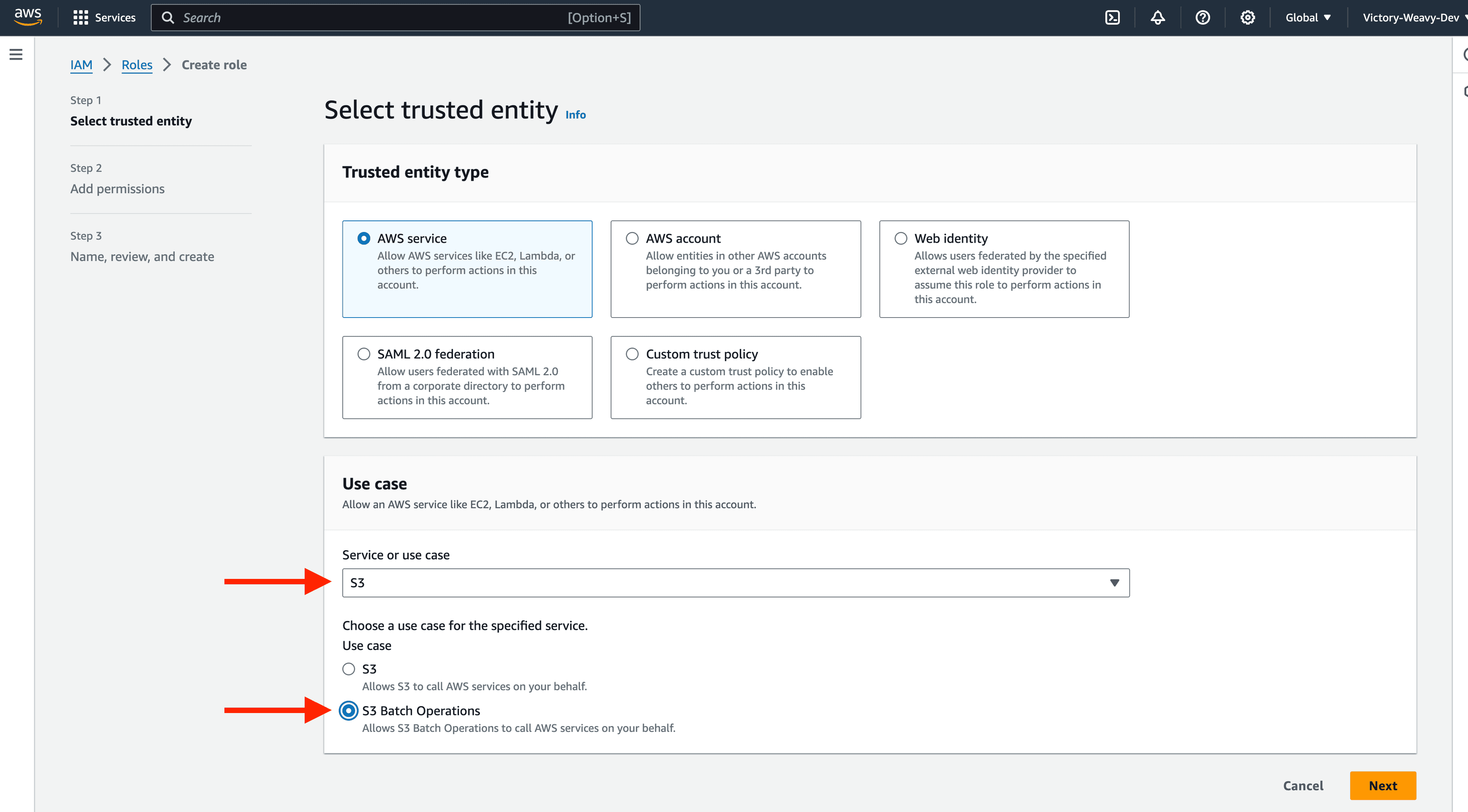

Navigate to the IAM > Roles page and click the Create Role button to begin the process of creating an IAM role.

Select S3 as the service and S3 Batch Operations as the use case for the role.

Click the Next button and attach the policy you created from the previous section to the role.

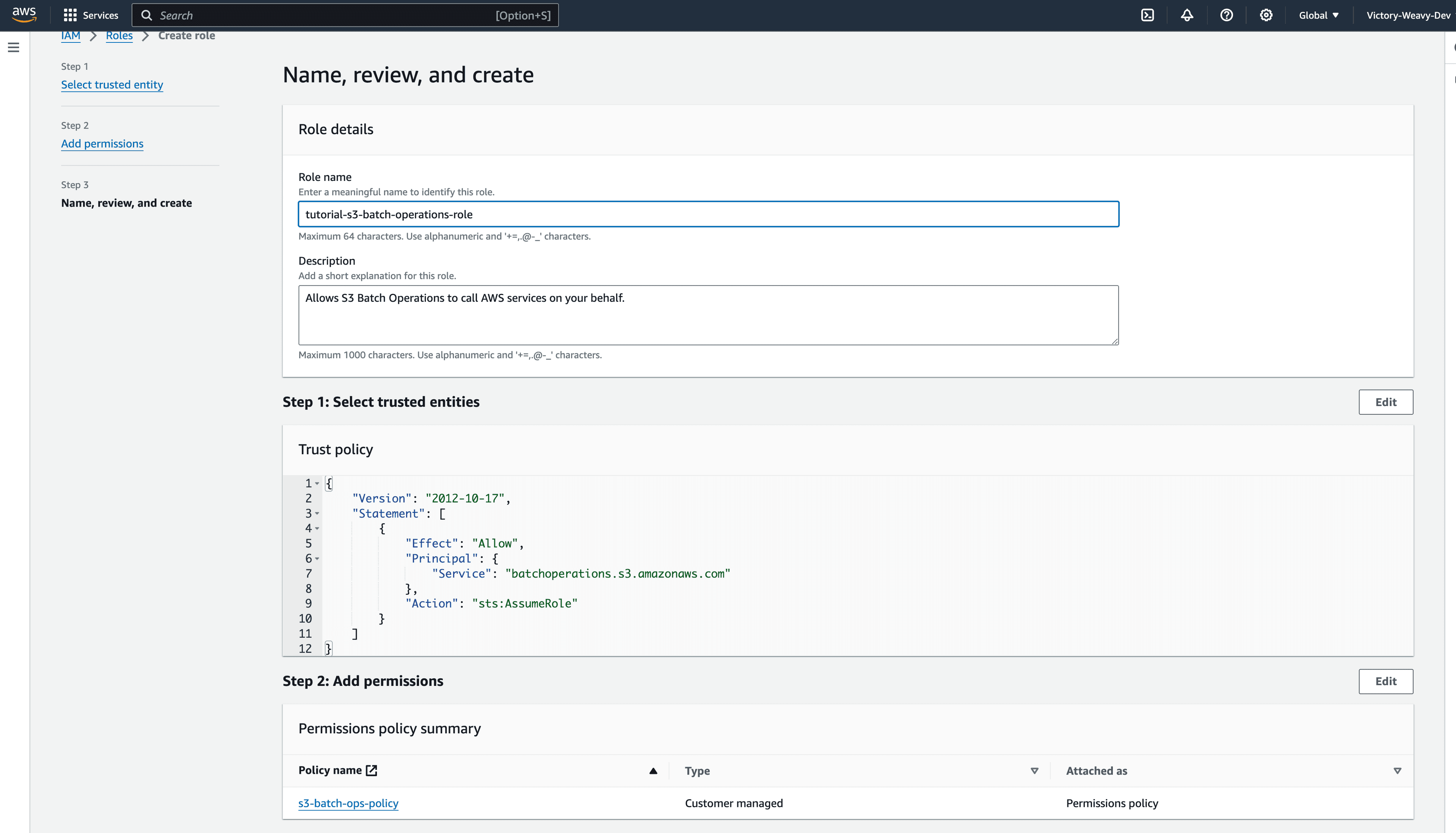

Click the Next button to specify your preferred name and description details for the IAM role.

Click the Create Role button to finalize creating an IAM role.
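Selecting S3 Batch Operations as the use case makes the role trust the Batch Operations service principal. A minimal sketch of the same role in code follows, assuming boto3 is installed and credentials are configured. The role name and policy ARN are whatever you chose in the earlier steps.

```python
import json


def build_trust_policy():
    """Trust policy that lets S3 Batch Operations assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"Service": "batchoperations.s3.amazonaws.com"},
            "Action": "sts:AssumeRole",
        }],
    }


def create_batch_role(role_name, policy_arn):
    """Create the role and attach an existing policy (needs AWS credentials)."""
    import boto3  # imported lazily so the builder above works without boto3

    iam = boto3.client("iam")
    role = iam.create_role(
        RoleName=role_name,
        AssumeRolePolicyDocument=json.dumps(build_trust_policy()),
    )
    iam.attach_role_policy(RoleName=role_name, PolicyArn=policy_arn)
    return role["Role"]["Arn"]


trust_policy = build_trust_policy()
```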

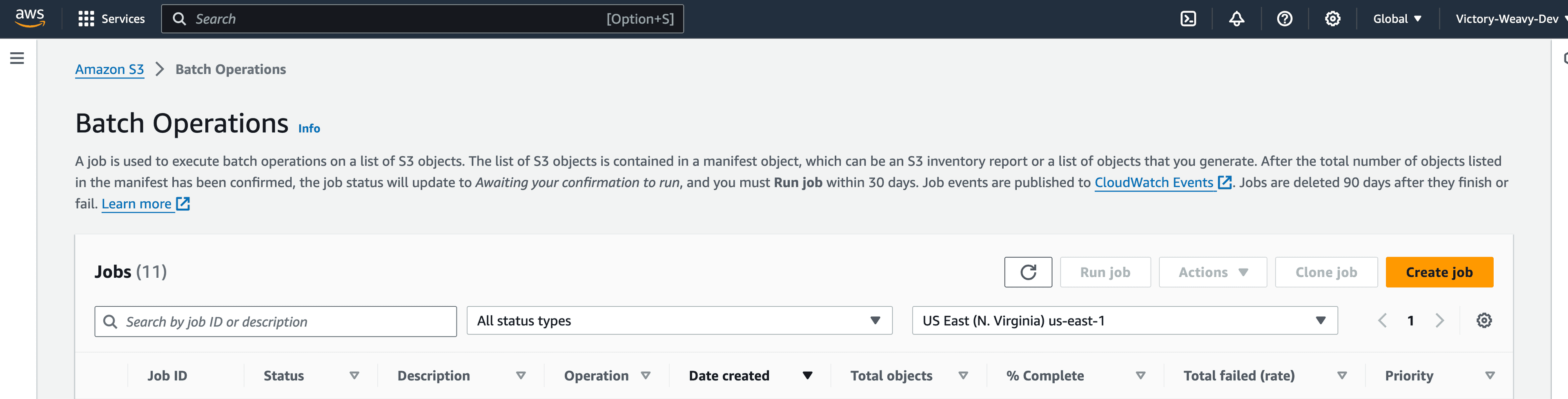

Navigate to the S3 > Batch Operations page and click the Create Job button to create your first batch operation.

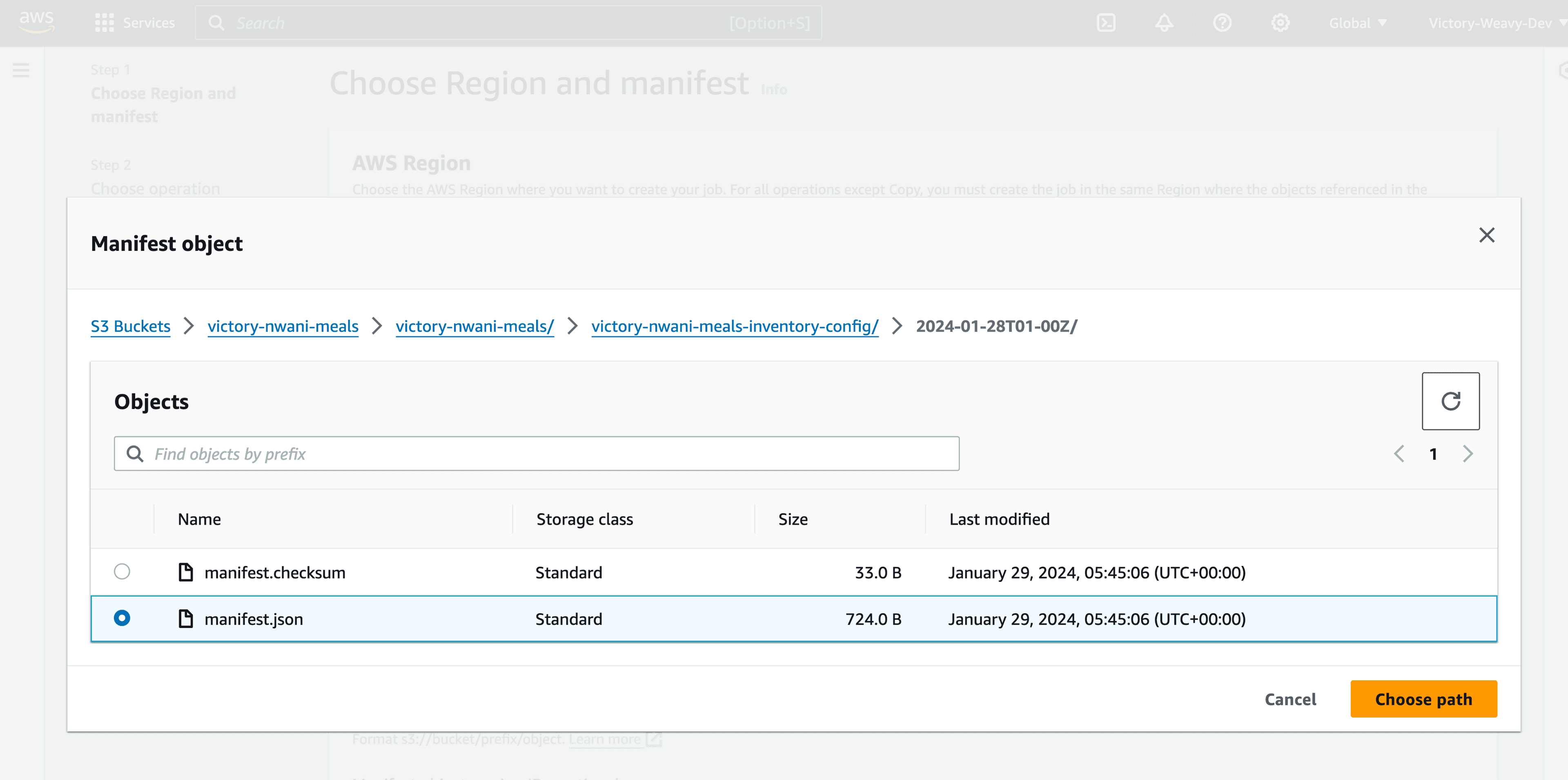

Click the Browse S3 button to find the manifest object that the inventory configuration exported into your bucket.

The image below shows the manifest object generated in the bucket for this tutorial:

If you find searching for the manifest file difficult, you can also create a Batch Operation from the Inventory Configuration section of the Management tab. This will automatically select the exported manifest for the configuration.
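If you would rather locate the manifest programmatically, the sketch below picks the most recent `manifest.json` from a list of object keys. It assumes the usual inventory layout of `prefix/source-bucket/config-id/YYYY-MM-DDTHH-MMZ/manifest.json`, where the timestamp folder sorts chronologically as plain text. The keys shown are invented for illustration.

```python
def latest_manifest_key(keys):
    """Return the key of the newest manifest.json, or None if there is none."""
    manifests = [key for key in keys if key.endswith("/manifest.json")]
    if not manifests:
        return None
    # The parent folder name is the report timestamp, e.g. 2024-01-28T01-00Z.
    return max(manifests, key=lambda key: key.split("/")[-2])


# Invented keys for illustration only.
example_keys = [
    "inventory/my-source-bucket/daily-inventory/2024-01-27T01-00Z/manifest.json",
    "inventory/my-source-bucket/daily-inventory/2024-01-27T01-00Z/manifest.checksum",
    "inventory/my-source-bucket/daily-inventory/2024-01-28T01-00Z/manifest.json",
    "inventory/my-source-bucket/daily-inventory/data/part-0001.csv.gz",
]
newest = latest_manifest_key(example_keys)
```

In practice the key list would come from listing the report bucket, for example with boto3's `list_objects_v2`.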

Click the Next button after selecting your manifest object to select what operation you want to perform within the batch job.

Select the Copy operation type to copy files from the current bucket to another destination bucket.

Click the Browse S3 button and select the second bucket, where you want to store the copied objects.

Other configuration options allow you to specify settings such as the storage class, ACL, tags, and metadata of the copied objects.

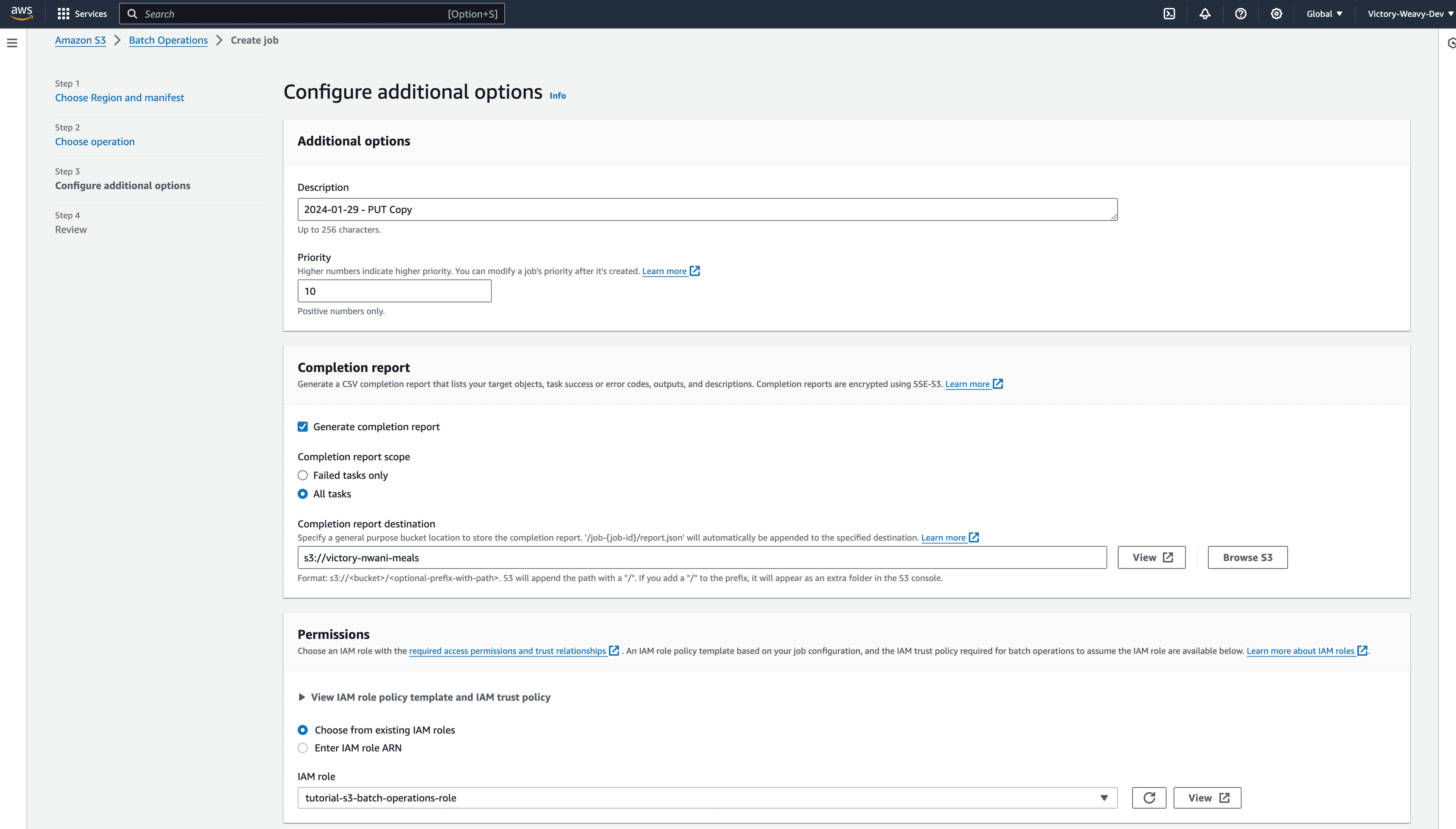

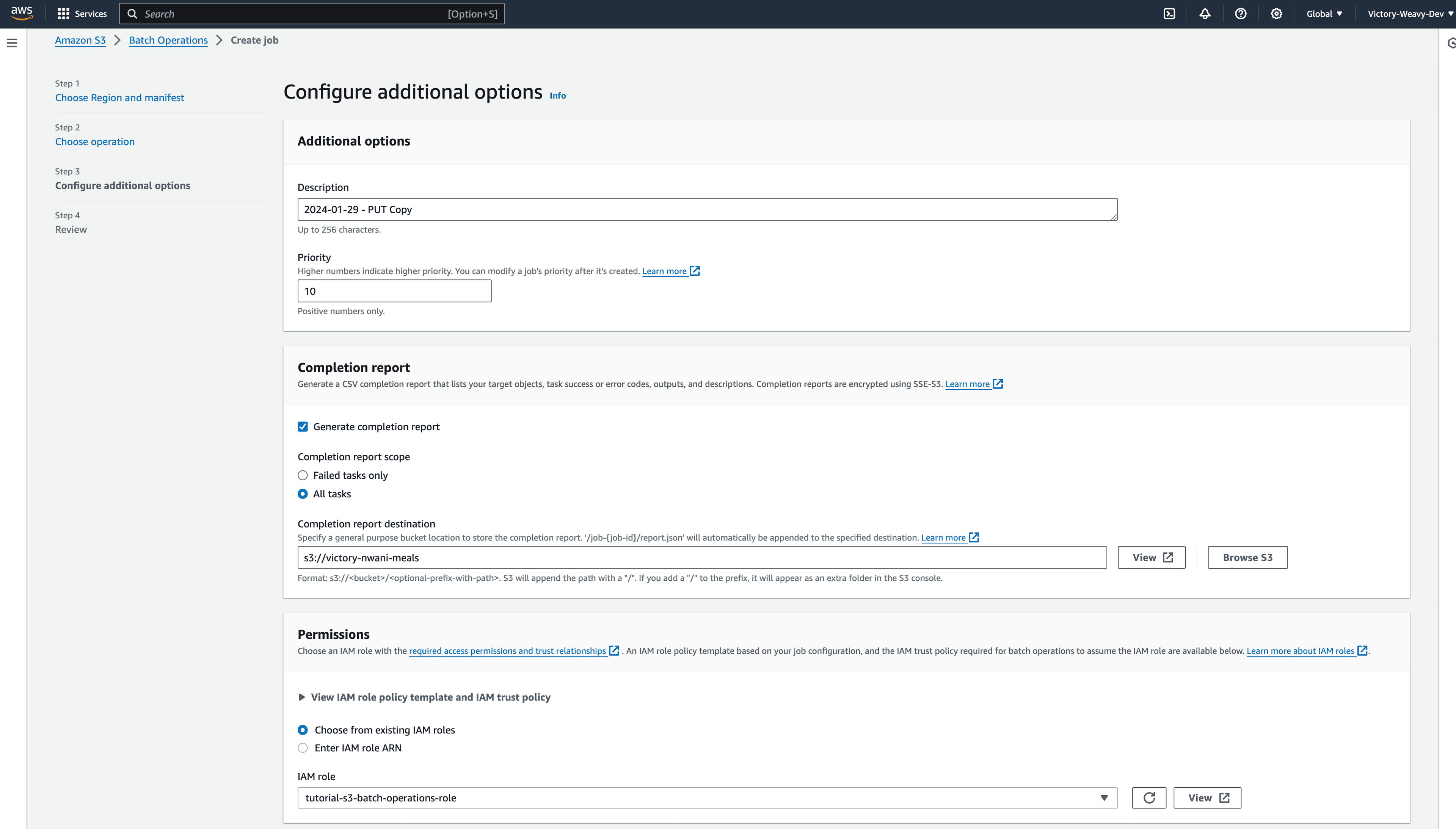

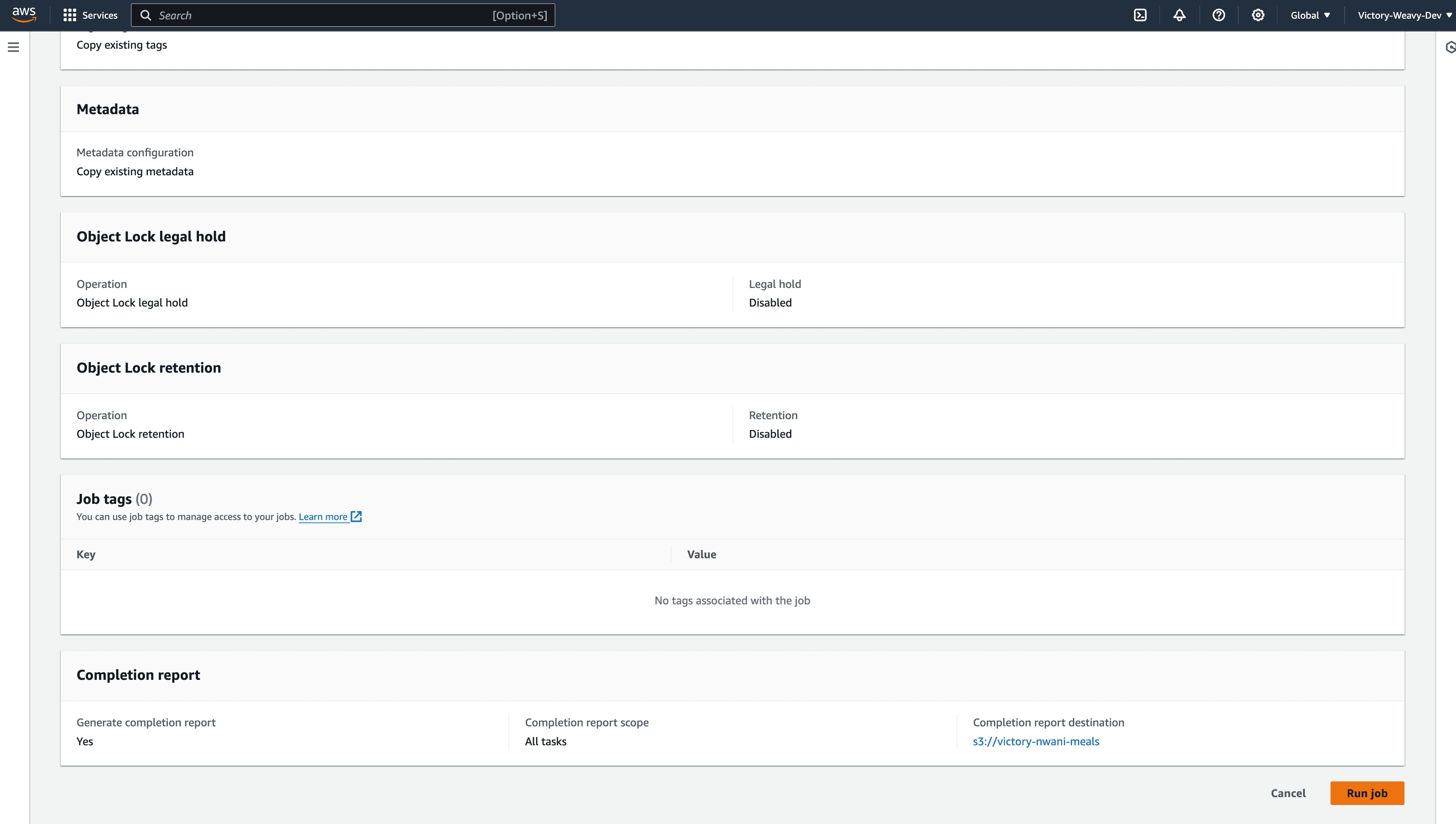

Click the Next button to configure the additional options for the batch job.

Click the Browse S3 button within the Completion report section to specify where the batch job report should be stored.

Select the IAM role you created from the previous section in the Permissions section.

Click the Next button, review the configurations, and click the Create Job button to save the job.
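The console steps above correspond roughly to a single `create_job` call on the boto3 `s3control` client. The sketch below builds that request under a few assumptions: the manifest is a CSV inventory report, the account ID, ARNs, ETag, bucket names, and the `batch-reports` prefix are placeholders, and `ConfirmationRequired` is set so the job waits to be run, as it does in the console.

```python
import uuid


def build_copy_job_request(account_id, role_arn, manifest_arn, manifest_etag,
                           destination_bucket, report_bucket):
    """Build keyword arguments for s3control.create_job (copy operation)."""
    return {
        "AccountId": account_id,
        "ConfirmationRequired": True,  # job waits until it is explicitly run
        "Description": "Copy objects to the destination bucket",
        "Priority": 10,
        "RoleArn": role_arn,
        "ClientRequestToken": str(uuid.uuid4()),  # makes retries idempotent
        "Operation": {
            "S3PutObjectCopy": {
                "TargetResource": f"arn:aws:s3:::{destination_bucket}",
            }
        },
        "Manifest": {
            "Spec": {"Format": "S3InventoryReport_CSV_20161130"},
            "Location": {"ObjectArn": manifest_arn, "ETag": manifest_etag},
        },
        "Report": {
            "Bucket": f"arn:aws:s3:::{report_bucket}",
            "Enabled": True,
            "Format": "Report_CSV_20180820",
            "Prefix": "batch-reports",
            "ReportScope": "AllTasks",
        },
    }


def create_copy_job(**kwargs):
    """Submit the job and return its ID (needs AWS credentials)."""
    import boto3  # imported lazily so the builder above works without boto3

    s3control = boto3.client("s3control")
    return s3control.create_job(**build_copy_job_request(**kwargs))["JobId"]


# Placeholder values for illustration only.
request = build_copy_job_request(
    account_id="111122223333",
    role_arn="arn:aws:iam::111122223333:role/example-batch-role",
    manifest_arn="arn:aws:s3:::my-report-bucket/inventory/manifest.json",
    manifest_etag="example-etag",
    destination_bucket="my-destination-bucket",
    report_bucket="my-report-bucket",
)
```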

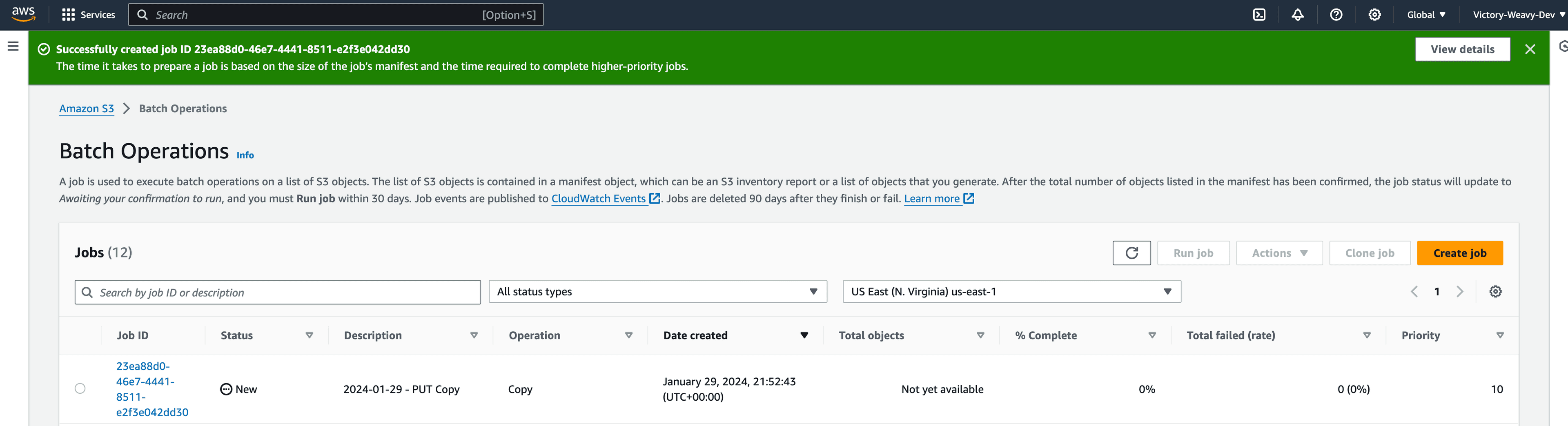

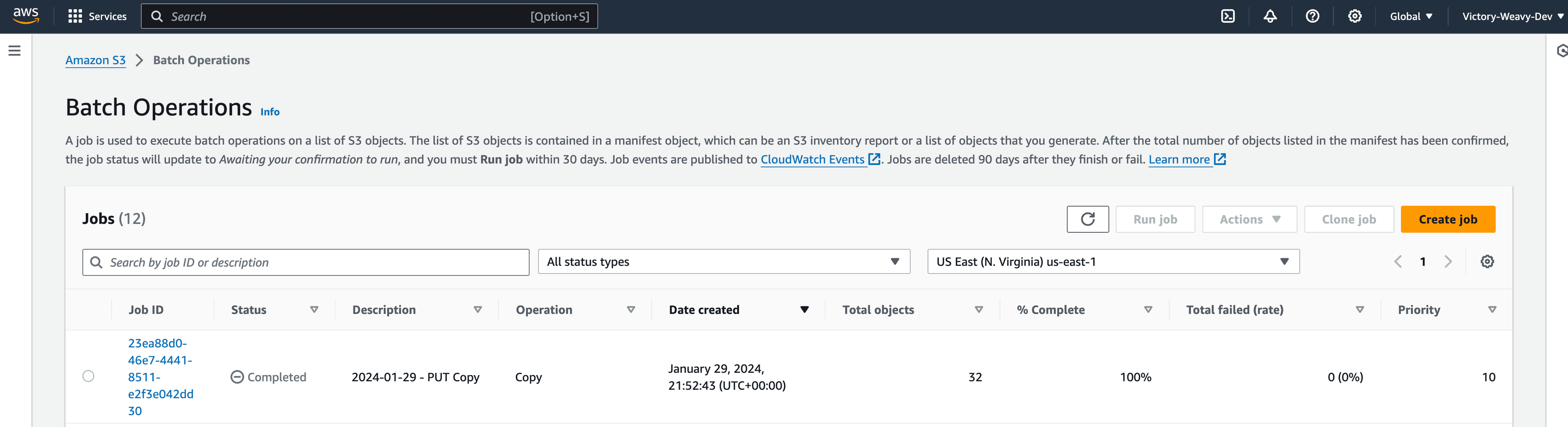

As shown in the following image, the new job will be displayed in the S3 Batch operations dashboard.

By default, S3 Batch Operations jobs are not executed immediately after they are created. You need to run the job yourself before the operation executes.

Click the new Job, review its configurations, and click the Run Job button to execute the operation.
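Outside the console, running a job that is awaiting confirmation can be sketched with `update_job_status` on the boto3 `s3control` client, which moves the job to the `Ready` state. The account ID and job ID below are placeholders.

```python
def build_run_request(account_id, job_id):
    """Build keyword arguments that confirm a job and let it start."""
    return {
        "AccountId": account_id,
        "JobId": job_id,
        "RequestedJobStatus": "Ready",
    }


def run_job(account_id, job_id):
    """Confirm the job and return its new status (needs AWS credentials)."""
    import boto3  # imported lazily so the builder above works without boto3

    s3control = boto3.client("s3control")
    response = s3control.update_job_status(
        **build_run_request(account_id, job_id)
    )
    return response["Status"]


# Placeholder identifiers for illustration only.
run_request = build_run_request("111122223333", "example-job-id")
```

Passing `Cancelled` as the requested status instead would cancel the job rather than run it.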

Columns within the job overview on the S3 Batch Operations page provide insight into the job execution. For example, the Status column displays the overall state of the job, while the Total failed (rate) column shows whether any of the objects in the job failed.
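The same information is available from the `describe_job` call on the boto3 `s3control` client. The sketch below reduces a response to the status and a failure rate similar to the console columns. The sample response is made up and contains only the fields the function reads.

```python
def summarize_job(describe_job_response):
    """Extract the status and failure rate from a describe_job response."""
    job = describe_job_response["Job"]
    progress = job.get("ProgressSummary", {})
    total = progress.get("TotalNumberOfTasks", 0)
    failed = progress.get("NumberOfTasksFailed", 0)
    # Guard against division by zero while the job is still being prepared.
    failed_rate = round(failed * 100 / total, 2) if total else 0.0
    return {
        "status": job["Status"],
        "total": total,
        "failed": failed,
        "failed_rate_percent": failed_rate,
    }


# Made-up response for illustration only.
sample_response = {
    "Job": {
        "Status": "Complete",
        "ProgressSummary": {
            "TotalNumberOfTasks": 200,
            "NumberOfTasksSucceeded": 197,
            "NumberOfTasksFailed": 3,
        },
    }
}
summary = summarize_job(sample_response)
```

With real credentials, the input would come from `boto3.client("s3control").describe_job(AccountId=..., JobId=...)`.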

At this point, you have learned how to use the S3 Batch Operations feature to copy files from one bucket to another without breaking a sweat!

- https://docs.aws.amazon.com/AmazonS3/latest/userguide/storage-inventory.html?icmpid=docs_amazons3_console

- https://docs.aws.amazon.com/AmazonS3/latest/userguide/tutorial-s3-batchops-lambda-mediaconvert-video.html

- https://docs.aws.amazon.com/AmazonS3/latest/userguide/batch-ops.html

- https://aws.amazon.com/about-aws/whats-new/2023/11/amazon-s3-batch-operations-buckets-prefixes-single-step/