How to Use Proprietary Foundation Models with Amazon SageMaker JumpStart

Amazon SageMaker JumpStart supports popular proprietary foundation models from providers such as AI21 Labs, Cohere, and LightOn. In this blog post, learn how to try these models in a preview playground in the AWS console and deploy them from SageMaker Studio. You can test these models without writing a single line of code and easily evaluate them on your specific tasks.

Channy Yun

Amazon Employee

Published Feb 7, 2024

Foundation models are large-scale machine learning (ML) models that contain billions of parameters and are pre-trained on vast datasets of text and images, such as the entirety of Wikipedia and publicly available literature across multiple spoken languages as well as computer programming languages. As a result, they can perform a wide range of tasks, such as article summarization and text, image, or video generation. Because foundation models are pre-trained, they can help lower training and infrastructure costs and enable customization for your use case.

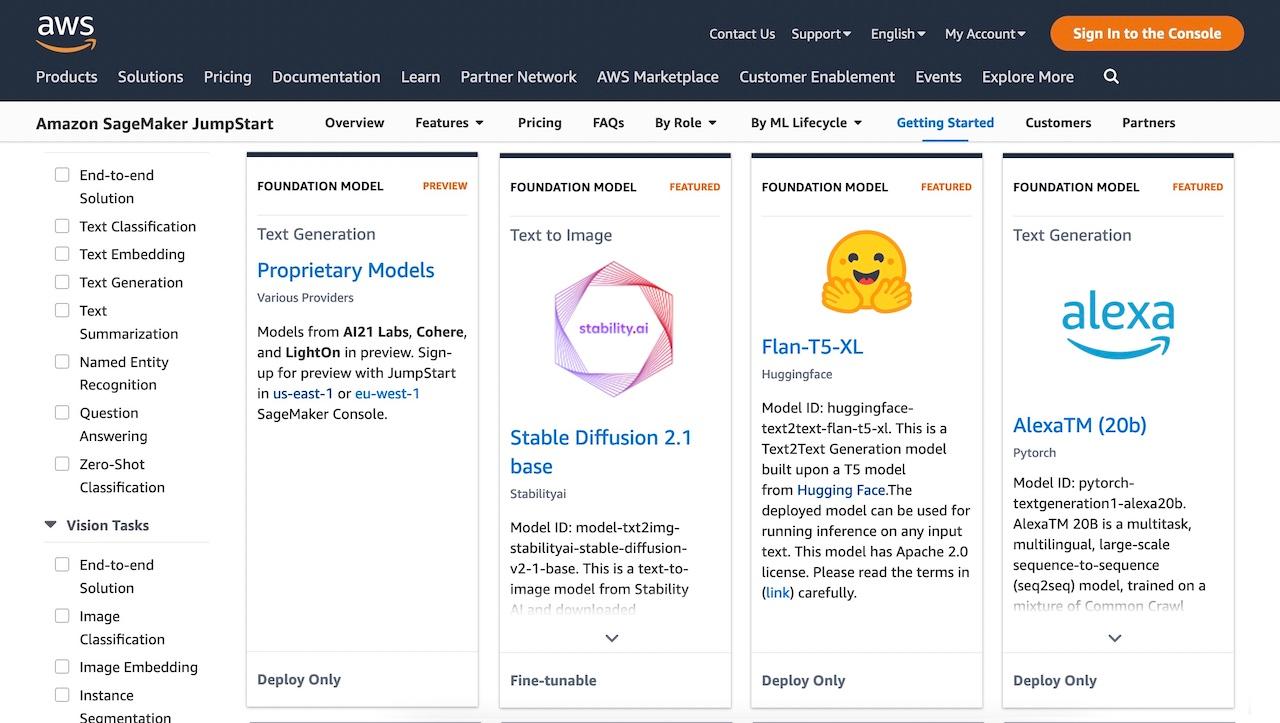

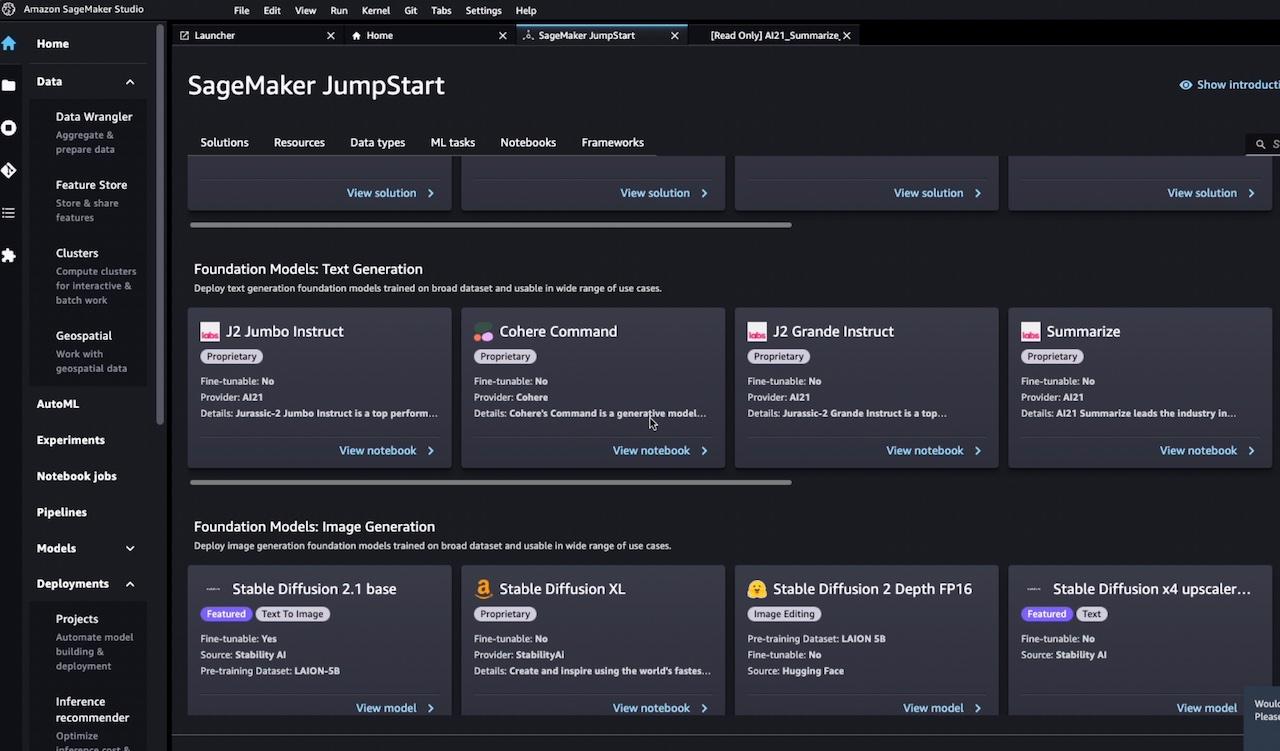

Amazon SageMaker JumpStart is an ML hub with hundreds of built-in algorithms and pre-trained models that help customers accelerate ML model building and deploy easily within SageMaker while keeping their data secure. SageMaker JumpStart also offers a broad set of foundation models, from Amazon's own to proprietary and publicly available models from various providers, including top-scoring models on the Holistic Evaluation of Language Models (HELM) benchmarks.

Since November 2022, SageMaker JumpStart has previewed pre-trained proprietary models, such as the AI21 Jurassic-1 language model, the Cohere language model, and the LightOn Lyra-fr model, as well as publicly available models such as the AlexaTM 20B language model, Bloom language models, Finetuned Language Models (FLAN), and the Stability AI image creation model.

For example, the Stable Diffusion model is used by hundreds of business applications such as DreamStudio reaching millions of end users to create novel designs for their content. If you want to generate your own new artwork, you can simply create an image like the one below by feeding the sentence “Four men riding bicycles in the Swiss Alps, a Renaissance painting, a majestic, breathtaking natural landscape.” to the Stable Diffusion model.

In this blog post, you can learn how to use pre-trained proprietary models in SageMaker JumpStart to generate text, images, or video for your generative AI applications. The walkthrough is based on a preview playground in the AWS console and deployment from SageMaker Studio. You can test these models without writing a single line of code and easily evaluate them on your specific tasks.

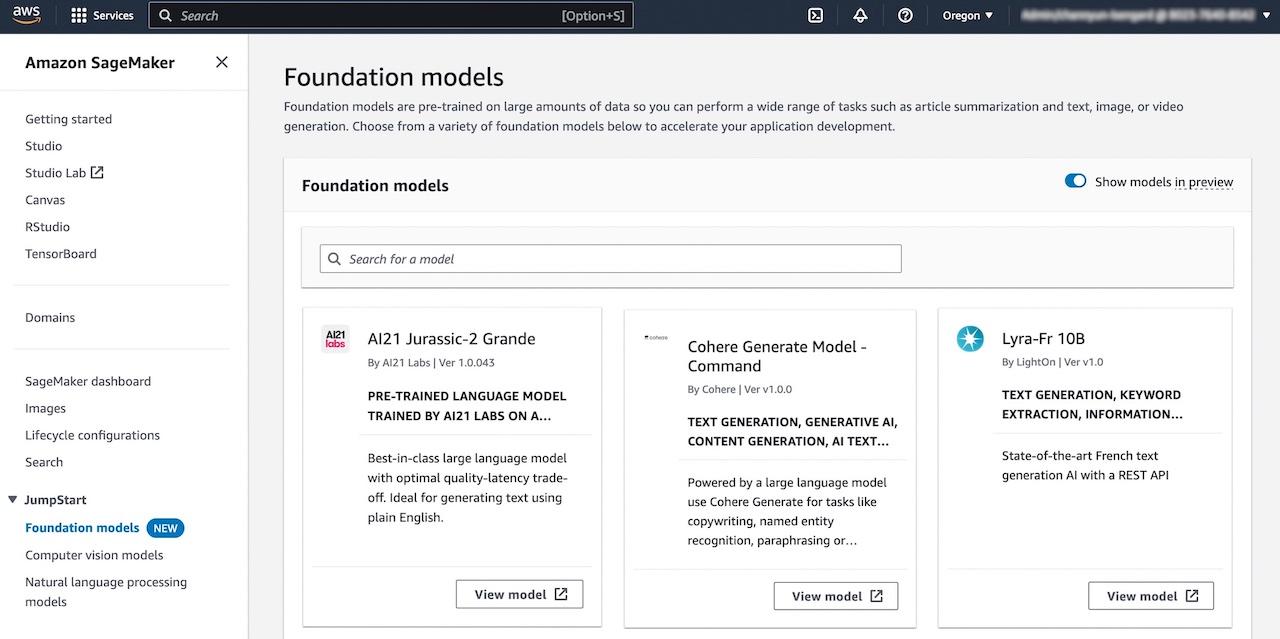

You can try out popular pre-trained foundation models without deploying them. To get started with the latest models that are in preview, or to try out models in a playground, you need to request access. You’ll receive an email once access is ready. If you have preview access, choose Foundation models under JumpStart in the Amazon SageMaker console.

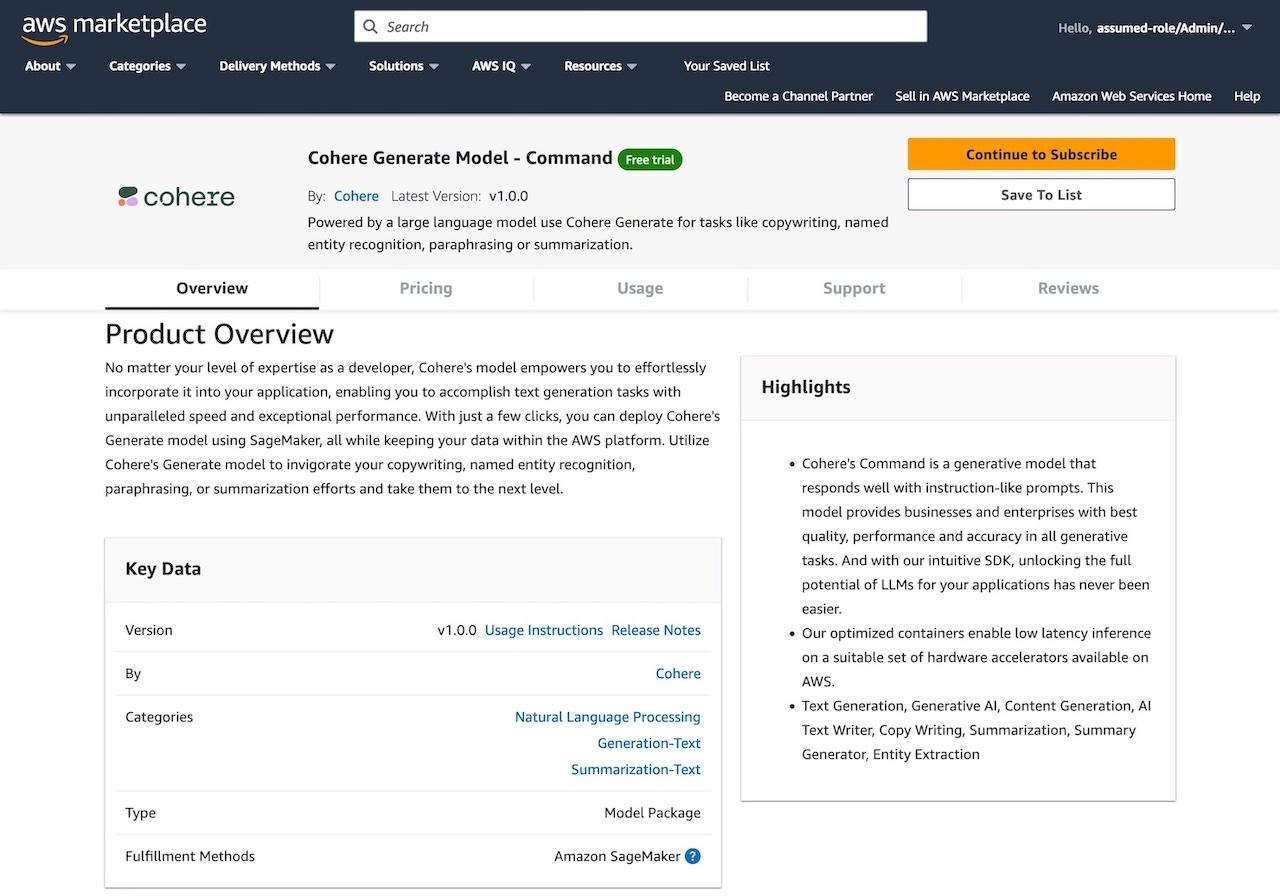

For example, the Cohere Generate Model – Command model helps automate a wide range of tasks such as copywriting, entity recognition, paraphrasing, text summarization, and classification. This enables developers and customers to add linguistic AI to their technology stack and build a variety of applications with it.

If you want to try out this model, choose View model to see the model description popup.

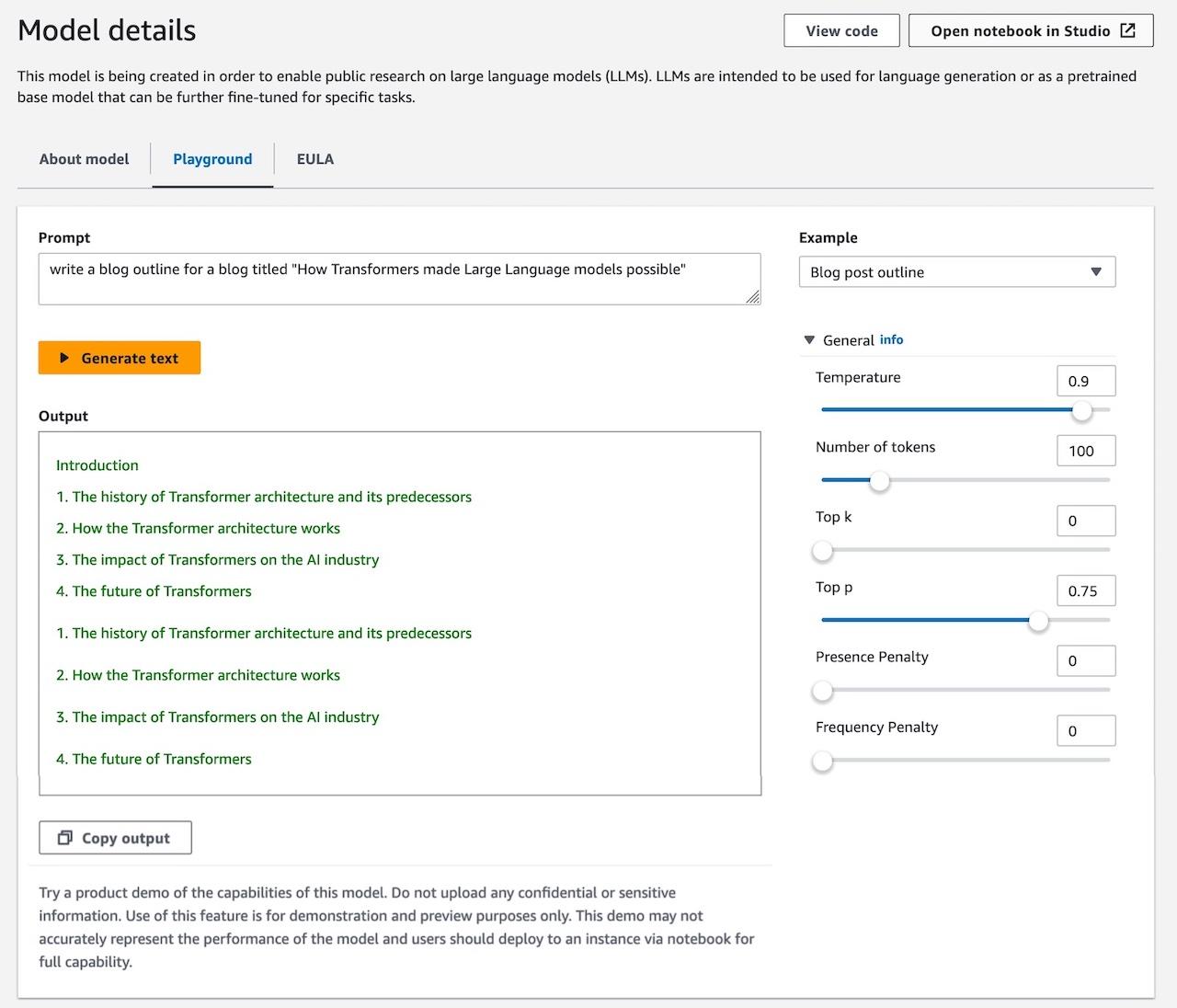

Now let’s test this Cohere model with one of the provided example prompts. Choose Playground, and then in the Example dropdown menu select “Blog post outline”, which asks the model to write an outline for a blog titled “How Transformers made Large Language models possible”.

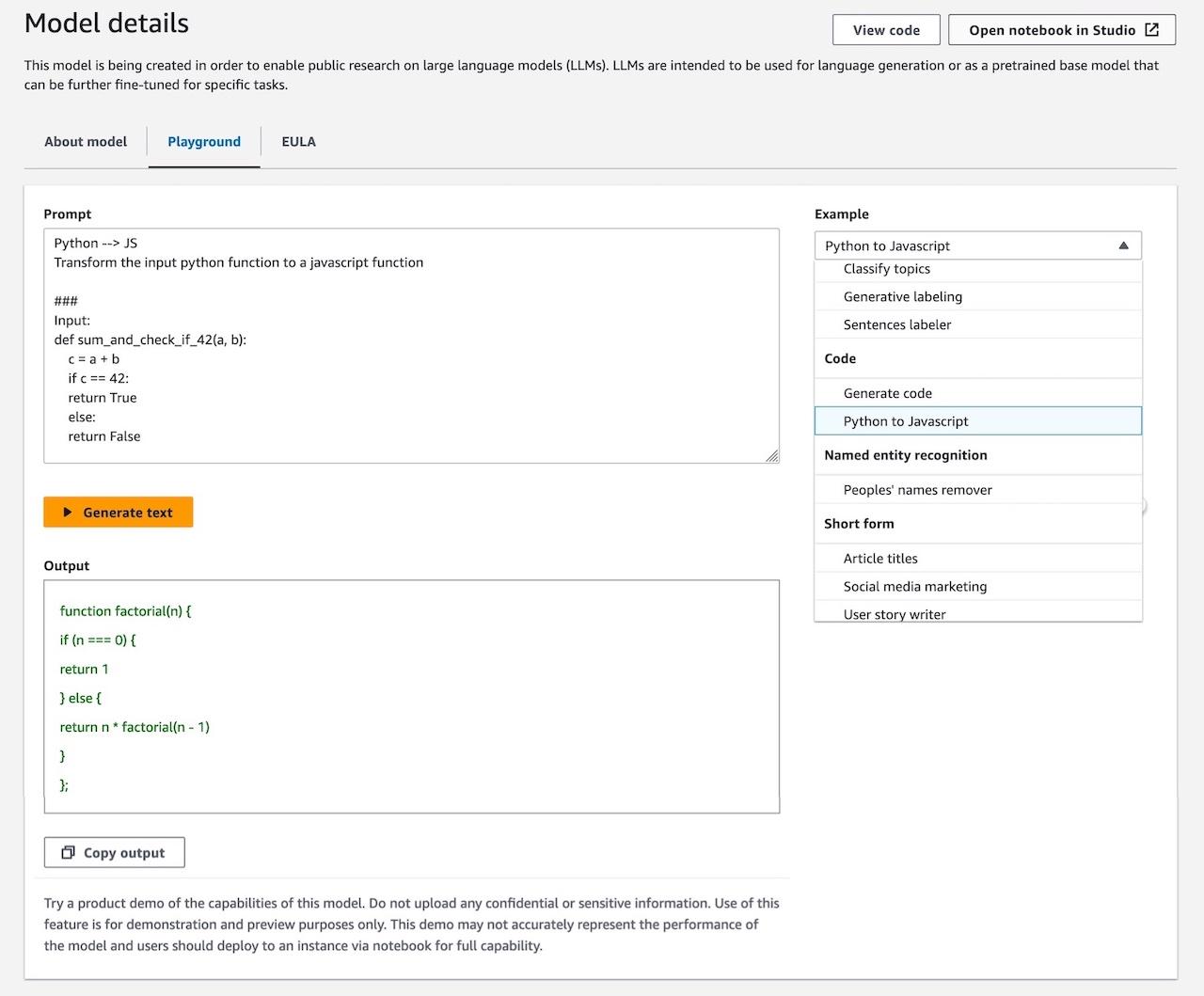

Another popular proprietary model, AI21 Labs’ Jurassic-2 Grande, has fun use cases such as generating simple programming code, classifying news articles by topic, labeling people’s names, and suggesting tweet copy for social media marketing.

You can deploy foundation models with only one click in the Amazon SageMaker Studio interface, or with very few lines of code through the SageMaker Python SDK.

You can use more proprietary foundation models for your purpose: zero-shot classification models such as AI21 Jurassic-2 language models (Jumbo Instruct, Grande Instruct), Cohere Command, and LightOn Mini, and task-specific models such as AI21 Summarize and Paraphrasing.

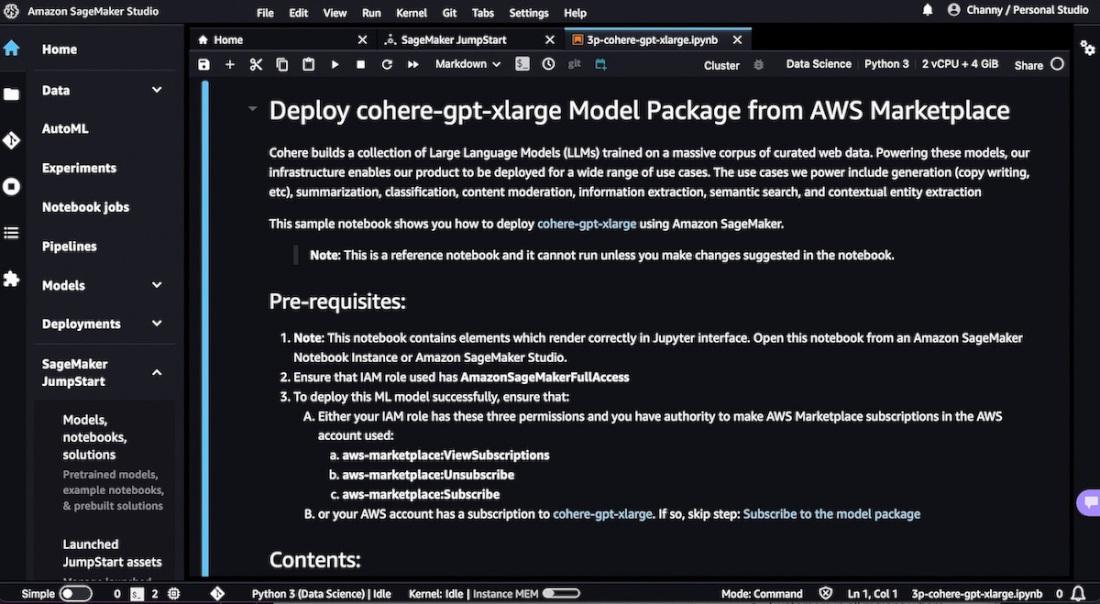

Now, let’s look at how to deploy Cohere’s Generate Model – Command model.

Following the step-by-step guide in the notebook, subscribe to Cohere’s language model package in AWS Marketplace so that you can deploy it, and use your own data to customize models to your specific needs.

Once you choose the Continue to configuration button and then choose a Region, you will see a Product Arn displayed. This is the model package ARN that you need to specify while creating a deployable model using Boto3. Copy the ARN corresponding to your Region and specify it in the model_package_map in the notebook's example code.

```python
import boto3
from cohere_sagemaker import Client  # assumes the cohere-sagemaker package used by the notebook

# Mapping for Model Packages
model_package_map = {
    "us-east-1": "arn:aws:sagemaker:us-east-1:123456789012:model-package/cohere-gpt-medium-v1-5-15e34931a06235b7bac32dca396a970a",
    "eu-west-1": "arn:aws:sagemaker:eu-west-1:123456789012:model-package/cohere-gpt-medium-v1-5-15e34931a06235b7bac32dca396a970a",
}

region = boto3.Session().region_name
if region not in model_package_map:
    raise Exception(f"Current boto3 session region {region} is not supported.")

# Use the ARN that matches the current session's Region
model_package_arn = model_package_map[region]

# Create an endpoint
co = Client(region_name=region)
co.create_endpoint(arn=model_package_arn, endpoint_name="cohere-gpt-medium", instance_type="ml.g5.xlarge", n_instances=1)

# If the endpoint is already created, you just need to connect to it
# co.connect_to_endpoint(endpoint_name="cohere-gpt-medium")

# Perform real-time inference
prompt = """Write a creative product description for a wireless headphone product named the CO-1T, with the keywords "bluetooth", "wireless", "fast charging" for a software developer who works in noisy offices, and describe benefits of this product."""
response = co.generate(prompt=prompt, max_tokens=100, temperature=0.9)
print(response.generations[0].text)
```

When you’ve finished working, clean up your resources: delete the endpoint and shut down the SageMaker Studio instances to avoid incurring additional costs.
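The text above mentions creating a deployable model from the model package ARN using Boto3 and deleting the endpoint afterward. The following is a minimal sketch of that lifecycle, not the notebook's own code. The helper names `deploy_model_package` and `delete_endpoint_resources` are hypothetical, and the sketch assumes that `sm` is a Boto3 SageMaker client and that your execution role and subscription are already in place. It also assumes the package requires network isolation, which is typical for AWS Marketplace model packages.

```python
def deploy_model_package(sm, model_package_arn, role_arn, name,
                         instance_type="ml.g5.xlarge", instance_count=1):
    """Create a model, an endpoint config, and an endpoint that share one name."""
    # A model built from a subscribed package references the package ARN
    # instead of a container image.
    sm.create_model(
        ModelName=name,
        ExecutionRoleArn=role_arn,
        PrimaryContainer={"ModelPackageName": model_package_arn},
        EnableNetworkIsolation=True,  # assumption: typical for Marketplace packages
    )
    sm.create_endpoint_config(
        EndpointConfigName=name,
        ProductionVariants=[{
            "VariantName": "AllTraffic",
            "ModelName": name,
            "InstanceType": instance_type,
            "InitialInstanceCount": instance_count,
        }],
    )
    sm.create_endpoint(EndpointName=name, EndpointConfigName=name)
    return name


def delete_endpoint_resources(sm, endpoint_name):
    """Delete an endpoint together with its endpoint config and models."""
    # Look up the config and its models first; they cannot be discovered
    # from the endpoint once it has been deleted.
    config_name = sm.describe_endpoint(EndpointName=endpoint_name)["EndpointConfigName"]
    config = sm.describe_endpoint_config(EndpointConfigName=config_name)
    model_names = [v["ModelName"] for v in config["ProductionVariants"]]

    sm.delete_endpoint(EndpointName=endpoint_name)
    sm.delete_endpoint_config(EndpointConfigName=config_name)
    for model_name in model_names:
        sm.delete_model(ModelName=model_name)
    return config_name, model_names


# Example usage (requires AWS credentials; the role ARN is a placeholder):
#   import boto3
#   sm = boto3.client("sagemaker")
#   deploy_model_package(sm, model_package_arn, "<your-execution-role-arn>", "cohere-gpt-medium")
#   delete_endpoint_resources(sm, "cohere-gpt-medium")
```

Passing the client in as a parameter keeps the helpers easy to exercise with a stub before running them against a real account. Endpoint creation is asynchronous, so in practice you would wait for the endpoint to reach the InService status before sending requests.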

For a variety of tutorials, tips, and tricks related to this language model, see Cohere brings language AI to Amazon SageMaker in the AWS Machine Learning Blog. You can also see an example of automated quiz generation for AWS Certification exams using the AI21 Jurassic-2 Jumbo Instruct model.

For more examples of building generative AI applications with SageMaker JumpStart, see the blog articles in the AWS Machine Learning Blog.

You can now access various proprietary foundation models from the Amazon SageMaker console, SageMaker Studio, and the SageMaker SDK by subscribing to them in AWS Marketplace. Because all data is encrypted and does not leave your VPC, you can trust that your data will remain private and confidential. Try these models in the playground and deploy them to SageMaker Studio. You can test these models without writing a single line of code and easily evaluate them on your specific tasks.

You can also choose from a broad range of AWS services that have generative AI built in, all running on the most cost-effective cloud infrastructure for generative AI. To learn more, visit Generative AI on AWS to innovate faster and reinvent your applications.

Channy Yun is a Principal Developer Advocate for AWS and is passionate about helping developers build modern applications on the latest AWS services. A pragmatic developer and blogger at heart, he loves community-driven learning and sharing of technology, which has funneled developers to global AWS Usergroups. His main topics are open source, containers, storage, network & security, and IoT. Follow him on Twitter at @channyun.

Any opinions in this post are those of the individual author and may not reflect the opinions of AWS.