Deploying Stable Diffusion on m7i.4xlarge and Accelerating It with OpenVINO

How to deploy Stable Diffusion on EC2

Published Feb 12, 2024

Hi, I’m Beny, and this is my first article in the AWS Community. In this article you will learn how to deploy Stable Diffusion on an EC2 m7i.4xlarge instance (4th Gen Intel® Xeon®) and how to use OpenVINO to speed up AI inference.

Stable Diffusion is a text-to-image model released in 2022 and based on diffusion techniques. Its core function is generating detailed images from text prompts, and it can also be used for tasks such as inpainting, outpainting, and text-guided image-to-image translation.

Stable Diffusion employs a specific diffusion model (DM) known as a latent diffusion model (LDM), pioneered by the CompVis group at LMU Munich.

Stable Diffusion represents a significant advance in text-to-image generation: it is broadly accessible and demands considerably fewer computational resources than other models in the field. Its uses include text-to-image generation, image-to-image transformation, graphic artwork creation, image editing, and video production.

In text-to-image generation, Stable Diffusion is widely employed. It constructs images based on textual inputs, allowing for diverse image outcomes through adjustments to the random seed number or manipulation of the denoising schedule for varied effects.

Furthermore, in image-to-image generation, Stable Diffusion facilitates the creation of images using both input images and textual prompts. A typical application involves generating images from sketches paired with relevant prompts.

The OpenVINO™ toolkit, an open-source platform, enhances AI inference by minimizing latency and maximizing throughput without compromising accuracy. It achieves this by reducing model size, optimizing hardware utilization, and facilitating streamlined development and integration of deep learning across various domains such as computer vision, large language models, and generative AI.

I’m using m7i.4xlarge because it runs on 4th Gen Intel® Xeon® processors and offers up to 15% better price performance than m6i.4xlarge.

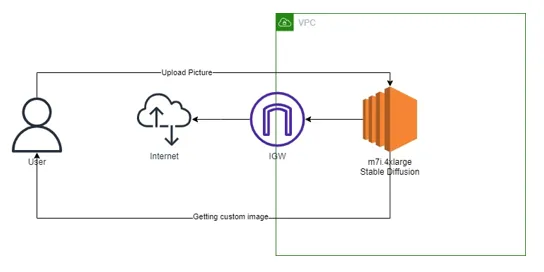

Architecture

Pre-requisites

1. Anaconda

2. Python 3.10.6

3. Torch 2.2.0

4. Torchvision

5. Xformers

6. Torchaudio

7. EC2 m7i.4xlarge
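Before starting the installation, it can help to confirm what is already present in the active environment. The following is a minimal sketch, not part of the original setup: the helper names `installed_versions` and `report` are made up for illustration, and the package names are assumed to match the PyPI distributions of the items listed above. It reads installed metadata only, so it does not need to import torch.

```python
import sys
from importlib import metadata

# Versions taken from the prerequisites list; None means no version was pinned there.
EXPECTED = {
    "torch": "2.2.0",
    "torchvision": None,
    "torchaudio": None,
    "xformers": None,
}


def installed_versions(packages):
    """Return {package: installed version, or None if it is not installed}."""
    found = {}
    for name in packages:
        try:
            found[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            found[name] = None
    return found


def report(expected=EXPECTED):
    """Build one status line per prerequisite without importing the packages."""
    py = ".".join(str(part) for part in sys.version_info[:3])
    lines = [f"python {py} (article uses 3.10.6)"]
    for name, version in installed_versions(expected).items():
        wanted = expected[name]
        if version is None:
            status = "MISSING"
        elif wanted is not None and not version.startswith(wanted):
            status = f"{version} (article uses {wanted})"
        else:
            status = version
        lines.append(f"{name}: {status}")
    return lines


if __name__ == "__main__":
    print("\n".join(report()))
```

Run it inside the Anaconda environment you plan to use for the WebUI; any line marked MISSING points at a prerequisite that still has to be installed.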

Installation Process

# Create a Python environment with version 3.10.6

# Download Stable Diffusion

# Store it in a variable

# Download the model

# Install the Linux packages

# Install the Python modules

# Change the import in the degradation code from

from torchvision.transforms.functional_tensor import rgb_to_grayscale

to

from torchvision.transforms.functional import rgb_to_grayscale
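The import change above can also be applied with a small script instead of a manual edit. This is a minimal sketch under stated assumptions: `patch_import` is a hypothetical helper, and the path to the degradation file is not given in this article, so you have to locate it in your own environment and pass it in. The change is needed because newer torchvision releases no longer ship the `functional_tensor` module.

```python
from pathlib import Path

OLD_IMPORT = "from torchvision.transforms.functional_tensor import rgb_to_grayscale"
NEW_IMPORT = "from torchvision.transforms.functional import rgb_to_grayscale"


def patch_import(path):
    """Replace the old import in the file at `path`; return True if the file changed."""
    file = Path(path)
    text = file.read_text(encoding="utf-8")
    if OLD_IMPORT not in text:
        return False  # already patched, or not the file we are looking for
    file.write_text(text.replace(OLD_IMPORT, NEW_IMPORT), encoding="utf-8")
    return True


if __name__ == "__main__":
    import sys

    # Hypothetical usage: python patch_import.py /path/to/your/degradation/file.py
    for target in sys.argv[1:]:
        print(target, "patched" if patch_import(target) else "unchanged")
```

The function is safe to run twice: on the second run the old import is no longer present, so the file is left untouched.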

# Launch the WebUI

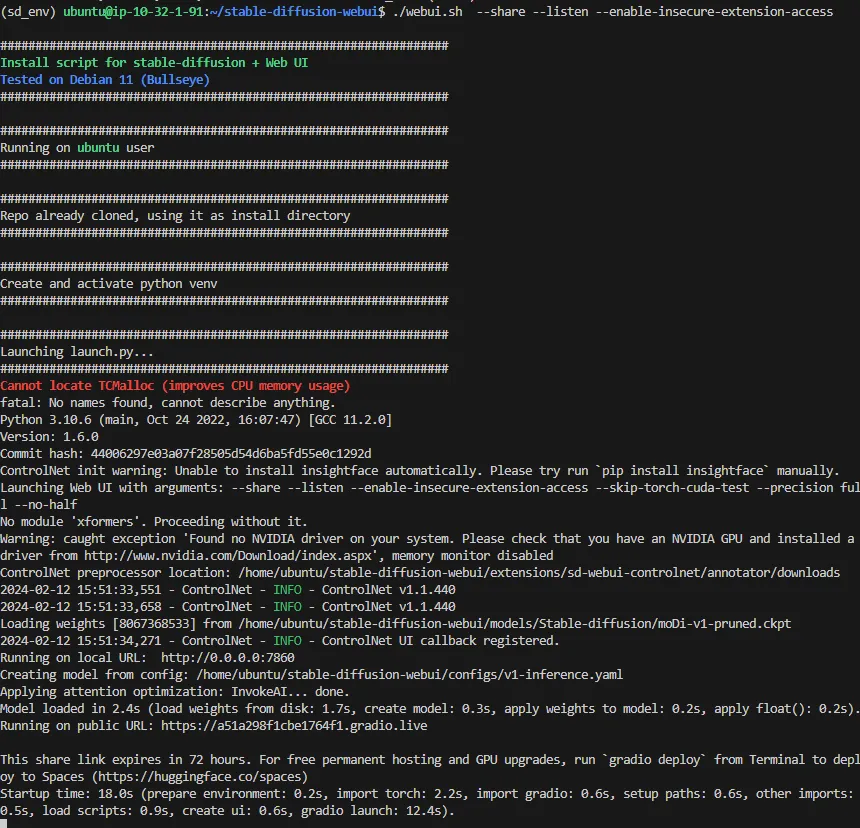

Web UI Console Output

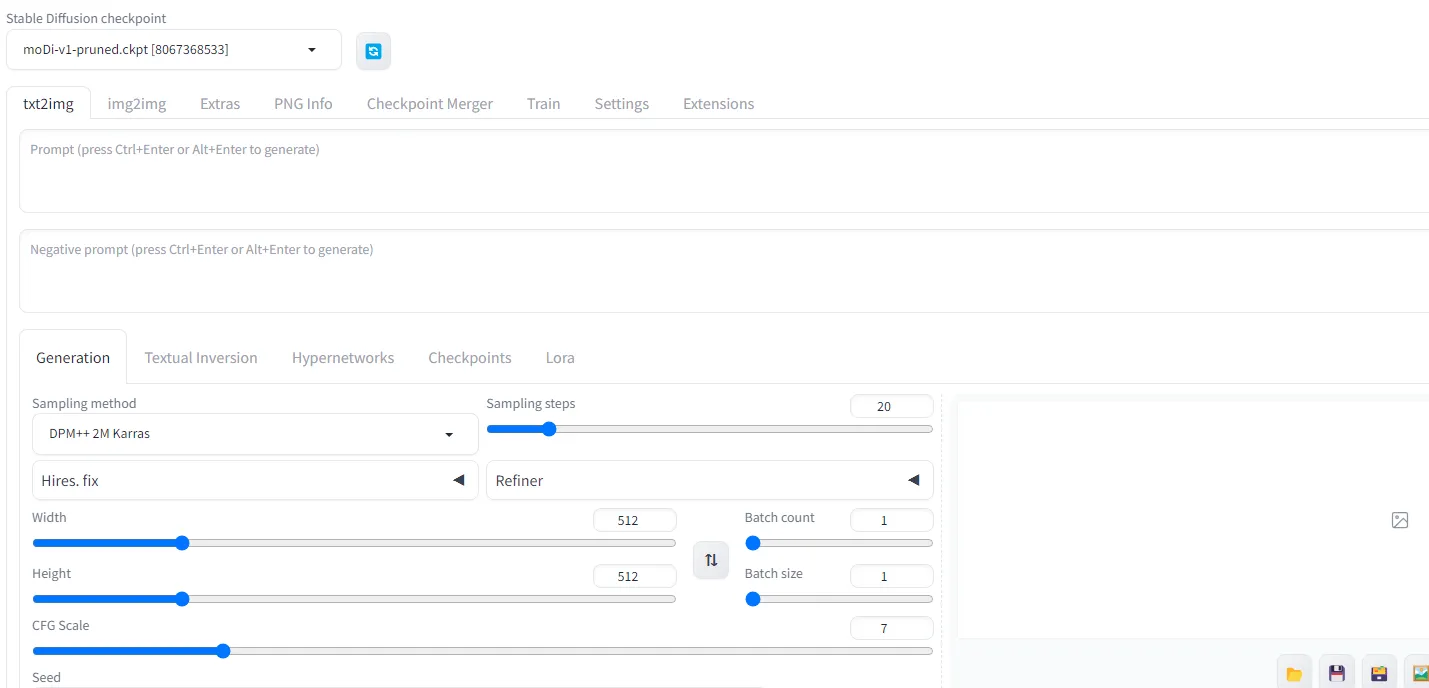

WebUI in the browser

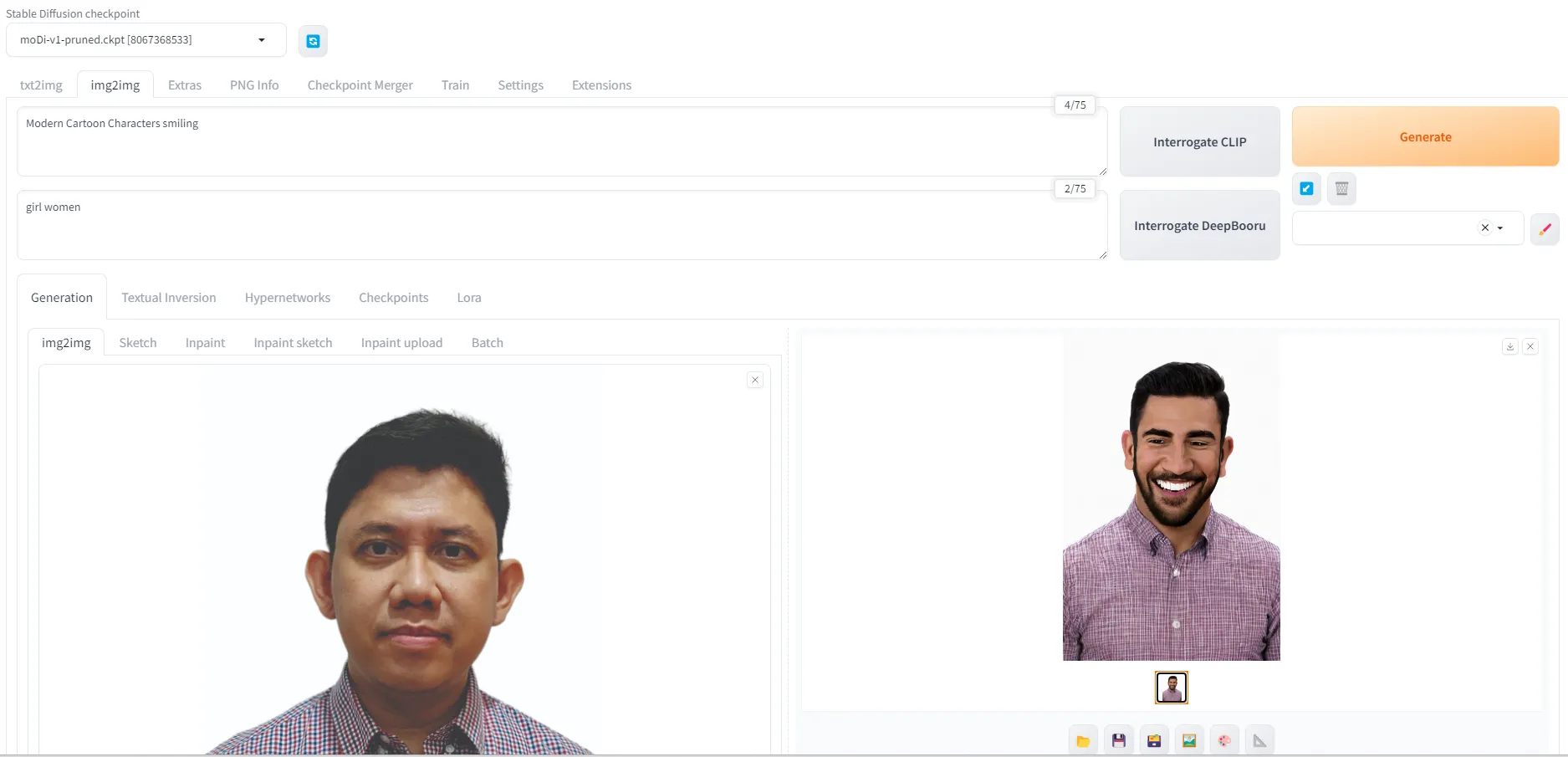

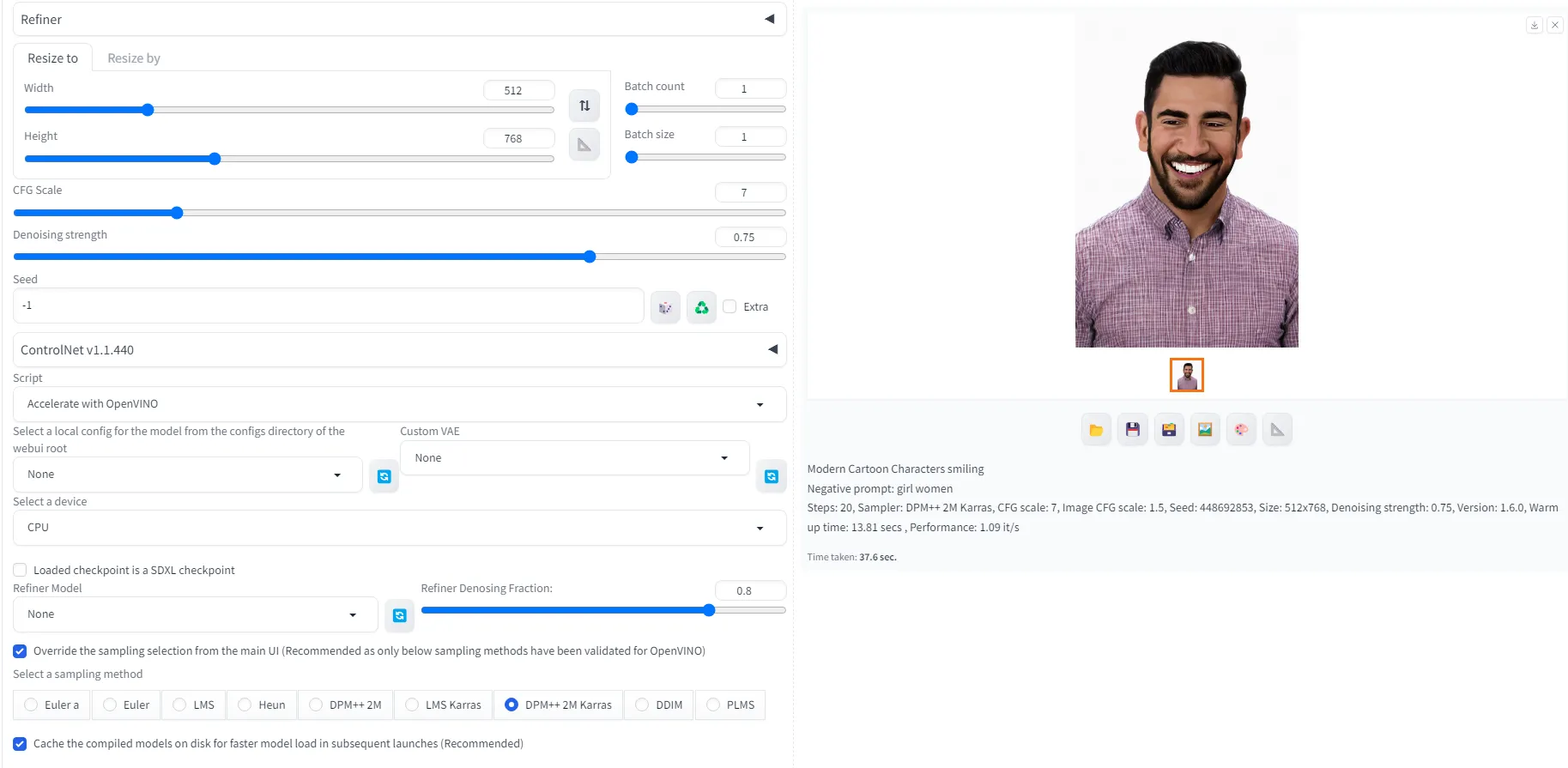

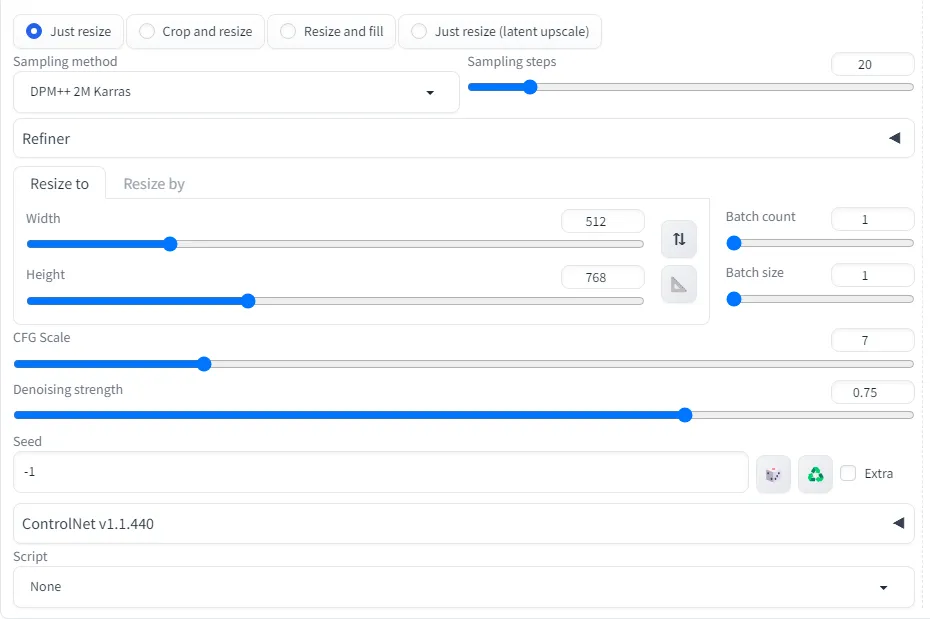

Next, we load our image into Stable Diffusion:

- Choose img2img

- Prompt = Modern Cartoon Characters smiling

- Negative prompt = girl women

- Sampling method = DPM++ 2M Karras

- Script = Accelerate with OpenVINO, with the sampling method override also set to DPM++ 2M Karras
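The same settings can be written down as a request body if you prefer to drive the WebUI programmatically rather than through the browser. This is only a sketch under assumptions that go beyond this article: it assumes the WebUI was launched with its HTTP API enabled and that the img2img endpoint accepts the usual field names shown here, and `build_img2img_payload` is a made-up helper. Selecting the OpenVINO script through the API is not covered, and the sampler name is spelled as it appears in the generation parameters. Steps, CFG scale, denoising strength, and size are taken from the generation parameters reported in this article.

```python
import base64
import json


def build_img2img_payload(image_bytes):
    """Assemble a request body that mirrors the settings used in this article."""
    return {
        # The input image is sent as a base64-encoded string.
        "init_images": [base64.b64encode(image_bytes).decode("ascii")],
        "prompt": "Modern Cartoon Characters smiling",
        "negative_prompt": "girl women",
        "sampler_name": "DPM++ 2M Karras",
        "steps": 20,
        "cfg_scale": 7,
        "denoising_strength": 0.75,
        "width": 512,
        "height": 768,
    }


if __name__ == "__main__":
    # Placeholder bytes stand in for a real image file read with open(path, "rb").
    payload = build_img2img_payload(b"placeholder image bytes")
    print(json.dumps(payload, indent=2))
```

Keeping the settings in one dictionary makes it easy to rerun the same generation with only the seed or the prompt changed.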

With OpenVINO script acceleration enabled, generating the image takes 37.6 seconds.

* Performance varies depending on the environment and instance family.

Steps: 20, Sampler: DPM++ 2M Karras, CFG scale: 7, Image CFG scale: 1.5, Seed: 448692853, Size: 512x768, Denoising strength: 0.75, Version: 1.6.0, Warm up time: 13.81 secs, Performance: 1.09 it/s

Time taken: 37.6 sec.

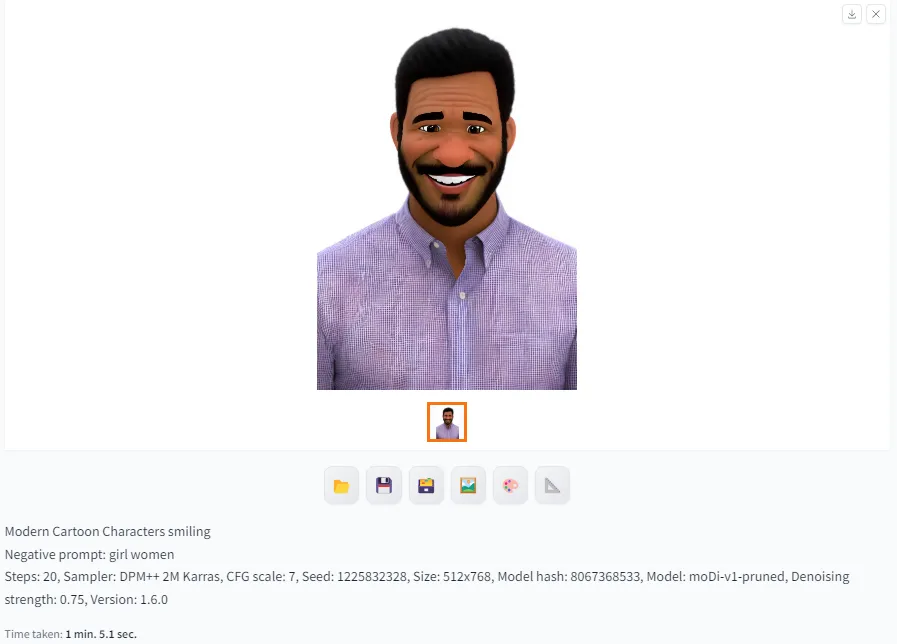

With OpenVINO script acceleration disabled, generating the image takes 1 min. 5.1 sec.

Steps: 20, Sampler: DPM++ 2M Karras, CFG scale: 7, Seed: 1225832328, Size: 512x768, Model hash: 8067368533, Model: moDi-v1-pruned, Denoising strength: 0.75, Version: 1.6.0

Time taken: 1 min. 5.1 sec.
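To put the two timings side by side, the short calculation below converts both to seconds and derives the speedup. It is a rough comparison only: the two runs above used different seeds, and results vary with the environment and instance family. The helper `to_seconds` is introduced here for illustration.

```python
def to_seconds(minutes, seconds):
    """Convert a 'min, sec' timing into seconds."""
    return minutes * 60 + seconds


with_openvino = to_seconds(0, 37.6)     # OpenVINO acceleration enabled
without_openvino = to_seconds(1, 5.1)   # OpenVINO acceleration disabled

speedup = without_openvino / with_openvino
time_saved_pct = (without_openvino - with_openvino) / without_openvino * 100

print(f"speedup: {speedup:.2f}x")            # speedup: 1.73x
print(f"time saved: {time_saved_pct:.1f}%")  # time saved: 42.2%
```

In this test, OpenVINO made image generation about 1.7 times faster, cutting the wall-clock time by roughly 42%.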

- We can run generative AI workloads on the M7i, R7i, and C7i instance families, which are powered by 4th Gen Intel® Xeon® CPUs

- Stable Diffusion runs faster with OpenVINO: in this test, image generation time dropped from 1 min. 5.1 sec. to 37.6 sec.

- https://github.com/openvinotoolkit/stable-diffusion-webui/wiki/Installation-on-Intel-Silicon

- https://github.com/openvinotoolkit/stable-diffusion-webui?tab=readme-ov-file

- https://www.intel.com/content/www/us/en/developer/tools/openvino-toolkit/overview.html