Scaling Distributed Systems with Event Sourcing, CQRS, and AWS Serverless

An introduction to event sourcing, CQRS, and the benefits of using AWS serverless services to implement these architecture patterns.

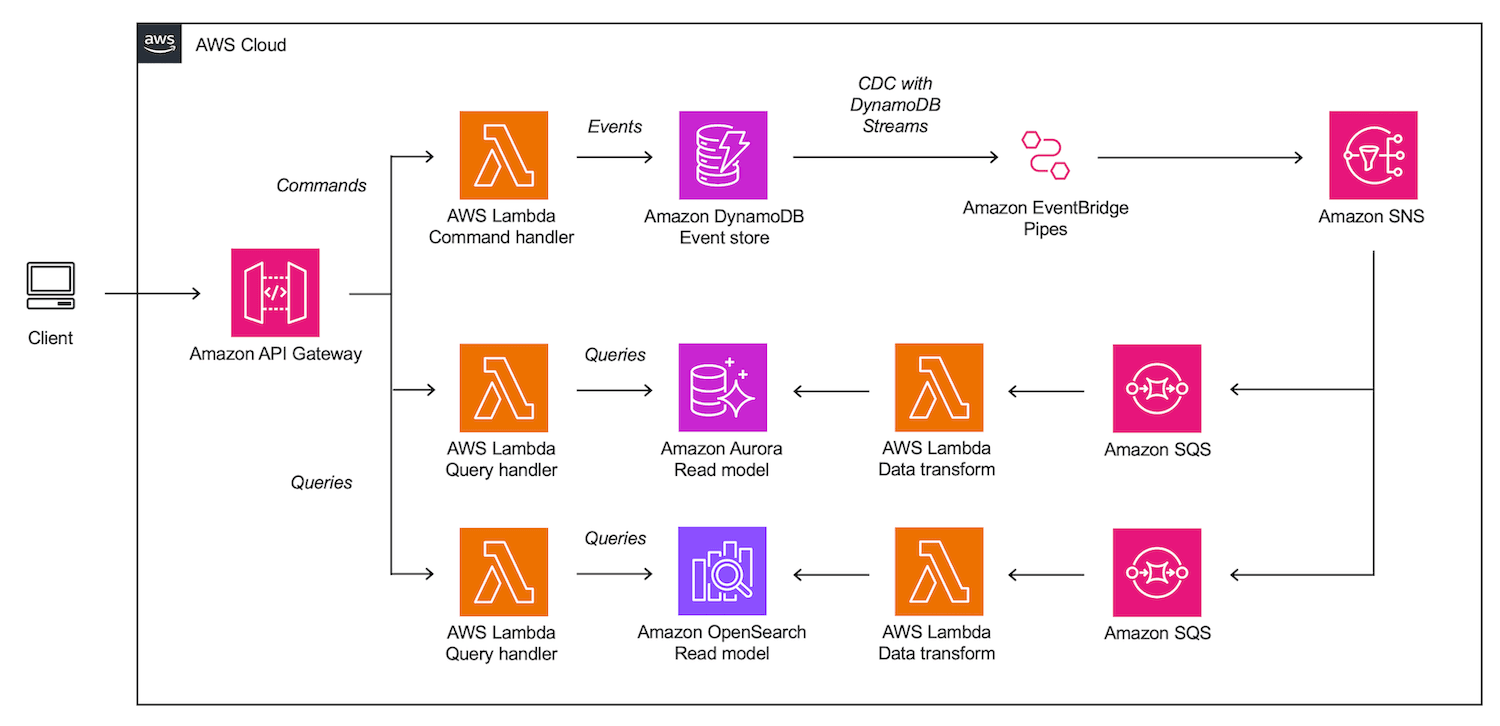

ProductCreated would be such an event. Because events are stored in an append-only log, they cannot be modified or deleted. Instead, if you want to undo the effect of an event, you must create a new one that offsets the previous event.

To show a list of products on a website, for example, the system must replay all ProductCreated events, as well as related ProductDeleted events, to arrive at the current list of products. With many of these events in the event store, this becomes a computationally intensive operation to perform each time this website view is requested by a client.

An example of a command is CreateProduct. Commands are derived from the business logic of the system and set business processes in motion. Each command either produces an event, throws an error, is rejected, or has no effect. Commands are triggered by a client, such as the user interface of an application.

Queries, such as GetProducts, interact only with a read data store and only return data without causing any side effects. On the other hand, commands that change the state of the system interact with a different write-optimized data store. By separating these concerns, you can optimize each type of operation independently, allowing for greater flexibility and scalability.

- Amazon API Gateway serves as a highly scalable and managed entry point to the system, routing commands and queries to their respective handlers.

- AWS Lambda, a serverless, event-driven compute service, is used to implement command and query handlers that perform tasks such as validating data, triggering business logic, and storing events in the event store.

- Amazon DynamoDB is used as the event store. DynamoDB is a fully managed, serverless, key-value NoSQL database. The flexible schema of NoSQL databases makes them well suited for storing the events with widely varying properties that exist in complex systems. Because DynamoDB does not need to optimize for complex joins between tables or relations, it is highly scalable for writes.

- DynamoDB Streams is a feature of DynamoDB that provides a near real-time flow of information about changes to items in a DynamoDB table that can be accessed by applications. The combination of DynamoDB and DynamoDB Streams is well suited for implementing a serverless event store. For more detailed guidance, see also the AWS blog post Build a CQRS event store with Amazon DynamoDB.

- A Lambda function is triggered by events on the DynamoDB stream. The function performs the necessary data transformations and updates the current system state in the read model. Lambda event source mappings can be used to automatically poll new events from the DynamoDB stream in batches for processing.

- In this sample architecture, the read model is implemented using an Amazon Aurora Serverless database.

- Instead of being pushed directly to read models, the data is published to an Amazon Simple Notification Service (Amazon SNS) topic via Amazon EventBridge Pipes, which provides serverless point-to-point integrations between event producers and consumers. The SNS topic can then fan out the transformed data to any number of read models. Learn more about decoupling event publishing with EventBridge Pipes in this blog post.

- Each read model has its own Amazon Simple Queue Service (Amazon SQS) queue. This queue subscribes to the SNS topic and receives change data on new events. SNS message filtering can be applied so that a queue receives only a subset of messages from the event store. This architectural pattern is known as topic queue chaining.

- For each read model, an AWS Lambda function polls the model's SQS queue and updates the current state of that read model. The function applies necessary data transformations. Lambda event source mappings can simplify polling the SQS queue.

- Amazon OpenSearch Service is added as a second sample read model.
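
To make the interplay of commands, events, and read models more concrete, here is a minimal in-memory sketch in Python. It is not the implementation behind the architecture above: the `EventStore` class stands in for the DynamoDB event store, a plain dictionary stands in for a read model, and the projection runs synchronously where the real system would update read models asynchronously from the stream. The event fields, the `delete_product` command, and all function names are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass(frozen=True)
class Event:
    """An immutable fact. Field names are illustrative assumptions."""
    type: str            # "ProductCreated" or "ProductDeleted"
    product_id: str
    name: str = ""


@dataclass
class EventStore:
    """In-memory stand-in for the append-only event store."""
    _log: List[Event] = field(default_factory=list)

    def append(self, event: Event) -> None:
        self._log.append(event)   # events are only ever appended

    def all_events(self) -> List[Event]:
        return list(self._log)    # return a copy so the log stays untouched


class CommandRejected(Exception):
    """Raised when a command fails validation and produces no event."""


def project(read_model: Dict[str, str], event: Event) -> None:
    """Projection step: fold a single event into the read model."""
    if event.type == "ProductCreated":
        read_model[event.product_id] = event.name
    elif event.type == "ProductDeleted":
        read_model.pop(event.product_id, None)


def create_product(store: EventStore, product_id: str, name: str) -> Event:
    """CreateProduct command handler: validate, then record an event."""
    if not name:
        raise CommandRejected("product name must not be empty")
    event = Event("ProductCreated", product_id, name)
    store.append(event)
    return event


def delete_product(store: EventStore, product_id: str) -> Event:
    """Hypothetical command: offsets an earlier ProductCreated event."""
    event = Event("ProductDeleted", product_id)
    store.append(event)
    return event


def replay(events: List[Event]) -> Dict[str, str]:
    """Rebuild a read model from scratch by replaying every event."""
    read_model: Dict[str, str] = {}
    for event in events:
        project(read_model, event)
    return read_model


def get_products(read_model: Dict[str, str]) -> List[str]:
    """GetProducts query: reads the read model only, no side effects."""
    return sorted(read_model.values())


# Usage: commands write events, the projection keeps the read model current.
store = EventStore()
read_model: Dict[str, str] = {}
project(read_model, create_product(store, "p1", "Laptop"))
project(read_model, create_product(store, "p2", "Phone"))
project(read_model, delete_product(store, "p2"))
print(get_products(read_model))  # ['Laptop']
```

The query never touches the event log, which is the point of the separation: `replay` is only needed to build a new read model or rebuild an existing one, while `project` keeps each read model up to date one event at a time.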

Any opinions in this post are those of the individual author and may not reflect the opinions of AWS.