Create call center transcript summary using AWS Bedrock and Lambda - Anthropic Haiku

Automate call center transcript summaries using Amazon Bedrock, AWS Lambda, API Gateway, and the Anthropic Claude 3 Haiku foundation model.

Published Apr 14, 2024

Generative AI - Has Generative AI captured your imagination as much as it has mine?

Generative AI is indeed fascinating! The advancements in foundation models have opened up incredible possibilities. Who would have imagined that technology would evolve to the point where you can generate content summaries from transcripts, have chatbots that can answer questions on any subject without requiring any coding on your part, or even create custom images based solely on your imagination by simply providing a prompt to a Generative AI service and foundation model? It's truly remarkable to witness the power and potential of Generative AI unfold.

Amazon Bedrock is a fully managed service that provides access to foundation models from several popular providers, including Anthropic, AI21 Labs, Meta, Cohere, and Stability AI, as well as Amazon's own Titan foundation models.

Recently, Amazon announced support for the latest Anthropic model - Anthropic Claude 3 Haiku, the fastest model that allows the creation of near-instant generative AI applications. By integrating with Amazon Bedrock, it provides a powerful combination for building generative AI applications with little effort, enabling enterprises to serve business needs and customers with speed-to-market and faster software delivery in an agile manner.

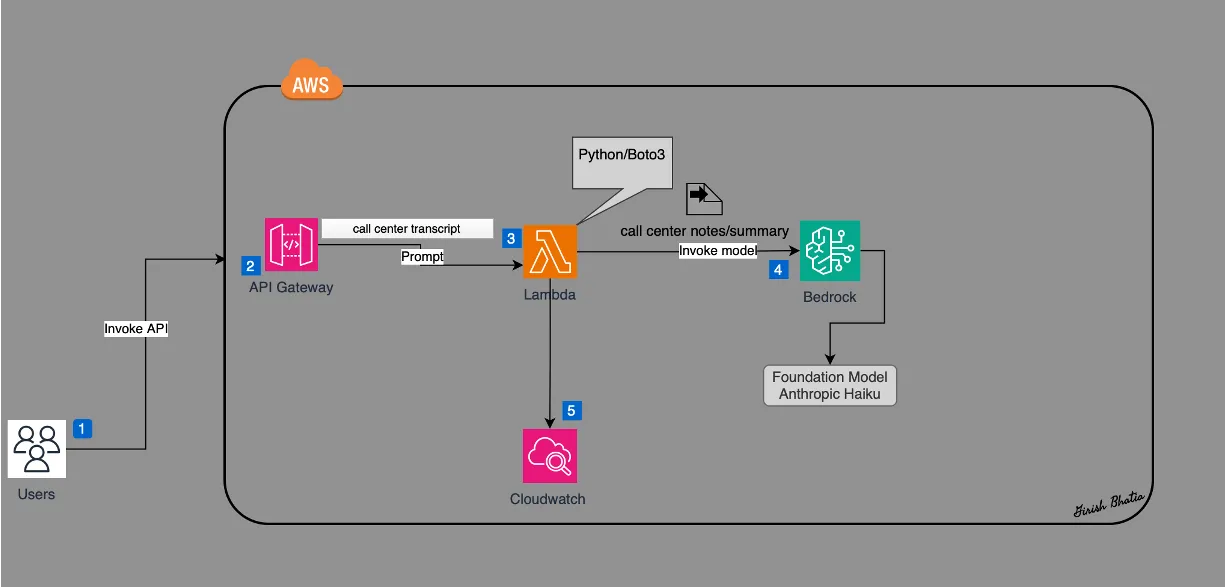

Let's consider an example of how to create a REST API that integrates with Amazon Bedrock and Anthropic Claude 3 Haiku. This API takes a call center transcript containing interactions between a call center employee and a customer, and then summarizes it into simple text for further analysis and training purposes.

I am going to show the steps to build a serverless Generative AI solution that creates a call center transcript summary via a REST API, Lambda, and the Amazon Bedrock service. I will use the recently released Anthropic Claude 3 Haiku foundation model and invoke it via the Amazon Bedrock API.

Let's review our use cases:

- There is a transcript available for a case resolution and conversation between a customer and a support/call center team member.

- A call summary needs to be created based on this resolution/conversation transcript.

- An automated solution is required to create the call summary.

- An automated solution will provide a repeatable way to create these call summary notes.

- Productivity increases, as team members who usually document these notes can focus on other tasks.

I am generating my Lambda function using AWS SAM; however, a similar function can be created using the AWS Console. I like to use AWS SAM wherever possible, as it gives me the flexibility to test the function locally without first deploying it to the AWS cloud.

Here is the architecture diagram for our use case.

Let's see the steps to create this automated Generative AI solution for call center transcript summaries.

Review Bedrock Service

Amazon Bedrock is a fully managed service that offers a choice of many foundation models, such as Anthropic Claude, AI21 Jurassic-2, Stability AI, Amazon Titan, and others.

Since Bedrock is a serverless offering from Amazon, it lets you integrate with popular FMs and privately customize them with your own data using AWS tools, without having to manage any infrastructure.

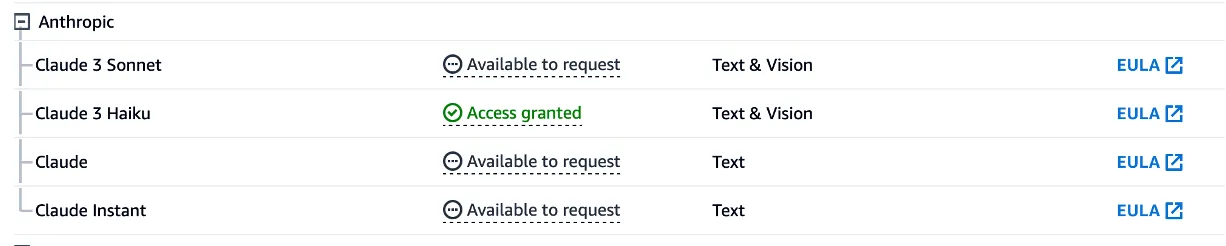

Request Model Access

Before a model can be used, you need to request access to it.

For Amazon models, it typically takes a few minutes to get access.

For Anthropic Haiku, it may take a few hours to get access.

Temperature, Top P, Max Token Count

Let's review a few key terms before we use the parameters for the model.

- Temperature - Controls the randomness of generation. A value closer to 0 makes the output less random (more deterministic); a value closer to 1 makes it more random.

- Top P - Limits sampling to the most probable tokens. With a value below 1, the model samples only from the smallest set of tokens whose cumulative probability reaches that value, so lower values give less diverse output.

- Max Token Count - The maximum number of tokens to generate. Responses are not guaranteed to fill up to the maximum length. Tokens are a factor in the overall cost, so it is worthwhile to pay attention to the maximum number of tokens you allow.

Among a few others, these are some key parameters that need to be passed when invoking the Amazon Bedrock API along with the prompt to get the desired response.
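As a rough illustration of how these parameters travel with the prompt, here is a minimal Python sketch that builds a request body in the Anthropic Messages format used for Claude 3 models on Bedrock. The function name `build_request_body` and the default values are my own assumptions for this example, not settings taken from the solution above.

```python
import json


def build_request_body(prompt: str, temperature: float = 0.2,
                       top_p: float = 0.9, max_tokens: int = 512) -> str:
    """Return a JSON request body for a Claude 3 model on Amazon Bedrock.

    The defaults are illustrative assumptions; tune them for your use case.
    """
    if not 0.0 <= temperature <= 1.0:
        raise ValueError("temperature must be between 0 and 1")
    if not 0.0 < top_p <= 1.0:
        raise ValueError("top_p must be greater than 0 and at most 1")
    if max_tokens < 1:
        raise ValueError("max_tokens must be a positive integer")

    return json.dumps({
        # Version string required by Bedrock for the Anthropic Messages format.
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,    # upper bound on generated tokens (affects cost)
        "temperature": temperature,  # lower = less random
        "top_p": top_p,              # lower = sample from fewer, more probable tokens
        "messages": [
            {"role": "user", "content": [{"type": "text", "text": prompt}]}
        ],
    })
```

A low temperature is a reasonable starting point for summaries, where consistent output usually matters more than creative variety.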

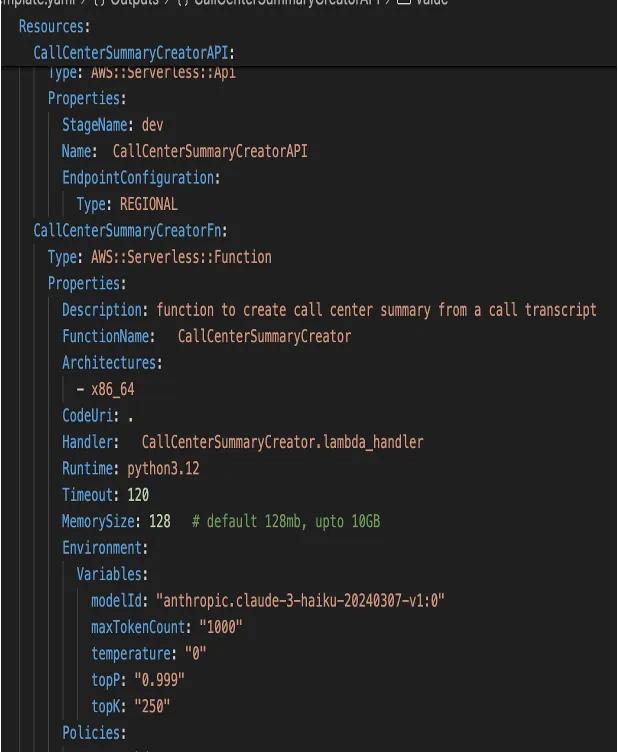

Create a SAM template

I will create a SAM template for the Lambda function that will contain the code to invoke the Bedrock API along with the required parameters and a prompt. The Lambda function can be created without a SAM template; however, I prefer the Infrastructure as Code approach since it allows for easy recreation of cloud resources. Here is the SAM template for the Lambda function.

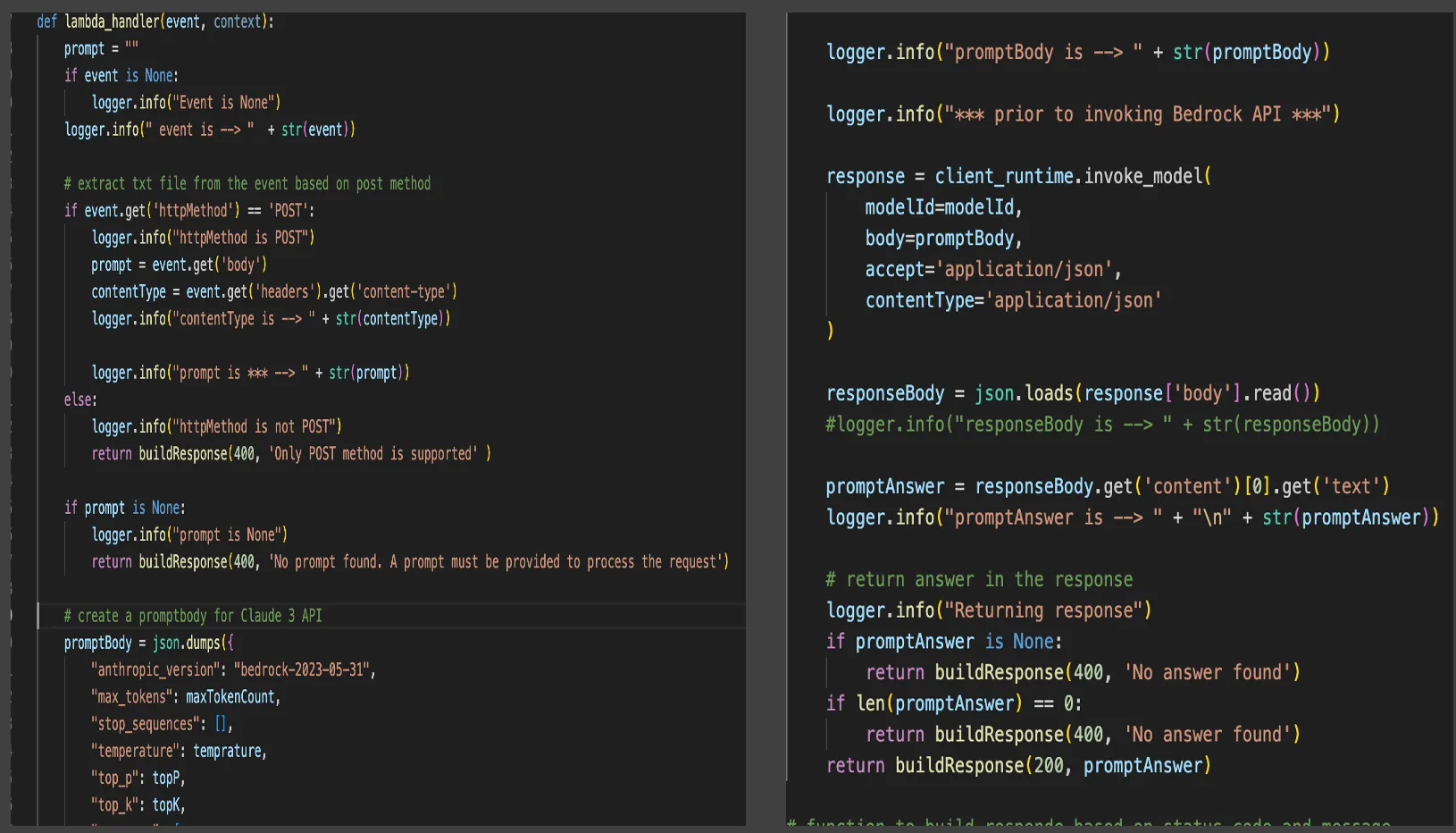

Create a Lambda Function

The Lambda function serves as the core of this automated solution. It contains the code necessary to fulfill the business requirement of creating a summary of the call center transcript using the Amazon Bedrock API. This Lambda function accepts a prompt, which is then forwarded to the Bedrock API to generate a response using the Anthropic Haiku foundation model. Now, let's look at the code behind it.
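A minimal sketch of such a handler might look like the following. It is not the exact function from this solution: the helper names (`get_client`, `summarize`), the parameter values, the response shape, and the assumption that the transcript arrives as plain text in the API Gateway request body are all mine. The `invoke_model` call and the Claude 3 Haiku model ID are the standard Bedrock ones.

```python
import json

MODEL_ID = "anthropic.claude-3-haiku-20240307-v1:0"  # Claude 3 Haiku on Bedrock

_client = None


def get_client():
    """Create the Bedrock runtime client once and reuse it across warm invocations."""
    global _client
    if _client is None:
        import boto3  # bundled with the Lambda Python runtime
        _client = boto3.client("bedrock-runtime")
    return _client


def summarize(transcript: str, client) -> str:
    """Send the transcript to Claude 3 Haiku and return the generated summary text."""
    body = json.dumps({
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": 512,    # assumed limit for a short summary
        "temperature": 0.2,   # assumed; low randomness for consistent summaries
        "messages": [{
            "role": "user",
            "content": [{
                "type": "text",
                "text": "Summarize this call center transcript:\n\n" + transcript,
            }],
        }],
    })
    response = client.invoke_model(
        modelId=MODEL_ID,
        body=body,
        contentType="application/json",
        accept="application/json",
    )
    payload = json.loads(response["body"].read())
    return payload["content"][0]["text"]


def lambda_handler(event, context, client=None):
    """API Gateway proxy handler; `client` is injectable to ease local testing."""
    transcript = (event.get("body") or "").strip()
    if not transcript:
        return {"statusCode": 400,
                "body": json.dumps({"error": "empty transcript"})}
    summary = summarize(transcript, client or get_client())
    return {"statusCode": 200, "body": json.dumps({"summary": summary})}
```

Keeping the Bedrock call in its own function makes it possible to exercise the handler with a stub client before deploying. The function's execution role also needs permission for `bedrock:InvokeModel`.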

Build function locally using AWS SAM

Next, build and validate the function using AWS SAM before deploying the Lambda function to the AWS cloud. A few of the SAM commands used are:

- sam build

- sam local invoke

- sam deploy

Validate the GenAI Model response using a prompt

Prompt engineering is an essential component of any Generative AI solution. It is both art and science, as crafting an effective prompt is crucial for obtaining the desired response from the foundation model. Often, it requires multiple attempts and adjustments to the prompt to achieve the desired outcome from the Generative AI model.
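To make the idea concrete, here is one possible prompt template for this use case, written as a small Python helper. The wording, the `<transcript>` tags, and the function name `build_prompt` are illustrative assumptions rather than the prompt used in this solution; expect to iterate on the wording.

```python
def build_prompt(transcript: str, max_sentences: int = 3) -> str:
    """Wrap a raw transcript in summarization instructions (illustrative wording)."""
    return (
        "You are assisting a call center team.\n"
        "Summarize the transcript inside the <transcript> tags in at most "
        f"{max_sentences} sentences. State the customer's issue, the action "
        "taken by the representative, and the outcome.\n\n"
        f"<transcript>\n{transcript.strip()}\n</transcript>"
    )
```

Separating the instructions from the transcript with explicit tags makes it easier to adjust one without touching the other between attempts.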

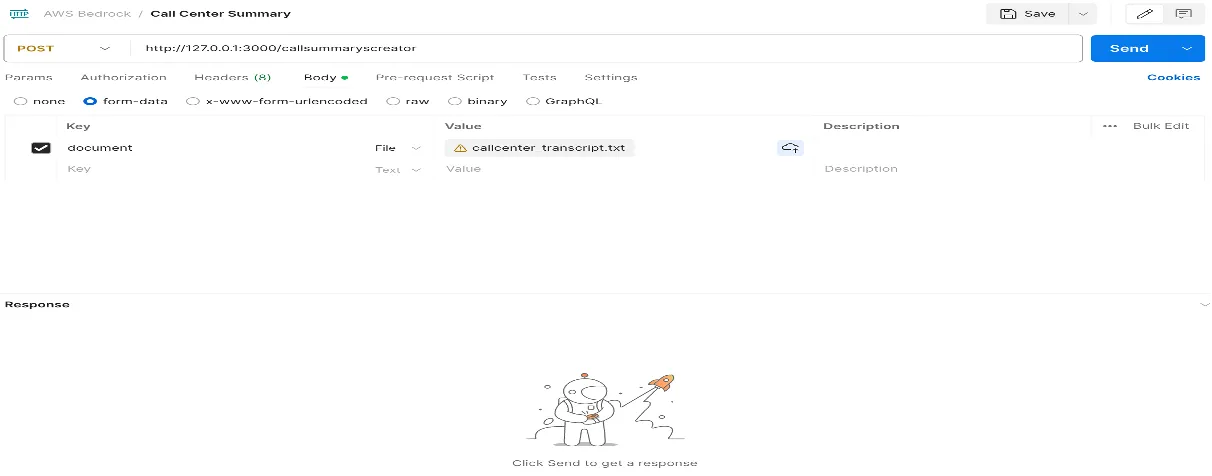

Given that I'm deploying the solution behind Amazon API Gateway, I'll have an API endpoint post-deployment. I plan to use Postman to pass the prompt in the request and review the response. Additionally, I can opt to write the response to an Amazon S3 bucket for later review.
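If you choose the S3 option, a sketch along these lines could store each summary as a small JSON object. The bucket name, the key layout, the `call_id` identifier, and the helper name `save_summary` are hypothetical; `put_object` is the standard boto3 S3 client call.

```python
import json
from datetime import datetime, timezone


def save_summary(s3_client, bucket: str, summary: str, call_id: str) -> str:
    """Write a summary to S3 as JSON and return the object key.

    `s3_client` is expected to be a boto3 S3 client, e.g. boto3.client("s3").
    """
    key = f"summaries/{call_id}.json"  # hypothetical key layout
    record = {
        "call_id": call_id,
        "summary": summary,
        "created_at": datetime.now(timezone.utc).isoformat(),
    }
    s3_client.put_object(
        Bucket=bucket,
        Key=key,
        Body=json.dumps(record).encode("utf-8"),
        ContentType="application/json",
    )
    return key
```

The Lambda execution role would need `s3:PutObject` permission on the chosen bucket for this to work.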

I am using Postman to pass the transcript file as the prompt.

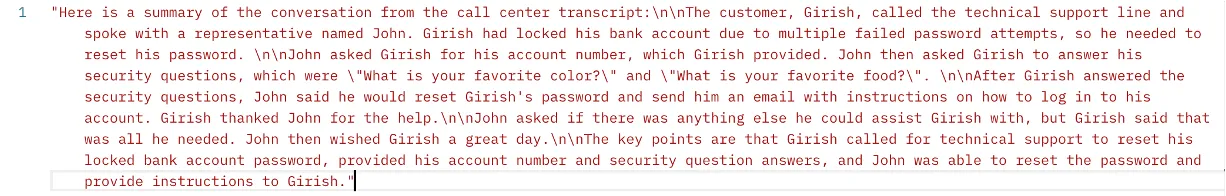

This transcript file contains a conversation between a call center employee (John) and a customer (Girish) about a request to reset a password for a locked account.

- John: Hello, thank you for calling technical support. My name is John and I will be your technical support representative. Can I have your account number, please?

- Girish: Yes, my account number is {ACCOUNT_NUMBER-1}.

- John: Thank you. I see that you have locked your account due to multiple failed attempts to enter your password. To reset your password, I will need to ask you a few security questions. Can you please provide me with the answers to your security questions?

- Girish: Sure, my security questions are: What is your favorite color? and What is your favorite food?

- John: Great, thank you. I will now reset your password and send you an email with instructions on how to log in to your account. Please check your email in a few minutes.

- Girish: Thank you so much for your help.

- John: You're welcome. Is there anything else I can assist you with today?

- Girish: No, that's all for now. Thank you again for your help.

- John: You're welcome. Have a great day!
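If you prefer a script to Postman, the same request can be sent with Python's standard library. This is a sketch under assumptions: the endpoint URL is a placeholder for whatever API Gateway returns after deployment, and I assume the API accepts the transcript as plain text in the POST body and replies with JSON.

```python
import json
import urllib.request


def build_summary_request(api_url: str, transcript: str) -> urllib.request.Request:
    """Build a POST request carrying the transcript as a plain-text body."""
    return urllib.request.Request(
        api_url,
        data=transcript.encode("utf-8"),
        headers={"Content-Type": "text/plain; charset=utf-8"},
        method="POST",
    )


def request_summary(api_url: str, transcript: str, timeout: float = 30.0) -> dict:
    """Send the transcript to the API and return the decoded JSON response."""
    request = build_summary_request(api_url, transcript)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    # Placeholder URL; replace it with your deployed API Gateway endpoint.
    url = "https://example.invalid/summary"
    with open("transcript.txt", encoding="utf-8") as handle:
        print(request_summary(url, handle.read()))
```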

Review the response returned by Generative AI Foundation Model

As GenAI solutions continue to evolve, they are set to reshape traditional workflows and drive tangible benefits across industries. This workshop serves as a compelling example of the transformative power of AI technologies in addressing real-world challenges and unlocking new opportunities for innovation.

Thanks for reading!

Click here to get to YouTube video for this solution.

𝒢𝒾𝓇𝒾𝓈𝒽 ℬ𝒽𝒶𝓉𝒾𝒶

𝘈𝘞𝘚 𝘊𝘦𝘳𝘵𝘪𝘧𝘪𝘦𝘥 𝘚𝘰𝘭𝘶𝘵𝘪𝘰𝘯 𝘈𝘳𝘤𝘩𝘪𝘵𝘦𝘤𝘵 & 𝘋𝘦𝘷𝘦𝘭𝘰𝘱𝘦𝘳 𝘈𝘴𝘴𝘰𝘤𝘪𝘢𝘵𝘦

𝘊𝘭𝘰𝘶𝘥 𝘛𝘦𝘤𝘩𝘯𝘰𝘭𝘰𝘨𝘺 𝘌𝘯𝘵𝘩𝘶𝘴𝘪𝘢𝘴𝘵