Adding a Gen AI powered RAG feature to my app in an hour

I will show you how to use Amazon Q Business to get the job done

Syed Jaffry

Amazon Employee

Published Apr 1, 2024

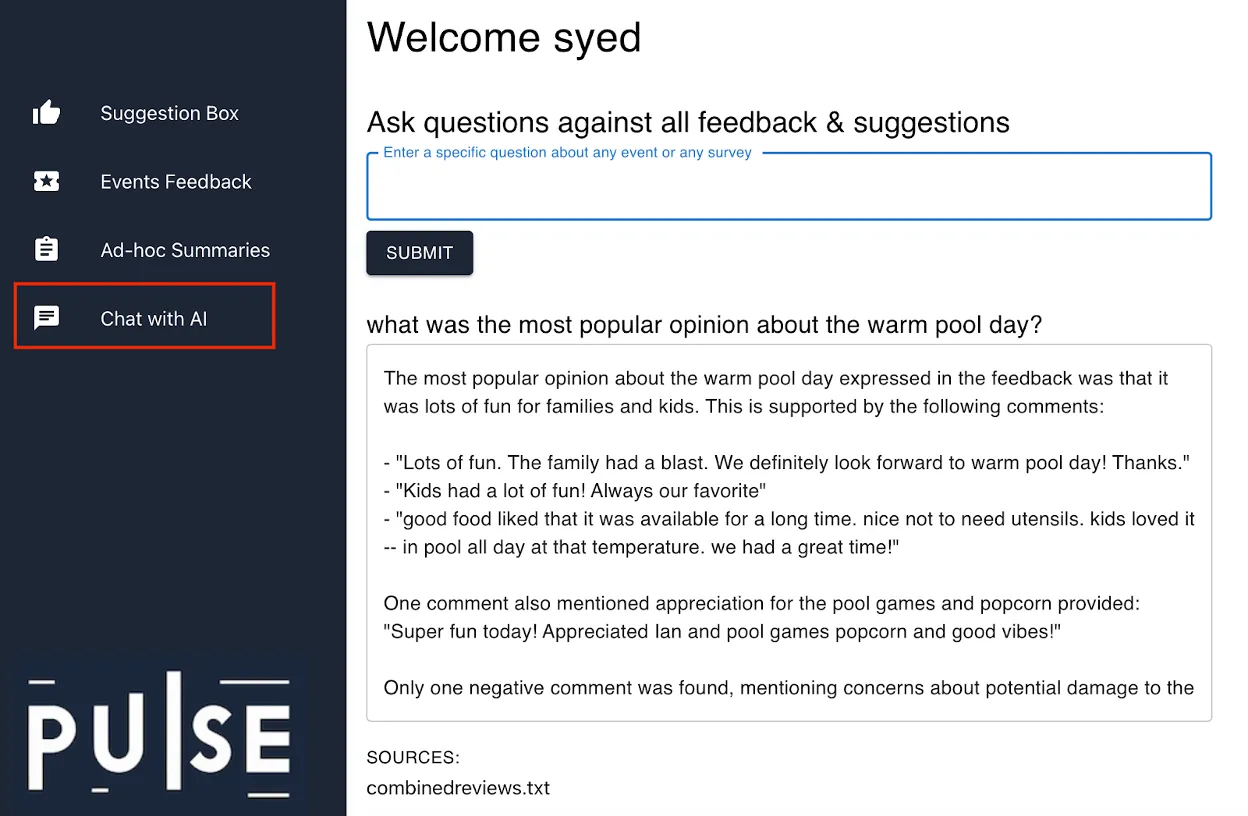

My journey began with a gap I noticed at my tennis and swim club: member feedback was few and far between, even though high member satisfaction was important to the club management. The club lacked an effective mechanism for gathering timely feedback, so I built a QR code feedback collection mechanism that members started using to provide ongoing feedback on the events the club hosted. As its popularity grew, it became apparent that sifting through tens, or sometimes hundreds, of feedback comments was not going to be practical for the club management. That's when I came up with the idea of using Gen AI to enable question answering over the reams of feedback data sitting in S3. Enter the “RAG” design pattern! RAG stands for Retrieval Augmented Generation: relevant documents are first retrieved from a data store and then passed to a large language model (LLM) as context for generating the answer. It’s a good fit for finding insights in, and creating summaries of, the feedback the club now receives far more frequently. See the screenshot of the RAG application in action.

Intentions are great but how do I execute?

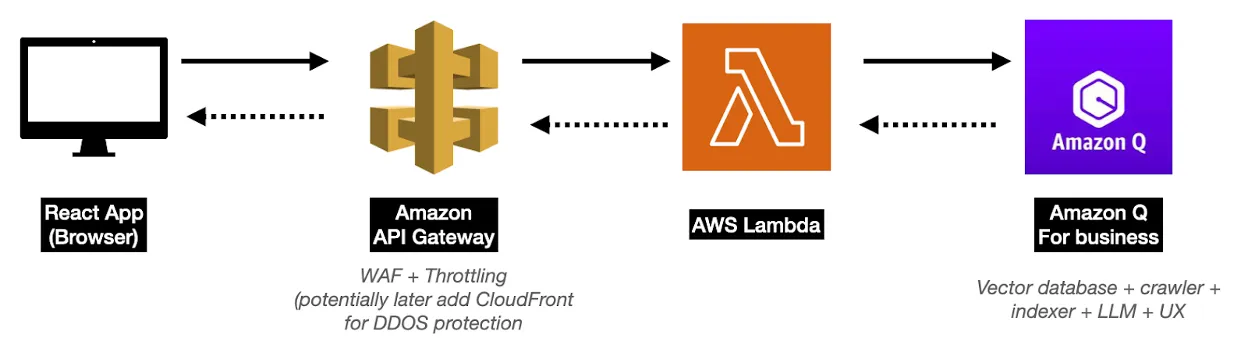

The first thing I needed to do was identify where my data was. Luckily, for this use case, all the data was textual and sat under various folders (more accurately, “S3 prefixes”) within a single bucket. According to the RAG design pattern, I just needed to index all that textual data into a vector store, attach an LLM to the vector store, and put a user experience app on top of that LLM. This is where the magic of AWS integrated services comes into play. I could have spent weeks carefully evaluating each of the individual technology components and then finding, building, configuring, and hosting them to make this application work. Instead, I could leverage Amazon Q Business for the crawling and indexing into the vector store, the LLM, and the user experience API. That turns the entire RAG application problem space into the familiar AWS cloud paradigm of calling higher-level API services and integrating them directly into my app with Lambda glue code. The design diagram shows how these pieces fit together.
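To make the pattern concrete, here is a minimal Python sketch of the index, retrieve, and prompt steps that a managed service takes care of. It is illustrative only: the bag-of-words "embedding", the function names, and the sample comments are assumptions made for this example, not how Amazon Q works internally. A real system would use an embedding model and a vector store, and would send the final prompt to an LLM.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy 'embedding': word counts (a real system would call an embedding model)."""
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(documents):
    """Index step: keep each document next to its vector."""
    return [(doc, embed(doc)) for doc in documents]


def retrieve(index, question, top_k=2):
    """Retrieval step: return the top_k documents most similar to the question."""
    q = embed(question)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:top_k]]


def build_prompt(question, passages):
    """Augmentation step: put the retrieved passages in front of the question."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only this feedback:\n{context}\n\nQuestion: {question}"


# Hypothetical feedback comments standing in for the text files in S3
feedback = [
    "The swim lanes were too crowded during the Saturday gala.",
    "Loved the tennis clinic, the coach was excellent.",
    "The pool water was too cold at the swim gala.",
]
question = "What did members say about the swim gala?"
index = build_index(feedback)
passages = retrieve(index, question)
prompt = build_prompt(question, passages)  # this is what would be sent to the LLM
```

In this sketch only the two swim-related comments end up in the prompt, which is the point of retrieval: the LLM sees a small, relevant slice of the data rather than everything in the bucket.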

Now that I have the design, how does the rubber hit the road?

I chose to natively integrate Amazon Q Business into my React app. I started by setting up the Amazon Q application. Using the Amazon Q Business console, I created the application, connected my S3 bucket to it as a data source, and initiated the data sync (for larger environments, you should probably use an infrastructure-as-code approach instead). That made all of my feedback comments available for natural-language queries, which I tested in the Amazon Q console. Once I was happy with the results, I proceeded to integrate it into my React app. The main component I needed was a Lambda function that invokes the Amazon Q application's chat API (“chat_sync” in boto3) and returns the results to my React app via API Gateway. And that’s it: the app started taking questions from user input and returning a Gen AI powered, RAG-based response to the user. Voila! I now have a RAG-based feature natively integrated into my app!

Below is a snippet of the Python (boto3) Lambda code that calls the Amazon Q Business API.

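```python
import boto3

# Client for the Amazon Q Business API
client = boto3.client('qbusiness')

# appId, user_name and query are defined elsewhere in the Lambda function (not shown)
response = client.chat_sync(
    applicationId=appId,
    userId=user_name,
    userMessage=query
)

# Return the generated answer along with the titles of the source documents
result = {
    "answer": response['systemMessage'],
    "sources": [source['title'] for source in response.get('sourceAttributions', [])]
}
```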
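For context, here is one way the snippet above might sit inside a complete Lambda handler behind an API Gateway proxy integration. This is a sketch under assumptions, not my exact code: the `build_handler` helper, the `query` and `user` request fields, the application ID, and the `FakeQClient` stand-in (used so the example runs without AWS access) are all hypothetical.

```python
import json


def build_handler(client, application_id):
    """Return a Lambda-style handler bound to a qbusiness client and application ID."""

    def handler(event, context):
        # With proxy integration, API Gateway passes the request body as a JSON string
        body = json.loads(event.get("body") or "{}")
        query = body.get("query", "").strip()
        if not query:
            return {"statusCode": 400, "body": json.dumps({"error": "query is required"})}

        response = client.chat_sync(
            applicationId=application_id,
            userId=body.get("user", "anonymous"),  # hypothetical request field
            userMessage=query,
        )
        result = {
            "answer": response.get("systemMessage", ""),
            "sources": [s.get("title", "") for s in response.get("sourceAttributions", [])],
        }
        return {
            "statusCode": 200,
            "headers": {"Content-Type": "application/json"},
            "body": json.dumps(result),
        }

    return handler


class FakeQClient:
    """Stand-in for boto3.client('qbusiness') so the sketch runs locally."""

    def chat_sync(self, **kwargs):
        return {
            "systemMessage": f"Echo: {kwargs['userMessage']}",
            "sourceAttributions": [{"title": "feedback-01.txt"}],
        }


handler = build_handler(FakeQClient(), "hypothetical-app-id")
event = {"body": json.dumps({"query": "What did members like?", "user": "alice"})}
reply = handler(event, None)
```

In a deployed function, the fake client would be replaced with `boto3.client('qbusiness')` and the real application ID, for example read from an environment variable. Passing the client in as a parameter keeps the handler easy to exercise locally before wiring it up to API Gateway.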

Any opinions in this post are those of the individual author and may not reflect the opinions of AWS.