Handling Time Management: I built a scheduling assistant with Claude and Streamlit

Using the vision capabilities of Claude 3 to create a scheduling assistant

Viswanath Nagabhatla

Amazon Employee

Published Apr 22, 2024

Last Modified Apr 23, 2024

In today's fast-paced world, most of us juggle multiple calendars and schedules, which can quickly become overwhelming. Appointments, meetings, and deadlines scattered across various platforms often lead to conflicts, inefficient time management, and, worse, a lack of work-life balance.

This is where Generative AI and our Smart Calendar Analyzer app come into play, offering a way to streamline your scheduling and make better use of your time. By integrating Claude and Streamlit, I created a user-friendly interface that lets you interact with the smart calendar analyzer intuitively.

Anthropic's Claude 3, now offered through Amazon Bedrock, introduces the capability that powers our smart calendar analyzer. Claude 3's ability to interpret visual data opens up new possibilities for scheduling.

Imagine capturing a screenshot of your upcoming week's commitments, or simply writing down your appointments, and having our application translate that visual representation into a structured calendar, complete with all the necessary details. With Claude 3's vision capabilities at the core of the smart calendar analyzer, you no longer need separate API calls and integrations for each calendar system, which makes scheduling meetings much easier. On the backend, I will use the Messages API on Amazon Bedrock to call the models in the Claude 3 family to understand and analyze the image.

As for the choice of using Streamlit, it simplifies the entire process of rapid prototyping and development without spending too much time on the frontend. Streamlit is a powerful open-source Python library that allows you to create interactive web applications with minimal coding effort. This is particularly useful for those of us who aren't UI gurus. It's great for building proof-of-concepts with the goal of having a working example up and running fast.

Let's explore the steps to accomplish it!

For rapid prototyping with the Claude 3 models, a front-end interface is a necessity but not a priority. To keep front-end development simple, we will go with a Python-based approach.

I turned to Streamlit, an open-source Python library, to build the web interface. It is a good fit for anyone who does not want to spend much time or effort on the frontend UI. With just a few lines of code, I can generate a web app interface without getting bogged down in UI design details. The idea here is to whip up a working example swiftly.

The logic for our frontend sits in the app.py file. The code uses several Streamlit components for the UI:

- st.file_uploader is used to upload multiple images.

- st.sidebar.image displays the uploaded images.

- st.chat_input is used for user text input.

- st.chat_message displays the chat messages from both the user and the model.
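The four components listed above could fit together roughly as follows. This is a minimal sketch and not the original app.py: the function name render_app, the widget labels, the accepted file types, and the "messages" session key are all assumptions made for illustration.

```python
def render_app():
    # Streamlit is imported inside the function so this module can be
    # imported even where Streamlit is not installed.
    import streamlit as st

    # Let the user upload one or more calendar screenshots.
    uploaded_files = st.file_uploader(
        "Upload calendar images",  # hypothetical label
        type=["png", "jpg", "jpeg"],
        accept_multiple_files=True,
    )

    # Preview every uploaded image in the sidebar.
    for uploaded_file in uploaded_files or []:
        st.sidebar.image(uploaded_file, caption=uploaded_file.name)

    # Keep the conversation across Streamlit reruns.
    if "messages" not in st.session_state:
        st.session_state["messages"] = []

    # Replay the chat history from both the user and the model.
    for message in st.session_state["messages"]:
        with st.chat_message(message["role"]):
            st.write(message["content"])

    # Read the next user question, store it, and echo it in the chat.
    prompt = st.chat_input("Ask about your schedule")  # hypothetical label
    if prompt:
        st.session_state["messages"].append({"role": "user", "content": prompt})
        with st.chat_message("user"):
            st.write(prompt)
```

To try it, call render_app() at the bottom of app.py and start the app with `streamlit run app.py`.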

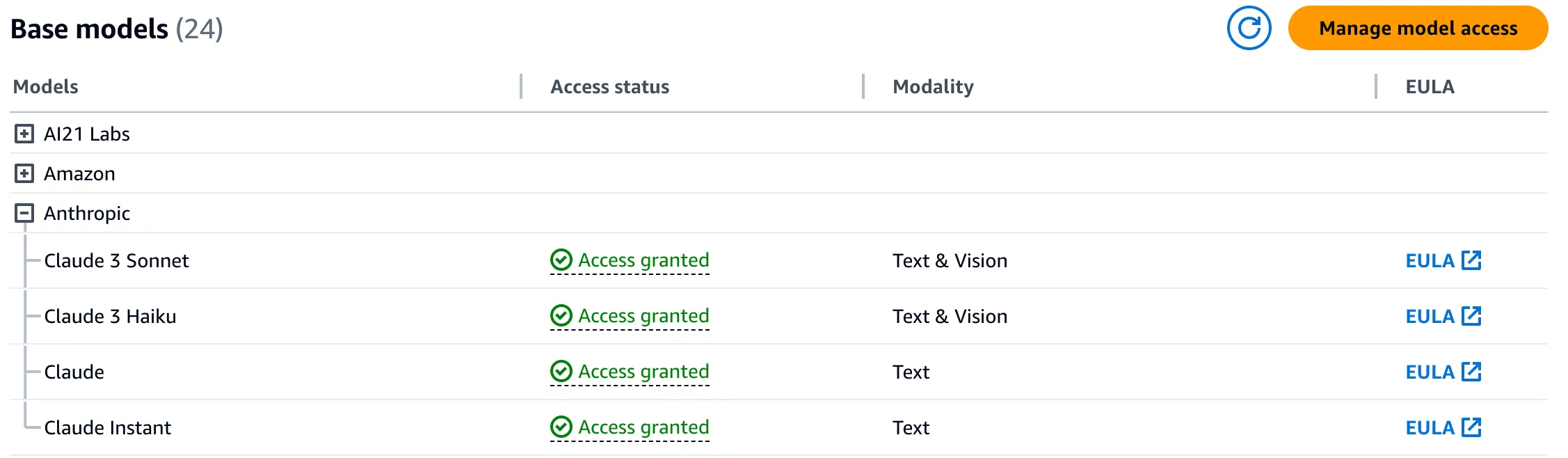

The first step in accessing the Claude 3 models is to enable them through the AWS Console. More details on enabling the models can be found in the Amazon Bedrock documentation on model access.

The authenticator is initialized by calling the Auth.get_authenticator() method, which retrieves the authenticator configuration from AWS Secrets Manager.

The authenticator then handles user login and logout functionality. When main() is called, it first calls authenticator.login() to authenticate the user. If authentication fails, it stops the Streamlit app.

The authenticator stores information about the logged-in user, which is then accessed via authenticator.get_username() to display the username.
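The Auth class itself is not shown in this post, so the following is only a sketch of the Secrets Manager lookup it might perform. The helper name load_auth_config, the secret name, and the assumption that the secret is stored as a JSON string are all hypothetical; only get_secret_value and its SecretId parameter come from the AWS SDK.

```python
import json


def load_auth_config(secrets_client, secret_id):
    """Fetch a secret and parse it as JSON (assumes the secret is a JSON string)."""
    # get_secret_value returns the secret text under the "SecretString" key.
    response = secrets_client.get_secret_value(SecretId=secret_id)
    return json.loads(response["SecretString"])


def create_secrets_client(region_name="us-east-1"):
    # boto3 is imported lazily; the region is an assumption.
    import boto3

    return boto3.client("secretsmanager", region_name=region_name)
```

A call such as load_auth_config(create_secrets_client(), "calendar-app/auth"), where the secret name is made up, would then return the configuration as a Python dictionary.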

The code uses Streamlit's file_uploader component to allow users to upload multiple images at once.

It then loops through each uploaded file object and displays it in the sidebar for preview. To pass the image data to the Claude model, each file object first needs to be encoded as a base64 string.
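A minimal sketch of that encoding step, using only Python's standard library. The helper name encode_image is an assumption; the sketch relies on the fact that Streamlit's uploaded file objects behave like io.BytesIO, so an in-memory buffer stands in for one here.

```python
import base64
import io


def encode_image(uploaded_file):
    """Return the file's raw bytes as a base64-encoded string."""
    # getvalue() returns the whole buffer regardless of the read position.
    image_bytes = uploaded_file.getvalue()
    return base64.b64encode(image_bytes).decode("utf-8")


# Usage with an in-memory stand-in for an uploaded file.
sample_file = io.BytesIO(b"placeholder image bytes")
encoded = encode_image(sample_file)
print(len(encoded))
```

Decoding to a UTF-8 string matters because the JSON request body can carry text but not raw bytes.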

The base64-encoded string can then be included in the message payload that gets sent to the model.

Encoding the image to base64 allows the raw image data to be embedded directly in the JSON request body. The model response is then processed to extract the generated text.
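As an illustration, a user message in the Claude Messages format can carry image blocks followed by a text block. The helper name build_user_message and the default media type are assumptions for this sketch; the block layout ("type", "source", "media_type", "data") follows the Messages format for Claude 3.

```python
def build_user_message(prompt, encoded_images, media_type="image/png"):
    """Build one user message holding base64 images followed by the text prompt."""
    content = []
    for data in encoded_images:
        # Each image is embedded inline as a base64 source block.
        content.append(
            {
                "type": "image",
                "source": {
                    "type": "base64",
                    "media_type": media_type,
                    "data": data,
                },
            }
        )
    # The user's question goes last, after the images it refers to.
    content.append({"type": "text", "text": prompt})
    return {"role": "user", "content": content}


message = build_user_message("When am I free this week?", ["aGVsbG8="])
```

The media type should match the actual image format, for example image/jpeg for a JPEG upload.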

The code first initializes a Boto3 client to interact with the Amazon Bedrock runtime.

You then define the ID of the model you want to invoke and the maximum number of tokens it may generate.
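These two steps might look like the sketch below. The region, the choice of the Claude 3 Sonnet model ID, the token limit, and the helper name create_bedrock_runtime are assumptions; pick the model and region you enabled in your own account.

```python
# Assumed values for illustration; adjust to the model you enabled.
MODEL_ID = "anthropic.claude-3-sonnet-20240229-v1:0"
MAX_TOKENS = 1000


def create_bedrock_runtime(region_name="us-east-1"):
    """Create a client for the Amazon Bedrock runtime API."""
    # boto3 is imported lazily so the constants above can be used without it.
    import boto3

    return boto3.client("bedrock-runtime", region_name=region_name)
```

Note that the service name is "bedrock-runtime", which is used for inference, as opposed to "bedrock", which is used for managing models.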

We introduce a new function called run_multi_modal_prompt. It sends prompts to a GenAI model such as Claude 3 hosted on Amazon Bedrock and returns the response, which lets your application use the model's multi-modal capabilities.

When the user submits input, a messages array is populated with the user's text. If an image was uploaded, it is encoded to base64 and added to the message payload along with the chat history. This messages array is then passed to the run_multi_modal_prompt function together with the Bedrock runtime client created above, the model ID, and the maximum number of tokens specified previously.

This function constructs the JSON body and makes the InvokeModel API call. The response is loaded as JSON and the model's text output is extracted. This output is then displayed to the user via an st.chat_message component.
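A sketch of such a function is shown here, under the assumption that it follows the usual InvokeModel pattern for Claude 3 on Bedrock. The argument order and the extract_text helper are guesses rather than the original code; "bedrock-2023-05-31" is the anthropic_version value that Bedrock expects for the Messages format.

```python
import json


def run_multi_modal_prompt(bedrock_runtime, model_id, messages, max_tokens):
    """Send the messages to the model and return the parsed JSON response."""
    body = json.dumps(
        {
            "anthropic_version": "bedrock-2023-05-31",
            "max_tokens": max_tokens,
            "messages": messages,
        }
    )
    response = bedrock_runtime.invoke_model(body=body, modelId=model_id)
    # The response body is a stream, so read it fully before parsing.
    return json.loads(response["body"].read())


def extract_text(response_body):
    """Join the text blocks of a Messages response (hypothetical helper)."""
    return "".join(
        block["text"]
        for block in response_body.get("content", [])
        if block.get("type") == "text"
    )
```

The string returned by extract_text is what the app would write inside an st.chat_message("assistant") block and append to the chat history.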

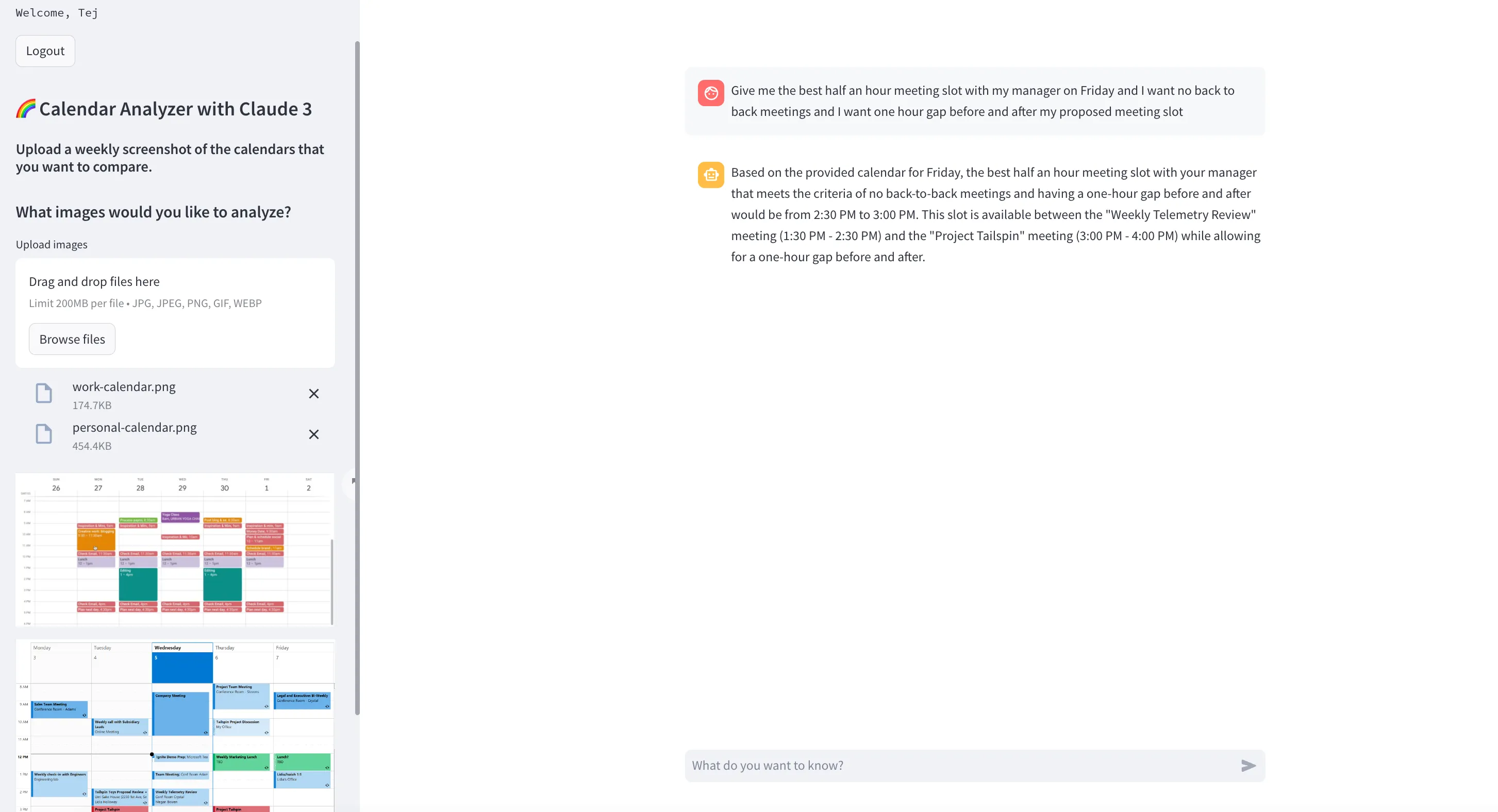

Notably, the Calendar Analyzer demonstrated how the vision capabilities of Anthropic's latest Generative AI model, Claude 3, can be used through Amazon Bedrock's APIs. This enabled the application to interpret and process visual data directly, eliminating the need for complex calendar API integrations or manual data entry. Please note that the calendar screenshots are samples and not real calendars.

Here is a sample look at the app below:

Overall, the outcome validated the concept of using the latest GenAI models, like Claude 3, in combination with rapid prototyping tools like Streamlit to create solutions that streamline scheduling and enhance productivity.

If the calendar analyzer app is any indication, the potential of AI-driven solutions is truly exciting and something to look forward to. Embrace the future, my friends, and let the calendar analyzer chatbot app with Claude 3 be your guide to more organized, productive, and stress-free days.

Any opinions in this post are those of the individual author and may not reflect the opinions of AWS.