Engineering Resilience: Lessons from Amazon Search's Chaos Engineering Journey

Discover how Amazon Search improved its system resilience through practical chaos engineering, moving from traditional load tests to large-scale experiments and reaching key milestones along the way.

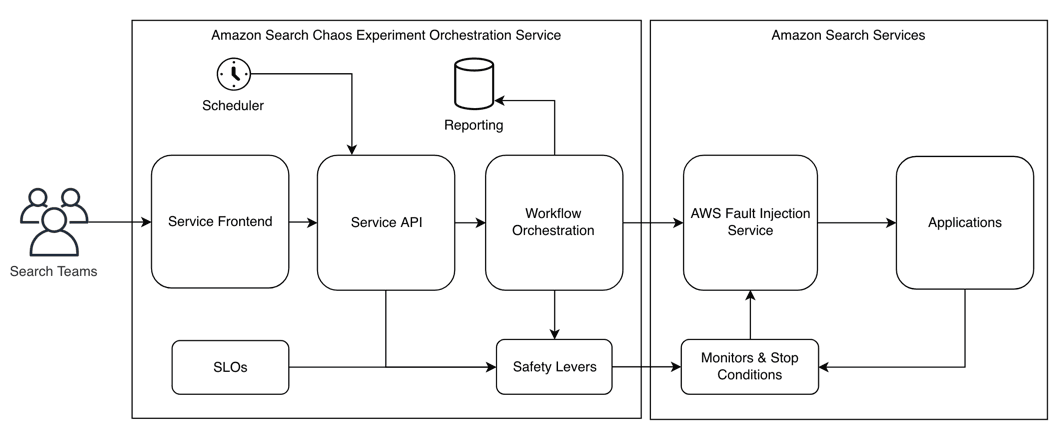

- Implement guardrails to prevent or limit customer impact during experiments.

- Automatically shut down experiments when the steady state is disrupted, which indicates unintended customer impact.

- Provision the infrastructure that experiments need, such as chaos-injecting agents, sidecar containers, and AWS resources.

- Execute experiments regularly to detect regressions at an early stage.

- If regressions occur, escalate the issue to service owners.

- Controlled customer impact is preferred to unexpected customer impact during peak traffic.

- Every experiment tests a hypothesis.

- We don’t test in production when alternatives are available.

- We rely on fail-safes and heartbeats in chaos experiments, allowing the system to proactively stop disruptive experiments without needing an external signal to terminate them.

- We prioritize crash-only models over adding complexity for error handling and recovery.

- We implement multiple fail-safes and guardrails because we recognize that bugs will happen.

- Experiments that validate the system's resilience to a particular failure: For instance, when we terminate a host, the service seamlessly switches to another host, and the end customer doesn't experience any disruption. In other words, these experiments don't impact the measured steady state during the experiment itself.

- Experiments where the hypothesis is that the system will recover from the failure without human operator intervention: For example, if a critical dependency experiences an outage, we want to ensure that our service doesn't wait indefinitely, tying up sockets and threads, but instead recovers promptly once the dependency becomes available again. In this case, we want to measure that we've returned to our steady state after the experiment.

- Instance Termination: testing the system's resilience to a loss of compute resources.

- Latency Injection: introducing latency to assess the system's performance under degraded network conditions.

- Packet Black-holing: investigating how the system recovers under 100% packet loss.

- Emergency Levers: activating emergency levers (e.g., turning off features to reduce load) to verify that they work as expected when they are most needed.
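
The heartbeat-based fail-safe described above, where a disruptive experiment stops itself without needing an external termination signal, can be illustrated with a dead man's switch. The sketch below is not Amazon Search's implementation: `FaultAgent`, `revert_fault`, `heartbeat_ttl_s`, and the injected `clock` are hypothetical names chosen for illustration. It assumes a controller that sends heartbeats only while the steady state is healthy, and an agent that reverts its fault once heartbeats stop arriving.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class FaultAgent:
    """Hypothetical chaos agent that reverts its own fault when heartbeats stop."""

    revert_fault: Callable[[], None]  # undoes the injected failure
    heartbeat_ttl_s: float            # longest tolerated silence from the controller
    clock: Callable[[], float]        # injected so the sketch is deterministic
    active: bool = False
    last_beat: float = 0.0

    def start(self) -> None:
        # Begin the experiment and treat the start as the first heartbeat.
        self.active = True
        self.last_beat = self.clock()

    def heartbeat(self) -> None:
        # Assumption: the controller calls this only while the steady state holds.
        if self.active:
            self.last_beat = self.clock()

    def tick(self) -> None:
        # Run from the agent's own loop: silence past the TTL stops the experiment.
        if self.active and self.clock() - self.last_beat > self.heartbeat_ttl_s:
            self.stop()

    def stop(self) -> None:
        # Idempotent, so a second fail-safe calling stop() again is harmless.
        if self.active:
            self.active = False
            self.revert_fault()


if __name__ == "__main__":
    now = [0.0]
    agent = FaultAgent(
        revert_fault=lambda: print("fault reverted"),
        heartbeat_ttl_s=5.0,
        clock=lambda: now[0],
    )
    agent.start()
    now[0] = 4.0
    agent.heartbeat()  # controller is alive and the steady state is healthy
    agent.tick()       # experiment keeps running
    now[0] = 10.0
    agent.tick()       # 6 seconds of silence exceeds the TTL, so the agent stops
```

The design choice worth noting is the direction of the signal: the experiment keeps running only while it is told that things are healthy, so a crashed controller or a network partition leads to the fault being reverted rather than left in place.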

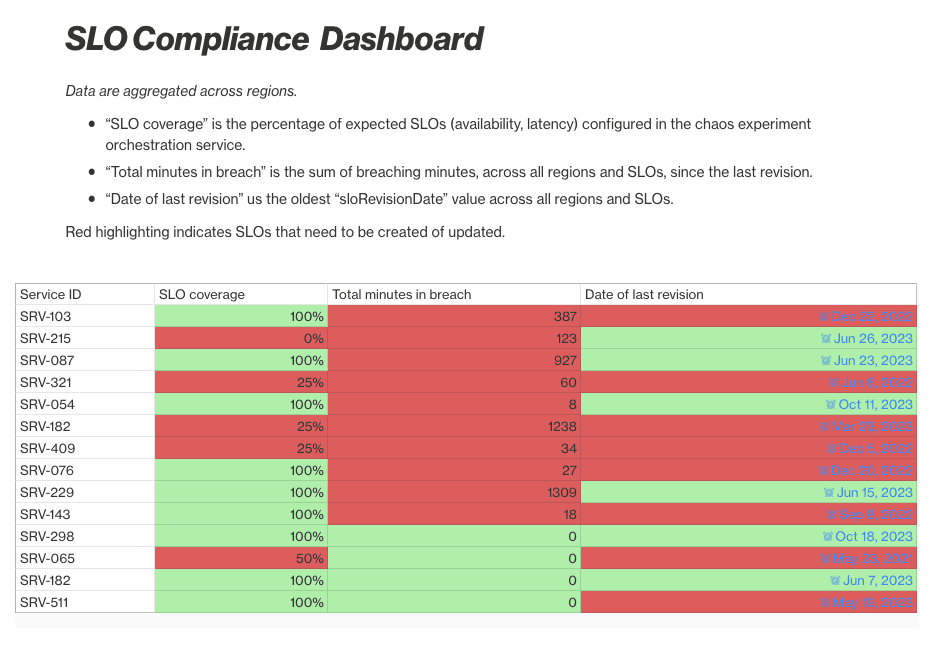

- Insufficient Error Budget: the service may have no error budget left (i.e., it is breaching its SLO), so we prioritize the customer experience over running chaos experiments.

- Issue Detection: the experiment may have uncovered an issue that is being investigated and fixed. In such cases, there is no need to run the experiment until the issue has been addressed.

- Manual Disabling: experiments may have been intentionally disabled to preserve error budget for challenging operational migrations or sales events.

- SLO Last Revision Time: we document when the SLO was last revised, meaning SLIs (metrics) were added or removed, or thresholds were raised or lowered.

- SLO Breach Duration: we track the total duration in minutes that a specific SLO revision has spent in breach. An SLO is in breach when its error budget is zero or negative.

- SLO Coverage: we assess whether all SLOs (availability and latency) are present and actively monitored for a particular service.
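
The reasons for skipping an experiment and the breach-duration metric listed above can be expressed as a small gating check. This is a minimal sketch under stated assumptions: `ExperimentState`, `skip_reason`, and `breach_duration_minutes` are hypothetical names, the error budget is assumed to be sampled once per minute, and the order in which the reasons are checked is an arbitrary choice rather than something the original describes.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ExperimentState:
    error_budget_remaining: float  # budget left; zero or negative means the SLO is in breach
    open_issue: bool               # a previously uncovered issue is still being fixed
    manually_disabled: bool        # e.g., paused ahead of a migration or sales event


def skip_reason(state: ExperimentState) -> Optional[str]:
    """Return why the experiment should not run, or None if it may run."""
    if state.manually_disabled:
        return "manually disabled"
    if state.error_budget_remaining <= 0:
        return "insufficient error budget"
    if state.open_issue:
        return "issue under investigation"
    return None


def breach_duration_minutes(budget_per_minute: List[float]) -> int:
    """Count the one-minute samples in which the error budget was zero or negative."""
    return sum(1 for budget in budget_per_minute if budget <= 0)


if __name__ == "__main__":
    healthy = ExperimentState(error_budget_remaining=0.4, open_issue=False, manually_disabled=False)
    breached = ExperimentState(error_budget_remaining=-0.1, open_issue=False, manually_disabled=False)
    print(skip_reason(healthy))
    print(skip_reason(breached))
    print(breach_duration_minutes([0.2, 0.0, -0.1, 0.3]))
```

Returning a reason string rather than a bare boolean keeps the decision auditable, which matters when service owners ask why an experiment did not run on a given day.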

Any opinions in this post are those of the individual author and may not reflect the opinions of AWS.