Building a workshop for AWS re:Invent - part 2

Ever wondered what goes into the design and delivery of content at re:Invent? This series of posts explores the process of creating a 300-level workshop for delivery at AWS re:Invent 2023. Part 2 takes a deeper dive into the CloudFormation code used.

Matt J

Amazon Employee

Published Feb 8, 2024

Continue to Building a workshop for AWS re:Invent - part 3

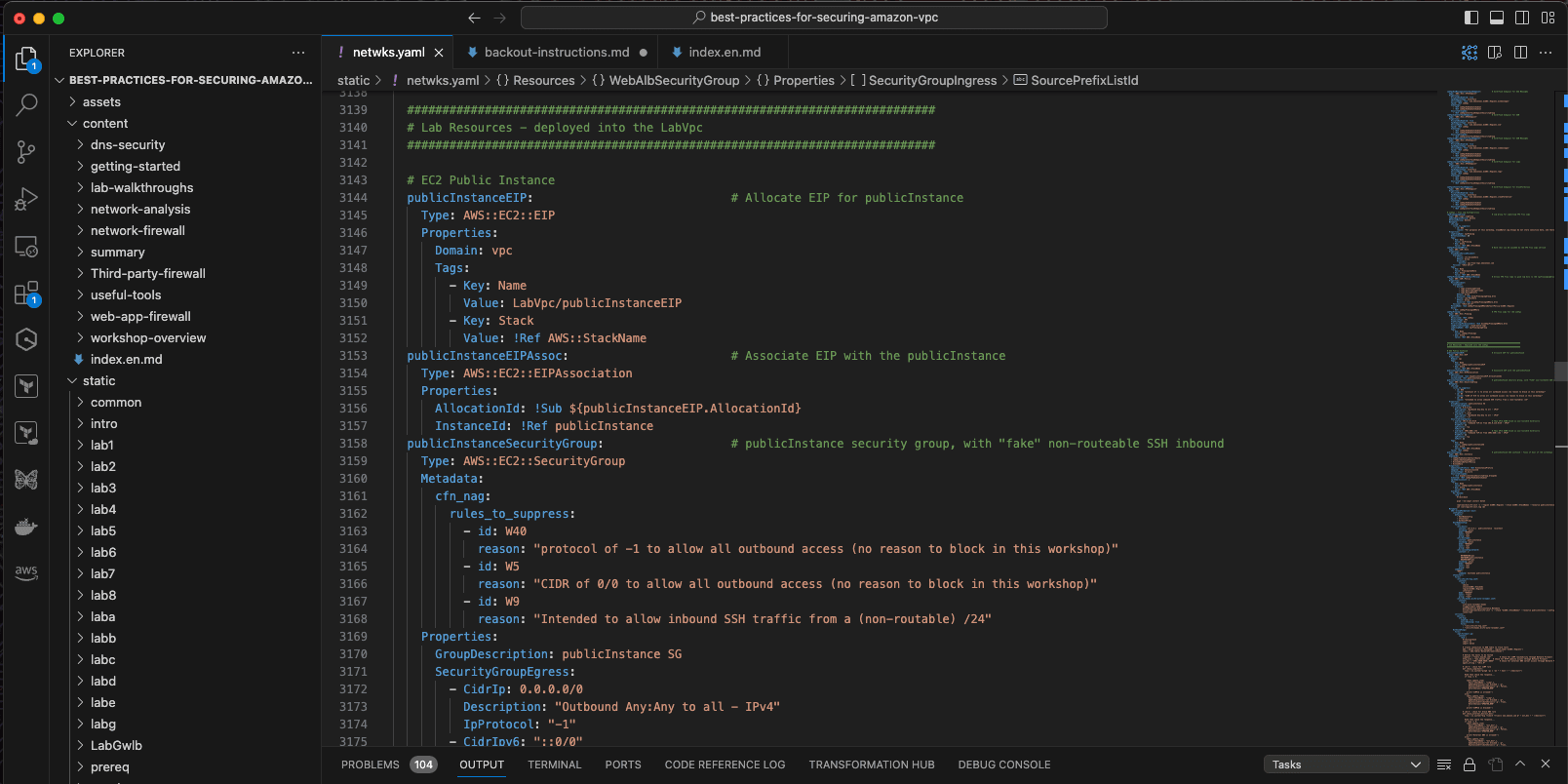

A significant part of the effort of building a workshop is focussed on the development of the AWS CloudFormation template(s) which deploy the resources that the participants will use. There are lots of approaches to authoring CloudFormation templates - if you are a developer, I would highly recommend tools such as the AWS Cloud Development Kit (CDK). Laura and I decided to write native CloudFormation (as YAML), because we were more experienced with this approach, and it gave us a bit more control over resource naming (useful when you need users to find specific resources).

Whilst most of the template is pretty straightforward (if long - around 7,500 lines of code), there are a few specific implementation details that are worth calling out; the first is around conditional resources, the second custom resources, and the third CloudWatch Dashboards.

Custom resources in CloudFormation allow you to provision resources and capabilities that might not otherwise be supported natively in CloudFormation. A custom resource consists of two parts: an AWS Lambda function that manages the resource, and a custom CloudFormation resource that defines the properties and attributes that can be used within the rest of the CloudFormation template.

We use custom resources for a few things in the workshop: to discover the primary ENI ID and IPv6 addresses for instances, to get the AWS Network Firewall endpoint IDs for specific Availability Zones, and to get the GWLB VPC service endpoint ID.
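To give a feel for the shape of such a function, here is a minimal Python sketch of the first of these lookups (primary ENI ID and IPv6 address). It is not the workshop's actual code: the helper name `extract_networking` is hypothetical, the choice of Python is an assumption, and it assumes the `cfnresponse` helper module is available (which is the case when the function code is inlined in the template).

```python
def extract_networking(describe_instances_response):
    """Return the primary ENI ID and first IPv6 address (if any) from an
    ec2.describe_instances() response for a single instance."""
    instance = describe_instances_response["Reservations"][0]["Instances"][0]
    # The primary network interface is the one attached at device index 0.
    primary = next(
        eni for eni in instance["NetworkInterfaces"]
        if eni["Attachment"]["DeviceIndex"] == 0
    )
    ipv6 = primary.get("Ipv6Addresses", [])
    return {
        "Eni": primary["NetworkInterfaceId"],
        "Ipv6Address": ipv6[0]["Ipv6Address"] if ipv6 else "",
    }


def handler(event, context):
    # Imported lazily: both modules are assumed to be provided by the Lambda
    # runtime (cfnresponse only when the code is inlined in the template).
    import boto3
    import cfnresponse

    responseData = {}
    try:
        if event["RequestType"] != "Delete":  # nothing to look up on delete
            instance_id = event["ResourceProperties"]["InstanceId"]
            result = boto3.client("ec2").describe_instances(
                InstanceIds=[instance_id]
            )
            responseData = extract_networking(result)
        cfnresponse.send(event, context, cfnresponse.SUCCESS, responseData)
    except Exception as error:
        print(f"Lookup failed: {error}")
        cfnresponse.send(event, context, cfnresponse.FAILED, responseData)
```

Keeping the parsing in its own function means it can be tried out locally with a canned response, without deploying a stack.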

Below is the Lambda function that powers a CloudFormation custom resource that we use to find two bits of information; the Elastic Network Interface (ENI) ID of an instance, and also the IPv6 address it has been assigned (if any). These are included in the

responseData dictionary as Eni and Ipv6Address attributes.We can then use this to create a CloudFormation custom resource, which uses the properties provided (the

InstanceId) to create a logical resource publicInstanceNetworking:Once provisioned, this logical resource will have two attributes that can be referenced elsewhere in the CloudFormation template (ENI ID and IPv6 Address), using these code snippets:

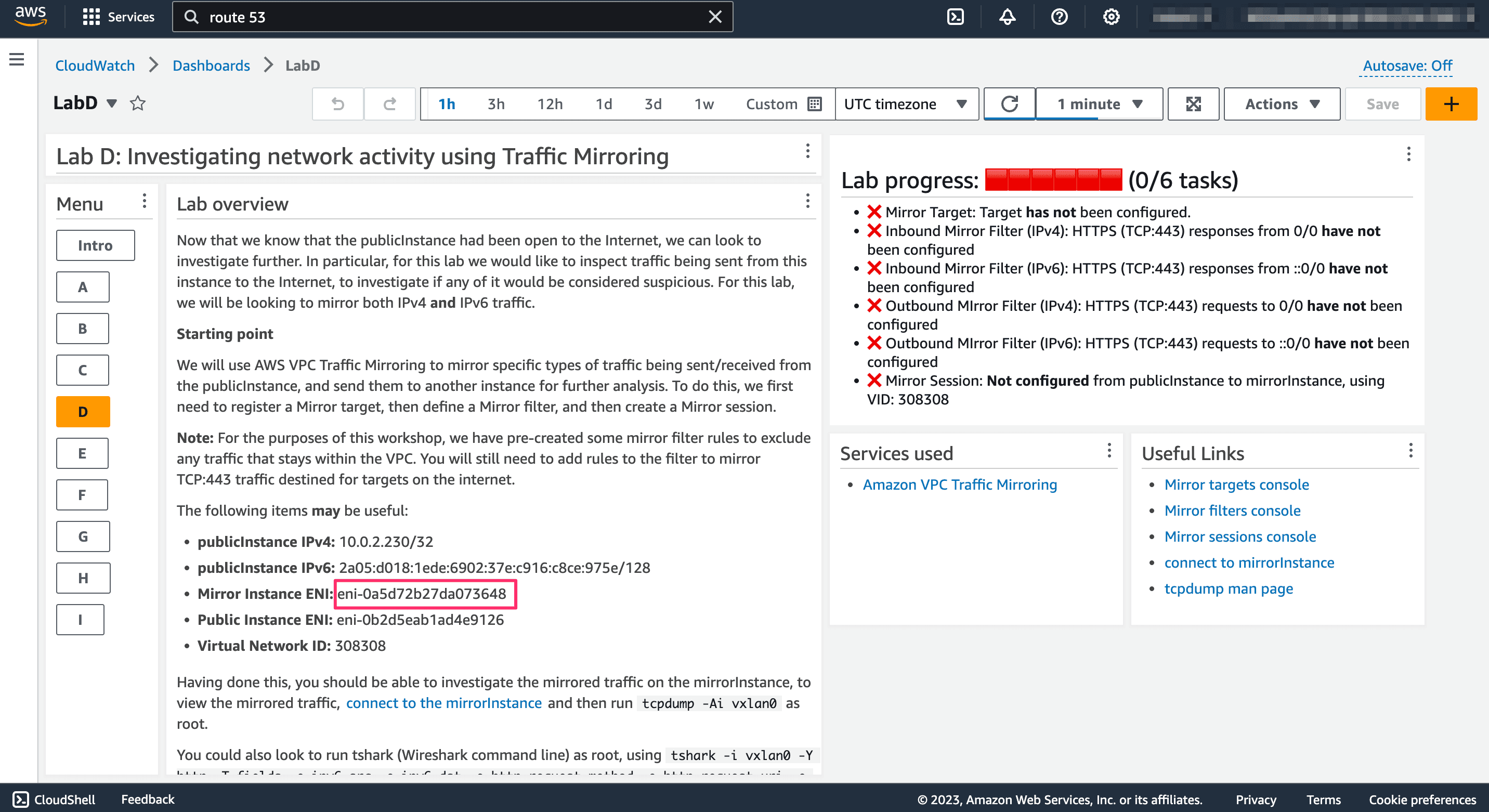

${publicInstanceNetworking.Eni} and $publicInstanceNetworking.Ipv6Address.To encourage participants to explore, we make extensive use of AWS CloudWatch Dashboards (one for each lab). These provide two valuable capabilities; they can provide context-sensitive information not available in the static content guide, and (through the use of custom widgets) also provide an up-to-date status on the completion of the lab tasks.

In many of the Labs, the participant will be asked to configure or change a pre-deployed resource (for example, to configure a route to a VPC endpoint). Since the details of some of these resources aren't known until the workshop is deployed, it means that the workshop guide cannot be specific about resource names, ENI IDs, etc. However, since we build the CloudWatch Dashboards as part of the same CloudFormation template, we can embed logical references to those resources within the dashboard definitions (using the `${ResourceName.Attribute}` format), and allow CloudFormation to resolve them for us when the stack is deployed. This means that the Dashboard is able to display the details of the actual resources, unique to each participant. This sounds more complicated than it is, and so I've provided an example below:
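To make the idea concrete, here is a small Python sketch of what that substitution amounts to. It is purely illustrative: in the real template CloudFormation does the resolving at deploy time (for example via `Fn::Sub`), whereas the `resolve` function, the widget layout and the resource values below are all stand-ins I have made up.

```python
import json
import re

# A text widget whose Markdown carries CloudFormation-style references.
dashboard_template = json.dumps({
    "widgets": [{
        "type": "text",
        "x": 0, "y": 0, "width": 12, "height": 4,
        "properties": {
            "markdown": (
                "## Lab instance\n"
                "ENI: ${publicInstanceNetworking.Eni}\n"
                "IPv6: ${publicInstanceNetworking.Ipv6Address}"
            )
        },
    }]
})


def resolve(template, values):
    """Stand-in for the substitution CloudFormation performs at deploy time."""
    return re.sub(r"\$\{([^}]+)\}", lambda m: values[m.group(1)], template)


# Hypothetical values for one participant's stack.
body = resolve(dashboard_template, {
    "publicInstanceNetworking.Eni": "eni-0123456789abcdef0",
    "publicInstanceNetworking.Ipv6Address": "2001:db8::10",
})
print(json.loads(body)["widgets"][0]["properties"]["markdown"])
```

The dashboard definition is written once, but each participant sees the ENI ID and IPv6 address from their own stack.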

This appears as the following:

If you haven't come across Custom Widgets before, I highly recommend checking them out - they are incredibly useful for so many things! Custom widgets are Lambda functions that generate JSON, HTML or Markdown output, and which are then displayed on the dashboard. For our workshop, each dashboard is set to refresh every 60 seconds, and when it does, the Lambda function runs and updates the custom widget output.

In our case, we use a custom widget (one for each lab) to show progress against the tasks that need completing. Behind the scenes, the Lambda function is making calls to various AWS service APIs to see if the participant has configured a resource in the correct way. It then updates the output of the custom widget to show what progress has been made (and makes use of CloudWatch Dashboard's support for unicode to do so in a reasonably colourful way!).

As an example, in the Traffic Mirroring lab, participants need to complete a number of tasks, such as configuring a Mirror Target, defining a Mirror Filter, and establishing a Mirror Session. The Lambda function checks (every 60 seconds) if those tasks have been completed (by making API calls to the relevant services), and if they have been, updates the widget output to show that a task has been completed.
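The checking logic described above could be sketched roughly as follows. This is a simplified illustration rather than the workshop's function: it only tests whether each Traffic Mirroring component exists (the real checks would look at how they are configured), the task labels and function names are hypothetical, and the two HTML entity codes are simply ones I have picked for a tick and a cross.

```python
TICK = "&#9989;"    # HTML entity for a tick (check mark) symbol
CROSS = "&#10060;"  # HTML entity for a cross mark symbol


def render_progress(tasks):
    """Build the HTML the widget displays; tasks maps task name -> done?"""
    rows = "".join(
        f"<tr><td>{TICK if done else CROSS}</td><td>{name}</td></tr>"
        for name, done in tasks.items()
    )
    return f"<table>{rows}</table>"


def check_traffic_mirroring(ec2):
    """Ask EC2 whether each Traffic Mirroring component exists yet."""
    return {
        "Mirror Target configured": bool(
            ec2.describe_traffic_mirror_targets()["TrafficMirrorTargets"]
        ),
        "Mirror Filter defined": bool(
            ec2.describe_traffic_mirror_filters()["TrafficMirrorFilters"]
        ),
        "Mirror Session established": bool(
            ec2.describe_traffic_mirror_sessions()["TrafficMirrorSessions"]
        ),
    }


def handler(event, context):
    # boto3 is assumed to be provided by the Lambda runtime.
    import boto3

    # A custom widget can return an HTML string for the dashboard to render.
    return render_progress(check_traffic_mirroring(boto3.client("ec2")))
```

Because the EC2 client is passed in, the check can be exercised with a fake client before it ever runs in Lambda.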

A snippet from one of the functions is shown below; note the use of the Unicode `&#....` codes to display ticks and crosses, without having to generate any custom images. This renders in the CloudWatch dashboard similar to:

After the workshop is feature-complete, we then open it up to a series of "dry-runs"; this is where we invite a number of Amazonians (including both experts in networking and those with less direct experience of the topic) to run through the workshop from start to finish, and capture any errors, glitches, bugs, or suggestions on how to improve the attendee experience. These dry-runs are really important - when you've been working on a project for a period of time, you can become "desensitised" and overlook things that people coming with a fresh viewpoint will quickly spot.

Once the dry-runs are completed, we then look at performing a set of security reviews. We are designing and building with security in mind throughout the workshop (security is the highest priority at AWS!), but this stage provides a formalised, independent review of the workshop from a security perspective.

First up, we perform a series of automated code reviews using a range of open-source tooling. This includes cfn-lint (looking for specific CloudFormation errors or bad-practice) and cfn_nag (which has more of a security best-practice focus). Following this, we perform a scan of the workshop using ScoutSuite, which analyses the deployed resources looking for misconfigurations or bad practice.

It should be noted that in some cases, we make decisions to acknowledge but ignore warnings from these tools. Each of these decisions is made deliberately, with supporting reasoning, and is linked to the aims and objectives of the workshop. For example, cfn_nag recommends that AWS Lambda functions are executed within a VPC (to enable tracking and auditing of network calls). In this workshop, Lambda functions are there as a supporting function (such as CloudWatch dashboard custom widgets), and not processing critical data - hence, we choose to acknowledge this and ignore the warning.

You can see this in the CloudFormation template, where we use resource metadata to suppress warnings:
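As an illustration of the general shape of that metadata (not a copy of the workshop template), the sketch below builds a resource definition as a Python dictionary and prints it as JSON, which CloudFormation accepts as well as YAML. The logical name `customWidgetFunction`, the reason text, and the rule ID `W89` (which I believe is cfn_nag's "Lambda functions should be deployed inside a VPC" warning) are all assumptions on my part.

```python
import json

# Hypothetical Lambda function resource with a cfn_nag suppression attached.
widget_function = {
    "Type": "AWS::Lambda::Function",
    "Metadata": {
        "cfn_nag": {
            "rules_to_suppress": [{
                "id": "W89",  # assumed ID of the "run inside a VPC" warning
                "reason": "Supporting function only; no critical data processed",
            }]
        }
    },
    "Properties": {},  # function properties omitted for brevity
}

template_fragment = {"Resources": {"customWidgetFunction": widget_function}}
print(json.dumps(template_fragment, indent=2))
```

Recording the reason alongside the suppression keeps the decision and its justification together in the template.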

The next stage is to complete a workshop audit; this is a 60-question report that ensures a high bar is set for the quality of the workshop. It covers a range of technical areas, such as security, resilience and cost-optimisation, as well as other important checks, such as the use of inclusive language, back-out instructions for deployment in customer accounts, and image rights (if used).

Finally, the workshop code, documentation and audit report are reviewed by a Workshop Guardian; these are Amazonians, experts in the specific workshop domain, who have undertaken additional security training. They provide an independent assessment of the workshop, which cannot be published until they have given the go-ahead.

With that done, we're good to go - next stop Las Vegas, which we'll cover in part 3...


Any opinions in this post are those of the individual author and may not reflect the opinions of AWS.