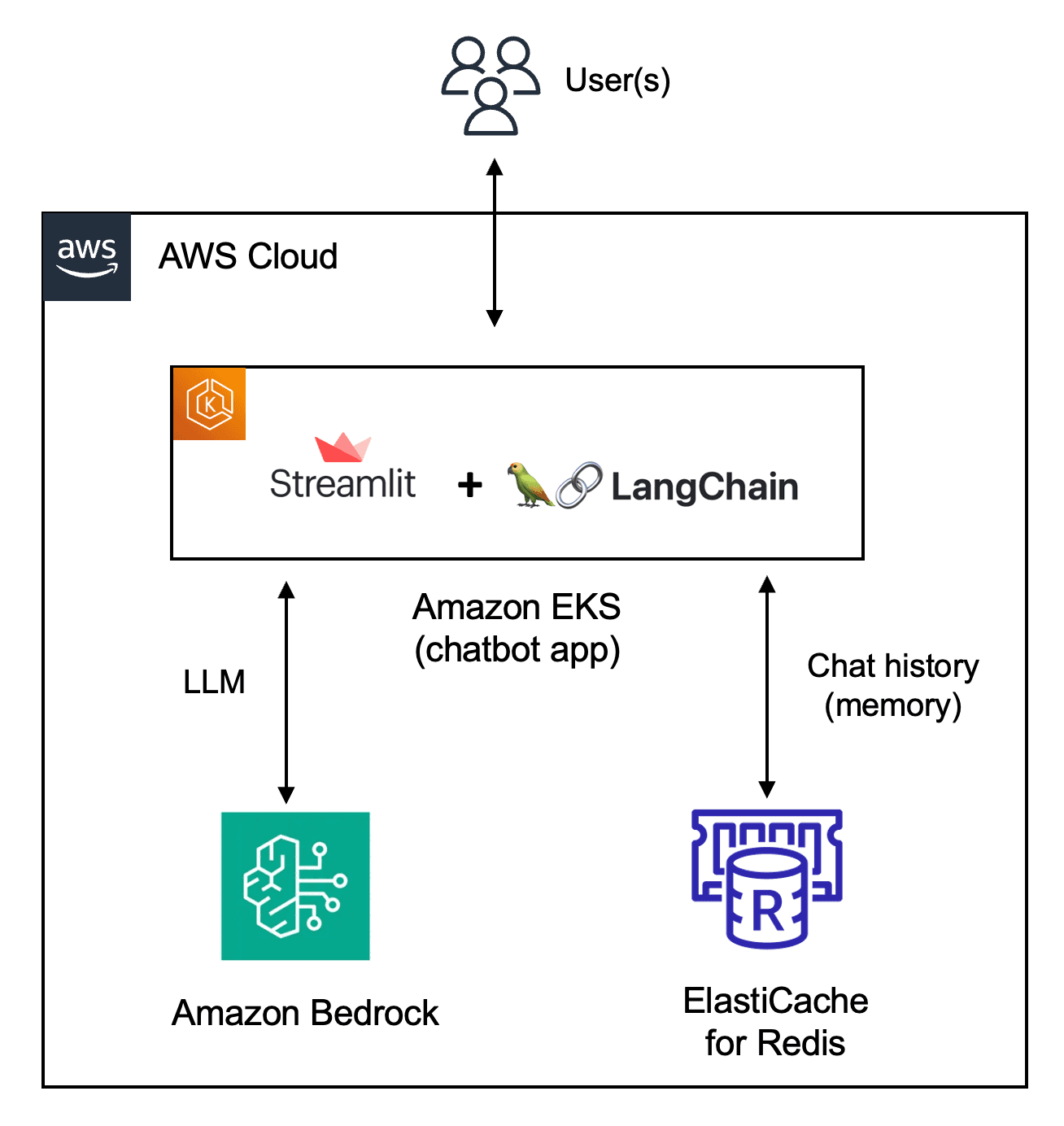

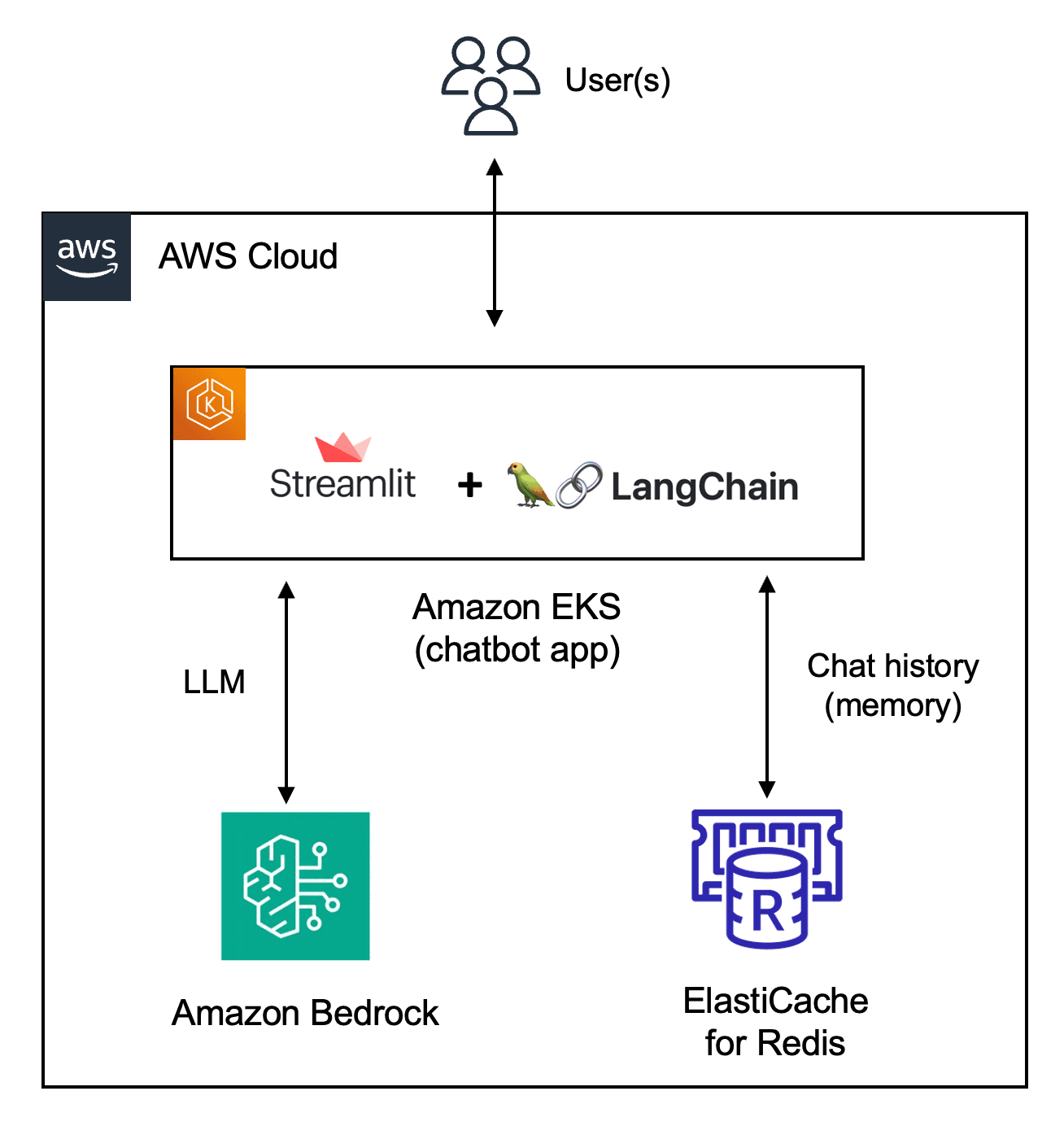

Build a Streamlit app with LangChain and Amazon Bedrock

Use ElastiCache Serverless Redis for chat history, deploy to EKS and manage permissions with EKS Pod Identity

GitHub repository for the app - https://github.com/build-on-aws/streamlit-langchain-chatbot-bedrock-redis-memory

- Amazon Bedrock: Use the instructions in this blog post to set up and configure Amazon Bedrock.

- EKS cluster: Start by creating an EKS cluster. Point `kubectl` to the new cluster using `aws eks update-kubeconfig --region <cluster_region> --name <cluster_name>`

- Create an IAM role: Use the trust policy and IAM permissions from the application GitHub repository.

- EKS Pod Identity Agent configuration: Set up the EKS Pod Identity Agent and associate EKS Pod Identity with the IAM role you created.

- ElastiCache Serverless for Redis: Create a Serverless Redis cache. Make sure it shares the same subnets as the EKS cluster. Once the cache creation is complete, update the ElastiCache security group to add an inbound rule (TCP port `6379`) to allow the application on the EKS cluster to access the ElastiCache cluster.
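The inbound rule from the last prerequisite can also be expressed programmatically. The following is a minimal sketch, assuming Python with boto3. The helper name `redis_ingress_rule` and both security group IDs are hypothetical placeholders, and it assumes access is granted by referencing the EKS cluster's security group rather than a CIDR range.

```python
# Sketch: build the arguments for an inbound rule on TCP port 6379.
# The helper name and the security group IDs are hypothetical placeholders.

REDIS_PORT = 6379


def redis_ingress_rule(cache_sg_id: str, eks_sg_id: str) -> dict:
    """Return keyword arguments for EC2 authorize_security_group_ingress."""
    return {
        "GroupId": cache_sg_id,  # security group attached to the cache
        "IpPermissions": [
            {
                "IpProtocol": "tcp",
                "FromPort": REDIS_PORT,
                "ToPort": REDIS_PORT,
                # Allow traffic that originates from the EKS security group.
                "UserIdGroupPairs": [{"GroupId": eks_sg_id}],
            }
        ],
    }


rule = redis_ingress_rule("sg-cache-placeholder", "sg-eks-placeholder")

# With AWS credentials configured, the rule could be applied like this:
#   import boto3
#   boto3.client("ec2").authorize_security_group_ingress(**rule)
```

Keeping the rule scoped to a source security group, instead of opening the port to a whole subnet range, limits access to workloads that actually run in the cluster.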

In the `app.yaml` file:

- Enter the ECR Docker image info.

- In the Redis connection string format, enter the ElastiCache username and password along with the endpoint.
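Credentials that contain characters such as `@` or `/` can break a connection URL unless they are percent-encoded. Here is a minimal sketch of assembling the connection string, assuming the `rediss://` (TLS) scheme and port 6379. The function name, credentials, and endpoint are hypothetical placeholders; the exact format the application expects is whatever `app.yaml` in the repository shows.

```python
from urllib.parse import quote


def build_redis_url(username: str, password: str, endpoint: str, port: int = 6379) -> str:
    """Assemble a TLS Redis URL with percent-encoded credentials."""
    # safe="" makes quote() also encode "/" so credentials cannot alter the URL structure
    user = quote(username, safe="")
    secret = quote(password, safe="")
    return f"rediss://{user}:{secret}@{endpoint}:{port}"


# Placeholder values for illustration only
url = build_redis_url("app-user", "p@ss/word", "my-cache.example.com")
print(url)  # rediss://app-user:p%40ss%2Fword@my-cache.example.com:6379
```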

To check logs: `kubectl logs -f -l=app=streamlit-chat`

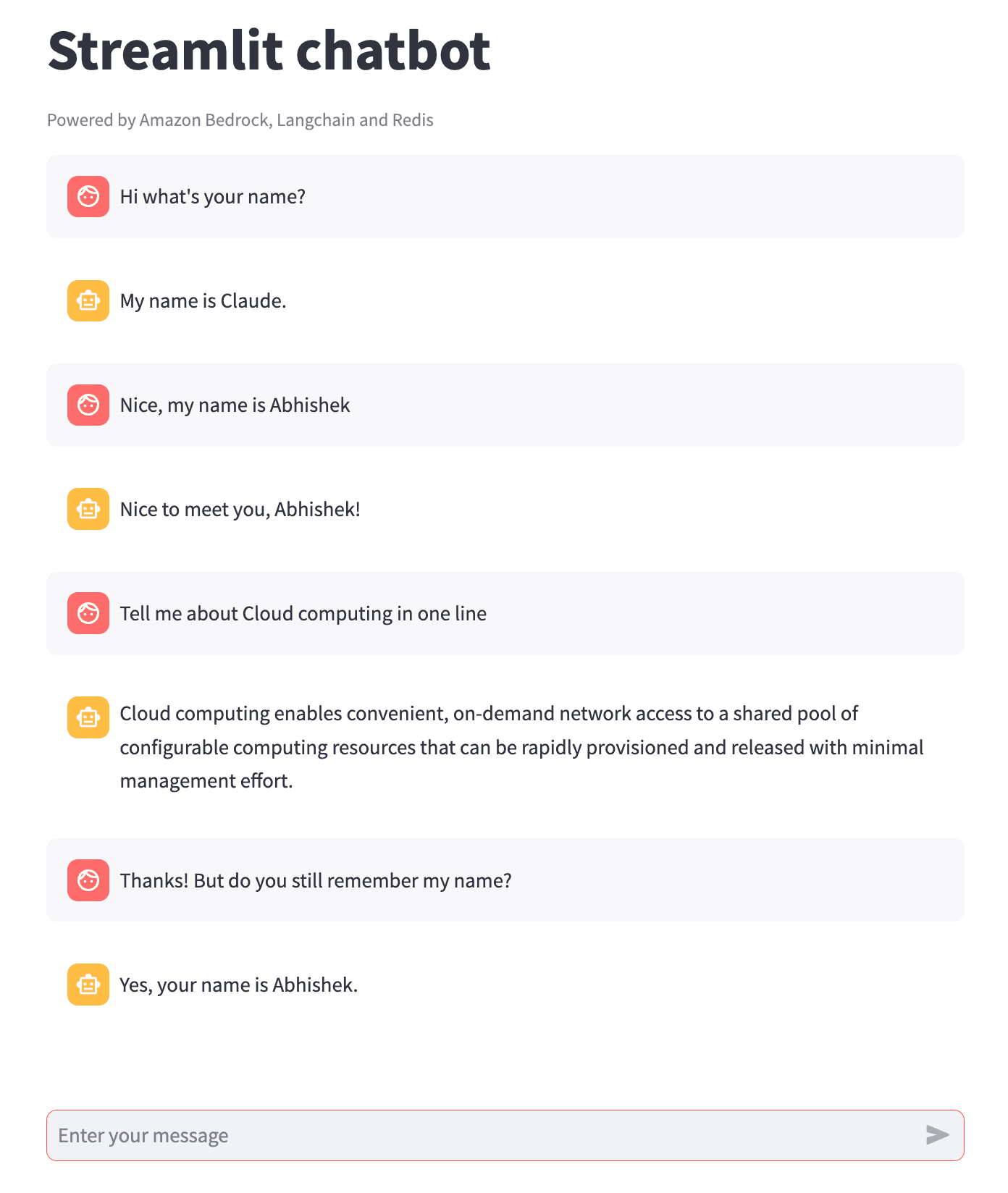

Open http://localhost:8080 in your browser and start chatting! The application uses the Anthropic Claude model on Amazon Bedrock as the LLM and an ElastiCache Serverless instance to persist the chat messages exchanged during a particular session.

Use `redis-cli` to access the ElastiCache Redis instance from EC2 (or Cloud9) and introspect the data structure used by LangChain for storing chat history.

Don't run `keys *` in a production Redis instance - this is just for demonstration purposes.
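The data structure being introspected here can be sketched in a few lines: one Redis List per session, with each message stored as a JSON string. This sketch uses an in-memory stand-in (the hypothetical `FakeRedis` class) rather than a real connection. It assumes the `message_store:` key prefix, newest-first `LPUSH` ordering, and a JSON shape with `type` and `data.content` fields; treat these as illustrative rather than as LangChain's exact implementation.

```python
import json

KEY_PREFIX = "message_store:"  # assumed prefix, matching the keys shown in this post


class FakeRedis:
    """In-memory stand-in that mimics only the Redis List commands used below."""

    def __init__(self):
        self._lists = {}

    def lpush(self, key, value):
        # LPUSH inserts at the head of the list
        self._lists.setdefault(key, []).insert(0, value)
        return len(self._lists[key])

    def lrange(self, key, start, stop):
        # Redis treats stop as inclusive; this sketch handles only stop >= 0 or -1
        items = self._lists.get(key, [])
        end = len(items) if stop == -1 else stop + 1
        return items[start:end]

    def type(self, key):
        return "list" if key in self._lists else "none"


def add_message(client, session_id, role, content):
    payload = json.dumps({"type": role, "data": {"content": content}})
    client.lpush(KEY_PREFIX + session_id, payload)


def get_messages(client, session_id):
    raw = client.lrange(KEY_PREFIX + session_id, 0, -1)
    # The list is newest-first, so reverse it to get chronological order
    return [json.loads(item) for item in reversed(raw)]


client = FakeRedis()
session = "example-session"  # hypothetical session id
add_message(client, session, "human", "Hello")
add_message(client, session, "ai", "Hi! How can I help?")
history = get_messages(client, session)
```

Against a real cache, the corresponding `redis-cli` commands are `TYPE` and `LRANGE` on the session's key.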

You should see a key similar to `"message_store:d5f8c546-71cd-4c26-bafb-73af13a764a5"` (the name will differ in your case).

Check its type with `type message_store:d5f8c546-71cd-4c26-bafb-73af13a764a5` - you will notice that it's a Redis List.

To view the list contents, use the `LRANGE` command.

LangChain stores the chat history under a key of the form `message_store:<session_id>` - each Redis List is mapped to the Streamlit session. I also found the Streamlit component-based approach to be quite intuitive, and it is pretty extensive as well.

Any opinions in this post are those of the individual author and may not reflect the opinions of AWS.