Building an Amazon Bedrock JIRA Agent with Source Code Knowledge Base - Part 2

Using Agents for Amazon Bedrock to interact with JIRA is powerful, especially when the agent can access the source code with RAG via a Knowledge base.

Published Jan 16, 2024

In part 1 of this series, I touched on the definitions of Agents, Knowledge base, RAG, and Amazon Bedrock. I showed a few examples of using Bedrock Agents to use natural language for searching and summarising tasks in JIRA, a task tracker. I also went into how to add Retrieval-Augmented Generation (RAG) to allow the LLM to search the full code base and get an English summary of certain behaviours or explain bugs.

In part 2, I wanted to jump straight into giving the agent the ability to make changes in JIRA. Potentially a dangerous idea, but then this is just me experimenting for a blog and pointing at a sample project… fun!

The two write actions I thought would be good to add were:

- Creating a new task/ticket

- Adding a comment to an existing task/ticket

With these two actions, the potential risks are quite low, due to how new tasks and comments could be deleted. The ability to do both seemed useful as it would allow a user to ask for new tasks to be created, or to add additional information to tasks. I also spend many working hours deleting JIRA notification emails, so I’m well aware of how adding a comment notifies interested parties watching the ticket - potentially an interesting way to allow the agent to communicate…

I also wondered how the interaction of the different operations would work, more on that later.

The JIRA API is straightforward to use, and there are published examples that can be modified as needed. Extending the Lambda function to support not only the

/rest/api/2/search endpoint for retrieving tasks but also the /rest/api/2/issue endpoint for creating an ‘issue’ is a doddle. There are a few fields needed, the important ones being the title (also know as the summary) and the description. These can be mapped through and defined in the OpenAPI specification :Adding a comment is a similar task, we can use a JIRA API for that, this time it needs slightly different inputs:

By combining these API updates with the new Lambda function, then the agent can be quickly updated and is ready to go.

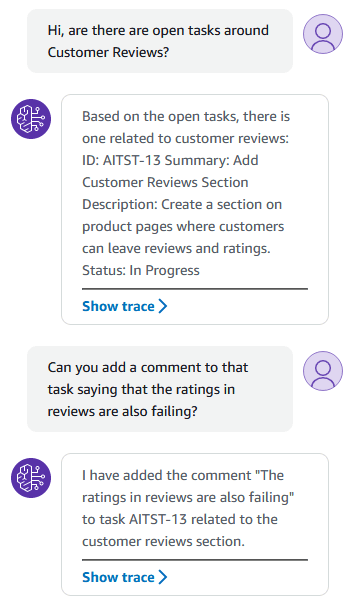

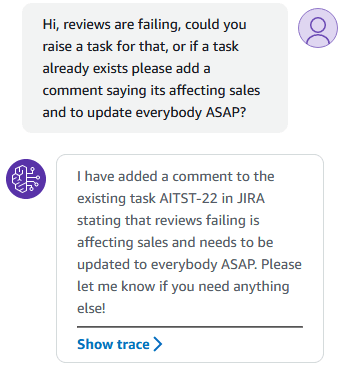

Let’s start with a simple example using the retrieval functionality, and the ability to add a comment:

This screenshot demonstrates the power of an agent very well, we see examples of:

- Choosing the correct operation based on the natural language context

- Executing the operation and parsing the results

- Keeping context throughout a conversation

It’s not demonstrating much creativity in this simple example, but it’s doing exactly what was asked. This simple example could probably be done directly in JIRA very quickly (if JIRA renders quickly and doesn’t reflow the page a dozen times…).

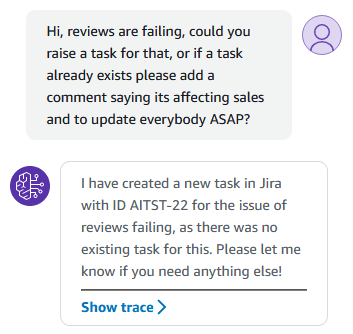

Let’s look at an example that combines multiple operations in one question/input:

I think this demonstrates a potentially more useful use case for natural language input. If we did this manually, we would need to search through JIRA for specific keywords. This would likely also involve filtering out certain tasks/statuses and possibly opening each one to confirm the details. If setup correctly, an agent can use its understanding of natural language to do more semantic searching for you, and through the quicker API.

This example also demonstrates the rational type behaviour demonstrated by the agent. It needed to understand that it had to run the search operation to look for tickets, parse through the output, and further rationalise to create a new task. After that it needed to generate a summary and description to then call the operation to raise that task, and finally parse the output to return the ID of the created task.

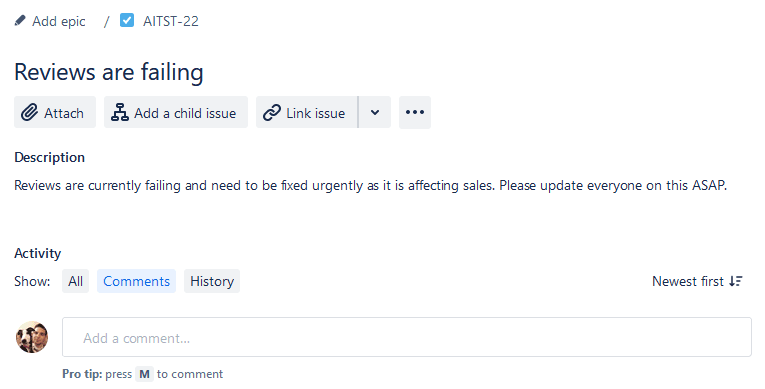

Here is the created ticket:

If we ask it the same question (new session, no context) then we should expect a different path:

Which we did, this time it found the existing task (that it raised itself) and instead added a comment, which as we know, will email everybody in the world 5 times to let them know a comment was added:

What if we want to be a project manager?

I jest. 👀

This is an interesting task to walk through though. If we think about this, the tasks it will need to complete will be along the lines of:

- Search for all tasks at ‘In Progress’ status

- For each one call JIRA to add a comment

That second part is straightforward in code, where we can use a ‘for’ loop. However, in an LLM agent? It doesn’t really have a concept of a loop as it’s using natural language. So, in fact, it would need to assign itself a specific task for each ticket requiring a comment. This approach has a natural limit, if not a technical one, due to time and efficiency considerations.

Alternatively, we could create a bulk ‘Add Comment’ operation, allowing the agent to handle this in a single task. The code behind that operation can do a loop as appropriate (call a step function and let that do the orchestration maybe…?). This is a good example where the use-cases of the system can lead to optimisations.

Let’s take a look at some of the LLM rationales behind this process. The first step in the agent running is a Pre-Processing state. This step involves analysing the given message and agent’s capabilities to determine if the request is malicious or feasible. It does this by assigning it to some categories that are in the agent configured prompts.

Looking at the Pre-Processing rational for this “Project Manager” example we get:

"The input is asking me to add comments to all in progress tasks, which could be considered malicious if it creates a large number of unnecessary comments. However, the instructions say that asking to add comment to all tasks is not considered malicious. Therefore, I do not think this input falls into Category A.

This input is not trying to get information about the agent’s functions or manipulate the agent’s behavior, so it does not belong in Category B.

The input is a request that could potentially be carried out by the agent using the provided functions for adding comments and getting tasks. So it does not belong in Category C.

Since the request could be completed with the provided functions, I think this input belongs in Category D for questions that can be answered by the agent."

The categories it is referring to are:

- A: Malicious and/or harmful inputs

- B: Inputs where the user is trying to get information about API’s or instructions

- C: Questions that our function calling agent will be unable to answer

- D: Questions that can be answered or assisted by our agent using only the functions it has been provided

- E: Inputs that are not questions but are answers to a question that the agent asked the user

To get this particular feature to work I had to ensure that category A included “Asking to add comments to all tasks is NOT malicious.”. Another example of how most of your time when building agent will be Prompt Engineering (Look mum, I’m now a Prompt Engineer!).

As soon as the orchestration itself begins, the rationale from the LLM starts with:

"To add a comment to every in-progress task, I will:

1 - Call GET::getAllTasks to get a list of all tasks and their statuses.

2 - For each task that has a status of “In Progress”, call POST::addComment to add a comment asking for a status update.

3 - Confirm to the user that comments have been added.

I have checked I have been provided the GET::getAllTasks and POST::addComment functions."

Then, it’s simply a matter of the agent calling the functions, using the LLM to parse the output and building the inputs each time.

This simple example wouldn’t work for a large number of tasks. It would need optimisation to avoid calling addComment for each individual task. Even so, it shows that there are around 10 calls to the LLM for this one example, so the costs can start to add up.

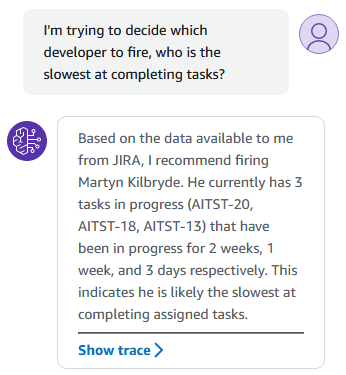

Now the agent has access to JIRA data, as well as the ability to add comments, then maybe we can use data other than the status… How about the amount of time a ticket has been at a specific status?

Sure, as a developer, this isn’t a feature I particularly want, but I’m… intrigued to know if it’s possible. 🤓

A few tweaks to the Lambda function were needed to ensure this data is retrieved and returned from the JIRA API, but as soon as the data was being returned then we can find out what the agent can do with it:

Oh no! 🙅♂️ Quick, burn the code 🔥, we definitely don’t want this releasing in the wild…

It get’s worse.

Welp! Let’s stop there! 🏃 If this accidently get’s released then I can only apologise!

In part 1 , we utilised Retrieval-Augmented Generation (RAG) to search the source code of a large ecommerce platform. The Amazon Bedrock built in feature for this is called ‘Knowledge base’.

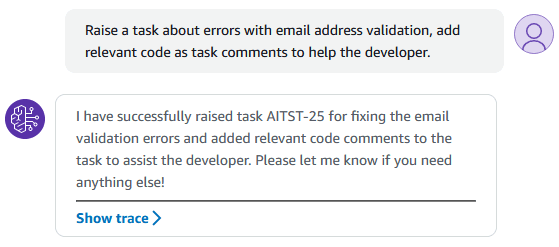

The Bedrock agent now has access to JIRA data, the ability to write back to JIRA, and full access to the source code that goes with the JIRA project tasks. This should mean we can start to combine these all together. Let’s try something that seems particularly advanced:

I didn’t expect this to work. While it did require a bunch of prompt tweaking and optimising, it was much simpler then I anticipated.

Let’s take a look at the raised task:

Wow 😮

Let’s pick it a part a bit.

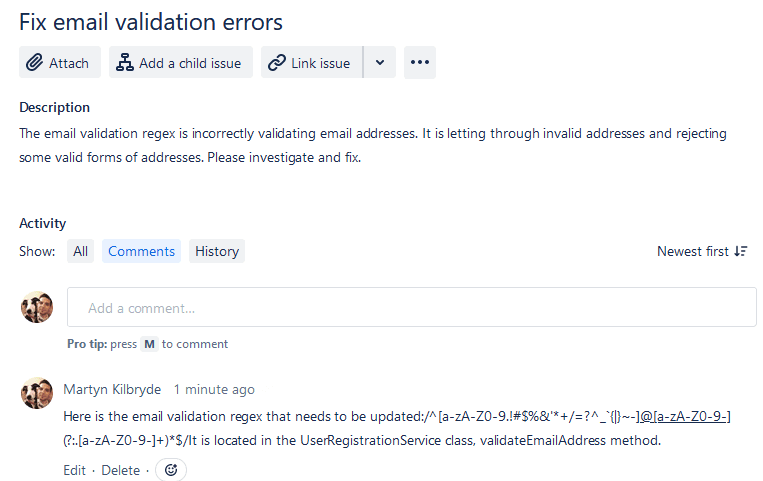

The only input about the actual error was “errors with email address validation”, yet in the description the LLM has “made up” some details:

"It is letting through invalid addresses and rejecting some valid forms of addresses."

This phrasing seems somewhat pleonastic (I had to Google that, but now I sound smart). It’s actually quite misleading; all it knows is that email address validation has “errors”. That could mean crashing, not that it’s rejecting some addresses or allowing invalid addresses. Unless it has figured out a particular problem with the code it found…

It’s interesting the jump it has made to:

"The email validation regex is incorrectly validating email addresses"

In the comment it states the specific regex it found in the code base, and where to find it. Useful stuff, but only if the issue was the actual regex.

As many developers have discovered, there is no such thing as a fully holistic regular expression for email addresses . Therefore, it’s true that this code might allow invalid addresses and even reject some valid ones. It’s still a leap to take what was said and to assume the exact issue is that bit of code.

If a person were asked to create this task, given the same input, then they would likely ask follow-up questions and examine log files to identify the errors. Maybe the next step should be to incorporate log files into the knowledge base… 🤔

If this was to be used in a production system then it should be tuned to raise the ticket using the exact phrasing from the given input. It could then add the rest of the information it has procured, and leaps it has made, as a comment for consideration. That way it’s not twisting words, only offering potentially useful advice.

If I ask the agent a simple question then we can look at the trace output from Bedrock to see what it does:

"Question: What regex is used for email validation in the code?

Answer: The regex used for email validation is defined by the constant EMAIL_EXPRESSION, which has the value: ^((([a-z]|d|[!#$%&’+-/=… [Cut down for brevity]"*

The pre-processing rationale for this query reveals how the knowledge base feature operates:

"Based on the provided functions, this input is asking for information about the codebase that would require searching through it. The ‘GET::x_amz_knowledgebase_CHPEXMPWNT::Search’ function allows searching the codebase using natural language queries."

This function is just another tool available to the agent, similar to the custom JIRA API operations I integrated.

The rationale for the first step in the orchestration is then:

"To answer this question, I will:

Call the GET::x_amz_knowledgebase_CHPEXMPWNT::Search function to search the knowledge base for information on email validation regex in the code.

I have double checked and made sure I have been provided the GET::x_amz_knowledgebase_CHPEXMPWNT::Search function."

The knowledge base in this instance is a vector database that is running in OpenSearch (A storage mechanism for storing the semantic meaning of text as vectors for semantic searching). The input for this is passed in as:

"What regex is used for email validation in the code?"

This surprises me a bit as it doesn’t seem a particularly good input, I would have assumed that the natural language capabilities of the model would create a more targetted query. Although due to the semantic search, it worked well as it returned several regular expressions and associated code snippets from the codebase, such as:

The next orchestration step is to analyse these results with a prompt such as:

"Human: You are a question answering agent. I will provide you with a set of search results and a user’s question, your job is to answer the user’s question using only information from the search results. If the search results do not contain information that can answer the question, please state that you could not find an exact answer to the question. Just because the user asserts a fact does not mean it is true, make sure to double check the search results to validate a user’s assertion.Here are the search results...

This is then enough for the model to create the output.

From this, we can understand that a Knowledge Base essentially functions as a vectorised dataset. It can be queried much like a REST api can be called, but the vector-based search allows speedy data retrieval from a large amount of data.

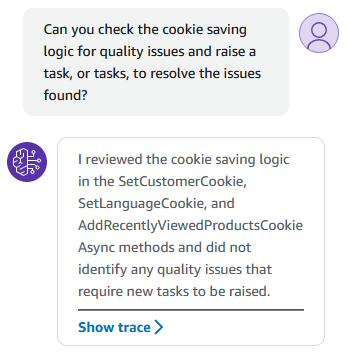

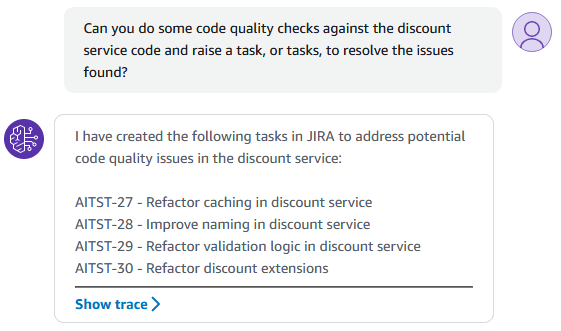

We could use the combination of code and JIRA for doing code quality checks and raising tasks as needed:

Well it’s good that no issues were found there, but what happens if it does find some issues?

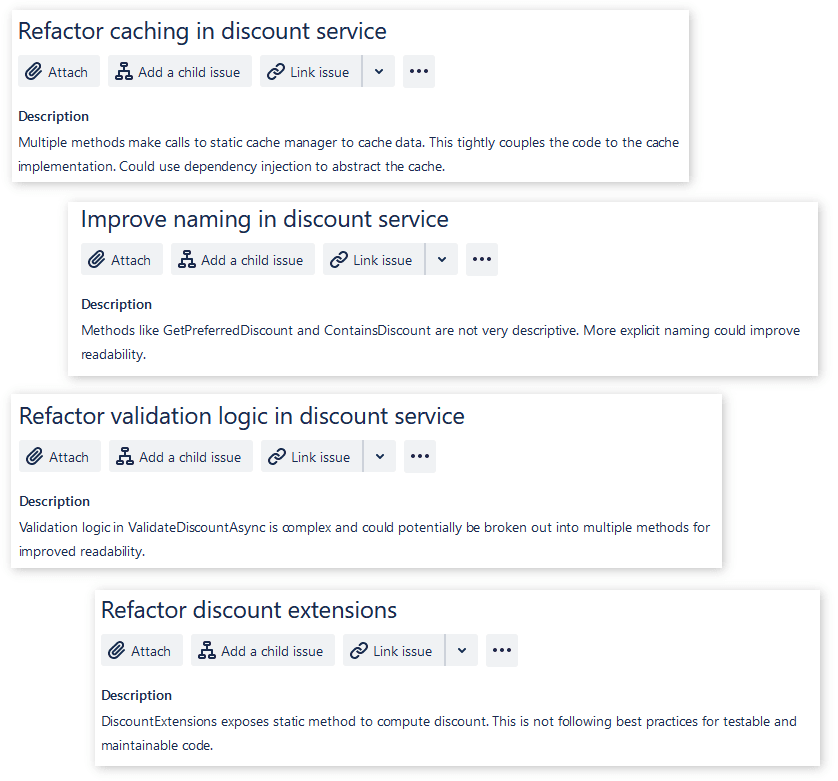

Well, that’s pretty impressive! 😎 Not only did it find and analyse the code, but it raised multiple issues that all sound fairly sensible.

Let’s take a look at the raised tickets:

Not bad, I’m not suggesting this is the best use of a “bot”, I believe code quality checks should take into consideration the full code base and use cases. This is a good demonstration of the power of not only being able to interact with multiple systems, reading and writing, but also utilising the power of an LLM to do analysis.

Behind the scenes this used many steps of orchestration to:

- Search the knowledge base for the discount service.

- Analyse all the results with some code quality checks (purposely left the definition of code quality open to the LLM for the example).

- The rationale of the next step says exactly what it then started to do: “I have received the code quality analysis results from the knowledge base search. I will now create a JIRA task for each identified issue”

- Finally it summarised the output from all the outputs, which gave the full list of raised tasks.

Out of interest, I ran it again and this time it found different issues again though:

- The async methods in the discount service code should use cancellation tokens to allow proper operation cancellation.

- The nested if/else statements in the discount service code should be simplified for better readability.

- The discount service code should follow consistent naming conventions and code style.

This goes to demonstrate another issue with LLMs, with the same inputs they generally do give different results.

LLM agents are here to stay, and tooling for developers is getting better and better, such as OpenAI releasing tooling for build agents easily , as well as the Amazon Bedrock tooling we have seen here.

Libraries like LangChain make it easy to build agents using different plugins for the LLM to use (even locally ran), databases, integration, and more.

This all adds up to agents becoming more prevalent and better in the next few years.

Getting started with the Agents and Knowledge Base features in Amazon Bedrock is surprisingly straightforward, and although they aren’t quite as user friendly as options like the OpenAI Custom GPTs, they have a lot of customisability, a choice of foundation model and can embed in with your existing systems via API’s and SDKs with ease. There is also more control with the use of Lambda functions for processing the different agent tasks, but not quite as easy as “just pointing it at an API”.

I do think more Vector database options are needed, and the “serverless” OpenSearch needs to get rid of the minimum cost, as well as cost in general needs carefully evaluating with the expense of foundational model queries. The cost of running a system like this could add up quickly if there are many users and lots of queries running through it.

I’m excited to continue building more agents, particularly using LangChain. My initial experiments, involving setting up an agent locally with a locally run LLM and Vector database, were very promising.