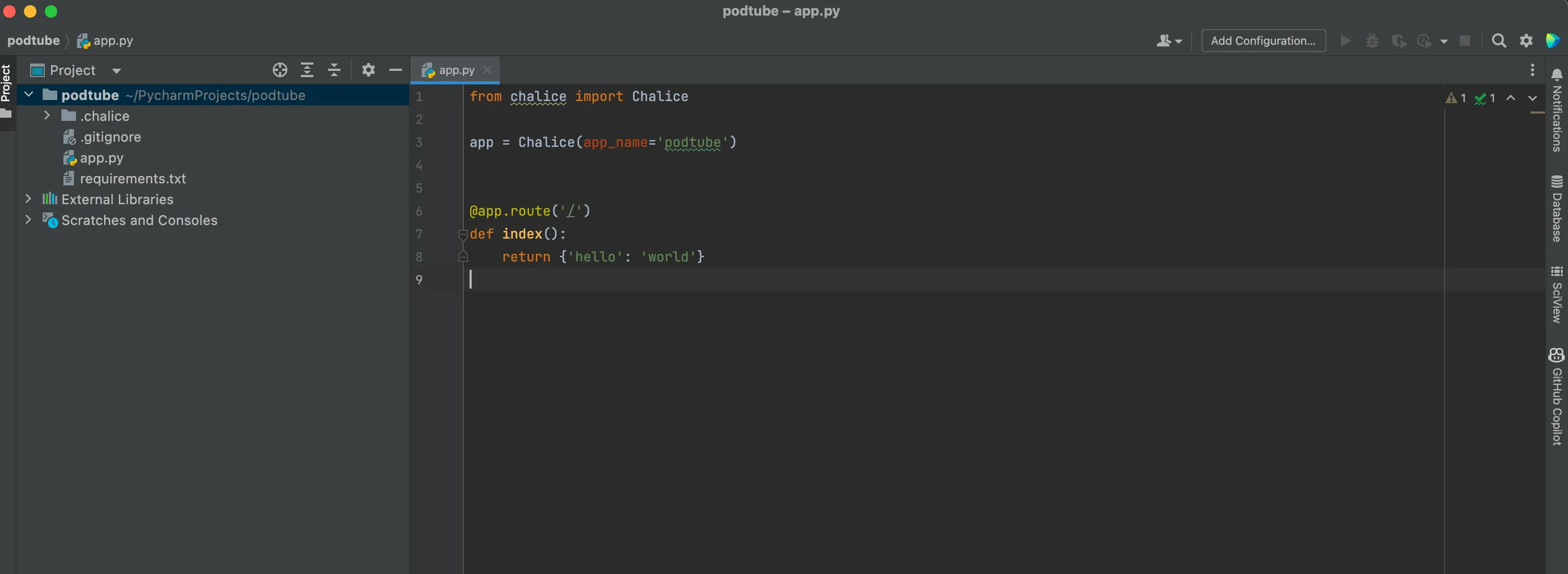

AWS Chalice Introduction

Developing applications has never been easier!

    pip install chalice

To deploy the application:

    chalice deploy

To delete it again:

    chalice delete

We will use the config.json file so that we can differentiate between the test and production environments.

In the .chalice directory, you will find the config file. Here, we can separate configurations for the test and production environments. We can specify details such as how much RAM each Lambda function should use, or set environment variables. The configuration example below includes all of these details; it is more complex than a minimal setup, but illustrative. These are the settings we can define for now.


    {
      "version": "2.0",
      "app_name": "podtube",
      "automatic_layer": true,
      "stages": {
        "dev": {
          "lambda_memory_size": 10240,
          "reserved_concurrency": 100,
          "lambda_timeout": 900,
          "api_gateway_stage": "api",
          "autogen_policy": false,
          "api_gateway_policy_file": "policy-dev.json",
          "environment_variables": {
            "AWS_ACCESS_KEY_ID_IG": "BLA",
            "AWS_ACCESS_SECRET_KEY_IG": "SECRET-BLA"
          }
        },
        "test": {
          "lambda_memory_size": 1024,
          "reserved_concurrency": 100,
          "lambda_timeout": 900,
          "api_gateway_policy_file": "policy-test.json"
        }
      }
    }

To deploy a specific stage, pass its name:

    chalice deploy --stage test

Chalice deploys with the AWS credentials stored on your machine. To check which profiles are available:

    tail -100 ~/.aws/credentials

To deploy with a specific profile:

    chalice deploy --profile profile-name
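To make the stage mechanism more concrete, here is a minimal sketch of how a stage's values can be thought of as overriding the top-level ones. The helper `resolve_stage_settings` is hypothetical and not part of Chalice, and the top-level `lambda_timeout` of 60 is an assumption added only to show the override.

```python
import json

# Hypothetical helper, not a Chalice API: it only illustrates that
# stage-level keys take precedence over top-level keys in config.json.
def resolve_stage_settings(config: dict, stage: str) -> dict:
    # Start from the top-level keys, leaving out the "stages" mapping itself.
    settings = {key: value for key, value in config.items() if key != "stages"}
    # Overlay whatever the requested stage defines.
    settings.update(config.get("stages", {}).get(stage, {}))
    return settings

# Trimmed-down config; the top-level lambda_timeout is an assumed value.
CONFIG_TEXT = """
{
  "version": "2.0",
  "app_name": "podtube",
  "lambda_timeout": 60,
  "stages": {
    "dev": {"lambda_memory_size": 10240},
    "test": {"lambda_memory_size": 1024, "lambda_timeout": 900}
  }
}
"""

config = json.loads(CONFIG_TEXT)
dev = resolve_stage_settings(config, "dev")
test = resolve_stage_settings(config, "test")
print(dev["lambda_memory_size"], dev["lambda_timeout"])    # 10240 60
print(test["lambda_memory_size"], test["lambda_timeout"])  # 1024 900
```

The dev stage inherits the assumed top-level timeout, while the test stage replaces it with its own value.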

A pure Lambda function receives the event and a context object:

    @app.lambda_function()
    def search(event, context):
        return {'hello': 'world'}

The context object exposes the following attributes:

- function_name – Lambda name.
- function_version – Function version.
- invoked_function_arn – Amazon Resource Name (ARN) used to call the function. Indicates whether the caller specified a version number or alias.
- memory_limit_in_mb – The amount of memory allocated for the function.
- aws_request_id – Identifier of the invocation request.
- log_group_name – Log group for the function.
- log_stream_name – Log stream for the function instance.
- identity – (mobile apps) Information about the Amazon Cognito identity that authorized the request.
  - cognito_identity_id – Authenticated Amazon Cognito ID.
  - cognito_identity_pool_id – Amazon Cognito identity pool.
- client_context – (mobile apps) The client context provided by the client application to Lambda.
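As a rough illustration of reading these attributes, the sketch below collects a few of them into a dictionary. `summarize_context` is a made-up helper, and `fake_context` is only a stand-in for the object Lambda passes at runtime; all of its values are invented.

```python
from types import SimpleNamespace

def summarize_context(context) -> dict:
    """Pick a few of the attributes listed above off a Lambda context object."""
    return {
        "function_name": context.function_name,
        "function_version": context.function_version,
        "memory_limit_in_mb": context.memory_limit_in_mb,
        "aws_request_id": context.aws_request_id,
        "log_group_name": context.log_group_name,
    }

# Stand-in for the real context object; every value here is made up.
fake_context = SimpleNamespace(
    function_name="podtube-dev-search",
    function_version="$LATEST",
    memory_limit_in_mb="1024",
    aws_request_id="00000000-0000-0000-0000-000000000000",
    log_group_name="/aws/lambda/podtube-dev-search",
)

summary = summarize_context(fake_context)
print(summary["function_name"], summary["memory_limit_in_mb"])
```

Inside a real function the same helper could be called with the `context` argument, for example to add the request ID to log messages.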

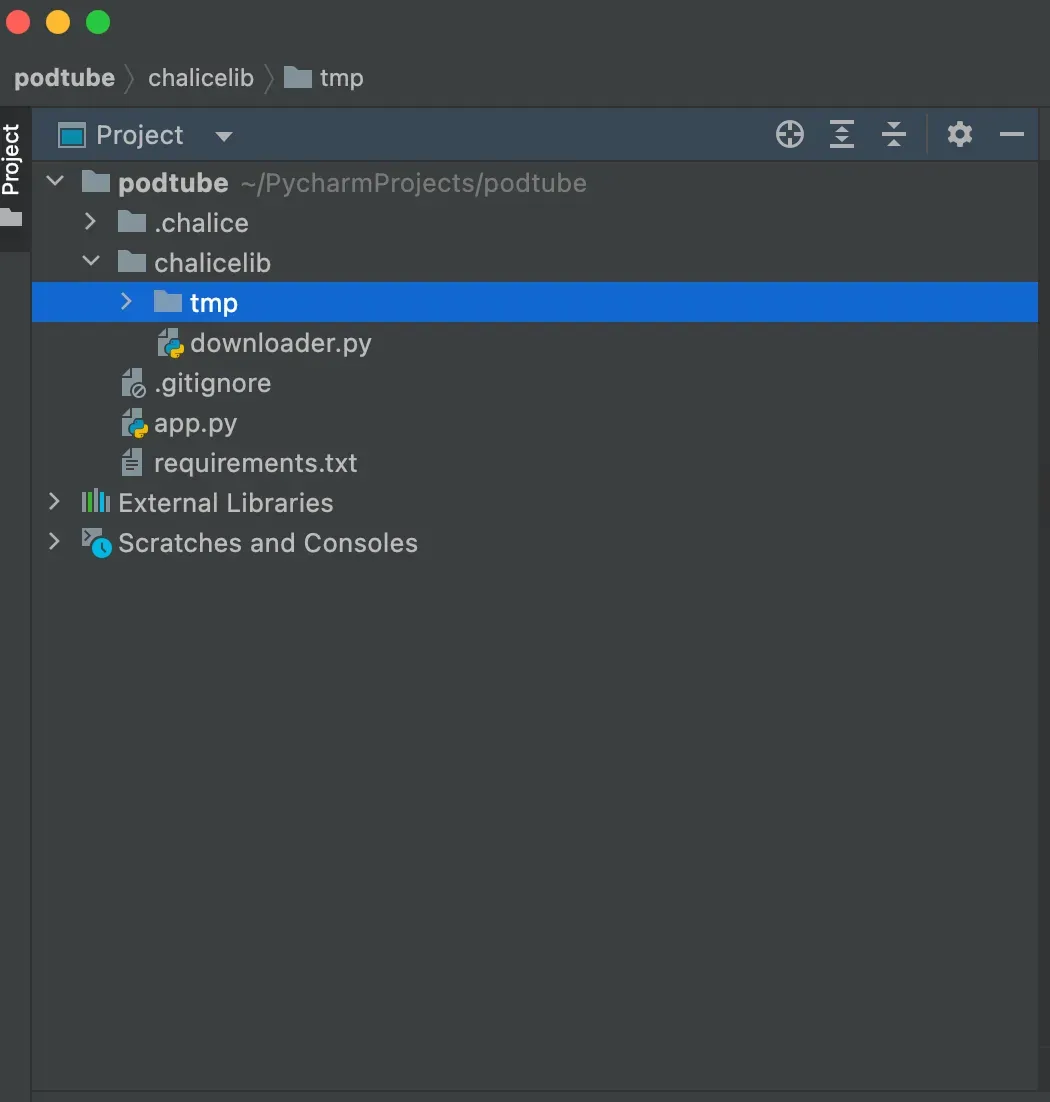

Additional modules go in the chalicelib directory, and anything in that directory is automatically included in the distribution package.

Install pytube:

    pip install pytube

and pin it in requirements.txt:

    pytube==12.1.0


The downloader lives in chalicelib/downloader.py:

    import os
    from abc import ABC, abstractmethod

    from pytube import YouTube, exceptions


    class VideoDownloader(ABC):
        @abstractmethod
        def download(self, url: str, path: str):
            pass


    class YoutubeDownloader(VideoDownloader):
        def __init__(self):
            self.out_file = None

        def download(self, url: str, path: str = "./tmp"):
            if url is None:
                raise ValueError("URL is None")
            try:
                # Take the first audio-only stream and save it under `path`.
                video = YouTube(url).streams.filter(only_audio=True).first()
                self.out_file = video.download(output_path=path)
                self.convert_to_mp3()
            except exceptions.RegexMatchError:
                raise ValueError("Invalid URL")
            except exceptions.VideoUnavailable:
                raise ValueError("Video is unavailable")

        def convert_to_mp3(self):
            # Only the extension changes; the audio is not re-encoded.
            base, ext = os.path.splitext(self.out_file)
            new_file = base + '.mp3'
            os.rename(self.out_file, new_file)
            # Keep out_file pointing at the renamed file.
            self.out_file = new_file
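The rename step can be tried in isolation. The sketch below is a standalone variant of it, run on a throwaway file; the function name `rename_to_mp3` and the file name `episode.mp4` are made up for the example. It shows that the file's bytes stay the same and only the extension changes.

```python
import os
import tempfile

def rename_to_mp3(path: str) -> str:
    """Swap the file extension for .mp3 and return the new path."""
    base, _ext = os.path.splitext(path)
    new_path = base + ".mp3"
    os.rename(path, new_path)
    return new_path

with tempfile.TemporaryDirectory() as tmp_dir:
    source = os.path.join(tmp_dir, "episode.mp4")  # made-up file name
    with open(source, "wb") as handle:
        handle.write(b"audio bytes")

    renamed = rename_to_mp3(source)
    renamed_name = os.path.basename(renamed)
    renamed_exists = os.path.exists(renamed)
    source_exists = os.path.exists(source)
    with open(renamed, "rb") as handle:
        content_unchanged = handle.read() == b"audio bytes"

print(renamed_name, renamed_exists, source_exists, content_unchanged)
# episode.mp3 True False True
```

Returning the new path lets the caller know where the file ended up, which is handy when the file is uploaded afterwards.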

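Send the data in the request body:

- If the values are not human readable, such as serialized binary data.
- When you have lots of arguments.

In Chalice, a JSON body is available as app.current_request.json_body:

    @app.route('/users', methods=['POST'])  # route path assumed
    def users():
        body = app.current_request.json_body
        username = body["username"]
        return {'name': username}

Send the data as query parameters:

- When you want to be able to call the endpoint manually while developing the code, e.g. with curl.
- If you are already sending a different content type like application/octet-stream.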

1

2

3

4

def users():

name = app.current_request.query_params.get('name')

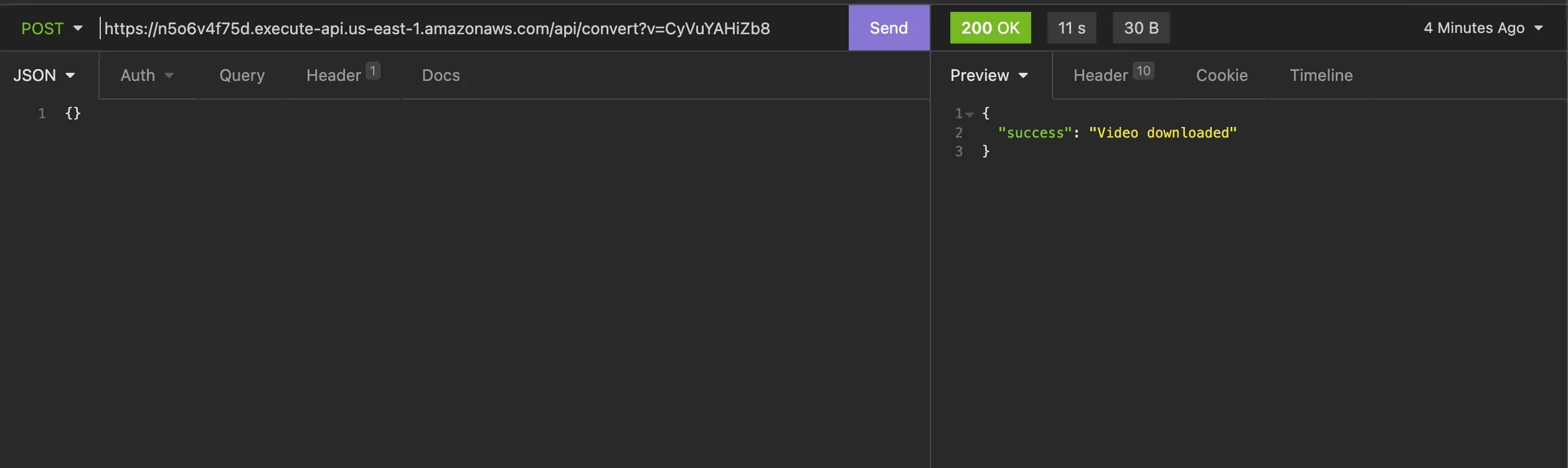

return {'name': name}0.0.0.0/convert?v=b-I2s5zRbHgchalice local1
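Once the local server is running, the two request styles discussed above can also be prepared from a script. The sketch below uses only the standard library and builds the pieces without sending anything. It assumes the local server listens on 127.0.0.1 port 8000, and the helper names and the user name are made up for illustration.

```python
import json
from urllib.parse import urlencode

def build_convert_url(video_id: str, host: str = "127.0.0.1", port: int = 8000) -> str:
    """Query-parameter style: the video ID travels in the URL."""
    query = urlencode({"v": video_id})
    return f"http://{host}:{port}/convert?{query}"

def build_json_body(username: str) -> bytes:
    """Request-body style: the value travels in a JSON document."""
    return json.dumps({"username": username}).encode("utf-8")

url = build_convert_url("b-I2s5zRbHg")
body = build_json_body("alice")  # "alice" is a made-up user name
print(url)   # http://127.0.0.1:8000/convert?v=b-I2s5zRbHg
print(body)  # b'{"username": "alice"}'
```

The URL can be pasted into a browser or passed to curl, which is the convenience the query-parameter style is meant to offer.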


The application code in app.py:

    from chalice import Chalice
    from chalicelib.downloader import YoutubeDownloader

    app = Chalice(app_name='podtube')


    @app.route('/convert')
    def convert():
        # query_params is None when the request has no query string
        if app.current_request.query_params is not None:
            if 'v' not in app.current_request.query_params:
                return {'error': 'Parameter v is missing'}
            else:
                url_extension = app.current_request.query_params.get('v')
                yt = YoutubeDownloader()
                yt.download("https://www.youtube.com/watch?v={url_part}".format(url_part=url_extension))
                return {'success': 'Video downloaded'}
        else:
            return {'error': 'No video URL provided'}
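The parameter checks in the handler can be pulled out into a plain function, which makes them easy to test without running Chalice. This is only one possible arrangement; `resolve_video_url` is a hypothetical helper that mirrors the branches above and returns either a URL or an error payload.

```python
from typing import Optional, Tuple

YOUTUBE_WATCH_URL = "https://www.youtube.com/watch?v={video_id}"

def resolve_video_url(query_params: Optional[dict]) -> Tuple[Optional[str], Optional[dict]]:
    """Return (url, None) on success or (None, error_payload) on failure."""
    # No query string at all.
    if query_params is None:
        return None, {"error": "No video URL provided"}
    # A query string is present, but without the v parameter.
    if "v" not in query_params:
        return None, {"error": "Parameter v is missing"}
    return YOUTUBE_WATCH_URL.format(video_id=query_params["v"]), None

print(resolve_video_url(None))
print(resolve_video_url({"name": "x"}))
print(resolve_video_url({"v": "b-I2s5zRbHg"}))
```

The handler would then only need to call this helper and either return the error payload or pass the URL to the downloader.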


A small wrapper around the boto3 S3 client handles storage:

    import boto3
    import botocore.exceptions


    class S3:
        def __init__(self, bucket_name):
            self.s3 = boto3.client("s3")
            self.bucket_name = bucket_name
            if not self.check_if_bucket_exists():
                print("okay, bucket does not exist")
                self.create_bucket()
            else:
                print("bucket exists")

        def check_if_bucket_exists(self):
            # head_bucket raises ClientError if the bucket is missing or not accessible
            try:
                self.s3.head_bucket(Bucket=self.bucket_name)
                return True
            except botocore.exceptions.ClientError:
                return False

        def create_bucket(self):
            # Outside us-east-1, create_bucket also needs a CreateBucketConfiguration
            print("Creating bucket: " + self.bucket_name)
            self.s3.create_bucket(Bucket=self.bucket_name)

        def upload_file(self, file_path, s3_path):
            self.s3.upload_file(file_path, self.bucket_name, s3_path)

        def download_file(self, s3_path):
            # Returns a presigned GET URL that is valid for one hour
            return self.s3.generate_presigned_url(
                ClientMethod="get_object",
                Params={
                    "Bucket": self.bucket_name,
                    "Key": s3_path,
                },
                ExpiresIn=3600,
            )
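One way the downloader and the S3 wrapper might be wired together is sketched below. It is an assumption about how the pieces could fit, not the article's own handler: `convert_and_share` is a hypothetical function, it assumes that `out_file` points at the final file after `download`, and the two fake classes stand in for the real downloader and S3 wrapper so the flow can run without network access or AWS credentials.

```python
import os

def convert_and_share(video_id, downloader, storage, work_dir="/tmp"):
    """Download the audio, upload it, and return a link to the uploaded file."""
    url = "https://www.youtube.com/watch?v={}".format(video_id)
    downloader.download(url, work_dir)
    local_path = downloader.out_file      # assumed to be the final file path
    key = os.path.basename(local_path)    # use the file name as the object key
    storage.upload_file(local_path, key)
    return storage.download_file(key)

class FakeDownloader:
    """Stand-in with the same download(url, path) / out_file interface."""
    def __init__(self):
        self.out_file = None

    def download(self, url, path):
        self.out_file = os.path.join(path, "episode.mp3")  # made-up file name

class FakeStorage:
    """Stand-in with the same upload_file / download_file interface."""
    def __init__(self):
        self.uploaded = []

    def upload_file(self, file_path, s3_path):
        self.uploaded.append((file_path, s3_path))

    def download_file(self, s3_path):
        return "https://example.invalid/" + s3_path  # placeholder link

storage = FakeStorage()
link = convert_and_share("b-I2s5zRbHg", FakeDownloader(), storage)
print(link)  # https://example.invalid/episode.mp3
```

Passing the downloader and the storage object in as arguments keeps the function independent of pytube and boto3, so it can be exercised locally before deploying.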


The policy file grants access to CloudWatch Logs and S3:

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Action": [
            "logs:CreateLogGroup",
            "logs:CreateLogStream",
            "logs:PutLogEvents"
          ],
          "Resource": "arn:aws:logs:*:*:*"
        },
        {
          "Effect": "Allow",
          "Action": [
            "s3:*"
          ],
          "Resource": "*"
        }
      ]
    }
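The policy above allows every S3 action on every resource, which is convenient for a demo. As a hedged alternative, the sketch below generates a narrower policy limited to a single bucket. The bucket name `podtube-audio` is made up, and the chosen actions are an assumption about what the S3 wrapper needs (bucket check and creation, upload, presigned download); verify them against your own usage before relying on them.

```python
import json

def build_policy(bucket_name: str) -> dict:
    """Build an IAM policy document scoped to one bucket (illustrative only)."""
    bucket_arn = "arn:aws:s3:::" + bucket_name
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": [
                    "logs:CreateLogGroup",
                    "logs:CreateLogStream",
                    "logs:PutLogEvents",
                ],
                "Resource": "arn:aws:logs:*:*:*",
            },
            {
                # Bucket-level actions apply to the bucket ARN itself.
                "Effect": "Allow",
                "Action": ["s3:CreateBucket", "s3:ListBucket"],
                "Resource": bucket_arn,
            },
            {
                # Object-level actions apply to the keys inside the bucket.
                "Effect": "Allow",
                "Action": ["s3:PutObject", "s3:GetObject"],
                "Resource": bucket_arn + "/*",
            },
        ],
    }

policy = build_policy("podtube-audio")  # hypothetical bucket name
print(json.dumps(policy, indent=2))
```

The printed JSON can be saved as the policy file in the .chalice directory.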


Finally, config.json switches off the auto-generated policy and points to our own policy file:

    {
      "version": "2.0",
      "app_name": "podtube",
      "automatic_layer": true,
      "stages": {
        "dev": {
          "api_gateway_stage": "api",
          "autogen_policy": false,
          "iam_policy_file": "policy.json"
        }
      }
    }

Thank you for your patience. I hope this will be helpful for your project!