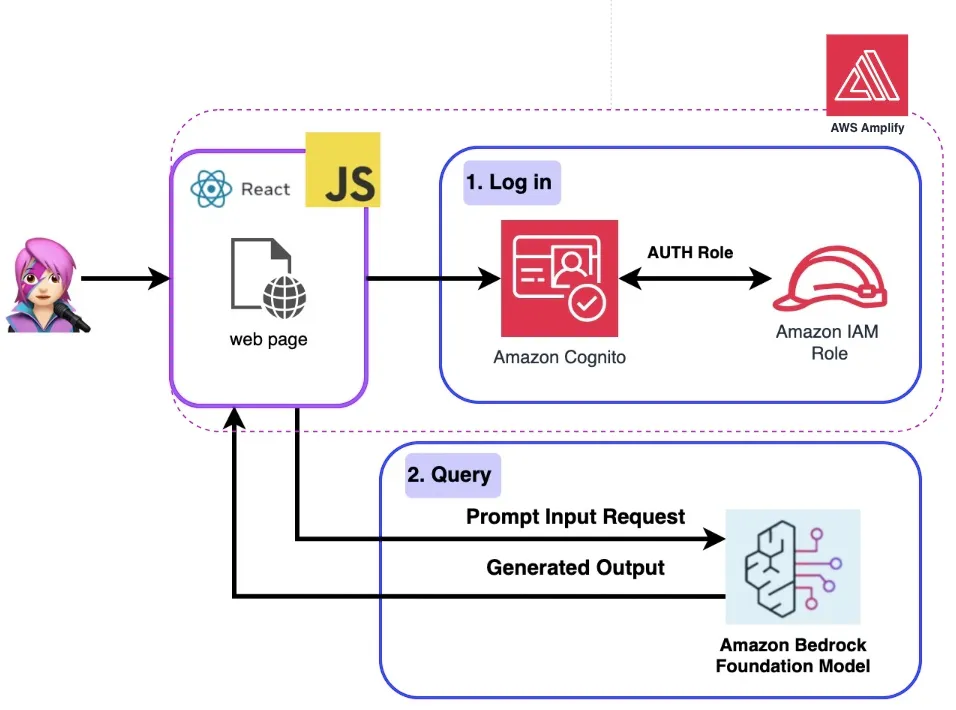

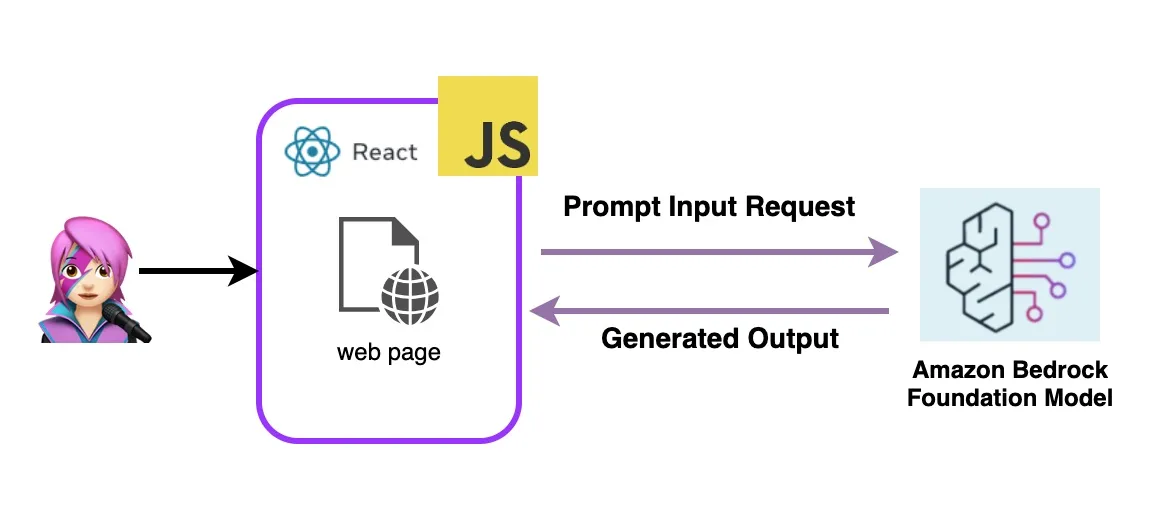

Add Generative AI to a JavaScript Web App

Learn how to integrate generative AI with minimal code changes, using JavaScript and Amazon Cognito credentials to invoke the Amazon Bedrock API from a React single-page app.

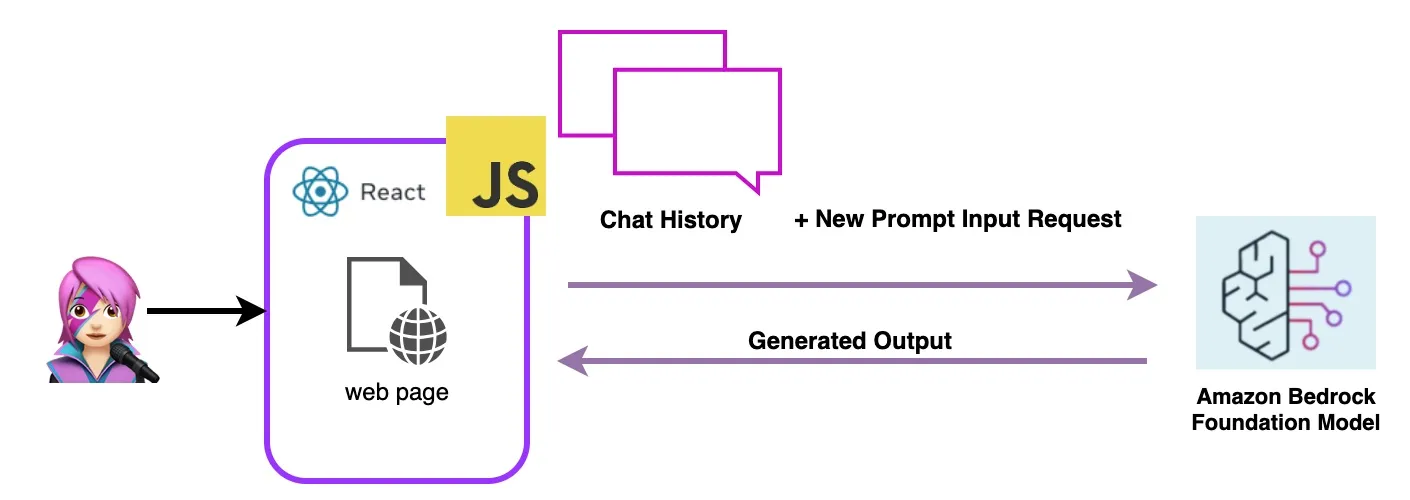

How Does This Application Work?

An instance of a Large Language Model

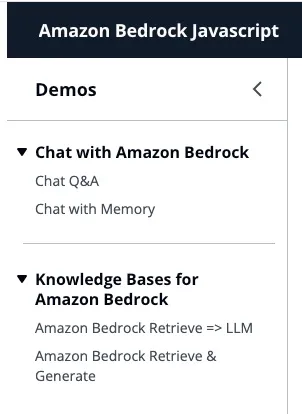

First: Chat With Amazon Bedrock

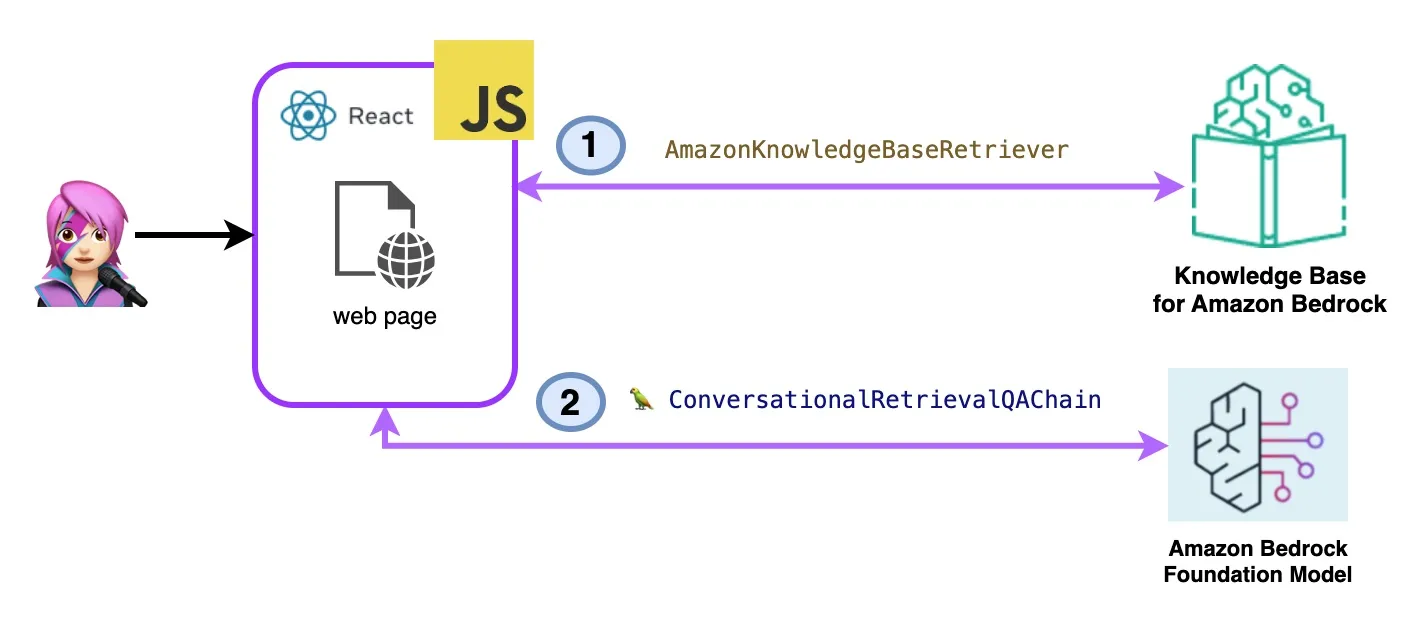

Second: Knowledge Bases for Amazon Bedrock

Let's Deploy a React Generative AI Application With Amazon Bedrock and the AWS JavaScript SDK

Step 1 - Enable AWS Amplify Hosting:

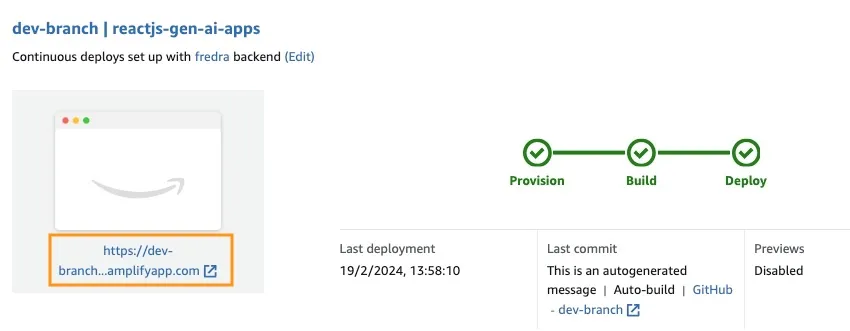

Step 2 - Access the App URL:

Let's Try the React Generative AI Application With the Amazon Bedrock JavaScript SDK

🚨🚨🚨🚨🚨 This blog is outdated, check the new version Add Generative AI to a JavaScript Web App 2.0 🚨🚨🚨🚨🚨🚨

    {
      policyName: "amplify-permissions-custom-resources",
      policyDocument: {
        Version: "2012-10-17",
        Statement: [
          {
            Resource: "*",
            Action: ["bedrock:InvokeModel*", "bedrock:List*", "bedrock:Retrieve*"],
            Effect: "Allow",
          }
        ]
      }
    }

- Chat with Amazon Bedrock

- Knowledge Bases for Amazon Bedrock
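To make the wildcard actions in the IAM policy above more concrete, here is a minimal sketch of which Bedrock API actions those three patterns cover. It is a simplified stand-in for IAM's matching (it only handles `*`), and the helper names `patternToRegExp` and `isActionAllowed` are hypothetical, not part of any AWS library.

```javascript
// Illustrative only: a simplified stand-in for IAM wildcard matching.
// The patterns are copied from the policy; the helper names are hypothetical.
const allowedPatterns = ["bedrock:InvokeModel*", "bedrock:List*", "bedrock:Retrieve*"];

// Convert an IAM-style pattern ("*" matches any run of characters) into a RegExp.
function patternToRegExp(pattern) {
  const escaped = pattern
    .split("*")
    .map((part) => part.replace(/[.*+?^${}()|[\]\\]/g, "\\$&"))
    .join(".*");
  // IAM action names are matched case-insensitively.
  return new RegExp(`^${escaped}$`, "i");
}

// True when at least one pattern matches the action name.
function isActionAllowed(action, patterns = allowedPatterns) {
  return patterns.some((pattern) => patternToRegExp(pattern).test(action));
}

console.log(isActionAllowed("bedrock:InvokeModelWithResponseStream")); // true
console.log(isActionAllowed("bedrock:RetrieveAndGenerate")); // true
console.log(isActionAllowed("bedrock:CreateKnowledgeBase")); // false
```

The policy therefore allows invoking models (including streaming), listing resources such as knowledge bases, and retrieving from them, but not creating or modifying Bedrock resources.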

    import { BedrockAgentClient } from "@aws-sdk/client-bedrock-agent"
    import { BedrockAgentRuntimeClient } from "@aws-sdk/client-bedrock-agent-runtime"

    import { fetchAuthSession } from "aws-amplify/auth";

    export const getModel = async () => {
      // Amplify helper that returns the session of the currently signed-in user
      const session = await fetchAuthSession();
      const model = new Bedrock({
        model: "anthropic.claude-instant-v1", // model ID; you can try others if you want
        region: "us-east-1", // app region
        streaming: true, // return the response as a stream
        credentials: session.credentials, // user credentials that allow invoking the Bedrock service
        // limit generation to 1000 tokens;
        // temperature = 1 gives more creative, freer output
        modelKwargs: { max_tokens_to_sample: 1000, temperature: 1 },
      });
      return model;
    };
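LangChain's `Bedrock` class builds the request for you, but it can help to see what the `modelKwargs` above turn into. The following is a minimal sketch of a request body in the Anthropic text-completions format that Claude Instant accepts on Bedrock; the helper name `buildClaudeTextBody` is hypothetical and is not part of LangChain or the AWS SDK.

```javascript
// Hypothetical helper: builds a JSON body in the Anthropic text-completions
// format (prompt wrapped in Human/Assistant turns, plus sampling parameters).
function buildClaudeTextBody(userText, { maxTokens = 1000, temperature = 1 } = {}) {
  return JSON.stringify({
    // The text-completions format expects the prompt to start with a Human
    // turn and end with an open Assistant turn.
    prompt: `\n\nHuman: ${userText}\n\nAssistant:`,
    max_tokens_to_sample: maxTokens, // upper bound on generated tokens
    temperature, // higher values give more varied output
  });
}

const body = buildClaudeTextBody("What is Amazon Bedrock?");
console.log(JSON.parse(body).max_tokens_to_sample); // 1000
```

Lowering `temperature` toward 0 makes answers more repeatable, which is often preferable for question answering over documents.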

    import { Bedrock } from "@langchain/community/llms/bedrock/web";
    import { ConversationChain } from "langchain/chains";
    import { BufferMemory } from "langchain/memory";

    // create a memory object
    const memory = new BufferMemory({ humanPrefix: "H", memoryKey: "chat_history" });

The chain reads the `chat_history` key in the memory to get past dialogs, so you use that key as `memoryKey` in `BufferMemory`.
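To illustrate what a buffer memory does with `humanPrefix` and `memoryKey`, here is a minimal sketch of the idea. `SimpleBufferMemory` is a hypothetical stand-in written for this example; it is not LangChain's `BufferMemory` implementation, and it only mirrors the concept of storing turns and exposing them under a named key.

```javascript
// Illustrative stand-in for a buffer memory; not LangChain's implementation.
class SimpleBufferMemory {
  constructor({ humanPrefix = "Human", aiPrefix = "AI", memoryKey = "history" } = {}) {
    this.humanPrefix = humanPrefix;
    this.aiPrefix = aiPrefix;
    this.memoryKey = memoryKey;
    this.turns = [];
  }

  // Store one question/answer exchange.
  saveContext(input, output) {
    this.turns.push({ input, output });
  }

  // Return the whole transcript under the configured key.
  loadMemoryVariables() {
    const transcript = this.turns
      .map((turn) => `${this.humanPrefix}: ${turn.input}\n${this.aiPrefix}: ${turn.output}`)
      .join("\n");
    return { [this.memoryKey]: transcript };
  }
}

const demoMemory = new SimpleBufferMemory({ humanPrefix: "H", memoryKey: "chat_history" });
demoMemory.saveContext("Hi", "Hello! How can I help?");
console.log(demoMemory.loadMemoryVariables().chat_history);
// H: Hi
// AI: Hello! How can I help?
```

A chain whose prompt contains a `{chat_history}` placeholder can then be filled from the object this returns, which is why the key name has to match.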

    import { BedrockAgentClient, ListKnowledgeBasesCommand } from "@aws-sdk/client-bedrock-agent"

    // List the knowledge bases available to the signed-in user in this region
    export const getBedrockKnowledgeBases = async () => {
      const session = await fetchAuthSession()
      const client = new BedrockAgentClient({ region: "us-east-1", credentials: session.credentials })
      const command = new ListKnowledgeBasesCommand({})
      const response = await client.send(command)
      return response.knowledgeBaseSummaries
    }
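The summaries returned by `getBedrockKnowledgeBases` can feed a selector in the UI. Below is a minimal sketch of that mapping, assuming each summary carries `knowledgeBaseId`, `name`, and `status`; the helper `toSelectOptions` and the sample data are hypothetical and only illustrate the shape.

```javascript
// Hypothetical helper: map knowledge base summaries to <select> options,
// keeping only knowledge bases whose status is ACTIVE.
function toSelectOptions(summaries = []) {
  return summaries
    .filter((kb) => kb.status === "ACTIVE")
    .map((kb) => ({ value: kb.knowledgeBaseId, label: kb.name }));
}

// Made-up sample data shaped like response.knowledgeBaseSummaries.
const sampleSummaries = [
  { knowledgeBaseId: "KB0000001", name: "product-docs", status: "ACTIVE" },
  { knowledgeBaseId: "KB0000002", name: "draft-kb", status: "CREATING" },
];

console.log(toSelectOptions(sampleSummaries));
// [ { value: 'KB0000001', label: 'product-docs' } ]
```

Filtering on status avoids offering a knowledge base that is still being created and cannot answer queries yet.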

    import { AmazonKnowledgeBaseRetriever } from "@langchain/community/retrievers/amazon_knowledge_base";

    export const getBedrockKnowledgeBaseRetriever = async (knowledgeBaseId) => {
      const session = await fetchAuthSession();
      const retriever = new AmazonKnowledgeBaseRetriever({
        topK: 10, // return top 10 documents
        knowledgeBaseId: knowledgeBaseId,
        region: "us-east-1",
        clientOptions: { credentials: session.credentials }
      })
      return retriever
    }
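The retriever returns up to `topK` documents, and their text eventually becomes the context passed to the model. Here is a minimal sketch of that step, assuming each retrieved document exposes its text as `pageContent` (as LangChain documents do); `buildContext` and the sample documents are hypothetical.

```javascript
// Hypothetical helper: join the text of the retrieved documents into one
// context string, keeping at most `limit` documents.
function buildContext(documents, limit = 10) {
  return documents
    .slice(0, limit)
    .map((doc, index) => `[${index + 1}] ${doc.pageContent.trim()}`)
    .join("\n\n");
}

// Made-up documents shaped like retriever results.
const sampleDocs = [
  { pageContent: "Amazon Bedrock is a fully managed service. ", metadata: {} },
  { pageContent: "Knowledge bases connect models to your data.", metadata: {} },
];

console.log(buildContext(sampleDocs, 1));
// [1] Amazon Bedrock is a fully managed service.
```

A larger `topK` gives the model more material to draw on but also a longer prompt, so the value is a trade-off between recall and token usage.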

    import { ConversationalRetrievalQAChain } from "langchain/chains";

    export const getConversationalRetrievalQAChain = async (llm, retriever, memory) => {
      const chain = ConversationalRetrievalQAChain.fromLLM(llm, retriever);
      chain.memory = memory;

      // Modify the default prompt to add the Human prefix and Assistant suffix needed by Claude;
      // otherwise you get an exception.
      // This prompt uses the chat history and the last question to formulate a complete standalone question.
      chain.questionGeneratorChain.prompt.template = "Human: " + chain.questionGeneratorChain.prompt.template + "\nAssistant:";

      // This prompt finally answers the question using the retrieved documents.
      chain.combineDocumentsChain.llmChain.prompt.template = `Human: Use the following pieces of context to answer the question at the end. If you don't know the answer, just say that you don't know, don't try to make up an answer.

    {context}

    Question: {question}
    Helpful Answer:
    Assistant:`;

      return chain;
    };
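The prompt changes above amount to wrapping a template in Human/Assistant markers and then filling its placeholders. A minimal sketch of that idea follows; `fillTemplate` and `wrapForClaude` are hypothetical helpers written for this example and are not LangChain APIs.

```javascript
// Hypothetical helper: replace {name} placeholders with values,
// leaving unknown placeholders untouched.
function fillTemplate(template, values) {
  return template.replace(/\{(\w+)\}/g, (match, key) =>
    key in values ? String(values[key]) : match
  );
}

// Wrap an existing template with the Human prefix and Assistant suffix.
function wrapForClaude(template) {
  return `Human: ${template}\nAssistant:`;
}

const qaTemplate = wrapForClaude(
  "Use the following pieces of context to answer the question at the end.\n\n{context}\n\nQuestion: {question}\nHelpful Answer:"
);

const prompt = fillTemplate(qaTemplate, {
  context: "Amazon Bedrock is a fully managed service.",
  question: "What is Amazon Bedrock?",
});
console.log(prompt);
```

The filled prompt starts with `Human:` and ends with an open `Assistant:` turn, which is the shape the Claude text models expect.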

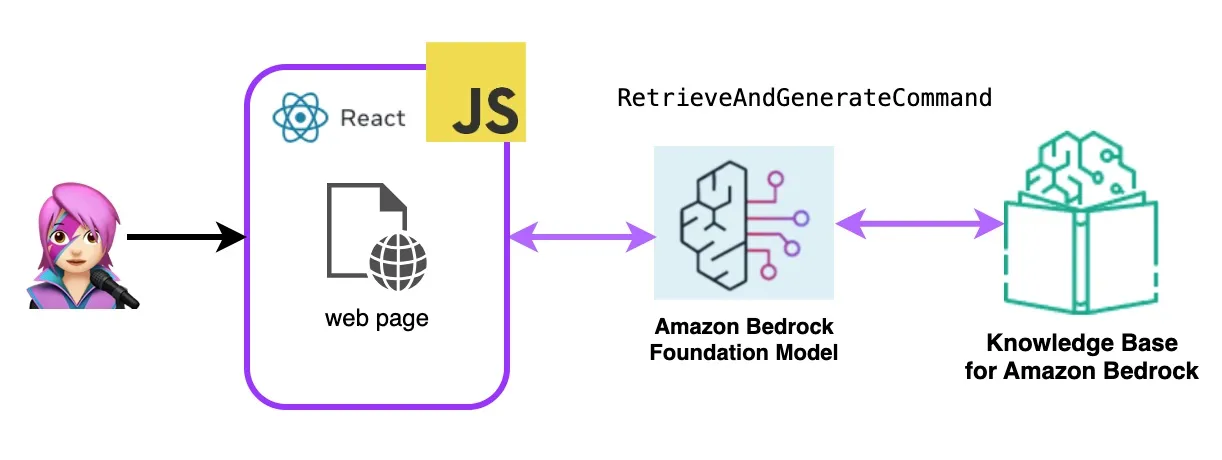

    import { BedrockAgentRuntimeClient, RetrieveAndGenerateCommand } from "@aws-sdk/client-bedrock-agent-runtime"

    export const ragBedrockKnowledgeBase = async (sessionId, knowledgeBaseId, query) => {
      const session = await fetchAuthSession()
      const client = new BedrockAgentRuntimeClient({ region: "us-east-1", credentials: session.credentials });
      const input = {
        input: { text: query }, // user question
        retrieveAndGenerateConfiguration: {
          type: "KNOWLEDGE_BASE",
          knowledgeBaseConfiguration: {
            // your existing knowledge base in the same region/account
            knowledgeBaseId: knowledgeBaseId,
            // ARN of a Bedrock model; in this case we jump to Claude 2.1, the latest. Feel free to use another.
            modelArn: "arn:aws:bedrock:us-east-1::foundation-model/anthropic.claude-v2:1",
          },
        }
      }
      if (sessionId) {
        // you can pass the sessionId to continue a dialog
        input.sessionId = sessionId
      }
      const command = new RetrieveAndGenerateCommand(input);
      const response = await client.send(command)
      return response
    }

- First, fork this repo:

1

https://github.com/build-on-aws/building-reactjs-gen-ai-apps-with-amazon-bedrock-javascript-sdk/forks- Create a New branch:

dev-branch. - Then follow the steps in Getting started with existing code guide.

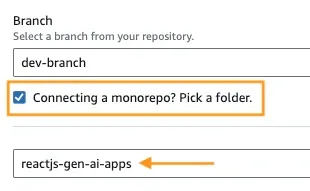

- In Step 1 Add repository branch, select main branch and Connecting a monorepo? Pick a folder and enter

reactjs-gen-ai-appsas a root directory.

Add repository branch - For the next Step, Build settings, select

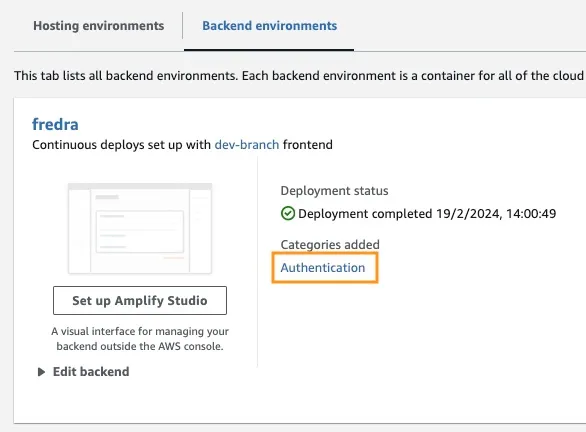

building-a-gen-ai-gen-ai-personal-assistant-reactjs-apps(this app)as App name, in Enviroment select Create a new envitoment and writedev.

- If there is no existing role, create a new one to service Amplify.

- Deploy your app.

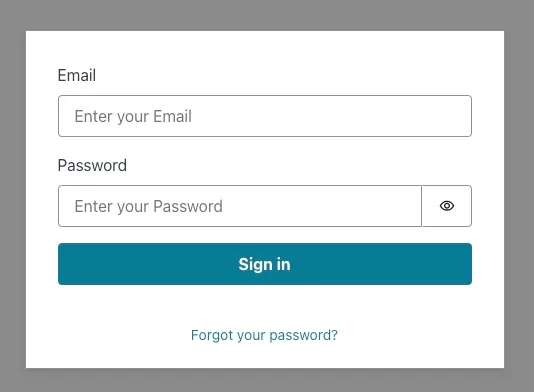

You can enable self sign-up by setting `hideSignUp: false` in App.jsx, but this can introduce a security flaw by giving anyone access to the app.

Any opinions in this post are those of the individual author and may not reflect the opinions of AWS.