Add generative AI to a JavaScript web application

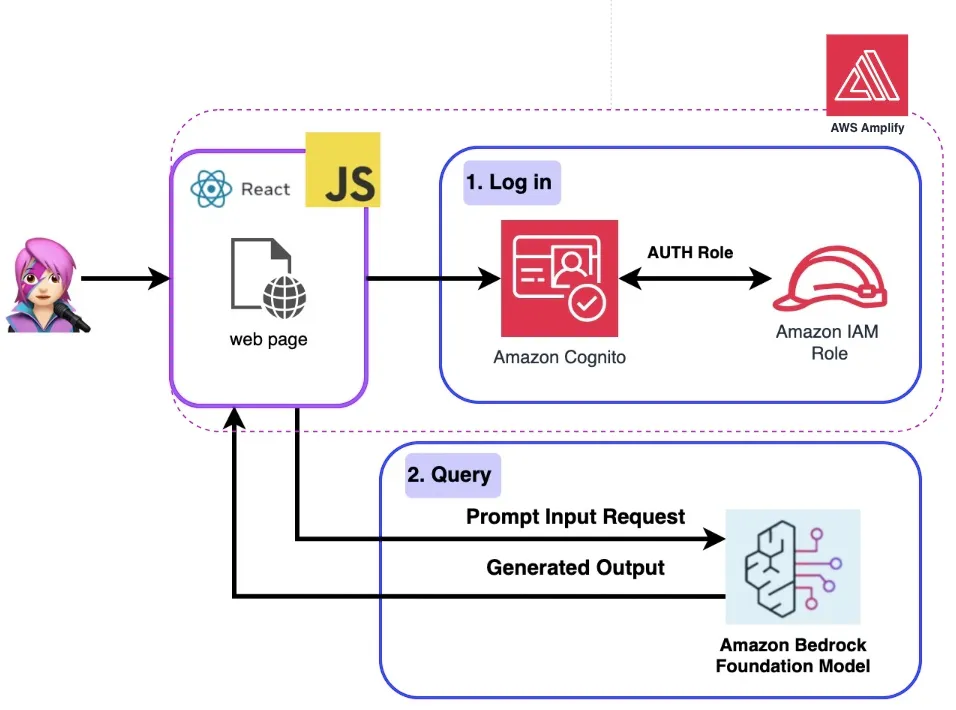

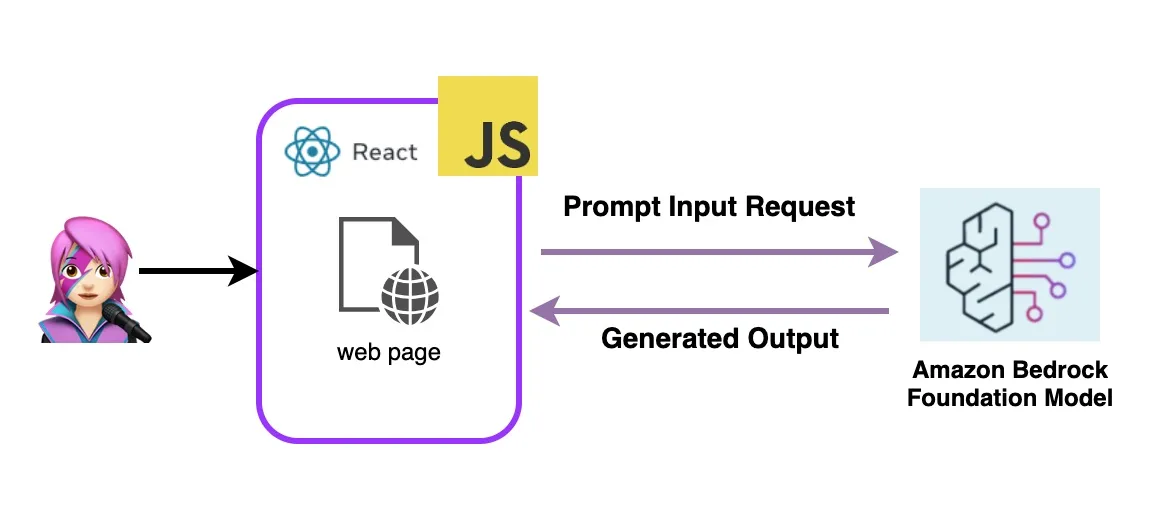

Integrate GenAI with minimal code changes by using JavaScript to invoke the Amazon Bedrock API in a React single-page application.

How does this application work?

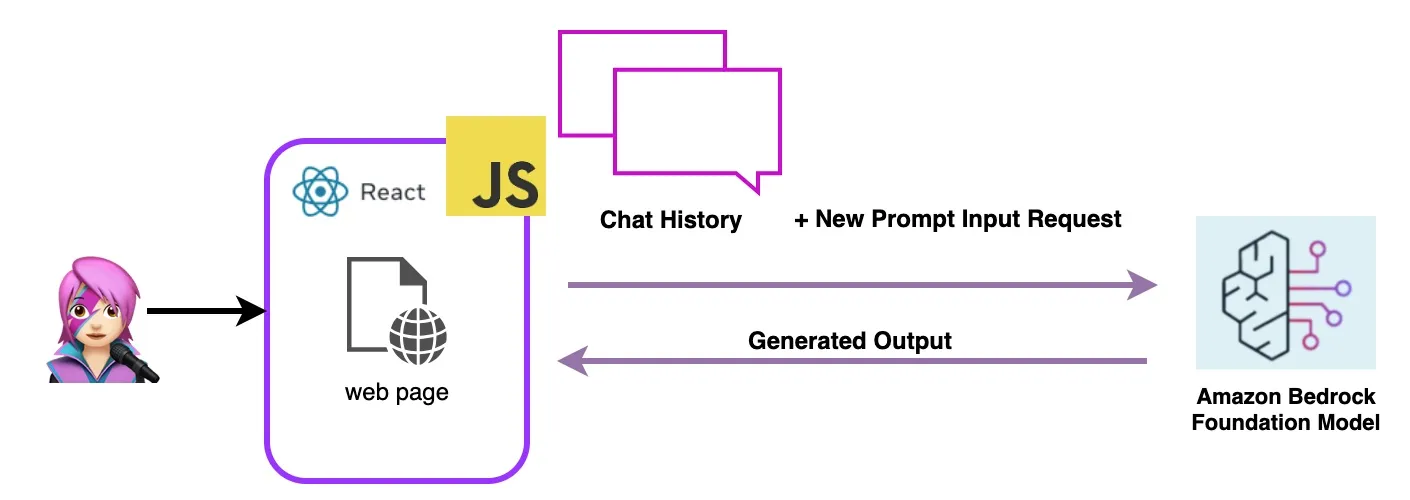

An example of a large language model

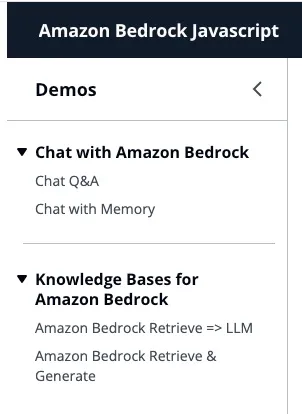

First: chat with Amazon Bedrock

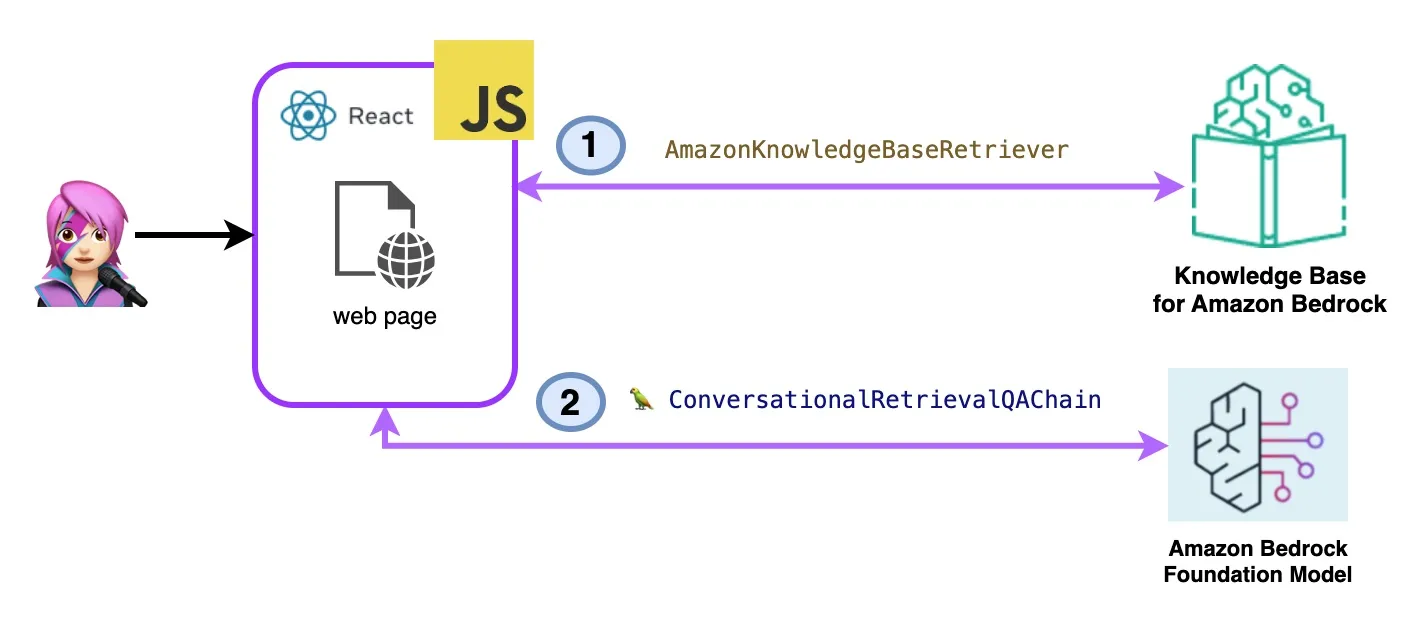

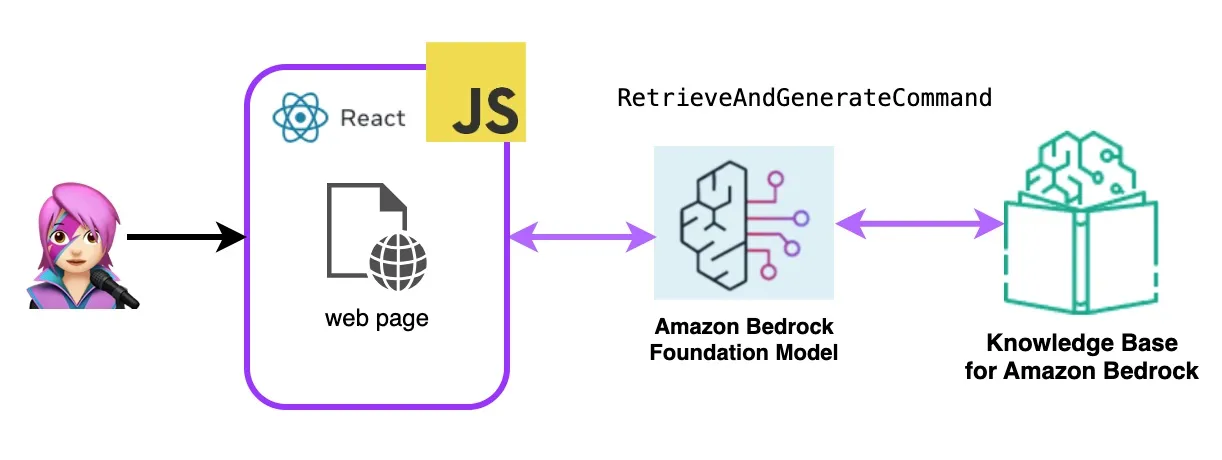
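As a rough illustration of what a chat call to Amazon Bedrock involves, the sketch below builds the input object that `InvokeModelCommand` (from `@aws-sdk/client-bedrock-runtime`) expects and decodes the reply. It assumes an Anthropic Claude model using the Messages request format. The model ID and the helper names `buildInvokeModelInput` and `parseInvokeModelResponse` are hypothetical and do not come from the app's repository.

```javascript
// Hypothetical model ID; the post does not say which model the app uses.
const MODEL_ID = "anthropic.claude-3-haiku-20240307-v1:0";

// Build the input object for InvokeModelCommand from a chat history.
// `history` is an array of { role: "user" | "assistant", text: string }.
function buildInvokeModelInput(history, maxTokens = 512) {
  const messages = history.map(({ role, text }) => ({
    role,
    content: [{ type: "text", text }],
  }));
  return {
    modelId: MODEL_ID,
    contentType: "application/json",
    accept: "application/json",
    // Claude Messages format as used on Bedrock (assumed model family).
    body: JSON.stringify({
      anthropic_version: "bedrock-2023-05-31",
      max_tokens: maxTokens,
      messages,
    }),
  };
}

// Extract the assistant's reply from an InvokeModel response.
// The SDK returns `body` as a Uint8Array holding UTF-8 encoded JSON.
function parseInvokeModelResponse(response) {
  const payload = JSON.parse(new TextDecoder().decode(response.body));
  return payload.content
    .filter((block) => block.type === "text")
    .map((block) => block.text)
    .join("");
}

// With the SDK installed, the call itself would look roughly like:
//   const client = new BedrockRuntimeClient({ region, credentials });
//   const response = await client.send(new InvokeModelCommand(input));
//   const reply = parseInvokeModelResponse(response);
```

Keeping the payload construction separate from the network call makes it possible to append each user and assistant turn to `history` and resend the whole conversation, since the model itself keeps no state between calls.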

Second: Knowledge Bases for Amazon Bedrock
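For querying a knowledge base, one option is the `RetrieveAndGenerateCommand` from `@aws-sdk/client-bedrock-agent-runtime`. The sketch below only builds its input and summarizes its response. The helper names are hypothetical, the knowledge base ID and model ARN must come from your own account, and the post does not confirm that the app uses this exact call.

```javascript
// Build the input for RetrieveAndGenerateCommand.
// `knowledgeBaseId` and `modelArn` are values from your own AWS account.
function buildRetrieveAndGenerateInput(question, knowledgeBaseId, modelArn, sessionId) {
  const input = {
    input: { text: question },
    retrieveAndGenerateConfiguration: {
      type: "KNOWLEDGE_BASE",
      knowledgeBaseConfiguration: { knowledgeBaseId, modelArn },
    },
  };
  // Passing the sessionId from a previous response continues that conversation.
  if (sessionId) input.sessionId = sessionId;
  return input;
}

// Reduce the response to the answer text plus the distinct S3 source URIs cited.
function summarizeRetrieveAndGenerateResponse(response) {
  const sources = [];
  for (const citation of response.citations ?? []) {
    for (const ref of citation.retrievedReferences ?? []) {
      const uri = ref.location?.s3Location?.uri;
      if (uri && !sources.includes(uri)) sources.push(uri);
    }
  }
  return {
    answer: response.output?.text ?? "",
    sources,
    sessionId: response.sessionId,
  };
}

// With the SDK installed, the call itself would look roughly like:
//   const client = new BedrockAgentRuntimeClient({ region, credentials });
//   const response = await client.send(new RetrieveAndGenerateCommand(input));
```

Returning the cited sources alongside the answer lets the UI show where each response came from, which is the main reason to use a knowledge base rather than a plain model call.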

Let's deploy the React generative AI application with Amazon Bedrock and the AWS JavaScript SDK

Step 1: Enable AWS Amplify Hosting:

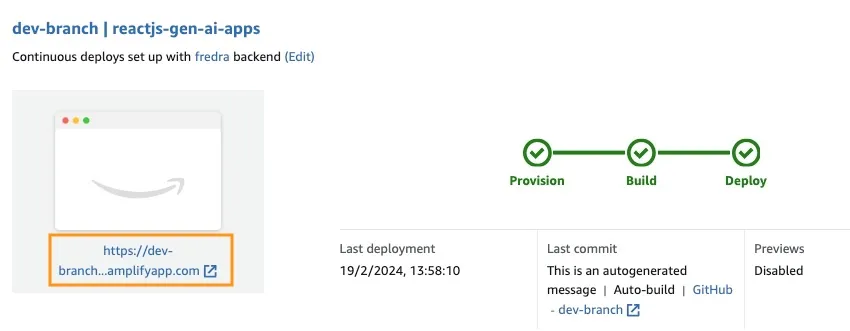

Step 2: Access the application URL:

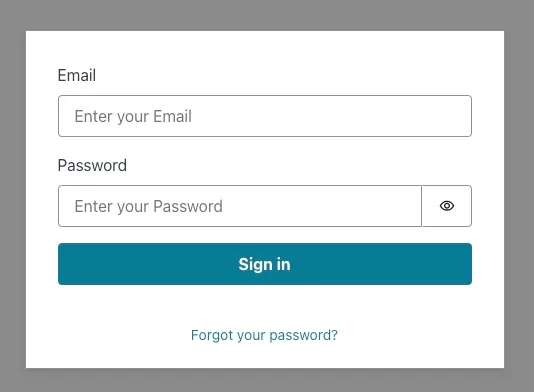

Let's try the React generative AI application with the Amazon Bedrock JavaScript SDK

🚀 Some links to keep learning and building:

- Chat with Amazon Bedrock

- Knowledge Bases for Amazon Bedrock

- primero fork este repositorio:

- Crea un Nueva Branch:

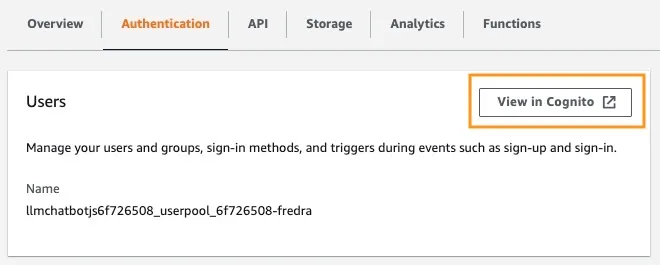

dev-branch. - Sigue los pasos en la guia de Getting started with existing code.

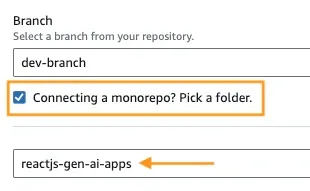

- In Step 1, Add repository branch, select the dev-branch branch, check Connecting a monorepo? Pick a folder, and enter reactjs-gen-ai-apps as the root directory.

Add repository branch - Para el siguiente paso, Crear ajustes, selecciona

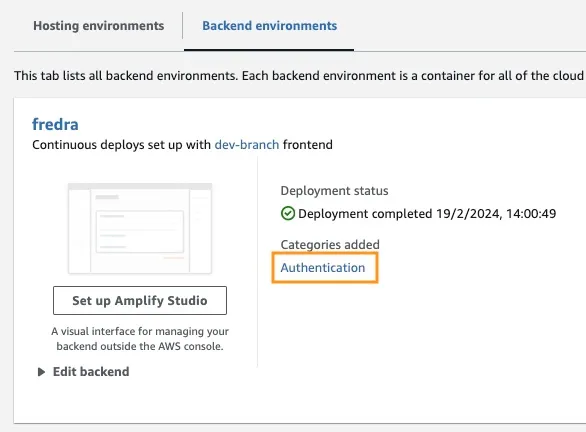

building-a-gen-ai-gen-ai-personal-assistant-reactjs-apps(this app)como nombre de la aplicación, en Envitonment selecciona Crea un nuevo entorno y escribedev.

- If there is no existing role, create a new role to service Amplify.

- Deploy your app.

Setting hideSignUp: false in App.JSX enables user sign-up, but this can introduce a security flaw by giving anyone access to the app.

Any opinions in this post are those of the individual author and may not reflect the opinions of AWS.