Stream Amazon Bedrock responses for a more responsive UI

Pair Amazon Bedrock's `invoke_model_with_response_stream` with a bit of Streamlit's magic to get a typewriter effect and a more responsive UI.

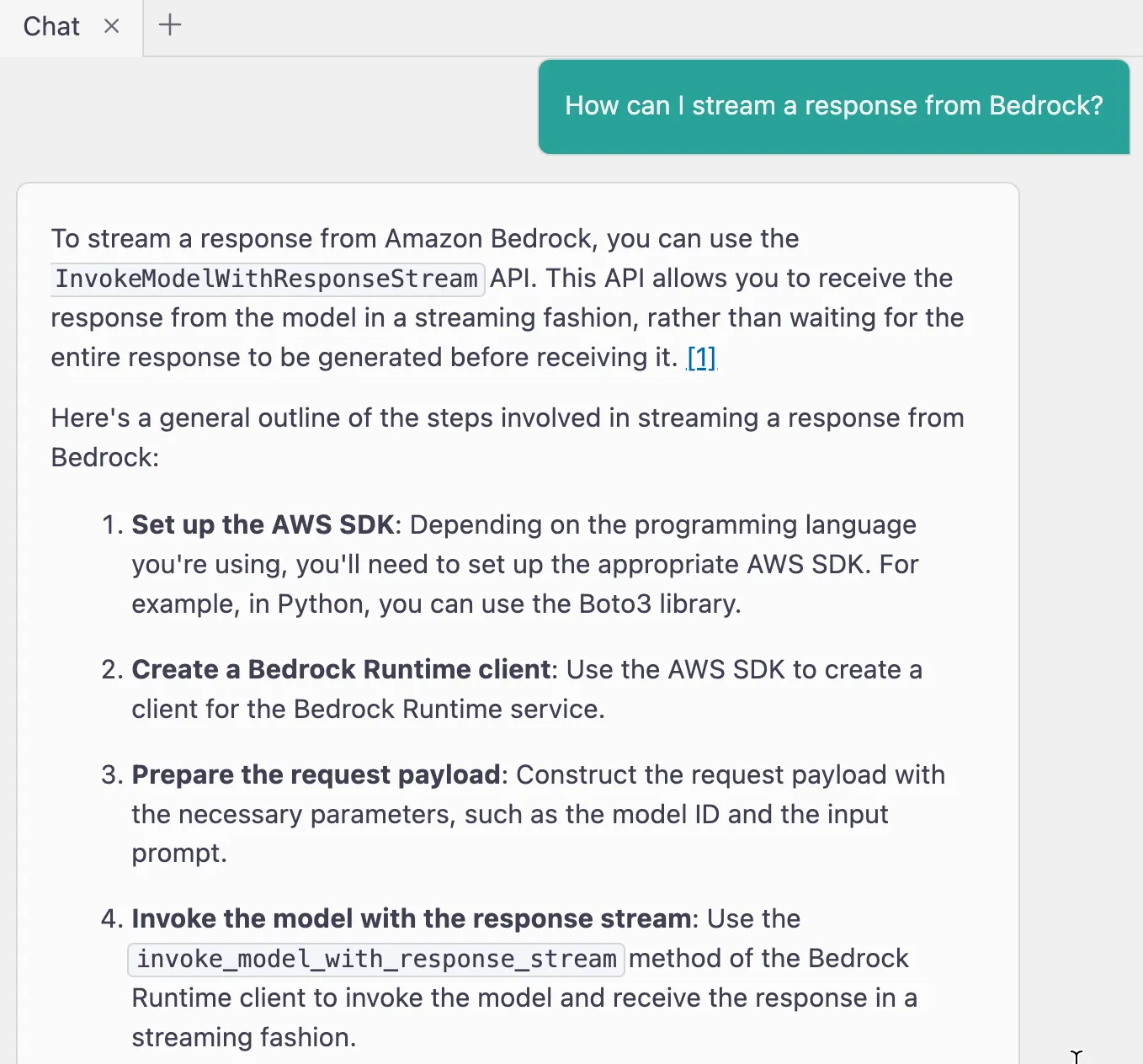

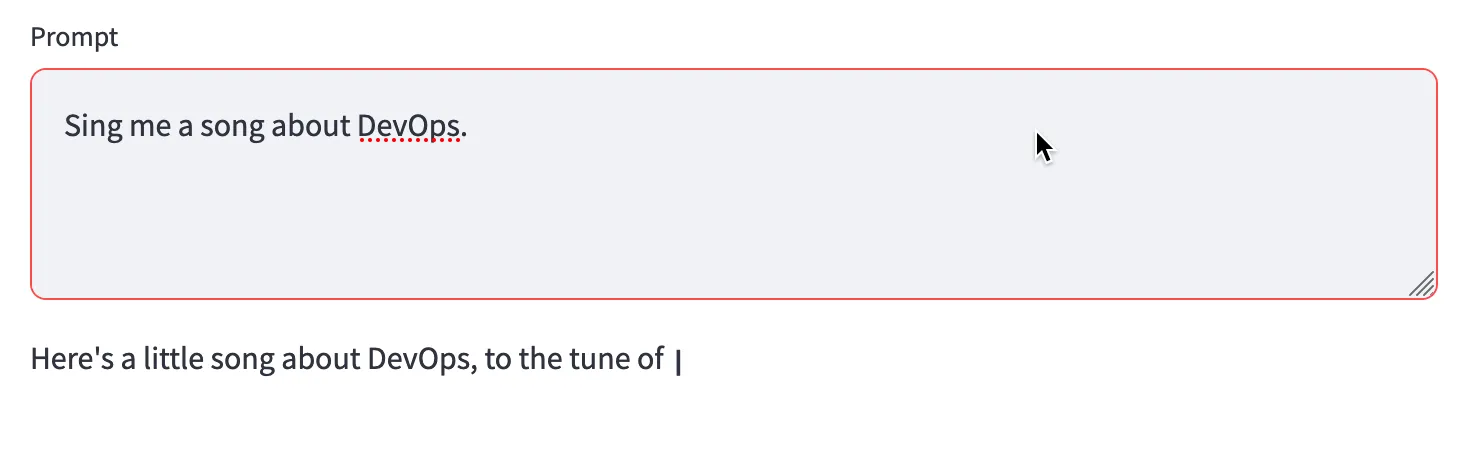

I had been calling the `invoke_model` API through a Python script run at the command line. It was pretty straightforward to migrate this `send_prompt_to_bedrock` function to my Streamlit app.

`send_prompt_to_bedrock` uses `invoke_model` to send a user-entered prompt to the Claude Sonnet model. When the response is returned, it is simply parsed and written to the page using Streamlit's `write` function.

I asked `What is the capital of France?` and got a response. A really short one: `The capital of France is Paris.` But what if I ask a question that warrants a much longer response? Maybe something like `Sing me a song about DevOps`. The user sits there and waits until the entire response has been returned from the API call, and only then does the UI update. My coworkers picked up on this and suggested a minor improvement: stream the response so it prints to the UI earlier. We knew it was possible, but none of us knew the exact call to make or how to do it with Streamlit.
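A minimal sketch of the non-streaming approach described above. Several details here are assumptions rather than taken from the article: the exact model ID (the text only says "Claude Sonnet"), the `max_tokens` value, the `build_request_body` helper, and passing the Bedrock client in as a parameter.

```python
import json

# Assumption: a Claude 3 Sonnet model ID; the article only says "Claude Sonnet".
MODEL_ID = "anthropic.claude-3-sonnet-20240229-v1:0"


def build_request_body(prompt, max_tokens=1024):
    """Build an Anthropic Messages API request body for Bedrock (hypothetical helper)."""
    return json.dumps({
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,  # assumption: any reasonable limit works here
        "messages": [
            {"role": "user", "content": [{"type": "text", "text": prompt}]}
        ],
    })


def send_prompt_to_bedrock(client, prompt):
    """Send the prompt with invoke_model and return the complete reply text."""
    response = client.invoke_model(
        modelId=MODEL_ID, body=build_request_body(prompt)
    )
    # Nothing can be shown until the whole body has been read and parsed.
    payload = json.loads(response["body"].read())
    # Claude replies arrive as a list of content blocks; keep the text ones.
    return "".join(
        block["text"]
        for block in payload["content"]
        if block.get("type") == "text"
    )


# Hypothetical usage inside a Streamlit app (needs boto3 and streamlit):
#   client = boto3.client("bedrock-runtime")
#   st.write(send_prompt_to_bedrock(client, "What is the capital of France?"))
```

Because the function only returns after `read()` has consumed the entire body, a long answer means a long wait before anything appears on the page.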

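It turns out the call is `invoke_model_with_response_stream`. Let's use that!

First, we swap the `invoke_model` call for `invoke_model_with_response_stream`.

Next, we loop over the chunks in the response stream and yield the text of each one whose type is `content_block_delta`. If it's not `content_block_delta` and instead is `message_stop`, we know we've hit the end of the response stream and return a new line.

Finally, instead of Streamlit's `write` function, we swap in `write_stream`, which does all the fancy magic to make it look like typewriter output.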
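The chunk handling described above can be sketched as a Python generator. This is a sketch under assumptions, not necessarily the exact code from the app: the function name `stream_prompt_to_bedrock`, the model ID, the `max_tokens` value, and passing the client as a parameter are all illustrative choices.

```python
import json

# Assumption: a Claude 3 Sonnet model ID; the article only says "Claude Sonnet".
MODEL_ID = "anthropic.claude-3-sonnet-20240229-v1:0"


def stream_prompt_to_bedrock(client, prompt, max_tokens=1024):
    """Yield pieces of the reply as they arrive (hypothetical helper name)."""
    body = json.dumps({
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [
            {"role": "user", "content": [{"type": "text", "text": prompt}]}
        ],
    })
    response = client.invoke_model_with_response_stream(
        modelId=MODEL_ID, body=body
    )
    # The response body is an iterable of events; each payload is JSON bytes.
    for event in response["body"]:
        chunk = event.get("chunk")
        if not chunk:
            continue  # skip events that carry no chunk payload
        data = json.loads(chunk["bytes"])
        if data["type"] == "content_block_delta":
            # A piece of generated text: hand it to the caller right away.
            yield data["delta"].get("text", "")
        elif data["type"] == "message_stop":
            # End of the response stream: finish with a new line.
            yield "\n"


# Hypothetical usage inside a Streamlit app (needs boto3 and streamlit):
#   client = boto3.client("bedrock-runtime")
#   st.write_stream(stream_prompt_to_bedrock(client, "Sing me a song about DevOps"))
```

`st.write_stream` accepts a generator like this one and renders each yielded string as it arrives, which is what produces the typewriter effect.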

Using `invoke_model_with_response_stream` also gives us other benefits, like better memory management and the potential to interrupt the response.

It was a small change to go from `invoke_model` to `invoke_model_with_response_stream`, except that we had to figure out how to process that response stream. In the final code below, you can see how we pull this all together: we stream a response from Amazon Bedrock and use a bit of Streamlit's magic to give it the typewriter effect.
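As a side note on the interruption benefit mentioned above: because a streamed response is consumed piece by piece, the reader can simply stop pulling chunks. The helper below is a hypothetical illustration (the name `limit_stream` and the character budget are assumptions, not part of the app), and it only shows the consumer side; whether the underlying request is cancelled is outside this sketch.

```python
def limit_stream(chunks, max_chars):
    """Yield text chunks, stopping once at least max_chars have been emitted.

    Hypothetical helper: wraps any iterable of strings, such as a generator
    that yields text from a streamed model response.
    """
    emitted = 0
    for text in chunks:
        yield text
        emitted += len(text)
        if emitted >= max_chars:
            # Stop reading here; remaining chunks are never pulled.
            break


# Hypothetical usage with a generator of streamed text:
#   st.write_stream(limit_stream(some_text_generator, max_chars=500))
```

With a non-streaming call there is nothing comparable to wrap, since the full response is already in memory by the time the code sees it.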