Simplify analyzing AWS WAF rules with GenAI using Amazon Bedrock

Use natural language queries to get insights from your WAF logs with the help of generative AI

To follow along, you need the following:

- An AWS account with resources deployed and protected by an AWS WAF WebACL. If you do not have an existing WAF configuration, you can create a new one.

- AWS Command Line Interface (CLI) installed on your local machine. Alternatively, you can use the AWS Cloud9 IDE.

- Boto3, the AWS SDK for Python. Install it on your local machine if you do not have it.

- Streamlit, to create a web UI for the app. Install it on your local machine or in the Cloud9 IDE.

- Access to Amazon Bedrock foundation models. Request access to the AI21 Labs Jurassic models if you do not have it already.
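
Before running the app, it can help to confirm that the two Python prerequisites are importable. The snippet below is a minimal sketch using only the standard library; the helper name `missing_packages` is hypothetical and not part of the original app. It checks only that the packages are installed, not that your AWS credentials or Bedrock model access are set up.

```python
from importlib.util import find_spec


def missing_packages(names):
    """Return the top-level packages from `names` that are not importable here."""
    # find_spec returns None when a top-level package cannot be located
    return [name for name in names if find_spec(name) is None]


if __name__ == "__main__":
    # boto3 and streamlit are the two packages the app imports
    missing = missing_packages(["boto3", "streamlit"])
    if missing:
        print("Missing packages, install with: pip install " + " ".join(missing))
    else:
        print("boto3 and streamlit are installed.")
```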

wafanalysis.py
import json

import boto3

import streamlit as st

from datetime import datetime, timedelta

# Initialize AWS clients

session = boto3.Session(profile_name='default')

waf = session.client(service_name='wafv2')

bedrock = session.client(service_name='bedrock-runtime')

bedrock_model_id = 'ai21.j2-ultra-v1' # Using AI21 Labs Jurassic model. For all model IDs, refer https://docs.aws.amazon.com/bedrock/latest/userguide/model-ids.html

# Function to fetch and analyze AWS WAF logs

def fetch_and_analyze_waf_logs(web_acl_arn, rule_metric_name, user_prompt):

# Get the current time and the time 60 minutes ago

current_time = datetime.utcnow()

start_time = current_time - timedelta(minutes=60)

# Fetch the AWS WAF logs for last 60 minutes from a specific WebACL

response = waf.get_sampled_requests(

WebAclArn=web_acl_arn,

RuleMetricName=rule_metric_name,

Scope='CLOUDFRONT', # Change this to REGIONAL if WebACL is associated with regional resources such as ALB or API Gateway

TimeWindow={

'StartTime': start_time,

'EndTime': current_time

},

MaxItems=100

)

# Create a prompt with WAF logs appended to it

input_data = "Analyze the following AWS WAF logs and provide insights for the following: " + str(user_prompt) + " " + str(response)

# FOR DEBUGGING: print the input prompt created in command line. Comment out if not required.

print("Input Prompt = ")

print(input_data)

# Provide inference parameters to the Amazon Bedrock model. This format is specific to AI21 model. For parameters for other models, refer https://docs.aws.amazon.com/bedrock/latest/userguide/model-parameters.html

body = json.dumps({

"prompt": input_data,

"maxTokens": 1024,

"temperature": 0,

"topP": 0.5,

"stopSequences": [],

"countPenalty": {"scale": 0 },

"presencePenalty": {"scale": 0 },

"frequencyPenalty": {"scale": 0 }

})

# Invoke Amazon Bedrock with the input data in JSON format

bedrock_response = bedrock.invoke_model(

modelId=bedrock_model_id,

body=body,

contentType='application/json',

accept='application/json'

)

# Decode the response body

response_body = json.loads(bedrock_response['body'].read().decode('utf-8'))

# Extract the text from the JSON response

response_text = response_body.get("completions", [])

if response_text:

response_text = response_text[0].get("data", {}).get("text", "")

return response_text

# Streamlit app to capture user input and display results

def main():

st.title("Analyze AWS WAF Logs with GenAI using Amazon Bedrock")

# Get user input for WebAclArn and RuleMetricName

web_acl_arn = st.text_input("Enter WebAclArn", value="")

rule_metric_name = st.text_input("Enter RuleMetricName", value="")

user_prompt = st.text_input("Enter your question", value="What rule is causing the 403 block?")

# Run the analysis when the user clicks the button

if st.button("Analyze WAF Logs"):

analysis = fetch_and_analyze_waf_logs(web_acl_arn, rule_metric_name, user_prompt)

st.write(analysis)

if __name__ == "__main__":

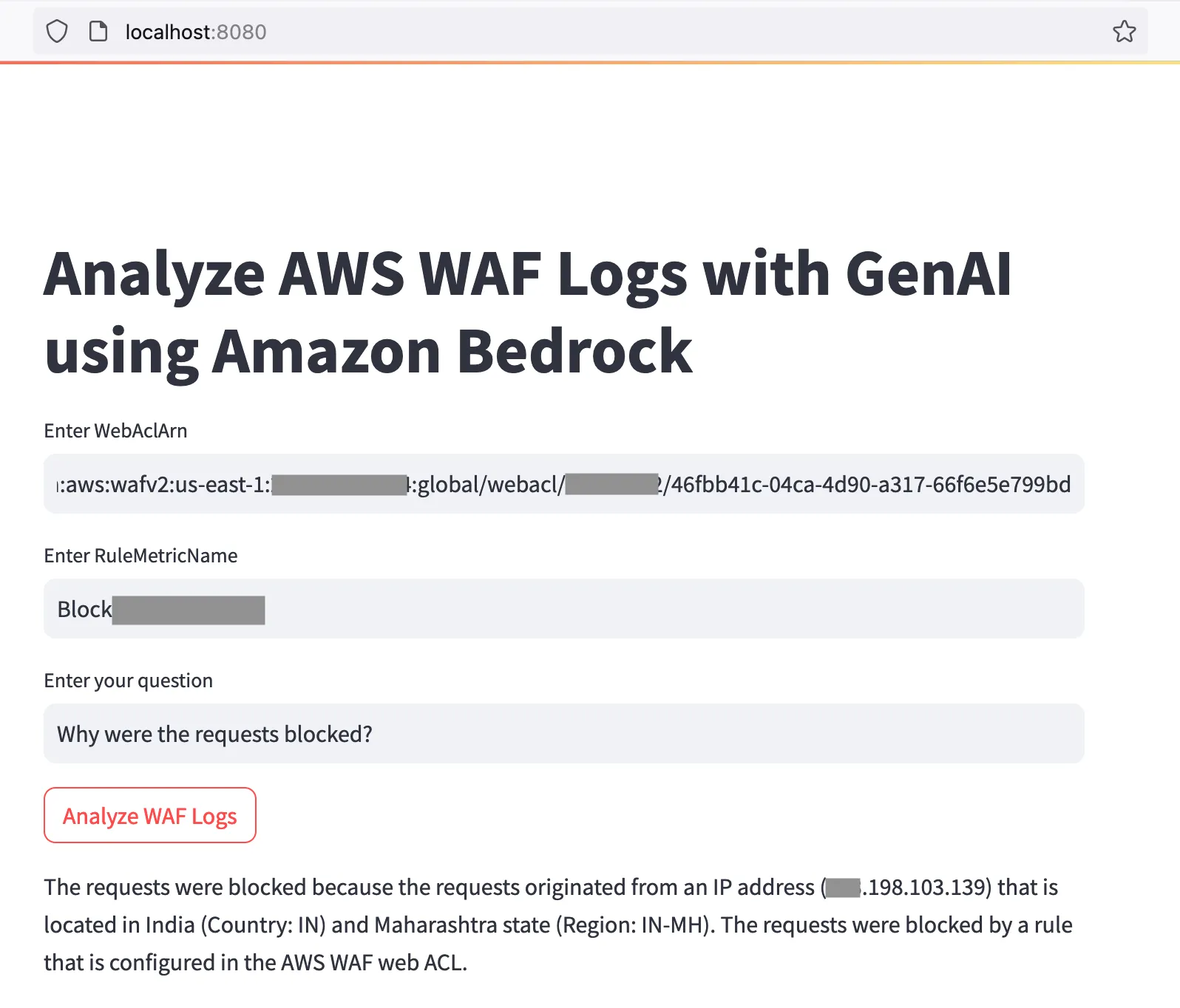

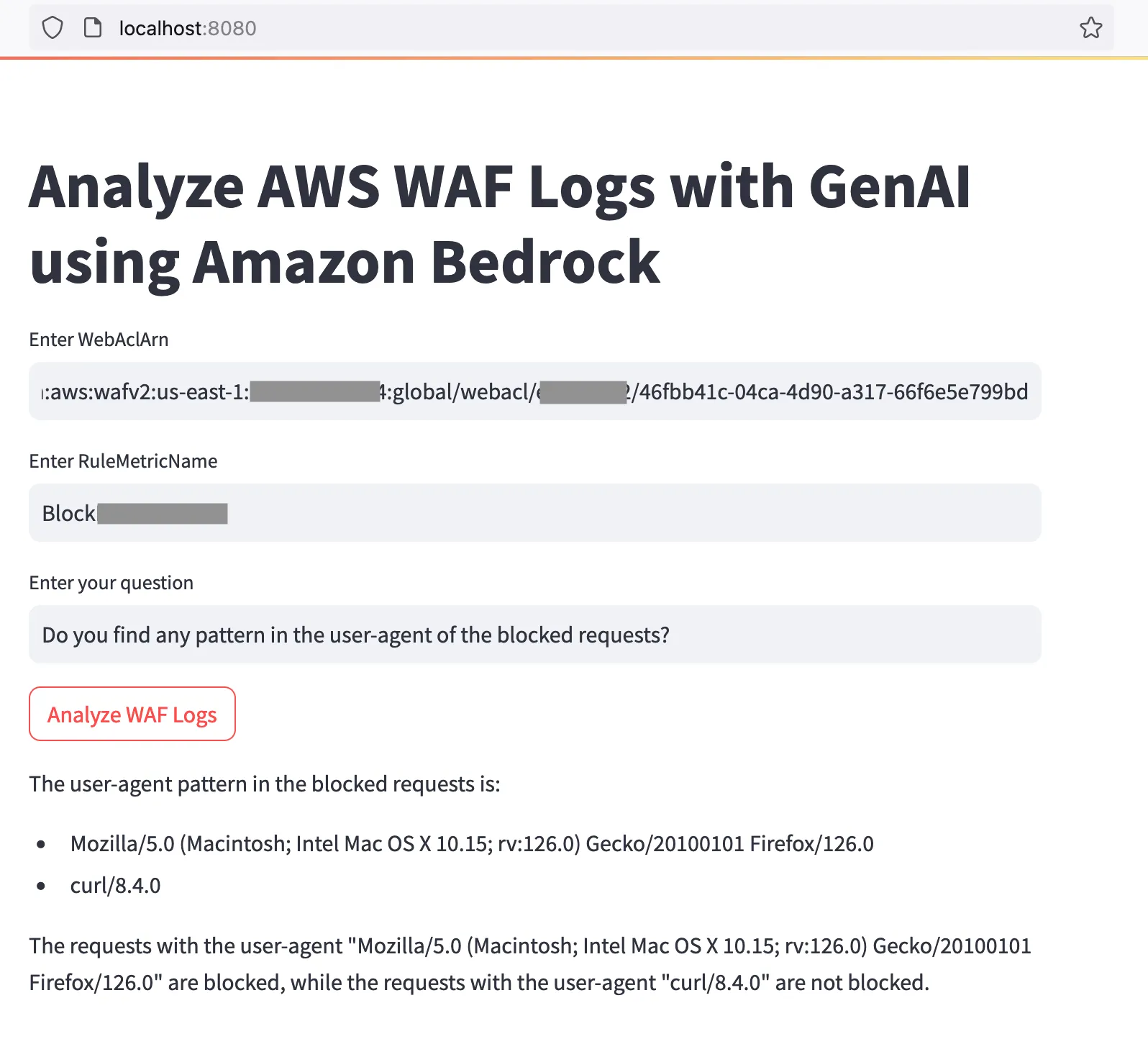

main()streamlit run wafanalysis.py --server.port 8080localhost:8080 in the address bar of your browser and it should open the UI.- ARN of your WAF WebACL. You can find the ARN by navigating to AWS WAF on the AWS console. Go to the list of your WebACLs, click the radio button next to the name of the WebACL and click the CopyARN button. The ARN should look something like this:

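arn:aws:wafv2:us-east-1:1234567890:global/webacl/name-of-webacl/46abc41c-04ca-4d90-a317-66f6e5e123bd

- The name of the WAF rule whose details you want to analyze. Copy this from the Rules tab of your WebACL on the AWS console. The app passes this value as RuleMetricName, so if the rule's CloudWatch metric name differs from the rule name, enter the metric name.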

- Your question in natural language. For example: "How many requests were blocked by this rule and what IPs did they come from?"

Click the Analyze WAF Logs button and wait a few seconds. The generative AI model will evaluate your question and display a response on the screen.
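
Because a language model can miscount, it may be worth cross-checking answers to questions like the example above with a deterministic tally. The following is a minimal sketch, not part of the original app: the function name `summarize_sampled_requests` is hypothetical, and it assumes the response has the shape returned by `get_sampled_requests` (a `SampledRequests` list whose items carry `Action` and `Request.ClientIP`). The counts cover only the sampled requests, not all traffic.

```python
from collections import Counter


def summarize_sampled_requests(response):
    """Tally sampled requests by action and count blocked requests per client IP."""
    samples = response.get("SampledRequests", [])
    # Count how many sampled requests ended in each action (ALLOW, BLOCK, ...)
    actions = Counter(s.get("Action", "UNKNOWN") for s in samples)
    # Count blocked requests per source IP
    blocked_ips = Counter(
        s.get("Request", {}).get("ClientIP", "unknown")
        for s in samples
        if s.get("Action") == "BLOCK"
    )
    return {
        "total": len(samples),
        "by_action": dict(actions),
        "blocked_ips": blocked_ips.most_common(),
    }


# Hand-written sample shaped like a get_sampled_requests response (not real data)
example = {
    "SampledRequests": [
        {"Action": "BLOCK", "Request": {"ClientIP": "203.0.113.10", "URI": "/login"}},
        {"Action": "BLOCK", "Request": {"ClientIP": "203.0.113.10", "URI": "/admin"}},
        {"Action": "ALLOW", "Request": {"ClientIP": "198.51.100.7", "URI": "/"}},
    ]
}
print(summarize_sampled_requests(example))
```

In the app, this could be called on the `response` returned by `waf.get_sampled_requests` and shown next to the model's answer, for example with `st.write`.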

Any opinions in this post are those of the individual author and may not reflect the opinions of AWS.