Developing Neural Searches with Custom Models

Are you excited about the OpenSearch models feature but need to use custom models? No problem. In this tutorial, you will learn how to use your own deployed model with OpenSearch.

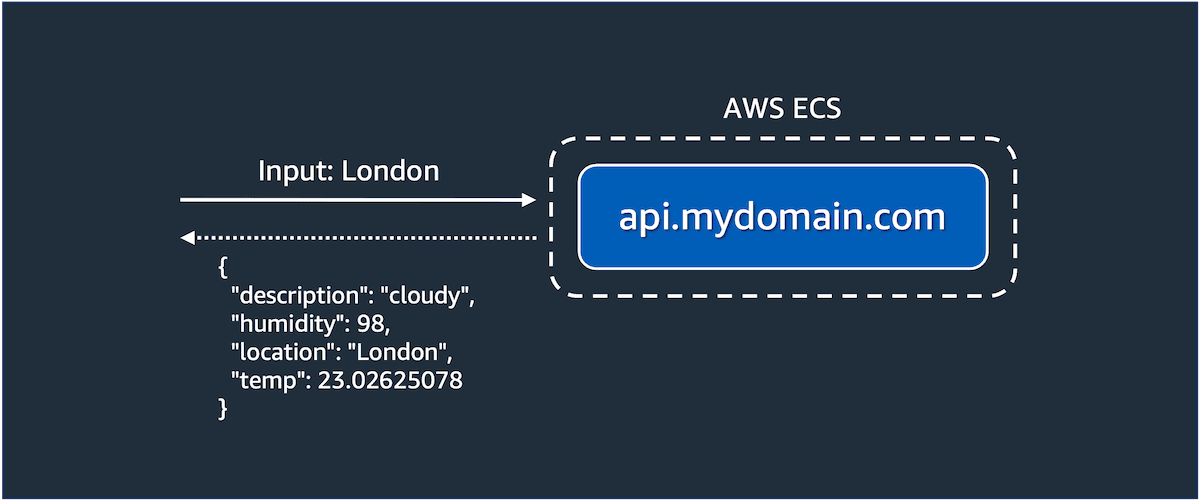

- Models from Hugging Face or custom models deployed onto a container or virtual machine

- Models deployed on Amazon SageMaker, including via JumpStart

- ...any API that you can connect to from the OpenSearch cluster.

    git clone https://github.com/build-on-aws/getting-started-with-opensearch-models
    cd getting-started-with-opensearch-models/custom-ml-api
    docker compose up -d

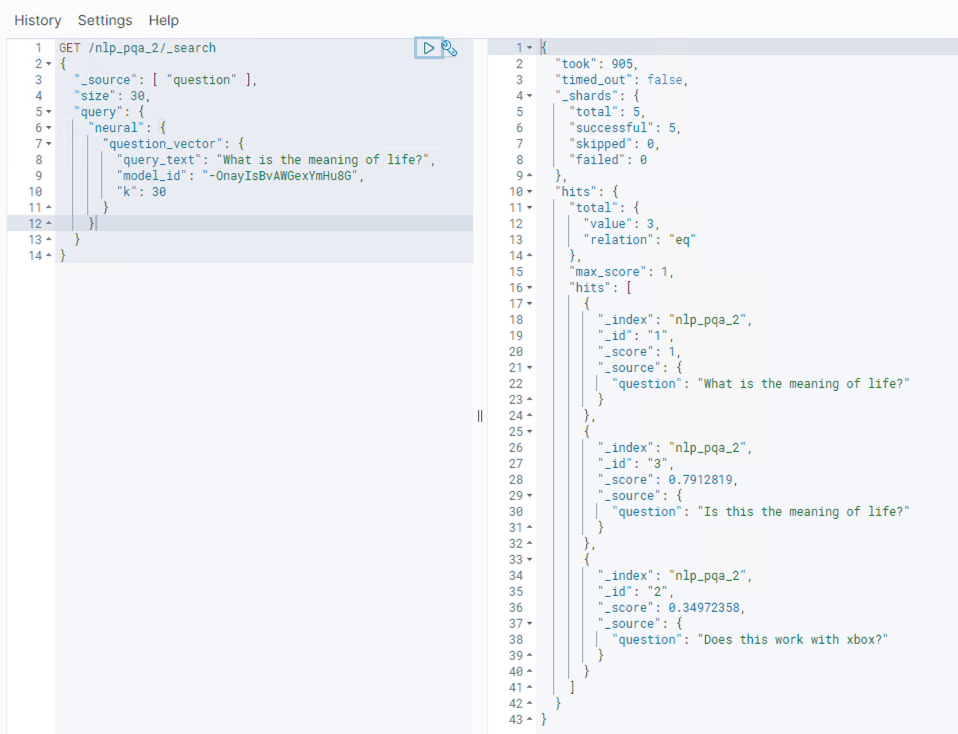

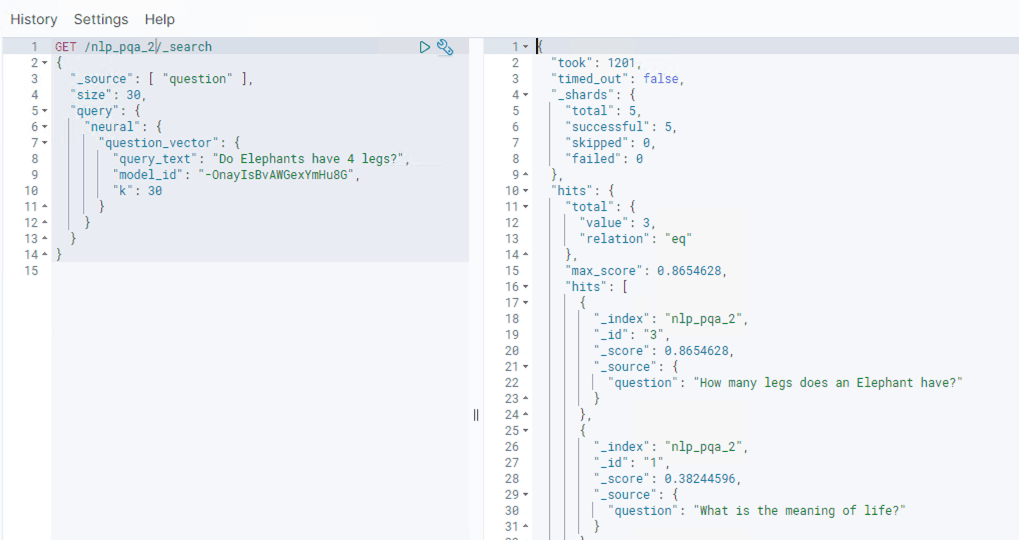

The request method can be a `GET` or a `POST`. In this case, a `POST` is used because a body is sent with the request.

The `model_id` of that task is retrieved using the `task_id`. With that, the model can be deployed.

A `pre_process_function` and a `post_process_function` are used. These allow the request and response to be transformed when working with a model, so that the data is in the shape expected by OpenSearch.
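The register, look-up, and deploy steps can be sketched in Python as a set of request builders. This is a minimal sketch, not the tutorial's own code: it assumes the standard ML Commons REST paths under `/_plugins/_ml`, and the function names, the model name, and the shape of the task response (`state` and `model_id` fields) are illustrative assumptions. It only builds the requests, so it runs without a cluster.

```python
from typing import Any, Dict, Optional, Tuple

# Assumption: the ML Commons plugin is served under this base path.
ML_BASE = "/_plugins/_ml"

Request = Tuple[str, str, Optional[Dict[str, Any]]]


def register_request(connector_id: str, name: str = "custom-embedding-model") -> Request:
    """Build the request that registers a remote model backed by a connector."""
    body = {
        "name": name,  # hypothetical model name
        "function_name": "remote",
        "connector_id": connector_id,
    }
    return "POST", f"{ML_BASE}/models/_register", body


def task_request(task_id: str) -> Request:
    """Build the request that looks up a task by its task_id."""
    return "GET", f"{ML_BASE}/tasks/{task_id}", None


def model_id_from_task(task: Dict[str, Any]) -> Optional[str]:
    """Return the model_id once the task has completed, otherwise None."""
    if task.get("state") == "COMPLETED":
        return task.get("model_id")
    return None


def deploy_request(model_id: str) -> Request:
    """Build the request that deploys a registered model."""
    return "POST", f"{ML_BASE}/models/{model_id}/_deploy", None
```

Each tuple can be passed to whichever HTTP client you use against the cluster; the task look-up may need to be repeated until `model_id_from_task` returns a value.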

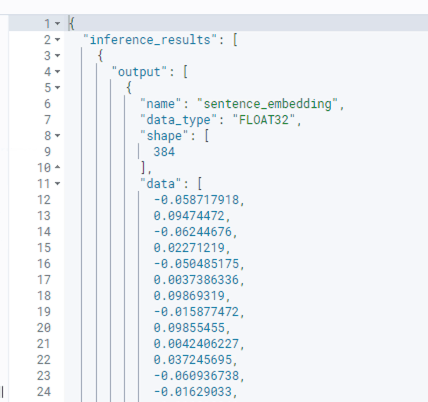

The `post_process_function` then processes the API's response. The example has again been formatted for readability. For a working example, see the connector definition above.

The results in the response are accessed via `params.results`. Any attributes in the response object can be accessed via the `params` object. This function is used to specify which attributes in the response to map and return to OpenSearch. Pre and post functions can be used as required to transform the request and response to the desired format.

`data_type` is a string representation of the data type used in the `data` attribute, for example `FLOAT32` or `INT32`.

... `dimension` attribute defined on the index vector property.

... `post_process_function` so that it matches.
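To make the mapping concrete, here is a rough Python emulation of what a post-process step might do. It is a sketch under stated assumptions, not the connector's actual function: it assumes the API returns a `results` list of embedding vectors, and the output field names (`name`, `shape`, `data`) and the `sentence_embedding` label are assumptions modelled on the `data_type` and `data` attributes described above. The helper names are hypothetical.

```python
from typing import Any, Dict, List


def to_model_tensors(
    params: Dict[str, Any],
    data_type: str = "FLOAT32",
    name: str = "sentence_embedding",  # assumed output name
) -> List[Dict[str, Any]]:
    """Map each vector in params['results'] to a tensor-style dictionary."""
    tensors = []
    for vector in params["results"]:
        tensors.append(
            {
                "name": name,
                "data_type": data_type,   # string form of the element type
                "shape": [len(vector)],   # one-dimensional embedding
                "data": list(vector),
            }
        )
    return tensors


def matches_index_dimension(tensors: List[Dict[str, Any]], dimension: int) -> bool:
    """Check that every tensor has the dimension configured on the index."""
    return all(t["shape"] == [dimension] for t in tensors)
```

A quick check such as `matches_index_dimension(to_model_tensors(response), 384)` (with 384 standing in for your index's dimension) can catch a mismatch before any documents are ingested.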