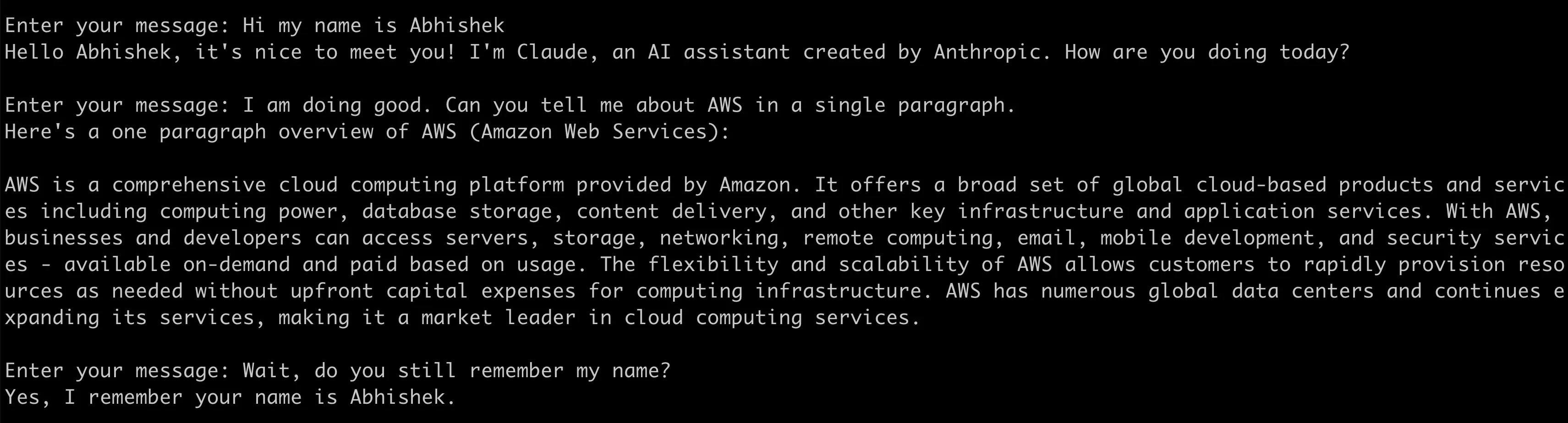

A single API for all your conversational generative AI applications

Use the Converse API to create conversational generative AI applications with a single API across multiple models

Consider using the Converse API over the `InvokeModel` (or `InvokeModelWithResponseStream`) APIs.

- The API consists of two operations - `Converse` and `ConverseStream`
- Each turn of the conversation is a `Message` object, whose content is encapsulated in one or more `ContentBlock`s.
- A `ContentBlock` can also have images, which are represented by an `ImageBlock`.
- A message can have one of two roles - `user` or `assistant`
- For a streaming response, use the `ConverseStream` API
- The streaming output (`ConverseStreamOutput`) has multiple events, each of which carries different response items such as the text output, metadata etc.
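
To make the streaming item above concrete, here is a minimal sketch of draining a stream of events and stitching the text fragments back together. `StreamEvent`, `Usage`, `textEvent` and `collect` are hypothetical stand-ins written for this example and are not SDK types; the AWS SDK for Go has its own types behind `ConverseStreamOutput`. Only the general idea (text deltas, a stop event, metadata) is taken from the API.

```go
package main

import (
	"fmt"
	"strings"
)

// StreamEvent is a hypothetical, simplified stand-in for one streaming event.
// Exactly one field is expected to be set per event.
type StreamEvent struct {
	DeltaText  *string // text fragment (content block delta)
	StopReason *string // set when the message is complete
	Usage      *Usage  // set on the trailing metadata event
}

// Usage is a hypothetical holder for the token counts reported as metadata.
type Usage struct {
	InputTokens, OutputTokens int
}

// textEvent builds a text-delta event (helper for this example only).
func textEvent(s string) StreamEvent { return StreamEvent{DeltaText: &s} }

// collect drains the channel, printing fragments as they arrive, and returns
// the full reply together with the usage metadata (nil if none was seen).
func collect(events <-chan StreamEvent) (string, *Usage) {
	var reply strings.Builder
	var usage *Usage
	for ev := range events {
		switch {
		case ev.DeltaText != nil:
			fmt.Print(*ev.DeltaText) // show partial output immediately
			reply.WriteString(*ev.DeltaText)
		case ev.StopReason != nil:
			fmt.Println() // end the line once the message is complete
		case ev.Usage != nil:
			usage = ev.Usage
		}
	}
	return reply.String(), usage
}

func main() {
	// Simulated stream; a real application would receive events from the service.
	events := make(chan StreamEvent, 5)
	for _, s := range []string{"Hello", ", ", "Gopher!"} {
		events <- textEvent(s)
	}
	stop := "end_turn"
	events <- StreamEvent{StopReason: &stop}
	events <- StreamEvent{Usage: &Usage{InputTokens: 12, OutputTokens: 4}}
	close(events)

	reply, usage := collect(events)
	fmt.Printf("reply=%q usage=%+v\n", reply, usage)
}
```

Keeping the accumulated reply matters in a chat application: once the stream ends, the full text is what gets appended to the message history as the `assistant` turn.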

The conversation is driven by a `for` loop in which:

- The user's message is sent using the `Converse` API
- The response is collected and added to the existing list of messages
- The conversation continues until the app is exited

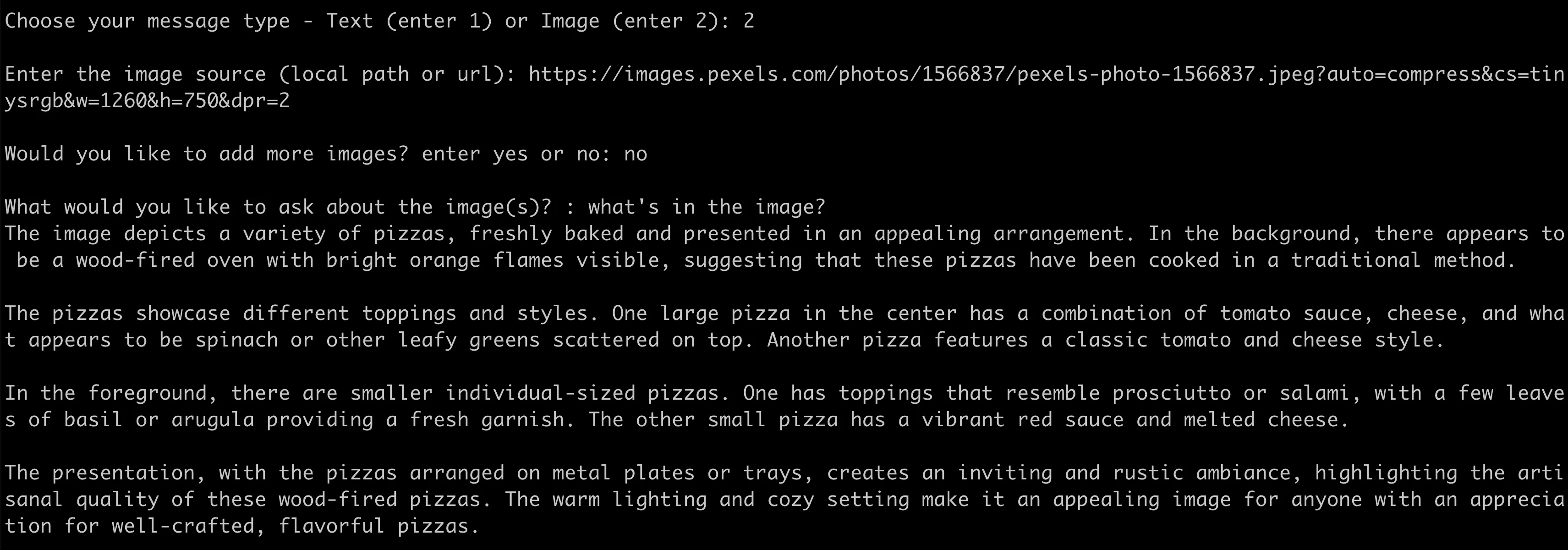

You can also use the Converse API to build multi-modal applications that work with images. Note that they only return text, for now.

`imageContents` is nothing but a `[]byte` representation of the image.

Here are a few things the Converse API does to make your life easier:

- It allows you to pass inference parameters specific to a model.
- You can also use the `Converse` API to implement tool use in your applications.
- If you are using Mistral AI or Llama 2 Chat models, the `Converse` API will embed your input in a model-specific prompt template that enables conversations - one less thing to worry about!

Any opinions in this post are those of the individual author and may not reflect the opinions of AWS.